Intel continues to pull in massive amounts of money through its portfolio of datacenter wares and to dominate the market for processors in the glass house. But as we have talked about here at The Next Platform, Intel faces increasingly relevant competitors, and its chip roadmaps, in particular the delayed 10 nanometer manufacturing process, remain a focus in the industry. Longtime rival AMD is at the head of all this, with its Epyc server processors based on the “Zen” architecture expected to continue chipping away at Intel’s massive market share, which is still well north of 95 percent.

At the same time, AMD is planning to roll out 7 nanometer “Rome” Epyc processors in 2019, based on chip making techniques developed by Taiwan Semiconductor Manufacturing Co, a year before Intel is expected to come out with its own 10 nanometer Xeon processors. The whole situation has the air of what happened in the early 2000s, when AMD was able to grab more than 20 percent of the server chip market with the release of its 64-bit Opteron processors, catching Intel flat-footed as it continued to hold out hope for Itanium as its 64-bit future. Add in IBM with its Power9 processor and OpenPower Foundation effort as well as Arm-based processors like ThunderX2 from Cavium – now owned by Marvell – and the server chip market is looking pretty crowded and Intel is, to some, a little more vulnerable. Intel has historically boasted of its strength in manufacturing, so its inability to reach 10 nanometer processes at this point is also a concern.

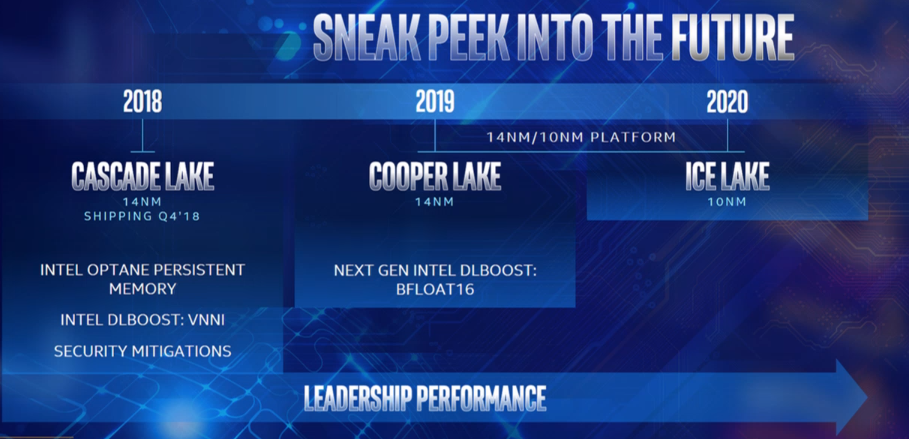

It’s a scenario that Intel has been pushing back against for several quarters and did again this week at its Data-Centric Innovation Summit at its home stomping grounds in Silicon Valley, where journalists and analysts came to its campus and were given a look at what the chip vendor has been doing and where it’s going. That includes its Xeon roadmap, where another two generations of 14 nanometer chips will roll out later this year (“Cascade Lake”) and in 2019 (“Cooper Lake”), followed by “Ice Lake” in 2020, the first of Intel’s 10 nanometer server processors, which confirms what we told you about two weeks ago. And by the way, with much less detail than we gave you.

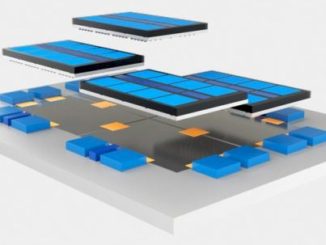

But the message coming out of Intel during the day-long event was that the company is evolving quickly beyond simply client and server chips to become a platform player with a broad portfolio that includes memory, networking and storage, other compute engines such as field-programmable gate arrays (FPGAs) and custom ASICs, software, and capabilities in such emerging areas as artificial intelligence. These and other capabilities contribute to performance enhancements, which are not based solely on manufacturing processes.

“Five years ago, you could have said, ‘Hey, we are defined by the PC and datacenter market as a microprocessor supplier,’” Navin Shenoy, executive vice president and general manager of Intel’s Data Center Group, said during the event in response to an analyst’s question. “That’s not the way we see the opportunity we’re going after now. The $200 billion total addressable market that we outlined today is much bigger than we have ever gone at before, and our mindset again is that we have 20 percent of that market. It’s important to remember that the investments we’re making to expand the portfolio that we have is crucial to the way we think about the way we’re competing and the way we’re addressing our customers. We’re not talking to them about just microprocessors. We talk to them about silicon photonics, we talk to them about smart NICs, and we talk to them about Optane persistent memory and we talk to them about FPGAs and we talk to them about custom ASICs. That breadth of our portfolio that we have and our ability to stitch those capabilities together to deliver higher levels of performance is a big-time difference between the way we were viewed five years ago and the way at least that we think about ourselves now.”

Optane DC persistent memory will put a large persistent memory tier between DRAM and SSDs and will provide up to eight times the performance for such workloads as analytics compared to configurations that rely only on DRAM, Shenoy said. Intel began shipping Optane DC units this week. Cascade Lake processors will come with a new integrated memory controller that will enable support for Optane DC persistent memory, as well as other upgrades such as hardware-enhanced security to address threats such as Spectre and Meltdown, higher frequencies, and an optimized cache hierarchy.

Cascade Lake Xeons will include a new AI extension called Deep Learning Boost, which Shenoy said “extends the Intel AVX-512 instructions. It adds a new vector neural network instruction and this instruction can essentially handle convolutions with fewer instructions, thereby speeding up the performance. We expect to deliver an 11X improvement in image recognition from the ‘Skylake’ launch in July 2017 to when we launch Cascade Lake for inference performance.”
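
Intel did not walk through the instruction itself at the event, so what follows is only our rough sketch of the idea. It assumes the new instruction behaves like the VPDPBUSD operation in Intel's published AVX-512 VNNI documentation: in each 32-bit lane, four unsigned 8-bit values are multiplied by four signed 8-bit values and the four products are added into a 32-bit accumulator in one step. The function names `dot4_accumulate` and `vnni_like` are ours, purely for illustration, and the Python models the arithmetic only, not the performance.

```python
def dot4_accumulate(acc, a_u8, b_s8):
    """Model one 32-bit lane: acc += sum of four (unsigned byte * signed byte) products.

    Hypothetical helper; assumes the non-saturating, wrap-around form of the operation.
    """
    if len(a_u8) != 4 or len(b_s8) != 4:
        raise ValueError("each lane consumes exactly four byte pairs")
    total = acc
    for a, b in zip(a_u8, b_s8):
        if not (0 <= a <= 255 and -128 <= b <= 127):
            raise ValueError("operands must fit in u8 and s8 respectively")
        total += a * b
    # Wrap the result into the signed 32-bit range, as a 32-bit register would.
    return (total + 2**31) % 2**32 - 2**31


def vnni_like(acc_lanes, a_bytes, b_bytes):
    """Apply dot4_accumulate across every lane of a (hypothetical) vector register."""
    if len(a_bytes) != 4 * len(acc_lanes) or len(b_bytes) != 4 * len(acc_lanes):
        raise ValueError("need four byte pairs per accumulator lane")
    return [
        dot4_accumulate(acc, a_bytes[4 * i:4 * i + 4], b_bytes[4 * i:4 * i + 4])
        for i, acc in enumerate(acc_lanes)
    ]


if __name__ == "__main__":
    # Two lanes: activations as unsigned bytes, weights as signed bytes.
    print(vnni_like([0, 0], [1, 2, 3, 4, 5, 6, 7, 8], [1, 1, 1, 1, -1, -1, -1, -1]))
```

The inner loop of a quantized convolution is essentially this multiply-and-accumulate repeated many times, which is why collapsing it into fewer instructions matters for inference throughput.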

Cooper Lake, due out later in 2019, will also bring performance improvements, new I/O features, and more innovations around Optane DC, as well as another AI extension called Bfloat16, a numeric format that is used primarily for AI and deep learning training workloads.
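
For readers who have not run into the format, here is a minimal sketch of how bfloat16 relates to ordinary 32-bit floating point. It relies on the commonly documented layout (1 sign bit, 8 exponent bits, 7 mantissa bits), which makes a bfloat16 value the upper half of an IEEE 754 float32. The helper names are ours, round-to-nearest-even is one possible rounding choice, and nothing here is meant to describe how Intel implements the format in silicon.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x (hypothetical helper).

    Assumes x fits in float32; struct.pack raises OverflowError otherwise.
    """
    if x != x:  # NaN: return a quiet NaN pattern rather than rounding the payload
        return 0x7FC0
    (bits,) = struct.unpack("<I", struct.pack("<f", x))
    # Round to nearest, ties to even, on the 16 low bits that are dropped.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float(b: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float via float32."""
    (x,) = struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))
    return x


if __name__ == "__main__":
    print(hex(float32_to_bfloat16_bits(1.0)))  # 0x3f80
    # Only about three significant decimal digits survive the round trip.
    print(bfloat16_bits_to_float(float32_to_bfloat16_bits(3.14159265)))  # 3.140625
```

The trade-off is visible in the second line of output: bfloat16 keeps the full exponent range of float32 but gives up most of the precision, a bargain that deep learning training tends to tolerate.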

“We’re going to continue to expand and extend the 14 nanometer performance,” Shenoy said. “We’re making process improvements, we’re adding architectural advancements, and we’ll continue to push on the software front as well. We’re aggressively standardizing on BFloat16 and infusing it into all of our products, in Xeon and in our neural network processing family.”

Few details were released about the Ice Lake Xeons beyond the fact that they will be etched in 10 nanometer processes and will be a fast follow-on to Cooper Lake. Intel will also shorten the traditional cadence between client and server chips, according to Shenoy. Usually the datacenter chip in a new architecture would follow the client chip by a year to 18 months; Ice Lake will come a matter of months after the first 10 nanometer client chips, which are expected to arrive in systems in time for the 2019 holiday buying season, he said, and that puts Ice Lake Xeons in early 2020 – just as we have already told you.

In addition, Intel will release new optimized hardware and software stacks with the Cascade Lake Xeons. Since the launch of the Skylake Xeon Scalable Processors last year, the chip maker has been working with more than two dozen partners to create pre-validated integrated hardware-and-software solutions around such workloads as data analysis, HPC, hybrid cloud and networking. With Cascade Lake, the company will roll out new solutions around AI with Apache Spark, blockchain for security, and SAP HANA in-memory processing.

Shenoy argued that the processor roadmap will enable Intel to maintain its dominance in the datacenter.

“Our engineers needed to take a broad approach to apply all of the assets at the company to solve the technical and customer problems that we see, and they’ve harnessed all of these assets year after year after year,” he said. “Transistor and packaging technologies of course are fundamental and foundational, but there’s much more than that. Architectural innovations that we’re driving, such as the AI extensions, our memory investments that we’ve made, such as the Optane persistent memory, investments in interconnects and I/O. How we make it easier to move data faster, such as the investments we’re making in silicon photonics. The expanded view we have and responding quickly to the ever-changing security landscape, and then software and solutions investments.”
