The “Naples” Epyc server processors do not exactly present a new architecture from a new processor maker, but given the difficulties that AMD had at the tail end of the Opteron line a decade ago and its long dormancy in the server space, it is almost like AMD had to start back at the beginning to gain the trust of potential server buyers.

Luckily for AMD, and its Arm server competitors Qualcomm and Cavium, there is intense pressure from all aspects of high-end computing – internal applications and external ones at hyperscalers and some cloud builders as well as enterprises who feel locked into Intel’s products – for an alternative to the Xeon processor. AMD has been gradually building momentum and trust in its Epyc server platform. It has been a long road back and has taken more than five years to get to this point.

It all started with getting the right server core architecture, something that AMD did not get right with the latter variations of the Opteron server processors from a decade ago. The “Zen” core architecture at the heart of the Epyc processors, divulged in the summer of 2016, was designed to strike a balance between compute, with four integer units and two 128-bit fused multiply add (FMA) units per core, and memory bandwidth. The cores also have two-way simultaneous multithreading to boost the efficiency of thread-hungry applications like Java application servers and databases. By the Open Compute Summit earlier this year, it seemed pretty clear that the Naples processors gave AMD a second chance in servers, and it did not hurt that Microsoft endorsed the Naples chips within its next generation of “Project Olympus” servers for its internal infrastructure on the Azure cloud. (Microsoft is no dummy, and made sure to make it clear that the Olympus machines would also support 64-bit Arm server chips from Cavium and Qualcomm, and also announced an internal port of Windows Server 2016 for its own Azure applications that ran on these Arm chips.) It was around this time that AMD first started making comparisons between its future Naples platforms and Intel’s then-current “Broadwell” Xeon E5 v4 systems, which were quite a bit lower on core count, memory capacity and speed (and therefore a lot lower on memory bandwidth) and I/O capacity.

A few months later, during the summer of this year, AMD started telling its customer base that a big part of its strategy would be to try to replace a lot of mainstream two-socket servers using middle bin Xeon processors with single-socket Naples systems. AMD branded the Naples chips under the Epyc moniker and rolled them out in June, a month ahead of Intel with its “Skylake” Xeon SPs, which came out in July. While Intel has closed some of the gap with its Skylake Xeons, these are in many cases still very pricey chips and AMD can still make a credible case for itself. And at least for single-precision workloads, it also has a one-two punch in reserve, marrying the Epyc servers with its Radeon Instinct MI25 GPU accelerator cards and fighting the combination of Xeon CPUs and Nvidia Tesla GPU accelerators on a price/performance front. The Project 47 systems, which we profiled back in August, pack a petaflops of single precision compute in a rack and are now available from server maker AMAX. At the SC17 supercomputing conference this month, AMD was showing off these systems and the fact that it had integrated Google’s TensorFlow machine learning framework with its ROCm 1.7 hybrid system middleware, making it easier for companies that have created CUDA applications and adopted TensorFlow to port over to the AMD stack.

The most important news coming out of AMD during SC17 was not just that it had some SPEC CPU benchmark test results to show off for integer and floating point operations, but that the server ecosystem for Epyc platforms was expanding and ramping. The ramp is significant enough that Intel has proactively run a slew of benchmark tests comparing various SKUs of its Skylake Xeons against the top-bin 32-core Epyc 7601 processor. Intel has not been able to get its hands on lower bin Epyc parts, which have been scarce as hen’s teeth, but the volumes there are improving and we can expect benchmarks pitting midrange X86 parts from Intel and AMD against each other. This is a real contest now, on multiple fronts, and both Intel and AMD are scoring points on each other. As it should be in a truly competitive market. (See? Capitalism with real competition works.)

“We think the way that we are stacking up is pretty good,” Scott Aylor, corporate vice president and general manager of the Enterprise Business Unit at AMD, tells The Next Platform. “We had a demonstrable lead against Broadwell Xeons and are doing well against Skylake Xeons. For integer processing, we are slightly behind, but with floating point we have even better performance as gauged by SPEC integer and floating point tests.”

You might think that with AVX-512 math units on every core, which represent twice as many floating point operations per core as AMD is delivering with its pair of 128-bit, AVX2-compatible vector units, Intel would be smoking AMD in the floating point tests. The AVX-512 hack on the Skylake core sure looked clever when we wrote about it earlier this year, giving Skylake double the floating point oomph over Broadwell with a modest increase in chip area. In essence, one 256-bit AVX unit and a chunk of extended L2 cache are grafted onto the Skylake core. Rather than block copy the AVX-512 math units in Knights Landing, Intel fused the two 256-bit math units on Port 0 and Port 1 of the Skylake core to make a single 512-bit unit and then bolted a second fused multiply add (FMA) unit onto the outside of the core on Port 5. The net result is that the chip can, with both FMAs activated, process 64 single precision or 32 double precision floating point operations per clock.

So, in theory, the top-bin Xeon SP-8180M running at 2.5 GHz with 28 cores could do 16 double precision operations per clock or 1.12 teraflops; but it has to be geared down to 2.1 GHz if all of the AVX-512 units on the die are fired up because the chip would overheat otherwise. So call it 940.8 gigaflops (theoretical) per chip. The Zen core can do four double precision FMAs per cycle, and on a 32-core chip running at the Turbo boost speed of 2.7 GHz for the Epyc 7601, that’s 691.2 gigaflops (again, theoretical) per chip. So Intel should have a floating point oomph advantage of roughly 36 percent.
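The peak numbers above are just cores times clock times operations per clock, and a minimal sketch makes that easy to check. The per-clock counts are taken as the figures imply rather than re-derived: 16 for the Xeon, and 8 for Zen (four FMAs, each counted as a multiply plus an add, which is what yields 691.2 gigaflops). The function and variable names are illustrative only.

```python
def peak_gflops(cores, ghz, ops_per_clock):
    """Theoretical peak in gigaflops: cores x clock (GHz) x ops per core per clock."""
    return cores * ghz * ops_per_clock

# Xeon SP-8180M: 28 cores, 16 double precision operations per clock (as stated in the text)
xeon_nominal = peak_gflops(28, 2.5, 16)   # at the 2.5 GHz base clock
xeon_avx512 = peak_gflops(28, 2.1, 16)    # geared down to 2.1 GHz with AVX-512 active

# Epyc 7601: 32 cores at 2.7 GHz Turbo, four FMAs per clock counted as 8 operations
# (assumption: this is the count behind the 691.2 gigaflops figure)
epyc_turbo = peak_gflops(32, 2.7, 8)

# Intel's theoretical lead over AMD, as a fraction
advantage = xeon_avx512 / epyc_turbo - 1

print(f"Xeon nominal: {xeon_nominal:.1f} GF")
print(f"Xeon AVX-512: {xeon_avx512:.1f} GF")
print(f"Epyc Turbo:   {epyc_turbo:.1f} GF")
print(f"Xeon lead:    {advantage:.1%}")
```

Note that the result is only as good as the per-clock counts fed in; change either one and the theoretical gap moves with it.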

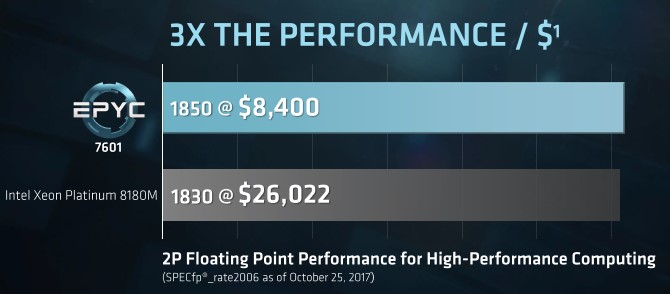

According to the SPEC floating point tests that AMD has done, this did not pan out. Take a look:

A two-socket Sugon A620-G30 system with 32-core, 2.2 GHz Epyc 7601 running Ubuntu Server 17.04 and using the Open64 compilers, equipped with 512 GB of DDR4 memory running at 2.4 GHz and a single 7.2K RPM 1 TB SATA drive, was able to attain a SPECfp_rate2006 rating of 1,850. A Cisco Systems C240 M5 server with two of the Intel Xeon SP-8180M processors, which have 28 cores running at 2.5 GHz each, plus 384 GB of memory running at 2.67 GHz and a 240 GB SATA drive running SUSE Linux Enterprise Server 12 SP2 and using Intel’s compiler suite was able to hit 1,830 on the SPECfp_rate2006 test. The Epyc system did 1.1 percent better.

Aylor offers this explanation for the gap between theory and practice when it comes to the floating point performance. “There is a misconception that every parallel application is efficiently vectorizable,” he says. “To leverage AVX-512, you have to vectorize the data to take advantage of this very wide pipeline. And beyond that point, at some point, you have to contend with balance, and you have to have memory bandwidth and the ability to feed such a massive vector pipeline. We have more memory bandwidth, and if you look at our floating point implementation, we run at double data rate and we have dedicated data pipes that run into it, whereas the Skylake architecture has less memory bandwidth and it shares datapaths into the floating point.”

The performance is essentially the same, but the cost per chip ($13,011 for the Xeon SP-8180M and $4,200 for the Epyc 7601) is very different, and hence the Epycs are offering 3.1X the bang for the buck for floating point, at least as far as SPECfp_rate2006 is concerned. This is at the chip level, and at the system level, where the CPU only accounts for maybe 20 percent to 25 percent of the total cost, this difference will be considerably smaller. But it is still a big difference nonetheless.
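To see how the chip-level gap shrinks at the system level, here is a rough sketch using the SPECfp_rate2006 ratings and list prices quoted above. The system-level part rests on two assumptions that are not in the source: that the 20 percent to 25 percent CPU share applies to the two-socket Xeon machine, and that everything other than the CPUs costs the same in both systems.

```python
EPYC_FP, XEON_FP = 1850, 1830          # SPECfp_rate2006 ratings from the text
EPYC_PRICE, XEON_PRICE = 4200, 13011   # list price per chip, in dollars

def perf_per_dollar(score, price):
    return score / price

# Raw performance lead and chip-level bang for the buck
fp_lead = EPYC_FP / XEON_FP - 1
chip_ratio = perf_per_dollar(EPYC_FP, EPYC_PRICE) / perf_per_dollar(XEON_FP, XEON_PRICE)

def system_ratio(cpu_share, sockets=2):
    """Epyc vs Xeon performance per system dollar.

    Assumption: cpu_share is the CPUs' fraction of the Xeon system's cost,
    and all non-CPU components cost the same in both machines.
    """
    xeon_cpus = sockets * XEON_PRICE
    xeon_system = xeon_cpus / cpu_share
    other_parts = xeon_system - xeon_cpus
    epyc_system = other_parts + sockets * EPYC_PRICE
    return perf_per_dollar(EPYC_FP, epyc_system) / perf_per_dollar(XEON_FP, xeon_system)

print(f"Epyc FP lead:        {fp_lead:.1%}")
print(f"Chip-level ratio:    {chip_ratio:.1f}X")
for share in (0.20, 0.25):
    print(f"System ratio @ {share:.0%}: {system_ratio(share):.2f}X")
```

Under those assumptions the advantage drops from about 3.1X at the chip level to somewhere around 1.2X for the whole box, which is smaller but hardly nothing on a six-figure server.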

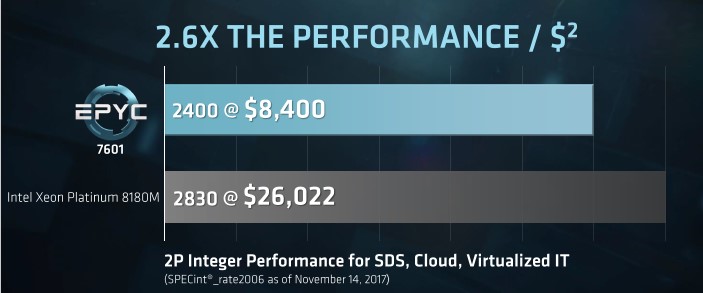

Here is how the SPEC integer tests stack up:

The SPECint_rate2006 tests were run on the exact same iron, with the Epyc system getting a 2,400 rating compared to a 2,830 rating for the Xeon SP machine. That’s a 17.9 percent integer performance advantage to Intel, but a 2.6X price/performance advantage to AMD at the CPU level at list price.
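The integer figures can be checked the same way. This short sketch uses only the ratings and list prices quoted in the article; the variable names are illustrative.

```python
EPYC_INT, XEON_INT = 2400, 2830        # SPECint_rate2006 ratings from the text
EPYC_PRICE, XEON_PRICE = 4200, 13011   # list price per chip, in dollars

# Intel's raw integer throughput lead, as a fraction
intel_int_lead = XEON_INT / EPYC_INT - 1

# AMD's price/performance lead: rating per dollar, Epyc relative to Xeon
amd_value_ratio = (EPYC_INT / EPYC_PRICE) / (XEON_INT / XEON_PRICE)

print(f"Intel integer lead:  {intel_int_lead:.1%}")
print(f"AMD value advantage: {amd_value_ratio:.1f}X")
```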

According to Aylor, the big ODMs and the fast-moving OEMs all began shipping their initial Epyc systems in the third quarter, and AMD has been able to fully satisfy demand from these customers. Hewlett Packard Enterprise has shipped a one-socket sled for its Cloudline CL3150 based on the Epyc chips and is also going mainstream in the ProLiant DL385 Gen10 system, which starts shipping in December. The ProLiant DL380 is the most popular enterprise server in the world – if you discount all of those home-designed hyperscale and cloud machines, that is – and is based on the Xeon processor from Intel. The DL385 has been its Opteron and now Epyc companion. It will not be long, we suspect, before Dell gets a PowerEdge machine into the field with Epyc chips.

Among the OEMs and ODMs, there are six players shipping Epyc machinery now: Asus, Dihuni, Gigabyte, HPE, Supermicro, and Tyan. ASI Computer Technologies, Ingram, Synnex, and Tech Data are distributing Epyc processors and motherboards, and there are thirteen system integrators who are putting together machines on demand using the Epyc processors: AMAX, Boston, Boxx, Clustervision, E4, EchoStreams, Equus/ServersDirect, ICC, Koi, Megaware, NEC, Penguin Computing, and Silicon Mechanics.

One other thing. Aside from competitive performance (both CPU and memory) and a compatible instruction set, the AMD Epycs have one more benefit: AMD is not charging a memory tax, as the Xeon SP Platinum M parts do for access to higher density memory sticks that allow up to 1.5 TB per socket. The regular parts in the Xeon SP line top out at 768 GB per socket, but AMD will do 2 TB per socket thanks to having eight memory channels to the six channels Intel has with Skylake.

With memory prices more than double what they were a year ago, this is a big advantage. To get a certain memory capacity, an Epyc system can use less dense – and therefore less costly – memory sticks to attain that memory capacity and not sacrifice memory bandwidth. All it takes is making a commitment to filling out the memory slots in the machine and not half populating them as companies often do to leave room for expansion. Until memory prices come down – way down – half populating the slots with denser sticks is not a good strategy unless money is no object.
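The slot arithmetic behind that argument can be sketched as follows. Two things here are assumptions, not facts from the article: two DIMM slots per memory channel, and the list of common DIMM capacities. They are at least consistent with the per-socket ceilings quoted above (768 GB, 1.5 TB, and 2 TB).

```python
# Assumed common DDR4 stick capacities in GB (illustrative, not from the article)
DIMM_SIZES_GB = [8, 16, 32, 64, 128]

def max_capacity_gb(channels, dimm_gb, dimms_per_channel=2):
    """Per-socket capacity with every slot filled (assumes 2 slots per channel)."""
    return channels * dimms_per_channel * dimm_gb

def smallest_dimm(target_gb, channels, dimms_per_channel=2):
    """Smallest stick size that reaches target_gb when all slots are populated.

    Returns (stick size in GB, number of slots).
    """
    slots = channels * dimms_per_channel
    needed_per_slot = target_gb / slots
    for size in DIMM_SIZES_GB:
        if size >= needed_per_slot:
            return size, slots
    raise ValueError("target exceeds what the assumed DIMM sizes can reach")

# Ceilings: Epyc (8 channels) vs Xeon SP (6 channels)
print(max_capacity_gb(8, 128))  # Epyc with 128 GB sticks
print(max_capacity_gb(6, 128))  # Xeon SP M part with 128 GB sticks
print(max_capacity_gb(6, 64))   # regular Xeon SP with 64 GB sticks

# Hitting 512 GB per socket with all slots filled
print(smallest_dimm(512, channels=8))  # Epyc
print(smallest_dimm(512, channels=6))  # Xeon SP
```

With these assumptions, a 512 GB socket needs sixteen 32 GB sticks on Epyc but twelve 64 GB sticks on a Xeon SP, since 512 GB does not divide evenly across twelve slots with anything smaller.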

“Epyc system can use less dense – and therefore less costly – memory sticks”

kudos for stressing what seems little mentioned. The big memory makers predict shortages at least through 2018.

In a related area, amd have a big edge in extending dram with nand, in the form of nvme raid. Epyc includes a lot more lanes to allow more drives, and includes native raid – arrays that servers page to, to simulate bigger memory.

Interesting article.

I have one question. Does the SPECfp_rate2006 source code use AVX 512 intrinsics, or is it just compiling c/c++ with auto vectorization?