The years-long run-up to the first exascale supercomputers was really a story about the ongoing competition between the United States and China. Who was going to get there first? How long was it going to take? How much of an advantage would the country with the first exascale systems see in everything from technology to business to defense?

In more recent years, the European Union has muscled its way into the conversation through its EuroHPC program and plans to build three exascale-class supercomputers, with the first to be housed at the Forschungszentrum Jülich research institution in Germany and built with as many Euro-centric components, systems, and technologies as possible.

Now here comes the post-Brexit United Kingdom with its own plans to become a significant player on the HPC and AI scene. UK Prime Minister Rishi Sunak last month announced the creation of the Department for Science, Innovation and Technology (DSIT), pulling under one umbrella the tech-related duties that had been spread across the Departments for Digital, Culture, Media and Sport (DCMS) and Business, Energy and Industrial Strategy (BEIS).

Sunak last week unveiled the first key piece out of DSIT, the Science and Technology Framework that includes ten points he wants all parts of the government to embrace, from showcasing the UK’s strengths in science and technology to boosting private and public investment and pursuing international diplomacy and partnerships.

The goal is to push the UK into becoming a technology and science superpower by 2030, with the framework getting an initial financial boost of more than $450 million. The country is aiming high, focusing on such sectors as AI, semiconductors, and quantum computing. Sunak painted the effort as a way to bolster the UK’s economy and future.

“Trailblazing science and innovation have been in our DNA for decades,” he said. “But in an increasingly competitive world, we can only stay ahead with focus, dynamism and leadership. The more we innovate, the more we can grow our economy, create the high-paid jobs of the future, protect our security, and improve lives across the country.”

Reports have since emerged that part of the prime minister’s plan is to build a massive – and expensive – supercomputer approaching or at the exascale level. Bloomberg, citing unnamed sources, reported that Jeremy Hunt, the UK’s chancellor of the Exchequer, is reviewing requests to spend as much as $970 million to build the system that – in keeping with the government’s goals – would be built using systems, chips, and other components from UK companies.

By comparison, “Frontier” at Oak Ridge National Laboratory – the United States’ first exascale system, which is one of Hewlett Packard Enterprise’s “Shasta” Cray EX235a systems and is powered by AMD’s Epyc processors and Instinct MI250X GPU accelerators – cost about $600 million including non-recurring engineering costs.

Nothing has been decided – the UK Treasury has yet to approve the money and Bloomberg’s sources said they were skeptical it would be done before Sunak’s annual budget is released this week. That said, the idea of a homemade supercomputer – which wasn’t included as part of the Science and Technology Framework – invites speculation about which UK companies will supply the necessary technologies.

Arm – based in Cambridge in the UK – immediately comes to mind. It’s a British company with decades of experience designing systems-on-a-chip (SoCs), initially for smaller mobile and embedded devices but increasingly over the years for datacenter systems. Companies ranging from cloud giant Amazon Web Services (and maybe Microsoft?) to Ampere Computing (a startup launched by Intel veterans), with its Altra CPUs, are leveraging Arm designs, and even Qualcomm reportedly is thinking of getting back into the Arm-based datacenter race. Fujitsu powers its “Fugaku” supercomputer – once the world’s fastest system, per the Top500 list – with its Arm-based A64X chips.

It seems unlikely that the UK government will sponsor the creation of its own indigenous Arm processor, but weirder things have happened. (This is why Fujitsu is underwritten by the Japanese government for its HPC efforts. It is a national security issue.)

But Arm is really a global company at this point, being part of Japan’s SoftBank conglomerate. In addition, Arm spurned the UK’s London Stock Exchange for its upcoming initial public offering and is reportedly planning to file papers next month in the United States with either the New York Stock Exchange or the NASDAQ. That IPO, scheduled for later this year, could generate some $8 billion for the company. The IPO is essentially SoftBank’s Plan B for Arm, after a $40 billion bid by GPU-maker Nvidia to buy the chip designer fell through last year amid antitrust concerns from US and European regulators.

The need to be indigenous could open up the lane for Graphcore, a company based in Bristol that is smaller than Arm but has big ambitions and actually designs its own full processors. (Arm does not, as yet, do that. But it could make one for the UK’s exascale supercomputer project for the right amount of money.) Graphcore is known for its highly parallel processing IPUs, which are designed for running AI training and inference workloads, but it’s aiming higher now and might position itself as an alternative to Arm in Sunak’s supposed massive supercomputer.

Graphcore’s engineers have been focused on the intersection of AI and HPC, and the company said last year that in 2024 it will deliver an “ultraintelligence AI” system called the “Good” system, capable of more than 10 exaflops of AI floating point compute performance. It will run 8,192 of the company’s future-generation IPUs and cost about $120 million, a fraction of what Frontier cost and much less than the amount proposed by Sunak’s government.

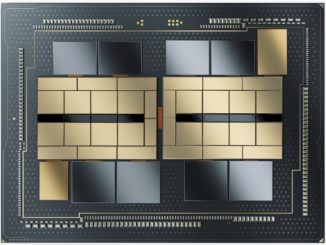

As we noted last year, Graphcore has pushed the innovation envelope in its chip designs, including with the 3D wafer-on-wafer design for its AI chips that it has been working on with Taiwan Semiconductor Manufacturing Co for several years to improve power delivery to the IPU while boosting performance, cutting power consumption, and increasing clock speed.

Various US national laboratories have tested Graphcore’s IPUs to gauge how they perform while running workloads in large systems, with Argonne National Lab reporting that the results were “impressive.” Given what the UK government is looking to do with its upcoming supercomputers, such positive feedback from Argonne could make Graphcore’s IPUs an attractive alternative – or complement – to Arm-based systems.

Phil Brown, Graphcore’s vice president of scaled systems, told The Next Platform last year that even though the chip maker is building the Good supercomputer to handle exascale-size AI workloads, its IPUs could be used for other exascale systems – AI-focused or otherwise – as part of larger heterogeneous systems, based on feedback from those in the HPC space.

“The message that we’ve been getting from them is that they’re very interested in exploring exascale system architectures that include components of different types that give them a good balance of overall capability for their systems, because they recognize that the workloads are going to become more heterogeneous in terms of the space but also the performance and the value proposition you get from these heterogeneous processors is well worth the investment,” Brown said.

Sounds like something the UK supercomputer effort could use. We won’t be surprised if it is a hybrid machine mixing different kinds of compute. But, when you mix, you limit the capability-class scaling of the machine because only a portion of it has the accelerator.

Seems unlikely. HMG prefers to sponsor our competitors and watch British competence dwindle. Even better is to start a project, get cold feet, keep changing the spec, then pull out and blame the supplier, who is left carrying the can.

It seems to me (to some extent at least) that the British MP is mixing metaphors, conflating exascale computing with UK industrial development, resulting in a quantum paradox of buzzword bingo – as exemplified by this very sentence. The confusion (in my mind) is between means and ends, carts and horses, or pits and pendulums, knotted together by a Gordian catch-22. If the program goal is to foster the advancement of UK science and industry (end) by providing engineers and scientists with a public exaflop facility (means), then it would be most logical to “simply” purchase an HPE-Cray system based on MI300A, at approximately $300M, to be installed by X-mas ’23, and operational in January ’24. AMD, HPE-Cray, Supermicro, Intel, NVIDIA, IBM, and Fujitsu-NEC have essentially licked that challenge, and the exascale vanity boat has all but sailed at this juncture, horses left the barn, the pendulum has swung (those of them who aren’t quite there yet, will be, in a matter of months, not years). Accordingly, Exascale computing is now manufacturing reality, not research, and a plan to foster the development of UK science and industry (end) by sponsoring the development of a UK-made Exascale computer (means, or end?) is, at best, today, steam-punk! Might as well sponsor development of Babbage’s analytical engine, and then use that as the basis for UK HPC, AI and cloud computing. As discussed last week on this very Next Platform, the contemporary target for research and industry development is now the Zettascale. Neither Robin Hood, nor William Tell, could possibly reach the apple of knowledge rising from Newton’s contributions if they were aiming any lower!

Graphcore’s filed accounts look like they are earning close to nothing given the billion dollars plus of investment they have taken. Hope the government is not planning on subsidising poor execution with taxpayers’ money to cover up their incompetence in coming up with a national semiconductor strategy a decade too late.