For more than a decade, the pace of the server market was set by the rollout of Intel’s Xeon processors each year. To be sure, Intel did not always roll out new chips like clockwork, on a predictable and more or less annual cadence as the big datacenter operators like. But there was a steady drumbeat of Xeon CPUs that were coming out the fab doors until Intel’s continual pushing out of its 10 nanometer chip manufacturing processes caused all kinds of tears in the Xeon roadmap, finally giving others a chance to get a toehold in datacenter compute on CPUs.

As we look ahead into 2022, the datacenter compute landscape is considerably richer than it was a decade ago. And not just because AMD is back in the game, creating competitive CPUs and GPUs and will by the end of the first quarter acquire FPGA maker Xilinx if all goes well. (That $35 billion deal, announced in October 2020, was expected to close by the end of 2021, but was pushed out as antitrust regulators are still scrutinizing the details.) Now, for those of you who have been around the datacenter for decades, the diversity we are looking at is nothing like what we knew in the distant past when system makers owned their entire hardware and software stacks and developed everything from the CPUs on up to operating systems, databases, and file systems. Back in the day in the late 1980s, there were around two dozen different commercially viable CPUs and probably three dozen operating systems on top of them in the datacenter. (Those were the days. . . . ) It looked for a while like we might end up with an Intel Xeon monoculture in the datacenter, but for myriad reasons – namely that customers like choice and competitors chase profits to get a piece of them – that obviously has not happened. Which sure makes datacenter compute a whole lot more interesting.

So do the increasing heterogeneity of compute inside of a system and the sufficiently wide variety of suppliers and architectures that compete for work in the glass house full of such systems.

This year, albeit a bit later than it had hoped, Intel will be launching the “Ponte Vecchio” Xe HPC GPU, its first datacenter GPU aimed at big compute and the replacement for the many-core “Knights” family of accelerators that debuted in 2015 and were sunsetted three years later. AMD has launched its “Aldebaran” GPU engine in the Instinct MI200 family of accelerators, which absolutely are a credible alternative to Nvidia’s “Ampere” GA100 GPUs and the A100 accelerators that use them and which are getting a little long in the tooth, having been launched in May 2020. (Don’t worry, Nvidia will fix this soon.) And to make things interesting, Nvidia is working on its own “Grace” Arm server CPU, although we won’t see that come into the market until 2023. So that is all we are going to say about Grace as we look ahead into just 2022. The point is, the big three datacenter compute vendors – Intel, AMD, and Nvidia – will all have CPUs and GPUs in the field at the same time in a little more than a year and Intel and AMD will have datacenter CPUs, GPUs, and FPGAs in the field this year.

Nvidia does not believe in FPGAs as compute engines, so don’t knee-jerk react and think Nvidia will go out and buy FPGA maker Achronix after its SPAC initial public offering was canceled last July or buy Lattice Semiconductor, the other FPGA maker that matters. It ain’t gonna happen.

But a lot is going to happen this year in datacenter compute, and below are just the highlights. Let’s start with the CPUs:

Intel “Sapphire Rapids” Xeon SP: The much-anticipated kicker 10 nanometer Xeon server chip, and one that is based on a chiplet architecture. Sapphire Rapids, unlike its “Ice Lake” and “Cooper Lake” predecessors, will comprise a full product line, spanning from one to eight sockets gluelessly. (Ice Lake was limited to one and two sockets and Cooper Lake was limited to four and eight sockets because the crumpled-up roadmap made them overlap.) Sapphire Rapids will have up to 56 cores per socket in a maximum of 350 watts if the rumors are right. Sapphire Rapids will sport DDR5 memory and PCI-Express 5.0 peripherals, including support for the CXL interconnect protocol, and is said to support up to 64 GB of HBM2e memory and 1 TB/sec of bandwidth per socket for those HPC and AI workloads that need it. The chip is rumored to support up to 80 lanes of PCI-Express 5.0, so it will not be starved for I/O bandwidth as prior Xeon SPs, such as the “Skylake” and “Cascade Lake” generations, were. The third generation “Crow Pass” Optane 300 series persistent memory will also be supported on the “Eagle Stream” systems that support the Sapphire Rapids processors.

AMD “Genoa” and “Bergamo” Epyc 7004: While Intel is moving to second-generation 10 nanometer processes for Sapphire Rapids, AMD will be bounding ahead this year to the 5 nanometer processes from Taiwan Semiconductor Manufacturing Co with its “Genoa” and “Bergamo” Epyc 7004 CPUs based on the respective Zen 4 and Zen 4c cores, which AMD unveiled with very little data back in November while also pushing out the “Milan-X” Epyc 7003 chips with stacked L3 cache memory. The Genoa Epyc 7004 is coming out in 2022, timed, we think, with whenever AMD thinks Intel can get Sapphire Rapids out, and sporting 96 cores and support for DDR5 memory and PCI-Express 5.0 peripherals. It looks like AMD wanted to get the 128 core Bergamo variant of the Epyc 7004 out the door in 2022, but is only promising it will be launched in 2023. We think that depending on yield and demand, AMD could try to ship Bergamo before its official launch to a few hyperscalers this year. We shall see.

Ampere Computing “Siryn” and probably not branded Altra: The company has been ramping up sales of its 80 core “Quicksilver” Altra and 128 core “Mystique” Altra Max processors throughout 2021, both based on the Arm Holdings Neoverse N1 cores and both etched using 7 nanometer processes from TSMC. This year sees the rollout of the Siryn CPUs, which are based on a homegrown core that we have been calling the A1 and that has been under development for years by Ampere Computing, as well as a shift to 5 nanometer TSMC manufacturing. It will be interesting to see if the A1 cores go wide, as Amazon Web Services has done with its Graviton3 processors (which are based on the Arm Holdings Neoverse V1 cores), or if Ampere Computing will use a more minimalist design and pump up the core count. As we wrote back in May last year, we think that the Siryn chips will sport 192 of the A1 cores, which will be stripped down to the bare essentials that the hyperscalers and cloud builders need, and we think further that the kicker to Siryn, due in 2023, will have up to 256 cores based on either a tweaked A1 core or a brand new A2 core. The Siryn chips will almost certainly support DDR5 memory and PCI-Express 5.0 peripherals, and very likely will also support the CXL interconnect protocol for accelerators. We do not expect Ampere Computing ever to add simultaneous multithreading (SMT) to its cores, as a few failed Arm server chip suppliers did; AWS does not use SMT in its Graviton line, either.

IBM “Cirrus” Power10: Big Blue claims to not have a codename for its Power10 chip, so last year we christened it “Cirrus” because we have no patience for vendors who don’t give us synonyms. The 16 core Cirrus chip, which we detailed back in August 2020, debuted in September 2021 in the “Denali” 16 socket Power E1080 servers. The Power E1080 has a Power10 chip with eight threads per core using SMT and 15 of the 16 cores are activated in each chip and IBM also has the capability to have two Power10 chips share a single socket. But with the lower-end Power10 chips coming this year, IBM has the capability to cut the cores in half to deliver twice as many cores with half as many threads – a capability that was also available in the low-end “Nimbus” Power9 chips, by the way. Anyway, IBM will be able to have up to 30 active SMT8 cores and up to 60 active SMT4 cores in a single socket using dual chip modules (DCMs), and has native matrix and vector units in each core to accelerate HPC and AI workloads to boot. The Power10 core has eight 256-bit vector math engines that support FP64, FP32, FP16, and Bfloat16 operations and four 512-bit matrix math engines that support INT4, INT8, and INT16 operations; these units can accumulate operations in FP64, FP32, and INT32 modes. IBM has a very tightly coupled four-socket, DCM-based Power E1050 system (we don’t know its code name as yet) which will have very high performance and very large main memory, as well as the “memory inception” memory area networking capability that is built into the Power10 architecture and that allows for machines to share each other’s memory as if it is local using existing NUMA links coming off the servers.
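The core and thread counts in the Power10 paragraph above can be checked with a small sketch. The class and field names here are hypothetical and only model the arithmetic described in the text (15 active cores per chip, two chips per socket in a DCM, and the option to present each SMT8 core as two SMT4 cores); this is not a description of how IBM firmware actually does it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SocketConfig:
    """Hypothetical model of one Power10 socket, per the figures in the text."""
    chips_per_socket: int        # 2 for a dual chip module (DCM)
    active_cores_per_chip: int   # SMT8 cores enabled on each chip (15 of 16)
    split_cores: bool            # True: each SMT8 core appears as two SMT4 cores

    @property
    def cores(self) -> int:
        factor = 2 if self.split_cores else 1
        return self.chips_per_socket * self.active_cores_per_chip * factor

    @property
    def threads_per_core(self) -> int:
        return 4 if self.split_cores else 8

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core


dcm_smt8 = SocketConfig(chips_per_socket=2, active_cores_per_chip=15, split_cores=False)
dcm_smt4 = SocketConfig(chips_per_socket=2, active_cores_per_chip=15, split_cores=True)

for name, cfg in (("SMT8", dcm_smt8), ("SMT4", dcm_smt4)):
    print(f"{name}: {cfg.cores} cores x {cfg.threads_per_core} threads = {cfg.threads} threads")
```

Either way the socket exposes the same number of hardware threads; splitting the cores only changes how they are carved up.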

IBM “Telum” z16: The next generation processor for IBM’s System z mainframes, the z16, which we talked about back in August 2021, is architecturally interesting and is certainly big iron, but it is probably not the next platform for anyone but existing IBM mainframe shops. The Telum chip is interesting in that it has only eight cores, but they run at a base 5 GHz clock speed. The z16 core supports only SMT2 and has a very wide and deep pipeline, and it also has its AI acceleration outside of the cores but accessible using native functions that will allow for inference to be relatively easily added to existing mainframe applications without any kind of offload.

It would be great if the rumored Microsoft/Marvell partnership yielded another homegrown Arm server chip, and it would further be great if late in 2022 AWS put out a kicker Graviton4 chip just to keep everyone on their toes. And of course, we would have loved for Nvidia’s Grace Arm CPU, which will have fast and native NVLink ports to connect coherently to Nvidia GPUs and more than 500 GB/sec of memory bandwidth per socket, to come out in 2022.

Now, let’s talk about the GPU engines coming in 2022.

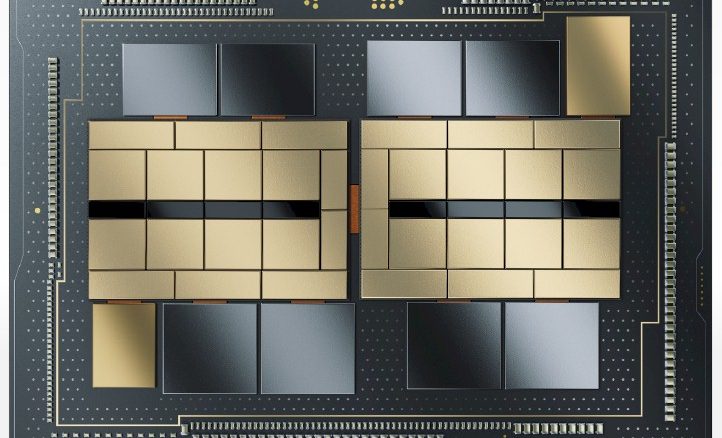

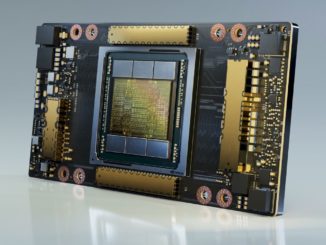

Nvidia “Hopper” or A100 Next: There is plenty of confusion about codenames for Nvidia GPUs, but we think Nvidia’s kicker to the GA100 GPU, called A100 Next on the roadmaps and codenamed “Hopper” and the GH100 in the chatter, will be announced at the GPU Technology Conference in March of this year. Very little is known about the GH100, but we expect it to be etched in a 5 nanometer process from TSMC and we also expect for Nvidia to create its first chiplet design and to put two GPU chiplets into a single package, much as AMD has just done with the “Aldebaran” GPUs used in the Instinct MI200 series accelerators. With AMD delivering 47.9 teraflops of double precision FP64 performance in the Aldebaran GPU and Intel expected to offer in excess of 45 teraflops of FP64 performance in the “Ponte Vecchio” GPU coming this year, it will be interesting to see if Nvidia jacks up the FP64 performance on the Hopper GPU complex.

AMD “Aldebaran” Instinct MI200 Cutdown: AMD has doubled up the GPU capacity with two chiplets in a DCM for the Instinct MI200 devices, so why not create a GPU accelerator that fits in a smaller form factor, has a lower thermal design point, and comes at a much cheaper price point per unit of performance by only putting one chiplet in the package? No one is talking about this, but it is a possibility. It could take on the existing Nvidia A100 very nicely.

Intel “Ponte Vecchio” Xe HPC: Intel will finally get a datacenter GPU accelerator into the field, but at a rumored 600 watts to 650 watts, the performance that Intel is going to bring to bear is going to come at a relatively high thermal cost if these numbers are correct. We profiled the first generation Xe HPC GPU back in August 2021, which is a beast that has 47 different chiplets interconnected with Intel’s 2D EMIB chiplet interconnect and Foveros 3D chip stacking. With the vector engines clocking at 1.37 GHz, the Ponte Vecchio GPU complex delivers 45 teraflops at FP64 or FP32 precision and its matrix engines deliver 360 teraflops at TF32, 720 teraflops at BF16, and 1,440 teraops at INT8. This may be a hot GPU, but it is a muscle car. This is a lot more matrix performance than AMD is delivering with Aldebaran – 1.9X at BF16 and 3.8X at FP32 and INT8.
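The ratios quoted above can be reproduced with a few lines of arithmetic. The Ponte Vecchio figures come from the paragraph itself; the Aldebaran figures are not given in this article, so the values below are an assumption taken from AMD's published peak matrix specifications for the Instinct MI250X. Note that the comparison pairs Intel's TF32 number with AMD's FP32 matrix number, which is not a like-for-like format.

```python
# Ponte Vecchio peak matrix throughput, as quoted in the article (tera-ops/sec).
ponte_vecchio = {"TF32": 360.0, "BF16": 720.0, "INT8": 1440.0}

# ASSUMPTION: Aldebaran (Instinct MI250X) peak matrix figures from AMD's public
# spec sheet; these numbers do not appear in the article itself.
aldebaran_assumed = {"FP32 matrix": 95.7, "BF16": 383.0, "INT8": 383.0}

# Which Intel format is being compared against which AMD format.
pairs = [("BF16", "BF16"), ("TF32", "FP32 matrix"), ("INT8", "INT8")]

ratios = {
    intel_fmt: round(ponte_vecchio[intel_fmt] / aldebaran_assumed[amd_fmt], 1)
    for intel_fmt, amd_fmt in pairs
}

for intel_fmt, amd_fmt in pairs:
    print(f"Intel {intel_fmt} vs AMD {amd_fmt}: {ratios[intel_fmt]}X")
```

Under those assumed AMD figures, the rounded ratios line up with the 1.9X and 3.8X multiples cited in the text.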

And finally, that brings us to FPGAs. There has not been a lot of activity here, and frankly, we are not sure where Xilinx is on the rollout of its “Everest” Versal FPGA compute complexes, which have a chiplet architecture, and where Intel is at with the rollout of its Agilex FPGA compute complexes, which use a chiplet architecture and EMIB interconnects, and its successor devices (presumably also branded Agilex), which will use a combination of EMIB and Foveros just like the Ponte Vecchio GPU complex does. We need to do more digging here.

As for AI training and inference engines, which are also possibly an important part of the future of datacenter compute, that is a story for another day. There is a lot of noise here, and some traction and action, but these are nowhere near the datacenter mainstream.

Happy New Year!

While this is mainstream, no comment on Qualcomm, SiPearl or processors made in China, South Korea or Japan?

For exactly the reason I said. A64FX is in and done. We have covered SiPearl and it will be just for a few machines at best. Qualcomm has no server part that I am aware of, although it is apparently working on some accelerator for HPC. We have talked about the Chinese and Korean chips, and they are again special cases for one-off and two-off machines. I’m talking mainstream datacenter compute — hyperscalers, cloud builders, and large enterprise. HPC centers can build an exascale machine out of squirrels on wheels with an abacus in each hand if they want to. . . as you well know.

“late 1980s… (Those were the days)”

An interesting time, full of diverse innovation, but also kind of a great British baking show kind of innovation.

‘Given a limit of 150,000 transistors, and 200 I/O pins, how fast can you make a processor run? -Oh, and get useful work done with 4MB of RAM, and open source software doesn’t exist, so your operating system needs to be written by fewer than 20 people, or be based on AT&T.’ So, those were the days for tech journalists (and also monetizing a print magazine vs web journalism), but computers really sucked. The pace of innovation, and diversity of ideas may have fallen off, but mostly because we have an embarrassment of riches: Transistors are basically free if you can figure out how to connect them. RAM capacity is free in order to get the bandwidth. Embedded graphics will drive half a dozen HD displays with real-time 3D graphics. Free operating systems include robust networking, encryption, security, dozens of programming languages, built in compilers. I kind of agree with the love of workstation and datacenter systems of that period, but all that innovation was to make very expensive, bespoke systems to do even a couple very basic tasks that are now all taken for granted.

Geez, Paul. I think systems were pretty diverse back then, and doing a lot of interesting stuff. Or rather, as much as people could think of at the time. When I look at the long evolution of systems, from Hollerith punch card machines in 1890 all the way up to today, I am amazed at the incremental innovation every step of the way to today, and each step is always difficult. We have always had 10 percent less than what we need, and it is how we cope that makes this all interesting. I liked the diversity of systems because many different ideas for systems, not just CPUs, were tested out in the field and there was a competition between these ideas in the market. Same as today. This is also what makes it interesting. I ain’t bored yet, and every time has its challenges.

You’re right, and 150k transistors seemed like a heck of a lot when the prior system had 50k.

Please don’t use the old Intel naming terminology (“10 nm”). It will only confuse things more. Let’s have some consistency. Intel has changed the name to “Intel 7” and the Alder Lake CPUs suggest that it really is best compared with TSMC’s “7 nm” process.

I’ll be surprised if AMD’s Zen4 server chips are available in any volume at all soon after the launch of Intel’s Sapphire Rapids.

Regarding the NVIDIA GPU, I’m skeptical NVIDIA will come out with a 5 nm GPU in any volume in 2022. If they do it seems like a bit of a change from how early they produce on a node, historically. Perhaps if it’s a late 2022 product. For the A100 they already had lots of them floating around when they formally launched it at GTC in May 2020. If they use 5 nm for the successor and announce that at GTC 2022 in March I doubt many will be floating around until much later in 2022. I doubt NVIDIA will focus too much on FP64 in their new part unless they can use those transistors for lower precision compute as well. As the years go on the importance of lower precision compute as compared to FP64 compute is becoming greater and greater for NVIDIA’s data center business. AMD’s GPU business, in contrast, consists mostly of supercomputing facilities that rely a lot on FP64. I doubt NVIDIA will sacrifice performance in 95%+ of their revenue stream in order to defend the 5% from AMD. If expanding the FP64 comes at the cost of lower-precision performance NVIDIA is likely to either bifurcate their product line, as Intel originally planned to do with their HP and HPC lines, or they will allow AMD to have an advantage in supercomputing. I think they should have the money to do the former. Even if they don’t make much money on them, those supercomputers are a high-visibility “halo” market. Even though NVIDIA hates to do such a thing, if they can’t repurpose extra FP64 transistors efficiently, it might be worth it to bifurcate their product line to compete better with AMD and Intel in supercomputing.

Regarding supercomputing, it seems reports are now for Frontier to have early access pushed back to June 2022 with full user access pushed to Jan. 1, 2023. This despite assurances otherwise in October of 2021 when Aurora was (again) being pushed back: https://executivegov.com/2021/12/installation-of-supercomputer-frontier-at-oak-ridge-national-lab-now-underway/ So Frontier seems to arrive not that much before Aurora, any more.

If Aurora really does hit 2.43 exaflops peak it will have a peak efficiency of about 24 MW / exaflops whereas the Frontier machine will have a peak efficiency of about 19 MW / exaflops. Intel is promising 45 TFLOPs of FP64 vector performance per GPU. That means, with 54,000 GPUs in Aurora, we have 2.43 exaflops of peak FP64 vector performance. So it checks out. Suppose the 650 Watts rumor is true. AMD, on their web site, is promising 45.3 TFLOPs of FP64 vector at 560 W peak. So the Intel GPU uses 16% more power for the same performance as the AMD GPU. If we instead use the 500 W number on AMD’s site we get the Intel GPU using 30% more power than the AMD GPU. Frontier’s 19 MW/exaflops plus 16% is 22 MW/exaflops and if instead we add 30% to it we end up with 24.7 MW/exaflops. Aurora’s power usage should be at most around 24 MW/exaflops, putting it in that range. So that all checks out. So all indications are that, from a peak theoretical standpoint, Ponte Vecchio seems to use 15% to 30% more power for the same performance as Aldebaran. It’s not that much more power hungry. However, supercomputers using the A100 seem to use the A100’s FP64 matrix operations for their Linpack numbers. So I would have expected that Frontier would do the same, but it seems that they are not because 1.5 exaflops divided by 36,000 GPUs is about 42 TFLOPs per GPU, around what Aldebaran can get from FP64 vector and half what it gets from FP64 matrix. However there could be something else going on there. I have to say somehow these accelerators and supercomputers seem to be shrouded in much more mystery and intrigue than is normal. Between the large number of execution units, the various power consumption figures quoted, vector instructions versus matrix instructions, and strange transistor to die size quotes it isn’t easy to determine how best to compare the accelerators. We will have to wait for some real world experience.
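The back-of-the-envelope arithmetic in the comment above can be sketched as follows. Every input is a figure quoted in that comment (rumored or vendor peak numbers, not measurements); the function and variable names are hypothetical, and treating the two GPUs as delivering the same FP64 throughput is the commenter's simplification.

```python
TERA = 1e12
EXA = 1e18


def peak_exaflops(gpus: int, tflops_per_gpu: float) -> float:
    """Aggregate peak throughput of a machine, in exaflops."""
    return gpus * tflops_per_gpu * TERA / EXA


def extra_power_pct(watts_a: float, watts_b: float) -> float:
    """How much more power A draws than B, in percent, at equal performance."""
    return (watts_a / watts_b - 1.0) * 100.0


# Aurora: 54,000 GPUs at a promised 45 TFLOPs of FP64 vector each.
aurora_peak = peak_exaflops(54_000, 45.0)

# Rumored 650 W Ponte Vecchio versus the two AMD power figures in the comment.
pct_vs_560w = extra_power_pct(650.0, 560.0)
pct_vs_500w = extra_power_pct(650.0, 500.0)

# Scale Frontier's roughly 19 MW per exaflops by those two power ratios.
frontier_mw_per_ef = 19.0
scaled_low = frontier_mw_per_ef * (650.0 / 560.0)
scaled_high = frontier_mw_per_ef * (650.0 / 500.0)

# Frontier: 1.5 exaflops spread over 36,000 GPUs, in TFLOPs per GPU.
frontier_tflops_per_gpu = 1.5 * EXA / 36_000 / TERA

print(f"Aurora peak: {aurora_peak:.2f} exaflops")
print(f"Extra power vs 560 W: {pct_vs_560w:.0f}%, vs 500 W: {pct_vs_500w:.0f}%")
print(f"Frontier scaled: {scaled_low:.1f} to {scaled_high:.1f} MW per exaflops")
print(f"Frontier per GPU: {frontier_tflops_per_gpu:.1f} TFLOPs")
```

The results agree with the comment's rounded figures: about 16 percent and 30 percent more power, a scaled range of roughly 22 to 24.7 MW per exaflops, and just under 42 TFLOPs per Frontier GPU.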

Finally, I have to say this has seemingly become a very AMD-Gung Ho site whereas it used to have a lot of faith in Intel.

The MI200 cutdown you are talking about was already announced; it is the MI210.

You assume that the MI210 is not a double whammy, but I do not. I think the PCI-Express version will be almost the same performance as the MI250, as I showed here: https://www.nextplatform.com/2021/11/09/the-aldebaran-amd-gpu-that-won-exascale/

Slower clock, smaller memory, lower memory bandwidth so those who don’t want to use OAM can get a reasonable GPU from AMD for their systems. If I am right, then there is room for something that is a half or a little more than half the MI210 and still a lot better than the MI100.

I certainly hope the mi210 is a double chip card with slightly reduced perf from the 250/x. An Aldebaran with a single chip and ~22TF FP32/64 would be pointless, people would just buy nVidia.