Stanford’s TETRIS Clears Blocks for 3D Memory Based Deep Learning

The need for speed to process neural networks is far less a matter of processor capabilities and much more a function of memory bandwidth. …

Exascale computing, which has been long talked about, is now – if everything remains on track – only a few years away. …

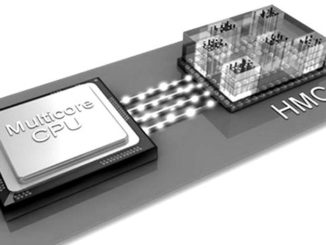

The idea of bringing compute and memory functions in computers closer together physically within the systems to accelerate the processing of data is not a new one. …

Over the last two years, there has been a push for novel architectures to feed the needs of machine learning and more specifically, deep neural networks. …

With the “Skylake” Xeon E5 v5 processors not slated until the middle of next year and the “Knights Landing” Xeon Phi processors and Omni-Path interconnect still ramping after entering the HPC space a year ago, there are no blockbuster announcements coming out of Intel this year at the SC16 supercomputing conference in Salt Lake City. …

People tend to obsess about processing when it comes to system design, but ultimately an application and its data live in memory, and anything that can improve the capacity, throughput, and latency of memory will make all the processing you throw at it result in useful work rather than wasted clock cycles. …
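The claim that memory decides whether clock cycles do useful work can be made concrete with the widely used roofline model. In that model, attainable throughput is the smaller of peak compute and memory bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The Python sketch below is illustrative only. The function names, the peak compute figure, the layer's intensity, and both bandwidth figures (a "planar" and a "3D-stacked" memory) are hypothetical assumptions, not measurements of any real chip.

```python
# Minimal roofline-model sketch. All hardware numbers below are
# hypothetical, chosen only to illustrate the bandwidth-bound regime.

def attainable_gflops(peak_gflops: float, bandwidth_gb_s: float,
                      intensity_flop_per_byte: float) -> float:
    """Roofline bound: min(peak compute, memory bandwidth * arithmetic intensity)."""
    return min(peak_gflops, bandwidth_gb_s * intensity_flop_per_byte)


def ridge_point(peak_gflops: float, bandwidth_gb_s: float) -> float:
    """Arithmetic intensity (FLOP/byte) above which compute, not memory, is the limit."""
    return peak_gflops / bandwidth_gb_s


if __name__ == "__main__":
    PEAK = 10_000.0   # hypothetical peak compute, GFLOP/s
    INTENSITY = 2.0   # hypothetical layer: FLOPs per byte moved from memory
    for name, bw in [("planar DRAM", 100.0), ("3D-stacked DRAM", 400.0)]:
        perf = attainable_gflops(PEAK, bw, INTENSITY)
        print(f"{name}: {perf:.0f} GFLOP/s "
              f"({100 * perf / PEAK:.0f}% of peak, "
              f"ridge at {ridge_point(PEAK, bw):.0f} FLOP/byte)")
```

With these assumed numbers the layer sits well left of the ridge point in both cases, so quadrupling bandwidth quadruples attainable throughput while peak compute goes almost entirely unused. Once a workload's intensity passes the ridge point, extra bandwidth no longer helps.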

A new crop of applications is driving the market along some unexpected routes, in some cases bypassing the processor as the landmark for performance and efficiency. …

As Moore’s Law spirals downward, ultra-high bandwidth memory matched with custom accelerators for specialized workloads might be the only saving grace for the pace of innovation we are accustomed to. …

We have heard about a great number of new architectures and approaches to scalable and efficient deep learning processing that sit outside the standard CPU, GPU, and FPGA box, and while each is different, many leverage a common element at the all-important memory layer. …

Intel has planted some solid stakes in the ground for the future of deep learning over the last month with its acquisitions of deep learning chip startup Nervana Systems and, most recently, mobile and embedded machine learning company Movidius. …

All Content Copyright The Next Platform