Exascale Might Prove To Be More Than A Grand Challenge

The supercomputing industry is accustomed to 1,000X performance strides, and that is because people like to think in big round numbers and bold concepts. …

The supercomputing industry is as insatiable as it is dreamy. We have not even reached our ambitions of hitting the exascale level of performance in a single system by the end of this decade, and we are stretching our vision out to the far future and wondering how the capacity of our largest machines will scale by many orders of magnitude more. …

Rumors that supercomputer maker Fujitsu would drop the Sparc architecture and move to ARM cores for its next generation of supercomputers have been circulating since last fall. At the International Supercomputing Conference in Frankfurt, Germany this week, officials at the server maker and RIKEN, the research and development arm of the Japanese Ministry of Education, Culture, Sports, Science and Technology (MEXT) that currently houses the mighty K supercomputer, confirmed that this is indeed true. …

Compute is by far still the largest part of the hardware budget at most IT organizations, and even with the advance of technology, which allows more compute, memory, storage, and I/O to be crammed into a server node, we still seem to always want more. …

Nvidia made a lot of big bets to bring its “Pascal” GP100 GPU to market and its first implementation of the GPU is aimed at its Tesla P100 accelerator for radically improving the performance of massively parallel workloads like scientific simulations and machine learning algorithms. …

Nallatech doesn’t make FPGAs, but it does have several decades of experience turning FPGAs into devices and systems that companies can deploy to solve real-world computing problems without having to do the systems integration work themselves. …

We can talk about storage and networking as much as we want, and about how the gravity of data bends infrastructure to its needs, but the server – or a collection of them loosely or tightly coupled – is still the real center of the datacenter. …

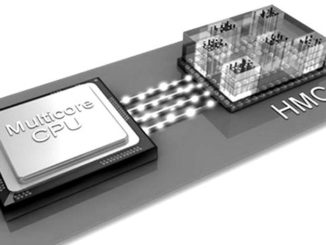

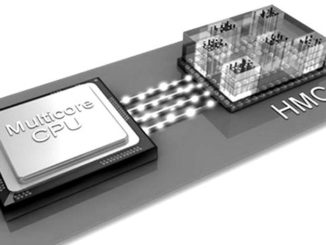

There has been quite a bit of talk over the last couple of years about what role high bandwidth memory technologies like the Intel and Micron-backed Hybrid Memory Cube (HMC) might play in the future of high performance computing nodes and other devices. Momentum is still somewhat slow, though, at least in terms of actual systems implementing HMC or its rival, High Bandwidth Memory (backed by a different consortium of vendors, including Nvidia and AMD). …

There is little doubt that the memory ecosystem will heat up over the next five to ten years, with emerging technologies still in development that promise massive bandwidth, capacity, and price advancements. …

All Content Copyright The Next Platform