The server processor market has gotten a lot more crowded in the past several years, which is great for customers and which has made it both better and tougher for those that are trying to compete with industry juggernaut Intel. And it looks like it is going to be getting a little more crowded with several startups joining the potential feeding frenzy on Intel’s Xeon profits.

We will be looking at a bunch of these server CPU upstarts in detail, starting with Nuvia, which uncloaked itself from stealth mode last fall and which has said precious little about what it can do to differentiate in the server space with its processor designs. But Jon Carvill, vice president of marketing with long experience in the tech industry, gave The Next Platform a little more insight into the company’s aspirations as it prepares to break into the glass house.

Before we even get into who is behind Nuvia and what it might be up to, its very existence begs the obvious question: Why would anyone found a company in early 2019 that thinks there is room for another player in the server CPU market?

And this is a particularly intriguing question given the increasing competition from AMD and the Arm collective (led by Ampere and Marvell) and ongoing competition from IBM against Intel, which commands roughly 99 percent of server CPU shipments and probably close to 90 percent of server CPU revenue. We have watched more than three decades of consolidation in this industry, which went from north of three dozen different architectures and almost as many suppliers of operating systems to Intel dominating almost all of the shipments with its Xeons and taking almost all of the server CPU revenue, with Windows Server and Linux splitting most of the operating system installations and money.

Why now, indeed.

Or even more precisely, why haven’t the hyperscalers, who own their own workloads, as distinct from the big public cloud providers, who run mostly Windows Server and Linux code on X86 servers on behalf of customers who have zero interest in changing the applications, much less the processor instruction set, just thrown in the towel and created their own CPUs? It always comes down to economics, and specifically performance per watt and dollars per performance and the confluence of the two. And that is why the founders of Nuvia think they have a chance when others have tried and not precisely succeeded even if they have not failed. To be sure, AMD is getting a second good run now at Intel with the Epyc processors after a pretty good run with the Opterons more than a decade ago. But up until this point, Intel has done more damage to itself, with manufacturing delays, unaggressive roadmaps, and premium pricing, than AMD has done to it.

Clearly the co-founders of Nuvia see an opportunity, and they are seeing it from inside the hyperscalers. Gerard Williams, who is the company’s president and chief executive officer, had a brief stint after college at Intel, designed the TMS470 microcontroller at Texas Instruments back in the mid-1990s, and was the CPU architect lead for the Cortex-A8 and Cortex-A15 designs that breathed new life into the Arm processor business and landed it inside smartphones and tablets. Williams went on to be a Fellow at Arm, and in 2010, when Apple no longer wanted to buy chips from Samsung, it tapped Williams to be the CPU chief architect for a slew of Arm-based processors used in its iPhone and iPad devices – namely, the “Cyclone” A7, the “Typhoon” A8, the “Twister” A9, the “Hurricane” and “Zephyr” A10 variants, the “Monsoon” and “Mistral” A11 variants, and the “Vortex” and “Tempest” A12 variants. And Williams was also the SoC chief architect for unreleased products – and that can have a bunch of interesting meanings.

The two other co-founders, Manu Gulati, vice president of SoC engineering at Nuvia, and John Bruno, vice president of system engineering, both most recently hail from hyperscaler and cloud builder Google. Gulati cut his CPU teeth back in the mid-1990s at AMD, doing CPU verification and designing the floating point unit for the K7 chip and the HyperTransport and northbridge chipset for the K8 chip. Gulati then jumped to SiByte, a designer of MIPS cores, in 2000, and before the year was out Broadcom acquired the company and he spent the next nine years working on dual-core and quad-core SoCs. Gulati then moved to Apple and was the lead SoC architect for the company’s A5X, A7, A9, A9X, A11, and A12 SoCs. (Not just the CPU cores that Williams focused on, but all the stuff that wraps around them.) Between 2017 and 2019, Gulati was chief SoC architect for the processors used in Google’s various consumer products.

Bruno has a similar but slightly different resume, landing as an ASIC designer at GPU maker ATI Technologies after college and significantly as the lead on the design of several of ATI’s mobile GPUs prior to its acquisition by AMD in 2006 and for the Trinity Fusion APUs from AMD, which combine CPU and GPU compute in the same die. Bruno then did nearly six years at Apple as the system architect on the iPhone generations 5s through X, and like Gulati, moved to Google in 2017, in this case to be a system architect.

Both Gulati and Bruno left Google in March last year to join Williams as co-founders of Nuvia, which is not a skin product or a medicine, but a server CPU upstart. Carvill joined Nuvia last November soon after it uncloaked, and so did Jon Masters, formerly chief Arm software architect for Linux distributor Red Hat.

What do these people, looking out to the datacenter from their smartphones and tablets, see as not only an opportunity in servers, but as a chance to school server CPU architects on how to create a new architecture that leads in every metric that matters to datacenters: performance, energy efficiency, compute density, scalability, and total cost of ownership?

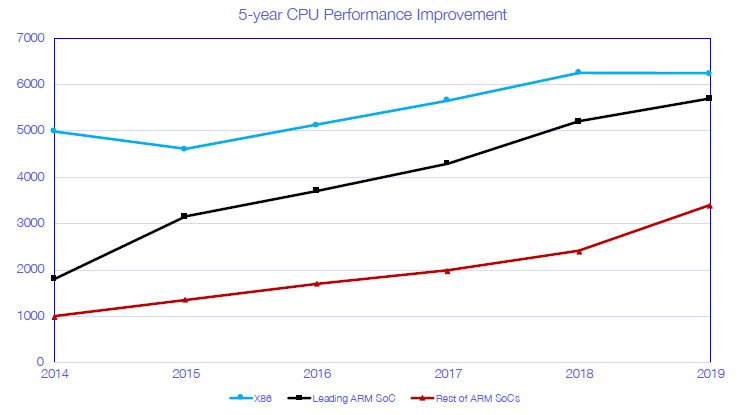

“This is a situation where Gerard, Manu, and John obviously had a pretty substantial role to play at a certain company in Cupertino in building a series of processors that were really designed to establish a step function improvement in performance, and also either a decrease in or, at a minimum, a consistent TDP,” Carvill tells The Next Platform. “And that has essentially redefined the performance level that people expect out of mobile phones. And now you have a scenario where those phones are performing very close to, if not in some cases exceeding, what you get out of a client PC and they are within striking distance of a server. Now, if you look at the servers, by contrast, now we have a similar problem that is beginning to manifest, especially at the hyperscalers, is that their datacenters have thermal envelopes that are becoming more and more constrained. They have not seen any meaningful improvement in IPC in CPU performance in some time. If you look at the last five years, they have largely had the same architectures. They have had incremental improvements in basic CPU performance. There’s been some new workloads on the scene and there’s been a lot of improvements in areas like AI and some other corner cases, for sure. But if you look at the core CPU, can you think of the last time you have seen a big meaningful difference or change in the datacenter?”

We have seen some big instructions per clock (IPC) jumps – think of the big jump with the initial Zen cores from AMD used in the “Naples” Epyc chips or in the Armv8 cores designed by Arm Holdings moving from its “Cosmos” to “Ares” reference chips. Even IBM has relatively big jumps in IPC between Power generations, but it takes more than three years for a generation to come to market. And when these big IPC jumps do happen, they are often one-off jumps because the architectures had been lagging for years. Speaking very generally, the improvement in instructions per clock has been stuck at somewhere around 5 percent, sometimes 10 percent, and rarely more per generation. But here’s the kicker. As the IPC goes up, the clock speed goes down because the core count is going up, and lowering clocks is the only way to add cores without increasing heat dissipation more than is already happening. Over the past decade, server CPUs have been getting hotter and hotter, and top-bin parts running full bore will soon be as searing as a GPU or FPGA accelerator.
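To make that trade-off concrete, here is a back-of-the-envelope sketch in Python. It rests on assumptions that are ours and not Nuvia's or any vendor's: per-core power is modeled as proportional to the cube of clock speed (a common rule of thumb when voltage scales with frequency), and the core counts, the 5 percent IPC gain, the 200 watt budget, and the constant `watts_per_core_at_1ghz` are all hypothetical numbers chosen for illustration.

```python
def max_clock_ghz(power_budget_w, cores, watts_per_core_at_1ghz):
    """Highest clock that fits the socket power budget.

    Assumed model (rule of thumb, not measured data):
    socket power = cores * watts_per_core_at_1ghz * clock_ghz ** 3
    """
    return (power_budget_w / (cores * watts_per_core_at_1ghz)) ** (1.0 / 3.0)


def throughput(cores, ipc, clock_ghz):
    """Aggregate throughput in relative units: cores * IPC * clock."""
    return cores * ipc * clock_ghz


def compare_generations(power_budget_w=200.0, watts_per_core_at_1ghz=0.5):
    """Compare two hypothetical chip generations under the same power budget."""
    # Hypothetical generations: core count doubles, IPC rises 5 percent.
    generations = {
        "old": {"cores": 16, "ipc": 1.00},
        "new": {"cores": 32, "ipc": 1.05},
    }
    results = {}
    for name, gen in generations.items():
        clock = max_clock_ghz(power_budget_w, gen["cores"], watts_per_core_at_1ghz)
        results[name] = {
            "clock_ghz": clock,
            "per_core": gen["ipc"] * clock,  # single-thread performance
            "socket": throughput(gen["cores"], gen["ipc"], clock),
        }
    return results


if __name__ == "__main__":
    for name, r in compare_generations().items():
        print(f"{name}: {r['clock_ghz']:.2f} GHz, "
              f"per-core {r['per_core']:.2f}, socket {r['socket']:.2f}")
```

Under these assumed numbers, the newer chip delivers more total throughput per socket, but its clock speed and its per-core performance both fall, because the small IPC gain does not make up for the clock reduction needed to stay inside the same thermal envelope.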

We agree this is undesirable, but we were under the impression that it was also mostly unavoidable if you wanted to maintain absolute compatibility of processors from today back through a very long range of time, which the IT industry very clearly does want to do.

The trick with Nuvia is that it is not trying to build a server CPU for the entire industry, but rather one that is focused on the specific – and thankfully more limited – needs of the hyperscalers.

“This is a server-class CPU, with an SoC surrounding it, and it is designed to be the clear-cut winner on each of those categories – and in totality,” says Carvill, throwing down the gauntlet to all of the remaining CPU players, who each have their own ideas about how to take on Intel’s hegemony. “And we are not talking about the incremental performance improvements that we have come to expect over the past five years. We are talking about really meaningful, significant, double-digit performance improvements over what anyone has seen before. It will be designed for the hyperscale world – we are not going after everybody. We are not going after the entire enterprise, we are starting with the hyperscalers, and we are doing that very deliberately because that’s an area where you can take a lot of the legacy that you have had to support in the past and push that aside to some degree and design a processor for modern workloads from the ground up. What we are doing is custom, and we will not be using off the shelf, licensed cores. We are going to use an Arm ISA, but we are doing it as a clean sheet architecture from the ground up that is built for the hyperscaler world.”

So that begs the question of what you can throw out and what you can add without breaking the licensing requirements to stick to the compatibility of the Arm ISA. We don’t have an answer as to what this might be, but certainly this is precisely what Applied Micro (reborn as Ampere) was trying to do with its X-Gene Arm server chips and what Broadcom and then Cavium and then Marvell were doing with the “Vulcan” ThunderX2 chips; others, like Qualcomm, would claim that they did the same thing. So we are very intrigued about what portion of the Arm ISA the hyperscalers need and what parts they can throw away, as well as any other interesting bits for acceleration that Nuvia might come up with. For the moment, the Nuvia team is not saying much about what it is, except that numerous hyperscalers are privy to what the company is doing and have given input from their workloads to help the architects come up with the design.

What is also obvious is that this is for hyperscalers, not cloud builders, at least in the initial implementation of the Nuvia chip. By definition, the raw infrastructure services of public clouds run mostly X86 code on either Linux or Windows Server operating systems, and this chip certainly won’t support Windows Server externally on any public cloud, although there is always a chance that Microsoft will run Nuvia Arm chips on internal workloads in its Azure cloud. Microsoft has made no secret about its desire to have half of its Azure compute capacity running on Arm architecture chips, and all of the other hyperscalers, notably Google and Facebook, are probably interested in what the Nuvia team is up to. So is Apple, which is not quite a hyperscaler but which probably has millions of its own servers and would no doubt love to have a single architecture spanning its entire Apple stack if it could happen. We could even see Apple get back into the server business with Nuvia chips at the end of this adventure, which would be interesting indeed, but perhaps only for its own internal consumption, working with a bunch of ODMs or perhaps through the Open Compute Project.

“John and Manu were the founders who really had the initial idea because they were working at Google with a lot of the internal teams, looking at the limitations and challenges in their datacenter architecture and infrastructure, and they thought they could build something a lot better for what Google needs to scale this thing forward. But they needed a CPU architect who came with the pedigree and legacy to be able to go build something that was custom that had been successful at scale. And then that’s when they got Gerard.”

The point is this: Google, Apple, and Facebook do not have to design a hyperscale-class CPU because they can get Nuvia to do it and spread the cost across Silicon Valley venture capitalists instead of spending their own dough.

There is a precedent for this kind of tight focus on hyperscalers, and it comes from none other than Broadcom. Its “Trident” Ethernet switch ASICs, aimed at the enterprise, frankly did not have as good a cost, thermal, and performance profile as the hyperscalers – in this case Google and Microsoft – wanted. And so, they worked with Broadcom and Mellanox Technologies to cook up the 25 G Ethernet standard, whether or not the IEEE standards committee would endorse it. In the case of Broadcom, the company rolled out the “Tomahawk” line, with trimmed down Ethernet protocols and more routing functions as well as better bang for the buck and better thermals per port. Innovium, another upstart switch ASIC maker, just went straight to making an Ethernet switch ASIC aimed at the hyperscalers.

There are not a lot of details about what Nuvia will do, but here is what the company is planning:

- Build a custom, proprietary, and flexible SoC focused on delivering performance leadership with energy efficiency and ease of use for datacenters

- Build a leadership server-class design and reference platform

- Build a prototyping environment for risk reduction

- Engage early with potential customers to identify all key areas of focus: networking, storage management, AI accelerators (NN), and GPUs.

All of this work is being supported by an initial $53 million Series A investment from Capricorn Investment Group, Dell Technologies Capital, Mayfield, WRVI Capital, and Nepenthe.

As soon as we learn more, we will tell you.
