Nvidia has for years made artificial intelligence (AI) and its various subsets – such as machine learning and deep learning – a foundation of future growth, and sees it as a competitive advantage against rival Intel and a growing crop of smaller chip makers and newcomers looking to gain traction in a rapidly evolving IT environment. And for any company looking to make its mark in the fast-moving AI space, healthcare is an important vertical to focus on.

We at The Next Platform have talked about the benefits AI and deep learning can bring to an industry that is steeped in data and needs anything that can help it extract real-time information from all that data. AI will touch every segment of healthcare: predictive diagnostics that drive prevention programs, precision surgery that improves outcomes, increasingly precise diagnoses that lead to more personalized treatments, and systems that make operations more efficient and drive down costs. It will affect not only hospitals and other healthcare facilities but also other parts of the industry, from pharmaceuticals and drug development to clinical research.

Nvidia has put a particular focus on healthcare. For years the company has worked with GE to support its medical devices, and a year ago it announced it was working with the Scripps Research Translational Institute to develop tools and infrastructure that use deep learning to fuel the development of AI-based software, with the aim of expanding AI beyond medical imaging, which has largely been the primary focus of AI in medicine.

However, in a hyper-regulated environment like healthcare, where the privacy of patient data is paramount, any AI-based technology needs to ensure that the data is protected from cyber-criminals who want to steal or exploit it. That threat is illustrated by the increasing targeting of healthcare facilities by ransomware campaigns, in which bad actors hold a hospital's data hostage by encrypting it with malware and hand over the decryption key only after the facility pays the ransom.

At the Radiological Society of North America (RSNA) conference this weekend, Nvidia laid out a plan aimed at allowing hospitals to train machine learning models on the mountains of confidential information they hold without the risk of exposing that data to outside parties. The company unveiled Clara Federated Learning (FL), a technique that lets organizations collaborate on training while keeping the data housed within each healthcare facility's own systems.

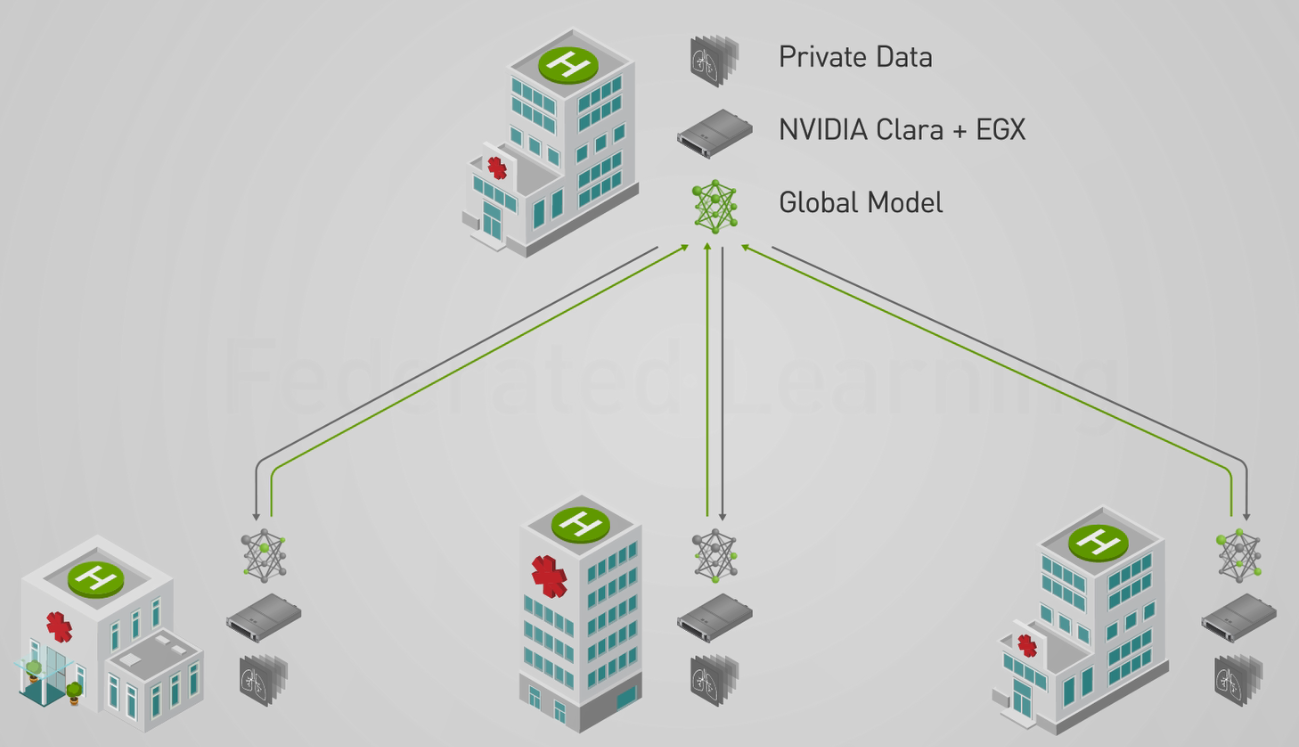

Nvidia describes Clara FL as a reference edge application that enables distributed, collaborative AI training, a key capability given that healthcare facilities house their data in disparate parts of their IT environments. It's based on Nvidia's EGX intelligent edge computing platform, a software-defined offering introduced in May. The EGX stack supports Kubernetes and includes GPU monitoring tools and Nvidia drivers, which are managed by Nvidia's GPU Operator tool. The distributed NGC-Ready for Edge servers, built by OEMs using Nvidia technologies, can not only perform training locally but also collaborate to create more complete models of the data.

The Clara FL application is packaged as a Helm chart to make it easier to deploy within Kubernetes-based infrastructure, and the EGX platform provisions the federated server and the clients it will collaborate with, according to Kimberly Powell, vice president of Nvidia's healthcare business.

The platform delivers the application containers, the initial AI model and the other tools needed to begin federated learning. Radiologists use the Nvidia Clara AI-Assisted Annotation software development kit (SDK), which is integrated into medical viewers such as 3D Slicer, Fovia and Philips IntelliSpace Discovery, to label their hospital's patient data. Hospitals participating in the training effort use the EGX servers in their facilities to train the global model on their local data, and the results are sent to the federated training server over a secure link. The data stays private because only partial model weights, rather than patient records, are shared, and the global model is built through federated averaging.

The process is repeated until the model is as accurate as it needs to be.
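Nvidia hasn't published Clara FL's internals beyond the outline above, but the generic federated averaging (FedAvg) loop it describes can be illustrated. What follows is a minimal, hypothetical sketch in plain Python: each site trains the current global model on data that never leaves it and sends back only its weights, the server averages those weights (weighted by each site's sample count), and rounds repeat until an accuracy target is met. The toy linear model, the synthetic noise-free "hospital" datasets, the loss threshold and every function name (`local_train`, `federated_average`, `run_federated_training`, `make_site`) are assumptions for illustration, not Nvidia's API. The sketch also shares full weights and does not model Clara FL's partial weight sharing or its secure link.

```python
import random
from typing import List, Tuple

# Hypothetical toy setup: each site's data is a list of (x, y) pairs that never leaves the site.
Dataset = List[Tuple[float, float]]


def mse(weights: List[float], data: Dataset) -> float:
    """Mean squared error of the toy linear model y = w*x + b on one site's data."""
    w, b = weights
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def local_train(weights: List[float], data: Dataset,
                lr: float = 0.3, epochs: int = 5) -> List[float]:
    """Run a few epochs of full-batch gradient descent on local data only."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]  # only these two numbers go back to the server


def federated_average(updates: List[List[float]], sizes: List[int]) -> List[float]:
    """FedAvg: average the clients' weights, weighted by their local sample counts."""
    total = sum(sizes)
    return [sum(u[i] * s for u, s in zip(updates, sizes)) / total
            for i in range(len(updates[0]))]


def run_federated_training(clients: List[Dataset], target_loss: float = 1e-4,
                           max_rounds: int = 200) -> Tuple[List[float], int]:
    """Repeat train-locally / average-centrally rounds until the loss target is met."""
    global_weights = [0.0, 0.0]  # initial model distributed to every site
    sizes = [len(d) for d in clients]
    round_num = 0
    for round_num in range(1, max_rounds + 1):
        updates = [local_train(global_weights, d) for d in clients]
        global_weights = federated_average(updates, sizes)
        # Each site evaluates the new global model locally and reports a scalar loss.
        losses = [mse(global_weights, d) for d in clients]
        avg_loss = sum(loss * s for loss, s in zip(losses, sizes)) / sum(sizes)
        if avg_loss < target_loss:
            break
    return global_weights, round_num


def make_site(n: int, lo: float, hi: float, rng: random.Random) -> Dataset:
    """Hypothetical synthetic data y = 3x + 1, with x drawn from a site-specific range."""
    xs = [rng.uniform(lo, hi) for _ in range(n)]
    return [(x, 3 * x + 1) for x in xs]


if __name__ == "__main__":
    rng = random.Random(0)
    # Three sites whose data cover different x ranges (non-identical distributions).
    hospitals = [make_site(30, 0.0, 0.5, rng),
                 make_site(30, 0.5, 1.0, rng),
                 make_site(40, 0.0, 1.0, rng)]
    weights, rounds = run_federated_training(hospitals)
    print(f"w={weights[0]:.3f}, b={weights[1]:.3f} after {rounds} rounds")
```

Weighting each site by its sample count is the usual FedAvg choice, so a large hospital's update counts for more than a small clinic's. The stopping test relies only on scalar losses that each site reports, so the server never handles a single patient record.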

As part of all this, Nvidia also announced Clara AGX, an embedded AI developer kit powered by the company's Xavier chips, which are also used in self-driving cars and can run on as little as 10 watts. The idea is to offer lightweight, efficient AI computers that can quickly ingest huge amounts of data from large numbers of sensors and process images and video at high rates, bringing inference and 3D visualization capabilities into the picture.

The systems can be small enough to embed in a medical device, can be small computers sitting next to the device, or can be full-size servers, as pictured below:

“AI in edge computing isn’t only for AI development,” Powell said during a press conference before the show started. “AI wants to live with the device and AI wants to be deployed very close, in some cases, to medical devices for several reasons. One: AI can deliver real-time applications. If you think about an ultrasound or an endoscopy, where the data is streaming from a sensor, you want to be able to apply algorithms and many of them at once in a livestream. They want to embed the AI. You may also want to put an AI computer right next to a device. This is a device that is already living inside the hospital. You can augment that with an AI-embedded computer. The third way that we can imagine edge computing and how we’re demonstrating that at RSNA today is by connecting many devices and streaming devices to an [EGX] platform server that’s living in the datacenter. We really see three models for edge and devices coming together. One is embedded into the instrument. The next is right next to the instrument for real-time and the third is to augment many, many devices around the hospital – fleets of devices in the hospital – to have augmented AI in the datacenter.”

At the show, Nvidia also showed off a point-of-care MRI system built by Hyperfine Research that leverages Clara AGX. The device is about three feet wide and five feet tall:

The Hyperfine system uses ordinary magnets that do not need power or cooling to produce an MRI image, can run in an open environment and does not need to be shielded from the electromagnetic fields around it. Hyperfine is still testing the system, but early tests indicate that it can image brain disease and injury with scans lasting about five to ten minutes, according to the company.
