Hewlett Packard Enterprise has not been a stranger to traditional high performance computing or the emerging technologies like artificial intelligence, machine learning, deep learning, and data analytics that are driving rapid changes in the datacenter. In the last Top500 list of the world’s fastest supercomputers, 64 were based on HPE systems.

At the same time, HPE has been building out its AI and analytics portfolio, both through hardware – including such systems as the Apollo 6500 deep learning platform (below), which launched last year with such components as Intel’s latest Xeon SP processors and NVM-Express drives – and through software and services, in particular its PointNext offerings.

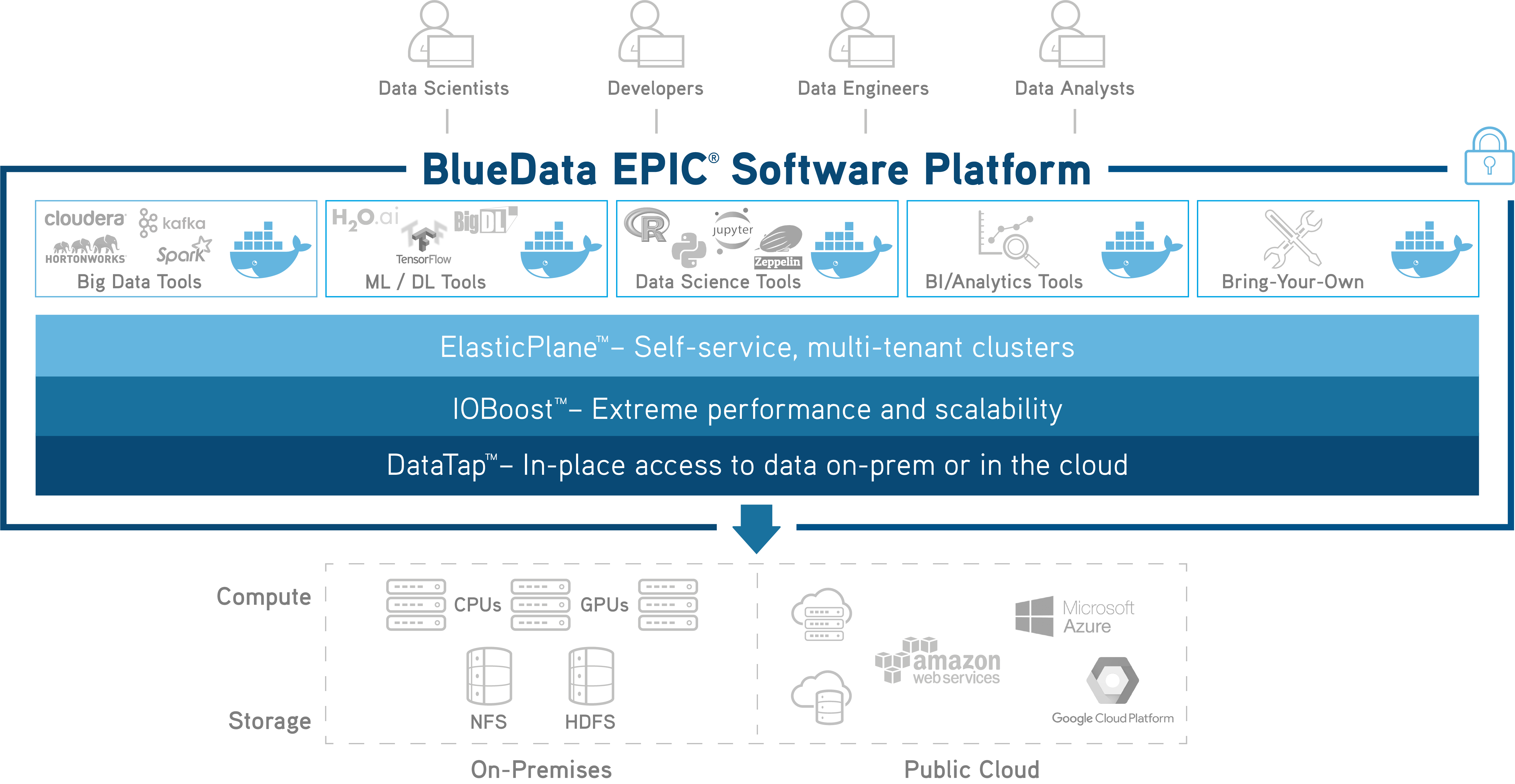

The company took a significant step forward in December 2018 when it bought BlueData, a company founded in 2012 that brought with it its Epic big data-as-a-service software platform and the BlueData AI/ML Accelerator, a package of software and professional services for deploying Docker containerized environments that developers could use to test and run machine learning, deep learning, and analytics workloads, leveraging such technologies as TensorFlow and DataIQ.

BlueData’s software can run on hardware from a wide array of vendors (including HPE) as well as in public clouds – including Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform – and in hybrid cloud environments. Bringing the company into the fold is a boon for HPE’s AI and data analytics ambitions, giving it even more software tools to pair with its broad hardware portfolio.

Fewer than five months after the acquisition closed, the first fruits of the deal are being picked this week, with HPE unveiling an integrated bundle that brings together its Apollo servers with BlueData’s AI and analytics software platform and throws in the PointNext services to boot. In addition, the company announced a new reference configuration that will deploy the software on HPE’s modular Elastic Platform for Analytics (EPA) that offers building blocks for compute, storage and networking.

It is also the first step in a longer-range plan to leverage the BlueData software in other parts of the portfolio, including with HPE’s GreenLake consumption-based, pay-as-you-go on-premises model. In addition, there are plans for other BlueData-based pre-tested and pre-validated reference architectures for a range of AI, machine learning and analytics use cases and tools, such as Apache Kafka, Spark, Cloudera, H2O and TensorFlow.

“The value of the platform is certainly in the fact that we’re allowing folks to be able to deploy these workloads very quickly on their choice of private vs. public cloud infrastructure,” Patrick Osborne, vice president and general manager of big data and secondary storage at HPE, tells The Next Platform, adding that many of the larger enterprise customers are looking for a vertical infrastructure solution. “If we can provide them a stack that includes services as well as the BlueData Epic platform and the infrastructure that runs underneath it, from a compute, networking and certainly storage perspective, then they would gladly welcome that.”

Similar to announcements made by Dell EMC late last month when it showed off deeper integration with VMware in a range of cloud and other offerings, HPE said that the BlueData software will continue to support other vendor infrastructures, but the deep integration with the HPE hardware will make the platform run even better there.

“We absolutely want to make that clear that HPE’s intention is to retain that infrastructure-agnostic capability of BlueData, leveraging the portability of containers,” Jason Schroedl, vice president of marketing for BlueData at HPE, tells The Next Platform. “But we also have a very strong better-together solution and story around BlueData with Apollo servers and HPE PointNext services. The key is that as part of HPE, we’re now bringing together a solution with the software and the infrastructure and the services to help speed deployment and reduce costs and accelerate time to value for our customers.”

The integrated offerings – which are available now in the United States, the United Kingdom, Germany, France, and Singapore – are aimed at a range of industries, from financial services and healthcare to manufacturing, retail and life sciences. While HPE has strong roots in HPC, BlueData traditionally has focused on enterprises, according to Anant Chintamaneni, vice president of products at BlueData. Enterprises have embraced the ideas of big and fast data and collecting the data in a centralized place for easier access for analytics, Chintamaneni tells The Next Platform. Now they are looking for ways to better exploit the data and develop new applications with predictive analytics capabilities, and they’re beginning to extend their reach from machine learning into deep learning.

“Machine learning is still the primary focus for most of the enterprises,” he says. “We’re talking about basic algorithms and using things like logistic regression or some of the regression-type techniques. Those are still tools for machine learning, where you are taking data, you’re extracting the right features, you’re training that data, creating a model based on that data and then applying that model to do predictions. But that said, one of the things that’s happening with a lot of these enterprises, especially in the emerging tech groups and in the engineering groups, is there’s a lot of interest in figuring out how to leverage and identify use cases where they can update their deep-learning techniques, especially in the areas of customer service. That is traditionally not a great fit for structured data, where you can apply machine learning.”
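The workflow Chintamaneni describes (take data, extract features, train, create a model, apply it to predictions) can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the customer records, the field names `monthly_visits` and `support_tickets`, and the churn labels are all hypothetical toy values, not anything from BlueData or HPE, and a real deployment would likely use a library such as scikit-learn or TensorFlow rather than hand-written gradient descent.

```python
import math


def sigmoid(z):
    """Logistic function mapping a real-valued score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))


def extract_features(record):
    """Turn a raw record into scaled numeric features (hypothetical fields)."""
    return [record["monthly_visits"] / 10.0, record["support_tickets"] / 5.0]


def train(rows, labels, lr=0.5, epochs=2000):
    """Fit logistic regression weights with batch gradient descent."""
    weights = [0.0] * len(rows[0])
    bias = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(rows, labels):
            p = sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))
            err = p - y  # gradient of the log loss with respect to the score
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(model, record):
    """Apply the trained model to a new record; returns P(label == 1)."""
    weights, bias = model
    x = extract_features(record)
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


# Toy training data (assumed): label 1 means the customer churned.
records = [
    {"monthly_visits": 2, "support_tickets": 4},
    {"monthly_visits": 1, "support_tickets": 5},
    {"monthly_visits": 3, "support_tickets": 3},
    {"monthly_visits": 8, "support_tickets": 1},
    {"monthly_visits": 9, "support_tickets": 0},
    {"monthly_visits": 7, "support_tickets": 1},
]
labels = [1, 1, 1, 0, 0, 0]

# Extract features, train, and keep the resulting model.
model = train([extract_features(r) for r in records], labels)

# Apply the model to score two customers.
at_risk = predict(model, {"monthly_visits": 1, "support_tickets": 5})
loyal = predict(model, {"monthly_visits": 9, "support_tickets": 0})
print(f"at-risk score: {at_risk:.2f}, loyal score: {loyal:.2f}")
```

The structured, tabular inputs here are the kind of data where this style of machine learning fits well; the unstructured customer service data mentioned in the quote is where enterprises are instead evaluating deep learning.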

Open source technologies like TensorFlow and Kubeflow are becoming more accessible, Chintamaneni says. As such pipelining technologies become more accessible, enterprises “are dabbling from an engineering perspective to see what use cases are good for deep learning, where they’re using neural nets and so on. There’s a lot of deep-learning type of techniques being applied. It’s progressing in the enterprise, but there is a lot of interest and a lot of evaluation from a technology perspective to make sure that whatever platform they choose is future-proofed, whether that is a specific tool that they’re selecting or whether it’s a platform they’re selecting, so they can go from the present focus on machine learning to the advanced deep-learning techniques.”

A platform like BlueData’s gives enterprises the tools to work with such technologies, Schroedl says.

“We can help them get up and running with some initial sandbox environments that their data science teams and analysts can start to play with the different tool sets,” he says. “As they begin to delve into the specific use cases and they are looking at expanding beyond an initial pilot or dev test environment, they want to scale that environment and they want to operationalize their pipelines. We allow them to have a distributed environment where they can have multiple data scientists getting access to the data, sharing their models and automating the deployment of those models and operationalize their pipelines.”

With BlueData now part of HPE, enterprises can take better advantage of the components in the hardware – such as Nvidia’s Tesla GPU accelerators – that are designed for such workloads as AI, machine learning and data analytics, Schroedl says.
