Frontier: Step By Step, Over Decades, To Exascale

Any time you build anything with more than 60 million parts, it is going to be a headache. …


The hyperscalers, cloud builders, HPC centers, and OEM server manufacturers of the world who build servers for everyone else all want, more than anything else, competition between component suppliers and a regular, predictable, almost boring cadence of new component introductions. …

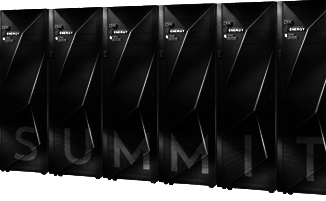

We have spent the past several years speculating about what the “Summit” supercomputer built by IBM, Nvidia, and Mellanox Technologies for the US Department of Energy and installed at Oak Ridge National Laboratory might be. …

The increasingly distributed nature of computing and the rapid growth in the number of small connected devices that make up the Internet of Things (IoT) are combining with trends like the rise of silicon-level vulnerabilities highlighted by Spectre, Meltdown, and more recent variants to create an expanding and fluid security landscape that’s difficult for enterprises to navigate. …

Big iron aficionados packed the room when ORNL’s Jack Wells gave the latest update on the upcoming 207 petaflops Summit supercomputer at the GPU Technology Conference (GTC18) this week. …

It is one thing to scale a neural network on a single GPU or even a single system with four or eight GPUs. …

All Content Copyright The Next Platform