With over thirty years and more than thirty patents under his belt, Dr. Peter Kogge is one of the foremost leaders in high performance computing, both in developing new ideas and in creating a vision for the most efficient routes to future extreme-scale computing.

In addition to designing the Space Shuttle I/O processor and the first multicore processor (EXECUBE), and collecting more awards than can be gracefully mentioned here, Kogge has blazed trails through computing, both as an IBM Fellow and, more recently, as one of the lead thinkers on how future exascale architectures will align with Department of Energy goals. What is interesting about Kogge’s background on both the multicore and memory fronts is that not only was his chip the first of its kind (with eight independent cores on a single die), but it was also built on a DRAM technology, representing true in-memory processing decades before the concept was widely accepted or productized.

The challenges of exascale computing, which according to the 2008 DARPA report would require 1000x the computational power within a system power budget of less than 20 megawatts, have been well described and debated. Among the arguments for how future architectures might need to shift is Kogge’s Gauss Award-winning paper, “Updating the Energy Model for Future Exascale Systems,” which introduces a “major update to the ‘heavyweight’ (modern server-class multicore chips) model, with a detailed discussion on the underlying projections as to technology, chip layout and microarchitecture, and system characteristics.”

“Back in 2008, it looked like we’d never get a heavyweight processor up that far. Today it’s possible, but we’re looking at a couple hundred megawatts,” Kogge says.
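To put the two power figures above in perspective, it helps to convert them into an average energy budget per operation. The short sketch below does that arithmetic. It assumes exascale means 10^18 operations per second and reads “a couple hundred megawatts” as roughly 200 MW; both the function name and that 200 MW value are illustrative assumptions, not figures from Kogge’s paper.

```python
# Back-of-the-envelope energy budget per operation at exascale.
# Assumptions (not from the article): exascale = 1e18 operations per second,
# and "a couple hundred megawatts" is taken as 200 MW for illustration.

EXASCALE_OPS_PER_SECOND = 1e18


def picojoules_per_op(system_megawatts: float,
                      ops_per_second: float = EXASCALE_OPS_PER_SECOND) -> float:
    """Average whole-system energy available per operation, in picojoules."""
    watts = system_megawatts * 1e6           # MW -> W (joules per second)
    joules_per_op = watts / ops_per_second   # J/s divided by ops/s -> J/op
    return joules_per_op * 1e12              # J -> pJ


if __name__ == "__main__":
    target = picojoules_per_op(20)        # the 20 MW goal
    heavyweight = picojoules_per_op(200)  # assumed value for "a couple hundred" MW
    print(f"20 MW target:       about {target:.0f} pJ per operation")
    print(f"200 MW heavyweight: about {heavyweight:.0f} pJ per operation")
    print(f"Gap: roughly {heavyweight / target:.0f}x")
```

Note that this is a whole-system average: the budget per operation has to cover memory accesses, data movement, and everything else in the machine, not just the arithmetic itself, which is why the memory discussion that follows matters so much.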

Over the last several years he has spent time looking at other architectures, including those with smaller form factors and lower power per core, which are well suited to dense boxes. Two of the most interesting technologies on Kogge’s radar were BlueGene and Calxeda, both of which are now out of circulation, although some of their core IP is showing up elsewhere. Further, he has explored GPU-based systems as the powerhouses for energy-efficient exascale computing. Across all of these and other architectures, however, there are major issues with memory, and the jury is still out on what will change when 3D stacks wend their way into the ecosystem. Knights Landing is interesting, Kogge tells The Next Platform, “but at the end of the day, we think there might be something even better than that, something better than the stacked memory approach seen with Knights Landing, anyway.”

Even with new innovations on the horizon, however, he says that applications are changing shape while the architectures for both memory and processors are not keeping pace. The future will be rooted more in applications like those exercised by benchmarks such as HPCG or by graph operations. In short, real exascale applications might look a lot more like those workloads than what we see now, which means rethinking memory and compute and the balance they strike between high performance and low energy consumption.

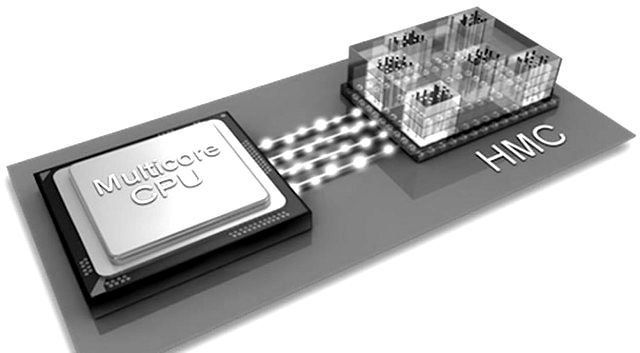

We will get to a more technical description of the technology Kogge alludes to tomorrow, in the second half of this article, which will explore the architecture he has developed with his team at Emu Technology. But to set the stage, we wanted to understand the new wave of memory options from his perspective, namely where the AMD-led High Bandwidth Memory (HBM) effort and the Hybrid Memory Cube (HMC), led by Micron and others, will fit into the exascale picture.

Whether it is HMC, where there is a logic chip at the bottom of a stack of DRAM and that stack has a lot of memory controllers going into the stack, or HBM, where instead of a logic chip there are several stacks put on a module with a conventional high-end ASIC or Xeon and thousands of micro-connections between them for high bandwidth, the memory parts are essentially the same—it is the interfaces presented that are different. “In HMC there’s that logic die on the bottom providing nice, well-behaved high-speed serial interfaces—well defined protocols so it’s possible to tie multiple ones together in various topologies, versus HBM, which has a specialized substrate that talks to specialized ASICs with a lot of on-module I/O. It is in that former, HMC, where we see things going, when computing will move onto the bottom layer so there is no need for a high performance core somewhere making requests to and from that. The goal is to run a program in one of the cores on the bottom of the stack and that program can talk to other programs running on other stacks.”

Such stacks will consume far less power with the logic die than an HBM collection, which Kogge says could consume a couple hundred watts per module in theory. “The same thing happened with BlueGene; the power per node was reduced to the point where it could be densely packed,” he notes. Ultimately, Kogge says he tends to be in favor of the HMC approach, but of course, this is all still conjecture since no one has shipped any products yet.

Hypothetically, in any of the memory approaches above, there is the possibility for quite a bit more bandwidth, but Kogge says it is unclear if it’s possible, at the system level, to get any significant power savings. “The cost is that it is still expensive to move data across these very high-speed interfaces,” which is what Emu Technology is addressing. “We’ve noticed that a large number of emerging applications are not dense linear algebra-like, where you can use short vectors and the high bandwidth of existing systems with their heavy caches. For that, those memory approaches don’t buy you much,” Kogge says.

We will delve into how Kogge and team at Emu Technology envision the future of memory and compute for energy-efficient large-scale systems in a detailed piece tomorrow.

It’s not complete conjecture, since HPC systems that use HMC have been shipping and in use since early 2015.

In point of fact, there are plenty of them on the current Top500 list: #22, #31, #61 and #84 on the November 2015 Top500 are all using HMC and are all roughly 90% computationally efficient.

HBM heterogeneous GPU architectures and large Knights Landing systems will be very interesting to compare, once they finally ship.

“Whether it is HMC, where there is a logic chip at the bottom of a stack of DRAM and that stack has a lot of memory controllers going into the stack, or HBM, where instead of a logic chip there are several stacks put on a module with a conventional high-end ASIC or Xeon and thousands of micro-connections between them for high bandwidth, the memory parts are essentially the same—it is the interfaces presented that are different. “In HMC there’s that logic die on the bottom providing nice, well-behaved high-speed serial interfaces—well defined protocols so it’s possible to tie multiple ones together in various topologies, versus HBM, which has a specialized substrate that talks to specialized ASICs with a lot of on-module I/O. It is in that former, HMC, where we see things going, when computing will move onto the bottom layer so there is no need for a high performance core somewhere making requests to and from that. The goal is to run a program in one of the cores on the bottom of the stack and that program can talk to other programs running on other stacks.”

Really, HBM has a rather large number of parallel traces that can be clocked much slower than high-speed serial interfaces while allowing for more effective bandwidth that is inherently less messy and latency-inducing than serial encoding and encoding protocols! And HBM has a bottom logic die. HBM will offer much more power savings with those 1024-bit parallel traces to each HBM stack, something that is very good for the exascale power budget!

https://www.amd.com/Documents/High-Bandwidth-Memory-HBM.pdf

More Here:

http://www.hotchips.org/wp-content/uploads/hc_archives/hc26/HC26-11-day1-epub/HC26.11-3-Technology-epub/HC26.11.310-HBM-Bandwidth-Kim-Hynix-Hot%20Chips%20HBM%202014%20v7.pdf

And FPGAs on the HBM die stacks, for some programmable in-memory compute:

http://www.theregister.co.uk/2015/08/11/amd_patent_filing_hints_at_fpga_plans_in_the_pipeline/

“In HMC there’s that logic die on the bottom providing nice, well-behaved high-speed serial interfaces, well defined protocols so it’s possible to tie multiple ones together in various topologies, versus HBM, which has a specialized substrate that talks to specialized ASICs with a lot of on-module I/O.”

This perplexes me. HBM re-uses the same very efficient (and exceptionally well-behaved!) protocols as other DDR-like DRAM devices. Further, it is a standards-based memory. The physical interface is documented and supported by packaging companies and IP will likely be available shortly from multiple vendors for controllers.

I also find it a little odd that he criticizes HBM for having a “lot of on-module I/O”, when that’s exactly the purpose of HMC – to have a base logic chip with a large amount of on-module I/O.

The author of this series should seek out someone who hasn’t staked their career on memory offload for a follow-up article. It might provide an interesting contrast.