The supercomputing industry is accustomed to 1,000X performance strides, and that is because people like to think in big round numbers and bold concepts. Every leap in performance is exciting not just because of the engineering challenges in bringing systems with kilo, mega, tera, peta, and exa scales into being, but because of the science that is enabled by such increasingly massive machines.

But every leap is getting a bit more difficult as imagination meets up with the constraints of budgets and the laws of physics. The exascale leap is proving to be particularly difficult, and not just because it is the one we are looking across the chasm at.

At the ISC supercomputing conference in Germany back in June, the Japanese government’s RIKEN supercomputing center and server maker Fujitsu made a big splash by divulging that the future Post-K supercomputer that is being developed under the Flagship 2020 program would be based on the ARM architecture, not the Sparc64 fx family of processors that Fujitsu has been creating for massively parallel systems since Project Keisoku was started back in September 2006. For the past several weeks, rumors have been going around that the Post-K supercomputer project was going to be delayed, and sources at RIKEN have confirmed to The Next Platform that the Post-K machine has indeed been delayed, by as much as one to two years.

These sources, speaking on the condition of anonymity, tell us that the issue has to do with the semiconductor design for the processors that are to be used in the Post-K machine, which will be based on a homegrown ARMv8-compatible core created by Fujitsu and which will also be using the vector extensions for the ARM architecture being developed by ARM Holdings in conjunction with Fujitsu and unnamed others.

The precise nature of the problem was not revealed, and considering that we do not know the process technology or fab partner that Fujitsu will be using – it is almost certainly a 10 nanometer part being etched by Taiwan Semiconductor Manufacturing Corp – it is hard to guess what the issue is. Our guess is that adding high bandwidth memory and Tofu 6D mesh torus interconnects to the ARM architecture is proving more difficult than expected. Fujitsu has already added HMC2 to the current Sparc64-XIfx processor, which is an impressive chip that many people are unaware of and which is a good foundation for a future ARM chip. Adding the SVE extensions to the ARM cores and also sufficient on-chip bandwidth for a future HMC iteration and a future Tofu interconnect might be the challenge, coupled to the normal difficulties of trying to get a very advanced 3D transistor process to yield.

When it comes to supercomputing, you have to respect ambition because without it we would never hit the successive performance targets at all.

The original Project Keisuko, which was also known as the Next Generation Supercomputer Project when it launched precisely a decade ago, was certainly ambitious and was meant to bring all three major Japanese server makers into the project. Being a follow-on to the Earth Simulator supercomputer, which was a massively parallel SX series vector machine, the Keisuko machine was supposed to have a very large chunk of its 10 petaflops of performance coming from future NEC vector motors. Earth Simulator cost $350 million to build and with 5,120 SX nodes it was able to reach 35.8 teraflops of performance.

The Keisuko machine had a $1.2 billion budget, including the development of a scalar processor from Fujitsu, which turned out to be the eight-core “Venus” Sparc64-VIIIfx, and the Tofu interconnect created by Hitachi and NEC. NEC pulled out of Project Keisuko in May 2009, when the Great Recession was slamming the financials of all IT suppliers, and but commercialized some of the vector advances that it co-created with Hitachi. By November 2009, rumors were going around that the Japanese government, under extreme financial pressure, was going to cancel the Keisuko effort. Fujitsu started talking up its Venus chip, and did a lot of financial jujitsu and managed to talk the Japanese government and the Advanced Institute for Computational Science campus of RIKEN in Kobe to accept a scalar-only super. In the process, it took control of the Tofu interconnect, and has built a tidy little HPC business from the parts of the original Project Keisuko effort, which resulted in a machine simply called K.

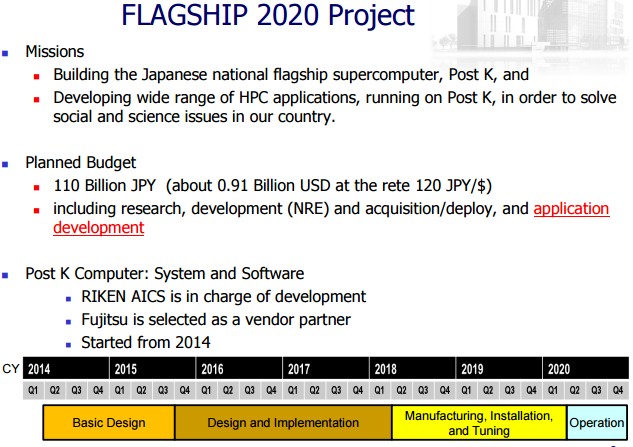

When the Flagship 2020 effort resulting in the Post-K machine was started in 2014, it had a $910 million budget, and that lower number was more about the exchange rate between the US dollar and the yen than the actual amounts budgeted. The K project had 115 billion yen allocated for it, and Post-K is costing 110 billion yen. So the financial cost is basically the same for a system (including facilities, running costs, and software development) that will have 100X more raw performance.

As you can see from the chart above, the basic design of the Post-K super was done last year, and it is not a coincidence that this was about the same time the rumors started going around that Fujitsu would be abandoning its Sparc64-fx chip in favor of an ARM design. Under that original schedule, design and implementation was supposed to run through early 2018, when manufacturing would commence and the machine was to be installed and tested in phases in 2018 and 2019 to be operational around the second quarter of 2020 – just in time for the ISC supercomputing event and a killer Top500 rating.

Now, that is not going to happen until 2021 or 2022, if all goes well, and RIKEN was looking forward to having bragging rights to having the first exascale-class machine in the field. The US government does not expect to get its first exascale machine into the field until 2023, and does not seem compelled to try to accelerate its exascale roadmap based on the ambitious schedules set by the governments of Japan and China. The Middle Kingdom has three different exascale machines under development right now – based on Shenwei, AMD (presumably Opteron), and ARM architectures, as we have previously reported. The Chinse government was set to deliver an exascale machine with 10 PB of memory, exabytes of storage, and an efficiency of 30 gigaflops per watt by 2020. Now, China is ahead of Japan and way ahead of the United States.

It is likely that there will be delays with any project of such scale, which is why China is backing three architectures and it is also why the US is probably going to back two architectures, as it is doing with the pre-exascale machines, “Summit” (a hybrid IBM Power-Nvidia Tesla system) and “Aurora” (based on the “Knights Hill” massively parallel processor from Intel). Europe has a number of exascale research projects, but thus far only Atos/Bull has an exascale system deal, in this case with CEA, the French atomic agency, for a massively scaled kicker to its Sequana family of systems. by 2020.

We expect more exascale projects and more delays as the engineering challenges mount. But we also think that compromises will be made in the power consumption and thermals to get workable systems that do truly fantastic things with modeling and simulation. That’s just how the HPC community works, and even tens of billions of dollars for a dozen exascale machines is not too much for Earth to spend. It is a good investment for so many reasons.

Is China actually ahead of Japan, the USA or Europeans? Just because they have an extremely FLOPS heavy system that does well on HPL and plans on paper to have a bigger system by 2020 doesn’t mean it will be a useful exascale system, capable of working on real code that works at exascale performance.

I suspect that the fact that their current Top500 system has proportionally less memory than older systems, no NVRAM, and is beaten by a system with 1/10 the FLOPS in Graph500, doesn’t inspire confidence in their approach to pre exascale, much less to an actual exascale system. They simply have the #1 spot on the Top500, which is becoming less and less relevant as supercomputers get more powerful.

Exascale’s biggest problems are caused by the simple fact that modern processors expepend most of their power budget on moving data around, branch prediction, speculative execution, rather than actually doing useful calculations. The cost of moving bits around on a chip is a huge problem, but an even bigger one is when bits need to be moved off chip to memory or other chips.

Reducing that problem involves a codesign approach that the Japanese are way ahead on, and that the Americans and Europeans are very quickly advancing in. That’s more about being very clever with data locality and reducing dependence on synchronization as well as actual circuit optimization.

The PrimeHPC FX100s SPARC XIfx and the Tofu 2 utilizes various techniques to reduce the amount of unnecessary communication that’s done already, as did IXfx and the K before it. That’s why I said they’re ahead. The big heterogenous systems like Titan waste most of their electricity on the latency optimized CPU’s that don’t do much of the work, but apparently that’s changing with Pascal and Volta.

I’ve heard people working on the pre exascale Nvidia and Intel people speak about the issues I mentioned, and they seem to be progressing at an increasing pace, which is good news for the Summit, Sierra and Aurora system users. Intel, Micron and their associates in the HMC consortium are ahead in terms of memory performance since they’ve had their tech deployed and HMC 3.0 is in the works.

I do wonder if the delay for Flagship2020 has something to do with integrating NVRAM(which I assume will be XPoint since Fujitsu already uses HMC) to their new ARM architecture? NVRAM is essential for fast checkpointing, which is essential for exascale work. Or could it be something to do with HMC 3.0 not being ready in time?

In terms of scalable architectures capable of doing real work, it seems like Japan is ahead even with a two year delay, considering the past and current state of their architectures. Especially considering that the positively ancient and relatively low FLOPS K is still beating the rest of the world in Graph500. America seems to have “suddenly” realizing the fact that FLOPS don’t matter if the rest of the architecture is a bottleneck with Summit, Sierra and Aurora. Taihu Light seems like a throwback to the FLOPS heavy, memory light, systems that are now irrelevant.

It should also be noted that the FX100s XIfx CPU uses HMC 2 rather than the competing HBM memory. A very low level look at HMC3 vs HBM3 would make a very interesting investigation in itself, since both are competing for exascale. How many pJ per bit does each one cost to move data etc? Hope you will do a piece like that.

I agree completely about the elegance of the K system, and the fact that it uses a processor that is six years old and still does marvelously on real work is a testament to the design. I can only imagine what K equipped with Tofu2 and Sparc64-XIfx would look like….

And yes, I have a dyslexic short circuit on HBM and HMC. Apologies for that.

Well I wouldn’t be so harsh on the recent Chinese achievement that they have multiple Gordon Bell submissions shows you it is not just performance on paper and synthetic benchmarks but actual useful computation. And they are ahead of the rest of the world. The previous #1 and now #2 might be a different story yes that was more of a benchmark machine but that’s because the heterogeneous architecture is in general flawed as you point out because of basically pushing memory through the system all the time. And even nVidia Pascal, Volta doesn’t make it that much better you still need to context switch the memory from one to the other and back. That’s where KnL has a major benefit.

I’m not saying that the fact that they’ve gone from almost nothing to #1 on Top500 is totally unimpressive, but they are kind of standing on the shoulders of giants. They do basically have access to all of the west’s intellectual property, since the west outsourced the manufacturing of just about all technology to China. Now the west is paying the price in multiple painful ways for their shortsighted greed. Being kicked from the top spot ob Top500 for years is a huge embarassment to the west at large, and should be a wake up call.

I still disagree that Taihu Light i ahead of the Japanese, American and European architectures. The HPCG rankings and Graph500 beg to differ with you as well.

The Taihu Light performs worse than Tiahne 2 in HPCG. The comparison of HPCG/HPL for these systems is also very telling.

Tianhe 2, which outperforms Taihhu Light, is only 1.7% of its HPL. Compare that to K, the PrimeHPC FX 100’s and various Cray and SGI systems that are >5%, despite some of them being quite old like K.

And then compare that to NEC SX-ACE vector systems which are almost 12%! Nothing else on the list comes close to that.

The comparison of HPCG to HPL performance was written about here on Next Platform in June. https://www.nextplatform.com/2016/06/21/measuring-top-supercomputer-performance-real-world/

Even though Tianhe 2 may have been a benchmark machine, I don’t see it as being less capable than Taihu Light. The Taihu Light manages just 0.4% of its HPL in HPCG, which is 4x WORSE than the popr performing Tianhe 2! That’s progress? Not when you have machines from 2011 managing 5.3% and 2013 doing 12%.

Exascale should come on 7nm or smaller (but that may push us into 2025).