An Evolving View of AI Infrastructure

By all accounts, artificial intelligence is still in its early days. …

At this point in the history of the IT business, it is a foregone conclusion that accelerated computing, perhaps in a more diverse manner than many of us anticipated, is the future both in the datacenter and at the edge. …

The hyperscalers and cloud builders of the world build things that often look and feel like supercomputers if you squint your eyes a little, but if you look closely, you can often see some pretty big differences. …

For the past decade, flash has been used as a kind of storage accelerator, sprinkled into systems here and crammed into bigger chunks there. It is often paired with hierarchical tiering software that makes it work as a go-between for slower storage (or sometimes no other storage tier at all) and either CPU DRAM or GPU HBM or GDDR memory. …

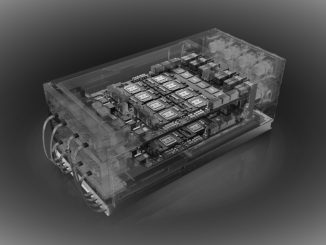

The diverse set of applications and algorithms that make up AI in its many guises has created a need for an equally diverse set of hardware to run it. …

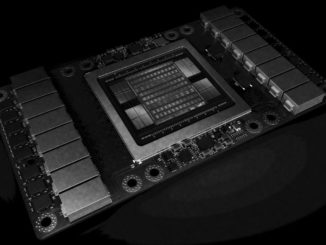

Seen a certain way, Nvidia has always been in the high performance computing business, and it has always been subject to the same cyclical waves that affect makers of supercomputers and enterprise systems. …

Disaggregated storage has become the norm in large-scale infrastructure, and we expect that in 2019 those who are pushing the limits with NVMe will have quite a successful year: vendors as well as their hyperscale and high performance computing users. …

Generative adversarial neural networks are the next step in deep learning evolution, and while they hold great promise across several application domains, there are major challenges in both hardware and frameworks. …

It might be a bit early to call generative adversarial networks (GANs) the next platform for AI evolution, but there is little doubt we will hear much more about this beefed-up approach to deep learning over the next year and beyond. …

Every important benchmark needs to start somewhere.

The first round of MLPerf results is in. While the results might not deliver what we expected in processor diversity or a complete view of scalability and performance, they do shed light on developments in deep learning training that go beyond sheer hardware. …

All Content Copyright The Next Platform