Every important benchmark needs to start somewhere.

The first round of MLPerf results is in, and while it might not deliver what we expected in terms of processor diversity or a complete view into scalability and performance, it does shed light on some developments that go beyond sheer hardware when it comes to deep learning training.

Over the holiday we will work some models into this analysis that include the most important factor in all of this, relative price, and try to work some scalability and other numbers backwards to show what is performing and to what degree the software side of this benchmark could shake things up. This takes some reading between the lines, as the title suggests, but it will provide a baseline of where training dollars might go, for which AI workloads, and why.

The Sudoku-like results leave us hoping to fill in the blanks with something to chew on in terms of price (from our own knowledge), performance, scalability, and optimization, but the submissions are scattered and thin, with submitters leaving out far more data than we care to see. We will make what connections we can below.

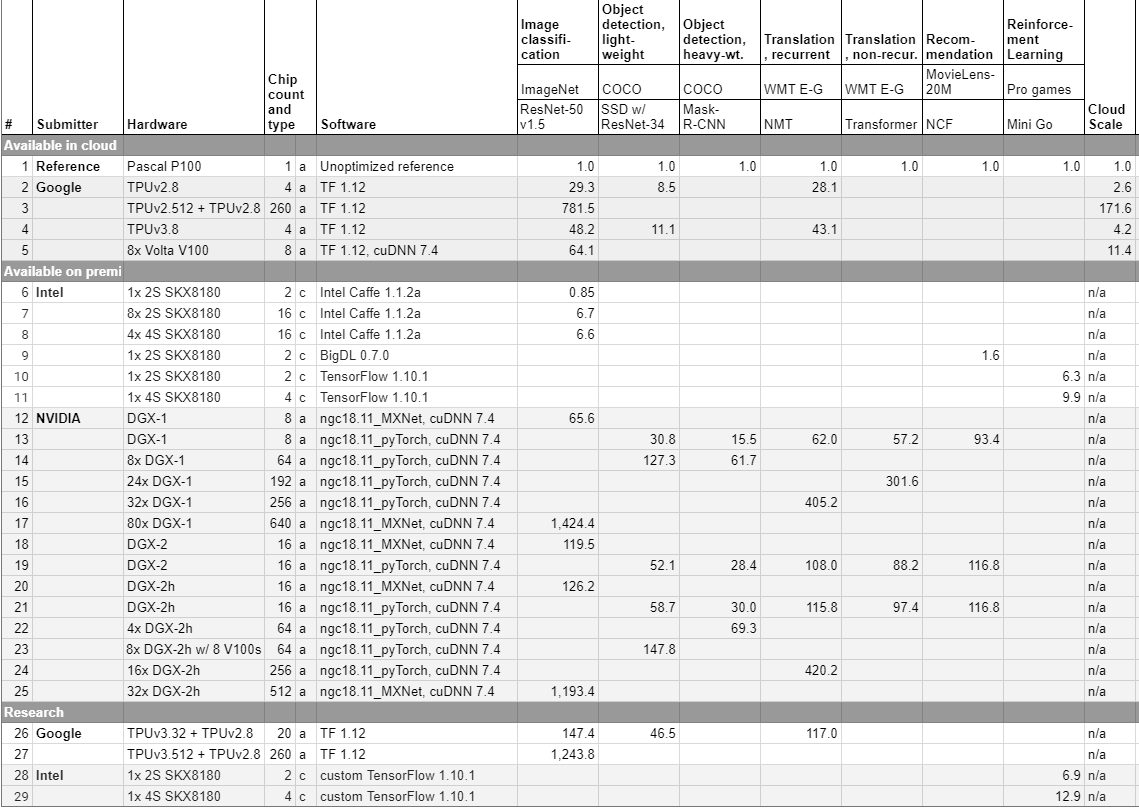

The “cloud scale” metric in the top right uses the P100 GPU as the baseline and provides factors that roughly translate to cost on various clouds, although it is certainly not a clean dollar amount. The TPU pod with its 260-chip count adds an element of imbalance here as well, which makes apples-to-apples comparisons somewhat difficult, especially since the pods have mixed chip versions. A single-chip estimate can be gleaned by simply dividing, but herein lies one of the weaknesses of this benchmark: people do not think of performance and price in terms of individual chips, whether in the cloud or on-prem. The standard for this benchmark is thus very relative, which is fine given the challenges of building such a benchmark across wildly different architectures, but it does take some time to really understand how to tighten performance comparison estimates.

Even so, it is possible to plot a curve to gauge the general scalability of some of the key benchmarks on various hardware platforms (remember, this is not device scalability, it is workload driven). For those who have not spent time with this new data, the idea is simple; so simple, in fact, that it limits the data's usefulness in some ways. In terms of comparisons, if you take one DGX-2 with its total chip count of 16 (see line 18), the way to read this is that it delivers 119.5X the performance of a single P100. You can then isolate a single-chip performance number and draw the lines, which we will do for several permutations of this in the coming month.
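To make the "simply dividing" step concrete, here is a minimal sketch of the per-chip normalization using the DGX-2 figures quoted above. The function name is our own, and the even split across chips is an assumption: it ignores interconnect and software effects, which is exactly the weakness noted earlier.

```python
def per_chip_speedup(system_speedup: float, chip_count: int) -> float:
    """Spread a system-level speedup (relative to one P100) evenly across its chips.

    Assumption: every chip contributes equally, which real systems only approximate.
    """
    if chip_count <= 0:
        raise ValueError("chip_count must be positive")
    return system_speedup / chip_count


# DGX-2 figures as quoted in the text: 16 chips, 119.5X a single P100.
dgx2_per_chip = per_chip_speedup(119.5, 16)
print(f"DGX-2: roughly {dgx2_per_chip:.2f}X a single P100 per chip")
```

The same division works for any row in the results where both a chip count and a relative speedup are published, which is what allows a rough curve to be drawn across systems of different sizes.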

The 2-socket and 4-socket Intel results are interesting but incredibly thin, yet they still say quite a bit about how to make decisions based on the data, at least for image classification, which is relevant performance-wise to other machine learning workloads. Here you can see what a single 2-socket Skylake node brings (about 0.85 the performance of a single P100), while with 8x 2-socket nodes it is 6.7. That is a big improvement, and one might expect the number to go through the roof by upgrading to 4-socket Skylake nodes, but that actually yields slightly less performance, at least for this workload, and only a bit higher performance for reinforcement learning.
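One way to read the 2-socket numbers is as a scaling efficiency: how close the multi-node result comes to a perfect multiple of the single-node result. The sketch below assumes that "8x 2-socket" means eight nodes and that the 0.85 and 6.7 figures are directly comparable; the function name is ours, not part of MLPerf.

```python
def scaling_efficiency(single_node: float, multi_node: float, node_count: int) -> float:
    """Ratio of the measured multi-node speedup to ideal linear scaling."""
    if node_count <= 0 or single_node <= 0:
        raise ValueError("node_count and single_node must be positive")
    ideal = single_node * node_count
    return multi_node / ideal


# Image classification figures quoted in the text (relative to one P100).
efficiency = scaling_efficiency(single_node=0.85, multi_node=6.7, node_count=8)
print(f"2-socket Skylake scaling efficiency at 8 nodes: {efficiency:.1%}")
```

Because the single-node figure is itself approximate ("about 0.85"), the result should be treated as a ballpark rather than a precise measurement.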

In other words, the value of this benchmark is also in showing relative performance on different devices from the same vendor. If a much bigger 4-socket node with its shared memory address space (remember, there is a lot more memory to hold your model there, which is a factor that does not appear here) does not deliver more, why pay more? The results matter. The Skylake (8180) is approximately $13,000, doubled for 2-socket. On the flip side, a P100 is probably $14,000 on its own (plus the cost of a CPU to drive it) and the V100 is around $20,000 with the same addition. Looking at this data, you still get quite a bit more out of a GPU for the money, again assuming our pricing is relatively on target.
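The comparison can be roughed out as relative performance per thousand dollars. This is a sketch under our own pricing estimates from above; the host CPU price for the GPU case is not given in the text, so `HYPOTHETICAL_HOST_PRICE` is a placeholder to be replaced with a real quote.

```python
def perf_per_thousand_dollars(speedup: float, device_price: float,
                              host_price: float = 0.0) -> float:
    """Relative performance (P100 = 1.0) per $1,000 of hardware."""
    total = device_price + host_price
    if total <= 0:
        raise ValueError("total price must be positive")
    return speedup / (total / 1000.0)


SKYLAKE_8180_PRICE = 13_000      # per socket, our estimate
P100_PRICE = 14_000              # GPU only, our estimate
HYPOTHETICAL_HOST_PRICE = 5_000  # assumption: cost of a CPU to drive the GPU

# A 2-socket Skylake node at about 0.85 of a P100 on image classification.
skylake_2s = perf_per_thousand_dollars(0.85, 2 * SKYLAKE_8180_PRICE)
# The P100 is the benchmark baseline, so its speedup is 1.0 by definition.
p100 = perf_per_thousand_dollars(1.0, P100_PRICE, HYPOTHETICAL_HOST_PRICE)

print(f"2-socket Skylake: {skylake_2s:.4f} per $1,000")
print(f"P100 plus host:   {p100:.4f} per $1,000")
```

Changing the host price or swapping in street pricing will move these numbers, which is the point: the benchmark supplies the numerator and readers have to bring their own denominator.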

The point is, the benchmark gives us a way to start making some real comparisons, even if the results omit pricing. For instance, if we know that the DGX-2 with NVSwitch runs around $400,000, what is the difference between doing that versus with 80 DGX-1s that cost around $170,000 each (our own pricing estimates)? The DGX-2 does a little more work according to these numbers but 80 DGX-1s look to cost a bit less.

In other words, if you use MLPerf and bring your own pricing data, you can actually start to read between these lines and get a real sense for scalability as well as how to pick based on cost and relative performance.

Despite the breadth of new devices aimed at training and inference, the only hardware in this initial run that we can see is from Intel, Nvidia, and Google. We are chasing some of the more notable AI chip startups we have covered in the last few years to understand why they have not submitted, but if we had to venture two guesses, one would be that the process simply involves too much time and cost overhead for now, and the other that the hardware from companies like Wave Computing and Graphcore, to name a few, just is not ready for that kind of primetime yet.

With quarterly results expected from submitters, the interesting trend line that emerges will be around software and optimization. With just three companies submitting for now, the addition of new hardware will be limited, but what we will be able to see clearly is just how much new optimizations add to overall training performance. We suspect it will be huge and will push vendors to compete here in entirely new ways.

The companies that submitted were able to see that other devices had submitted results, but the submission process was mostly blind. Hardware makers could run the benchmark, rerun it, and then choose whether or not to publish results, but could not remove them once they were uploaded. In other words, the thing to watch for is where results are omitted, something that is particularly noteworthy in the case of Intel, which is the only company to submit results for reinforcement learning. This could mean that these workloads do not scale well on devices other than CPUs, for instance.

We have seen a great many numbers from Nvidia and Intel when it comes to deep learning performance but MLPerf is placing the TPU directly against the GPU and finally giving us a sense of how these devices scale. Even though the question of scalability in this workload is always open since most training runs do not (and cannot) work at the hundreds and certainly thousands of device scales, having a more objective view into this scalability is useful.

As we detailed in August when the early iteration of the benchmark was being developed, the results will not include inference until late next year, perhaps closer to 2020 given the diverse complexities of the inference workload and hardware. MLPerf v.0.5 is still under development with new submission rounds expected to come quarterly along with updated benchmarks around those same times with v.1.0 emerging by Q3 2019.

Among the enhancements coming this year are an expected uptick in target quality, the adoption of a standard batch size for hyperparameter tables, and the scaling up of particular benchmarks, including recommendations, in addition to more benchmarks overall.

The real value of this thing is that we can see, quarter by quarter, the impact of software optimizations and tweaks. That is something we otherwise see dribble out over time, but never in one place. More is coming in early 2019 on the extent to which various factors improve and detract from raw hardware performance, including an in-depth look at the different memory configurations as well as the all-important software stacks and improvements.
