After many years of rumors, Microsoft has finally confirmed that it is following rivals Amazon Web Services and Google into the design of custom processors and accelerators for their clouds. That confirmation came today as Satya Nadella, Microsoft’s chief executive officer, announced the Cobalt 100 Arm server CPU and the Maia 100 AI accelerator chip.

The move is a surprise to precisely no one, because even if Microsoft does not deploy very many of its own chips, the very fact that they exist means it can negotiate for better pricing from chip makers Intel, AMD, and Nvidia. It is like spending hundreds of millions of dollars to save billions, which can be reinvested back into the infrastructure, including further development of homegrown chippery. That is particularly true given the relatively high cost of X86 server CPUs and the outrageous pricing for Nvidia “Hopper” H100 and H200 GPU accelerators and, we presume, for the forthcoming AMD “Antares” Instinct MI300X and MI300A GPU accelerators. With supplies limited and demand far in excess of supply, there is no incentive at all for AMD to undercut Nvidia on price with datacenter GPUs unless the hyperscalers and cloud builders give them one.

Which is why every hyperscaler and cloud builder is working on some kind of homegrown CPU and AI accelerator at this point. As we are fond of reminding people, this is precisely like the $1 million Amdahl coffee cup in the late 1980s and the 1990s when IBM still had a monopoly on mainframes. Gene Amdahl, the architect of the System/360 and System/370 mainframes at IBM, founded a company bearing his name that made clone mainframe hardware that would run IBM’s systems software, and just having that cup on your desk when the IBM sales rep came to visit sent the message that you were not messing around anymore.

This is one of the reasons, but not the only one, that a decade ago, Amazon Web Services came to the conclusion that it needed to do its own chip designs because eventually – and it surely has not happened yet – a server motherboard, including its CPU, memory, accelerators, and I/O, will be compressed down to a system on chip. As legendary engineer James Hamilton put it so well, what happens in mobile eventually happens in servers. (We would observe that sometimes the converse is also true.) Having an alternative always brings competitive price pressure to bear. But more than that, by having its own compute engines – Nitro, Graviton, Trainium, and Inferentia – AWS can take a full stack co-design approach and eventually co-optimize its hardware and software, boosting performance while hopefully reducing costs, thus pushing the price/performance envelope and stuffing it full of operating income cash.

Microsoft got a later start with custom servers, storage, and datacenters, but with the addition of the Cobalt and Maia compute engines, it is becoming a fast follower behind AWS and Google as well as others in the Super 8 who are making their own chips for precisely the same reason.

The move by Microsoft to design its own compute engines and have them fabbed was a long time coming, and frankly, we are surprised it didn’t happen a few years ago. It probably comes down to building a good team when everyone else – including a few CPU and a whole bunch of AI chip startups – is also trying to build a good design team and get in line at the factories run by Taiwan Semiconductor Manufacturing Co.

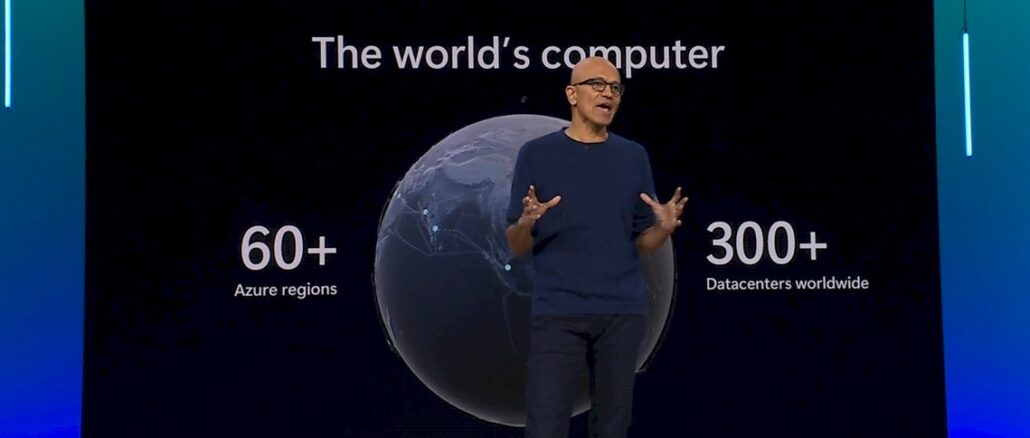

“Being the world’s computer means that we need to be even the world’s best systems company across heterogeneous infrastructure,” Nadella explained in his opening keynote at the Microsoft Ignite 2023 conference. “We work closely with our partners across the industry to incorporate the best innovation from power to the datacenter to the rack to the network to the core compute, as well as the AI accelerators. And in this new age of AI, we are redefining everything across the fleet in the datacenter.”

Microsoft has wanted an alternative to the X86 architecture in its fleet for a long time, and way back in 2017 it said its goal was for Arm servers to be 50 percent of its server compute capacity. A few years back, Microsoft was an early customer of Cavium/Marvell with its “Vulcan” ThunderX2 Arm server CPUs and was on track to be a big buyer of the “Triton” ThunderX3 follow-on CPUs when Marvell decided in late 2020 or early 2021 to mothball ThunderX3. In 2022, Microsoft embraced the Altra line of Arm CPUs from Ampere Computing, and started putting them in its server fleet in appreciable numbers, but all that time there were persistent rumors that the company was working on its own Arm server CPU.

And so it was, and so here it is in Nadella’s hand:

We don’t know what Microsoft has been doing all of these years on the CPU front, but we do know that a group within the Azure Hardware Systems and Infrastructure (AHSI) team designed the chips. This is the same team that developed Microsoft’s “Cerberus” security chip for its server fleet and its “Azure Boost” DPU.

The company provided very little in the way of details about the internals of the Cobalt server chip, but the word on the street is that the Cobalt 100 is based on the “Genesis” Neoverse Compute Subsystems N2 intellectual property package from Arm Ltd, which was announced back at the end of August. If that is the case, then Microsoft is taking two 64-core Genesis tiles with the “Perseus” N2 cores with six DDR5 memory controllers each and lashing them together in a single socket. So that’s 128 cores and a dozen memory controllers, which is reasonably beefy for 2023.

The “Perseus” N2 core meshes scale from 24 cores to 64 cores on a single chiplet, and four of these can be ganged up in a CSS N2 package to scale to a maximum of 256 cores in a socket using UCI-Express (not CCIX) or proprietary interconnects between the chiplets as customers desire. The clock speeds of the Perseus cores can range from 2.1 GHz to 3.6 GHz, and Arm Ltd has optimized this design bundle of cores, mesh, I/O, and memory controllers to be etched in 5 nanometer processes from TSMC. Microsoft did confirm that the Cobalt 100 chip is indeed using these manufacturing processes. Microsoft said that the Cobalt N2 core would offer 40 percent more performance per core over previous Arm server CPUs available in the Azure cloud, and Nadella said that slices of Microsoft’s Teams, Azure Communication Services, and Azure SQL services were already running atop the Cobalt 100 CPUs.
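To make the core-count arithmetic concrete, here is a minimal Python sketch. The class and variable names are hypothetical, and the numbers are only the ones reported above: 24 to 64 cores per chiplet, up to four chiplets per socket, and the rumored, unconfirmed Cobalt 100 layout of two 64-core tiles with six DDR5 memory controllers each. The memory controller count used for the four-chiplet case is an assumption carried over from the rumored Cobalt tile.

```python
from dataclasses import dataclass

# Limits as described in the article for the CSS N2 package; treat them as
# reported figures, not an official specification.
MIN_CORES_PER_CHIPLET = 24
MAX_CORES_PER_CHIPLET = 64
MAX_CHIPLETS_PER_SOCKET = 4


@dataclass(frozen=True)
class SocketConfig:
    """Hypothetical description of one socket built from identical chiplets."""

    chiplets: int
    cores_per_chiplet: int
    mem_controllers_per_chiplet: int

    def __post_init__(self) -> None:
        # Reject layouts outside the ranges reported in the article.
        if not 1 <= self.chiplets <= MAX_CHIPLETS_PER_SOCKET:
            raise ValueError("chiplet count is outside the reported range")
        if not MIN_CORES_PER_CHIPLET <= self.cores_per_chiplet <= MAX_CORES_PER_CHIPLET:
            raise ValueError("cores per chiplet is outside the reported range")

    @property
    def total_cores(self) -> int:
        return self.chiplets * self.cores_per_chiplet

    @property
    def total_mem_controllers(self) -> int:
        return self.chiplets * self.mem_controllers_per_chiplet


# Rumored (unconfirmed) Cobalt 100 layout: two 64-core tiles, six DDR5 controllers each.
rumored_cobalt_100 = SocketConfig(chiplets=2, cores_per_chiplet=64, mem_controllers_per_chiplet=6)

# Largest package the article describes: four 64-core chiplets.
# The controller count per chiplet here is an assumption, not a reported figure.
largest_css_n2 = SocketConfig(chiplets=4, cores_per_chiplet=64, mem_controllers_per_chiplet=6)

print(rumored_cobalt_100.total_cores, rumored_cobalt_100.total_mem_controllers)
print(largest_css_n2.total_cores)
```

Under these assumptions the rumored Cobalt 100 works out to 128 cores and 12 memory controllers, which is half of the 256-core ceiling that the CSS N2 package is said to allow.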

Here is a shot of some racks of servers in Microsoft’s Quincy, Washington datacenter using the Cobalt 100 CPUs:

Nadella said that next year, slices of servers based on the Cobalt 100 will be available for customers to run their own applications on.

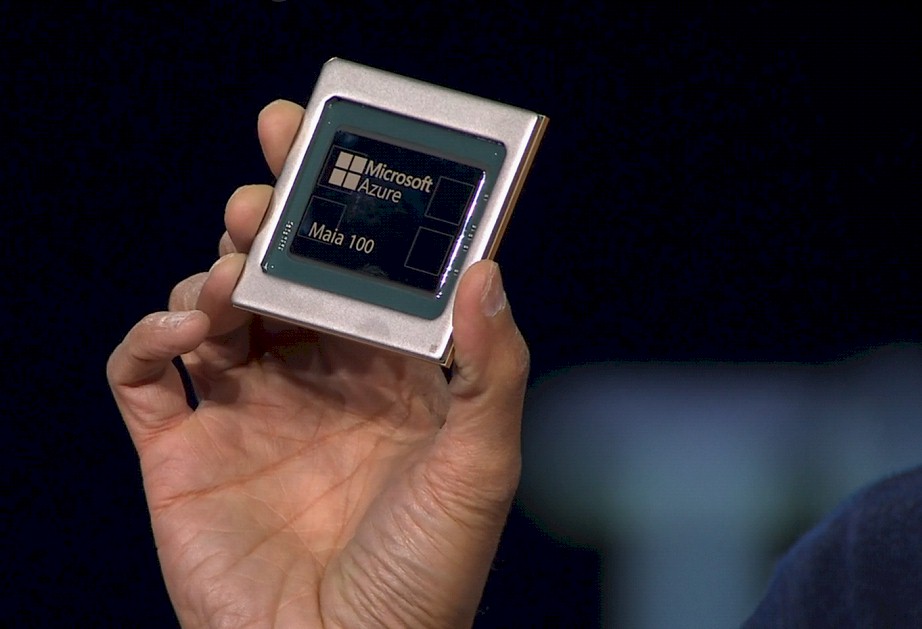

The Maia 100 AI chip is probably the one developed under the code-name “Athena” that we have been hearing about for more than a year and that we brought up recently as OpenAI, Microsoft’s large language model partner, was rumored to be looking at creating its own AI accelerator, tuned specifically for its GPT generative AI models. This may have all been crossed wires and Athena is the chip the rumors about OpenAI were referring to, or maybe OpenAI is hedging its bets while also getting Microsoft to tune up an AI engine for GPT. Microsoft has been working on an AI accelerator for about four years, if the scuttlebutt is correct, and this may or may not be the one it intended to do back then.

Here is the Maia 100 AI accelerator chip that Nadella held up:

What we can tell you is that the Maia 100 chip is based on the same 5 nanometer processes from TSMC and includes a total of 105 billion transistors, according to Nadella. So it is no lightweight when it comes to transistors or clock speed. The Maia 100 chip is direct liquid cooled and has been running GPT 3.5 and is powering the AI copilot that is part of GitHub right now. Microsoft is building up racks with the Maia 100 accelerators, which will be allowed to power outside workloads through the Azure cloud next year.

One of the neat things about the Maia effort is that Microsoft has designed an Open Compute compatible server, which holds four of the Maia accelerators and slides into the racks it has donated to OCP. The server has a companion sidekick rack that holds all of the liquid cooling pumps and compressors, which keep these devices from overheating and allow them to run hotter than they otherwise might with only air cooling. Take a look:

The Maia 100 is designed to do both AI training and AI inference, and is optimized for large language models – and presumably, given how fast this part of the IT industry is changing, is going to be able to support other models besides flavors of OpenAI’s GPT.

The other interesting thing is that Microsoft is going to be using Ethernet interconnects to lash together the Maia accelerators, not Nvidia’s InfiniBand.

We will be poking around to get more details on the Cobalt and Maia compute engines.

These copy-paste CSS Genesis IP Neoverse N2s seem to be quite the ticket to allow hyperscalers (deep pockets) to cut the middlefolks (Ampere?) with rather standardized and proven designs (unlike RISC-V), here with the pretty-decent dual-chiplet 128-cores Cobalt 100. I do like Graviton3E, Rhea1, and Grace’s Neoverse V1 and V2 better though … for the extra oomph!

On the other hand, Maia 100 is quite the riddle, wrapped in a mystery, inside an enigma … I doubt they’ve planted a field full of cauliflowers for this … maybe they did just buy Tachyum!?? 8^b

Uh.. Arm is another word for “Raspberry Pi.”

I view the Zen cores a closer analogy to Amdahl because both are binary compatible with the market leader during their times. It is plausible one of the main reasons the x86 architecture is still relevant is because there are two independent companies making compatible products. For example, the architecture would not even have made the jump to 64-bit without AMD.

There is a similar competition for ARM due to the fact that Apple has rights. At the same time, the trouble Qualcomm recently had with their Nuvia architecture licenses suggests future ARM designs may not include the same level of the competitive engineering as the current dynamic between AMD and Intel.

What I wonder is whether Cobalt is mostly the same as Ampere Altra or whether Cobalt includes significant features specially suited for Microsoft’s cloud.

Small typo: “AWS and Google as well as others in he Super 8 who are making their own chips for precisely the same reason.”->should be “in the Super 8” I think.

But that’s not the reason why I write to you, Mr. Morgan. I want to tell you how you always deliver fresh and open views, as here with the real reasons for the chip development at Microsoft. Always well written and a pleasure to read, but never “hey look, how good I can write” like other writers, who write as if they like to hear themselves.

Thanks always for the small side stories, like the one with the coffee cup with Amdahl on it.

Thanks for the catch, and the morale boost. Appreciate you.

I find it interesting that even Microsoft can’t get rack utilization above 50% with those ARM systems on a per-rack basis. Is it power or cooling limited? Or have they just not deployed enough that they want to fill the rest of the racks yet?

The next big change in DC architecture will be when someone defines a way to get rid of all the fibre and other cables by having standard rack designs with plugs in the back for the network/power/ILO support all in one connector in a standard placement.

It is peculiar, isn’t it? I have been to Quincy, so cheap space is not a problem. Microsoft could buy 10,000 acres easily.

I think these companies think of racks and rows like we think of servers. Once they roll them in and wire them up, they really don’t want to touch them again unless they have to. But maybe they are leaving space for future capacity. In the past, this was done at the datacenter room level within the datacenter within the region. But maybe there is a reason why you want to fill all the rooms halfway now because of AI loading. But as you say, these are just Arm server CPUs, nothing crazy hot.

Datacenters already do this. HP blades, SAN arrays, etc.

The only people who implement this way are end user customers paying others to do the work for them.

As with most things IT, there is no single correct choice, because *it depends*.

When you have a green field out there to fill, why go for density if it costs more?

In other places where latencies are really critical, specialized IT service providers will optimize density in a manner that just wouldn’t be competitive in the green field.

As usual, you’ll see diverse architecture, oddly enough enabled by the huge scale of the workloads.

When it comes to connectors and standard placement: unfortunately physics plays a role there and with everything copper, trace lengths become critical. There are a lot of cables these days on Gen5 PCIe servers, because running equal length traces on planar mainboards becomes either impossible or too expensive as you add layers. So going for cables rather than slots and connectors may just be dictated by a combination of physics and economy, even if these high precision cables are neither cheap to make nor to deploy.

How that will work out with optical interconnects remains to be seen, but again cables may win, unless the assembly costs become too excessive… where something more robot friendly may emerge.

I guess the main drivers will be a) cheapest assembly cost for the performance, b) near zero maintenance cost, c) disassembly is “for the next guy”

Copilot for Microsoft 365 is Microsoft’s AI assistant for Microsoft Office and other Microsoft Apps. There are 350 million Microsoft 365 subscribers. I can’t predict how many of them will sign up for Copilot ($30/month), but each 20% that does is $25 billion per year of revenue. Microsoft’s Maia 100 AI chip is going to be doing inference for those subscribers, as it already does for GitHub Copilot. Given Microsoft’s track record for security and privacy, I am amazed people are willing to have essentially every keystroke they make sent to Microsoft’s servers. Perhaps the telemetry added to Windows was done to get people used to the idea that George Orwell’s Big Brother should be able to see everything they type.
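The revenue estimate in the comment above can be checked with a few lines of Python. This is only a sketch of the commenter's arithmetic: the subscriber count and the $30 monthly price are the figures quoted in the comment, the function name is hypothetical, and real adoption or pricing may differ.

```python
# Figures quoted in the comment above; they are the commenter's inputs, not verified data.
MICROSOFT_365_SUBSCRIBERS = 350_000_000
COPILOT_PRICE_USD_PER_MONTH = 30
MONTHS_PER_YEAR = 12


def annual_copilot_revenue_usd(adoption_percent: int) -> int:
    """Yearly revenue if `adoption_percent` of subscribers pay for Copilot."""
    if not 0 <= adoption_percent <= 100:
        raise ValueError("adoption_percent must be between 0 and 100")
    # Integer arithmetic keeps the result exact for whole-percent adoption rates.
    paying_users = MICROSOFT_365_SUBSCRIBERS * adoption_percent // 100
    return paying_users * COPILOT_PRICE_USD_PER_MONTH * MONTHS_PER_YEAR


revenue_at_20_percent = annual_copilot_revenue_usd(20)
print(f"${revenue_at_20_percent / 1e9:.1f} billion per year")
```

At 20 percent adoption this comes to $25.2 billion per year, which matches the roughly $25 billion figure in the comment.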

AI in the cloud will always be more powerful than what is possible on a client device. On the other hand, as client devices get more AI hardware and AI algorithms improve, client devices might become good enough for most purposes. Will the AI assistants most people use stay in the cloud or will they eventually run on client devices? The computer industry has gone through several transitions between centralization and decentralization. I would like to know if AI in the cloud is going to remain the dominant way of implementing AI assistants or is it just a temporary thing (less than 5 years) until client devices become more capable and AI algorithms improve.

I think there are few resources that favor hegemony for the giant Microsoft: there is the Arm-Windows duet and its associated suite of programs. I think Apple arrived late to a big presence in heavy enterprise software; Steve Jobs (RIP) would not have fallen into this error if he were alive. On the other hand, Dell, Hewlett Packard, Supermicro, and other makers of big digital infrastructure would love Microsoft if it only made tuned Arm CPUs for general sale, and did not make a complete server product line into a new Microsoft business. Important!!!

Microsoft is so into cloud these days, these custom CPUs probably will end up as specific purpose CPUs for something Microsoft is cooking up. It would not be something like Apple did with its M series silicon. The Surface lineup won’t benefit from custom ARM chips, and I am not even sure the investment in Surface is top priority at Microsoft any more. Qualcomm’s Snapdragon X reads like a real option for those who want max battery life and still get good performance. Intel has yet to prove its hybrid approach really makes any real difference. I think maybe on mobile, but it seems odd in a desktop scenario.

Precisely the reason why my AMD machine was always called “AMDahl”.. while there was only one. “Gene” came next and then I stuck to A* and I* names. With ARM I guess I’d have to use B* (for the BBC micro), but so far they are unicorns. Nothing R* yet, unfortunately.

The cost/speed to service advantages seem hard to beat for custom SoC vs. commodity when you are in an expansion phase.

Once that peters out, you’ll want to commoditize again, which is what OCI is all about.

Doing the balance will be tough and making sure you really fully depreciate all that stuff before it becomes obsolete, even more so.

Can’t say that I wish M$ good luck, because I want them miles away from any of my data: Copilot, just say no-op!