We think that waferscale computing is an interesting and even an inevitable concept for certain kinds of compute and memory. But sooner or later, the work you need to do goes beyond what a single wafer's worth of cores can deliver, and then you are back to the same old network issues.

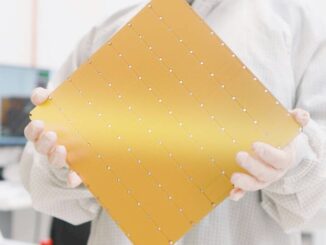

But don’t take that too far. Science and data analytics still need to get done, and there are places where these squared circles of cores and SRAM memory, like the three generations of Wafer Scale Engine devices created by AI startup and sometime HPC player Cerebras Systems, can whip a massive GPU-accelerated machine.

This is why Bronis de Supinski, chief technology officer at Livermore Computing at Lawrence Livermore National Laboratory, told us back in July 2023 that the lab was working with AI upstarts Cerebras Systems and SambaNova Systems to see where their architectures might be useful for managing the nuclear weapons stockpile of the United States and the fleet of nuclear-powered vessels in the US Navy. This is one of the mandates of the so-called Tri-Labs, which consist of Lawrence Livermore, Sandia National Laboratories, and Los Alamos National Laboratory. All three are part of the US Department of Energy, which funds the largest supercomputers in the country and which is also in charge of the NNSA.

As it turns out, Cerebras is working with the Tri-Labs on six different problems. And as part of the ISC24 festivities this week, Cerebras and researchers from Tri-Labs have published a paper on how a molecular dynamics application that is relevant to the management of the nuclear stockpile was accelerated by a factor of 179X compared to the same application running on the “Frontier” supercomputer at Oak Ridge National Laboratory, also a DOE facility but not one that is formally part of the National Nuclear Security Administration. (You can check out that paper at this link.) The homegrown molecular dynamics simulation from Tri-Labs was also run on the “Quartz” CPU-only cluster at Lawrence Livermore.

Here is the crux of the problem. It comes down to the weak scaling of modern massively parallel supercomputers versus the strong scaling of individual compute engines, and ultimately to having high bandwidth between all compute elements and their local memory. Weak scaling grows the problem along with the machine, while strong scaling throws more hardware at a fixed problem to finish it faster. With massively parallel systems like Frontier and Quartz, weak scaling allows a massive increase in the number of atoms and interactions that can be simulated.
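To make the distinction concrete, here is a toy cost model. It is our own assumption for illustration, not anything taken from the paper: each timestep costs compute time proportional to the atoms a device holds (`T_ATOM`), plus a fixed per-step communication and synchronization overhead (`T_COMM`). Both constants are hypothetical.

```python
# Toy per-timestep cost model (an assumption for illustration, not from the paper):
#   step_time = atoms_per_device * T_ATOM + T_COMM
T_ATOM = 1e-8   # hypothetical seconds of compute per atom per timestep
T_COMM = 1e-4   # hypothetical seconds of halo exchange/sync per timestep


def step_time(atoms_per_device: float) -> float:
    """Wall-clock seconds for one timestep with all devices in lockstep."""
    return atoms_per_device * T_ATOM + T_COMM


def weak_scaling(atoms_per_device: int, devices: int) -> tuple[int, float]:
    """Problem grows with the machine: total atoms rise, step time stays flat."""
    return atoms_per_device * devices, step_time(atoms_per_device)


def strong_scaling(total_atoms: int, devices: int) -> tuple[int, float]:
    """Problem is fixed: step time shrinks, but never drops below T_COMM."""
    return total_atoms, step_time(total_atoms / devices)


for d in (1, 32, 1024):
    print(d, weak_scaling(100_000, d), strong_scaling(801_792, d))
```

In this model, adding devices under strong scaling pushes the step time toward `T_COMM` and no further, which mirrors the kind of plateau the Frontier and Quartz runs hit below. Shrinking that per-step communication cost is where keeping everything on one wafer's fabric would be expected to help.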

As the paper points out, these MD applications can resolve atomic vibrations with femtosecond timestepping and can simulate billions to tens of trillions of atoms. But when you add up all of the timesteps, the simulation can at best show a few microseconds of the atoms interacting, and for the kinds of physical and chemical phenomena that Tri-Labs and others want to simulate, interesting behaviors only happen on longer timescales, on the order of 100 microseconds or more. The examples given in the paper include annealing of radiation damage in nuclear reactors, thermally activated catalytic reactions, phase nucleation close to equilibrium, and protein folding.
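A bit of back-of-envelope arithmetic shows why femtosecond steps make long timescales so expensive. The sketch below assumes a 1 femtosecond timestep and a hypothetical throughput of one million timesteps per second; neither number comes from the paper, and both are parameters you can change.

```python
# Back-of-envelope MD timescale arithmetic. The 1 fs timestep and the
# 1e6 steps/sec throughput used below are illustrative assumptions.
FEMTOSECOND = 1e-15  # seconds
MICROSECOND = 1e-6   # seconds
SECONDS_PER_DAY = 86_400


def steps_needed(simulated_seconds: float, dt: float = 1 * FEMTOSECOND) -> float:
    """Number of timesteps required to cover a span of simulated time."""
    return simulated_seconds / dt


def wall_clock_days(simulated_seconds: float, steps_per_sec: float,
                    dt: float = 1 * FEMTOSECOND) -> float:
    """Wall-clock days needed to reach that span at a given timestep rate."""
    return steps_needed(simulated_seconds, dt) / steps_per_sec / SECONDS_PER_DAY


print(f"{steps_needed(100 * MICROSECOND):.2e} steps for 100 microseconds")
print(f"{wall_clock_days(100 * MICROSECOND, 1e6):.2f} days at 1e6 steps/sec")
```

At 1 fs per step, 100 microseconds is about 1e11 timesteps, and wall-clock time scales inversely with timesteps per second. That is why the metric that matters here is timestep throughput rather than raw atom count.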

A waferscale compute engine, by definition, is a strong-scaling device, and so Tri-Labs worked with Cerebras to port its Embedded Atom Method (EAM) simulations, which run atop the Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS) tool originally created by Sandia and Temple University back in 1995, to the second-generation WSE-2 processors in its CS-2 systems. The specific simulations, with 801,792 atoms in each lattice, bombarded three different crystal lattices made of tungsten, copper, and tantalum with radiation to see what happens. On the Frontier and Quartz machines, the simulations can only reach nanoseconds of simulated time, which is not long enough to see what happens to the bombarded lattices.
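For readers unfamiliar with EAM, the energy of each atom is an embedding term, which depends on the electron density contributed by its neighbors, plus half of a pairwise term: E = Σ_i [ F(Σ_{j≠i} ρ(r_ij)) + ½ Σ_{j≠i} φ(r_ij) ]. The sketch below evaluates that sum for a single element using made-up toy functional forms (exponential density and pair terms, square-root embedding) and a hypothetical cutoff. Real tungsten, copper, and tantalum potentials are tabulated fits, and this is not the Tri-Labs or LAMMPS code.

```python
import math

# Toy single-element EAM. Functional forms and constants are hypothetical.
CUTOFF = 5.0  # Angstroms, hypothetical interaction cutoff


def density(r: float) -> float:
    """Electron density a neighbor at distance r contributes (toy form)."""
    return math.exp(-r) if r < CUTOFF else 0.0


def pair(r: float) -> float:
    """Pairwise repulsion between two atoms at distance r (toy form)."""
    return 2.0 * math.exp(-2.0 * r) if r < CUTOFF else 0.0


def embed(rho: float) -> float:
    """Embedding energy as a function of host electron density (toy form)."""
    return -math.sqrt(rho)


def eam_energy(positions: list[tuple[float, float, float]]) -> float:
    """E = sum_i [ F(sum_{j!=i} rho(r_ij)) + 1/2 * sum_{j!=i} phi(r_ij) ]."""
    total = 0.0
    for i, pi in enumerate(positions):
        rho_i = 0.0   # host density seen by atom i
        pair_i = 0.0  # pair energy summed over atom i's neighbors
        for j, pj in enumerate(positions):
            if i == j:
                continue
            r = math.dist(pi, pj)
            rho_i += density(r)
            pair_i += pair(r)
        # Halve the pair sum because each pair is visited from both atoms.
        total += embed(rho_i) + 0.5 * pair_i
    return total


print(eam_energy([(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]))
```

The all-pairs double loop is there only for clarity; production codes such as LAMMPS use neighbor lists. In a one-atom-per-core mapping like the one described next, the inner loop is roughly the per-atom work each core would evaluate for its own atom.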

But the WSE can simulate one atom per core (and still have some cores left over) and store all of the data for processing in local SRAM. As a result, the number of timesteps per second in the EAM/LAMMPS simulation is 109X higher for copper, 96X higher for tungsten, and 179X higher for tantalum compared to the Frontier GPUs, giving tens of milliseconds of simulated time and therefore enough to see what actually happens to the lattice.

If you want to test your susceptibility to color-blindness, here is the chart showing the number of nodes tested, the timesteps per joule of electricity used, and the energy efficiency factor of the WSE-2 over the Frontier and Quartz machines:

What is interesting in the chart above is that the Frontier system with its GPUs peters out in terms of timesteps per second of simulation, and that the CPU-based cluster can scale further and drive more timesteps than the GPUs, but the WSE-2 still beats it, as you can see in the chart and table above.

Having seen those results, let’s talk about hardware for a second.

The WSE-2 engine was announced in April 2021 and is etched in 7 nanometer processes from Taiwan Semiconductor Manufacturing Co. The WSE-2 die has 2.6 trillion transistors and 850,000 cores, with 40 GB of SRAM delivering 20 PB/sec of aggregate bandwidth. You might be wondering why Tri-Labs didn’t test the EAM/LAMMPS benchmark on the newer WSE-3 device that launched in March of this year. Well, the shrink to 5 nanometers with the WSE-3 only boosted the core count to 900,000, the SRAM to 44 GB, and the SRAM bandwidth to 21 PB/sec. Using the WSE-3 would only have allowed for slightly larger collections of atoms to be simulated, although with 2X the performance per core, the same simulation could run in half the time or, perhaps, cover twice as many timesteps in the same wall-clock time. We conjecture that the latter would be useful, for instance boosting the simulation window for the tantalum lattice from 40 milliseconds on the WSE-2 to perhaps 80 milliseconds on the WSE-3. This is almost human-scale time. (An eye blink, which is our average attention span since the advent of the commercialized Internet, is about 200 milliseconds.)
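The generational gap is easy to quantify from the spec figures quoted above. This sketch uses only those numbers plus the 801,792-atom lattice size; the one assumption is decimal units (1 GB = 1e6 KB) when computing SRAM per core.

```python
# Spec figures as quoted in this article; the ratios are simple arithmetic.
WSE2 = {"cores": 850_000, "sram_gb": 40, "sram_pb_s": 20}
WSE3 = {"cores": 900_000, "sram_gb": 44, "sram_pb_s": 21}
ATOMS = 801_792  # atoms per lattice in the EAM/LAMMPS runs


def sram_kb_per_core(chip: dict) -> float:
    """Average SRAM per core in KB, assuming decimal units (1 GB = 1e6 KB)."""
    return chip["sram_gb"] * 1e6 / chip["cores"]


for name, chip in (("WSE-2", WSE2), ("WSE-3", WSE3)):
    print(f"{name}: {sram_kb_per_core(chip):.1f} KB/core, "
          f"{chip['cores'] - ATOMS:,} spare cores at one atom per core")

for key in WSE2:
    growth = (WSE3[key] / WSE2[key] - 1) * 100
    print(f"{key}: +{growth:.1f}% from WSE-2 to WSE-3")
```

Roughly 6 percent more cores, 10 percent more SRAM, and 5 percent more SRAM bandwidth, with per-core SRAM barely moving (about 47 KB versus 49 KB), which is why the WSE-3 would buy only slightly larger lattices; any bigger win would have to come from the faster cores.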

The Frontier supercomputer at Oak Ridge is composed of nodes with a custom 64-core “Trento” Epyc processor coupled to four “Aldebaran” Instinct MI250X GPU accelerators; 9,408 of these nodes are lashed together with the Slingshot 11 Ethernet variant from Hewlett Packard Enterprise. But as you can see from this test, adding more GPUs or CPUs does not add more timesteps of simulation after a certain point. A Frontier node can simulate about 100,000 atoms per GPU with strong scaling, and the scaling stalls at around 32 GPUs. So the other 37,856 GPUs in Frontier are useless for the purposes of this test.

The Quartz machine at Lawrence Livermore has 3,018 nodes with a pair of 18-core “Broadwell” Xeon E5-2695 v4 processors from Intel in each node, and a 100 Gb/sec Omni-Path network. This is no speed demon, but it is no slouch either. The Tri-Labs researchers say they can simulate about 1,000 atoms per CPU socket and at 400 nodes (800 sockets) the scaling also peters out.
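Dividing the lattice among the device counts quoted above shows how thin the work per device gets by the time scaling stalls. The 32-GPU and 800-socket figures are from the article; the WSE-2 line is the one-atom-per-core mapping, included for comparison rather than as a stall point.

```python
ATOMS = 801_792  # atoms per lattice in the Tri-Labs EAM runs


def atoms_per_device(devices: int, atoms: int = ATOMS) -> float:
    """Share of the fixed lattice each device holds under strong scaling."""
    return atoms / devices


# Device counts at which the article says scaling stalls (Frontier, Quartz),
# plus the WSE-2's one-atom-per-core mapping for comparison.
for label, devices in (("Frontier, 32 GPUs", 32),
                       ("Quartz, 800 sockets", 800),
                       ("WSE-2, one atom per core", ATOMS)):
    print(f"{label}: {atoms_per_device(devices):,.0f} atoms per device")
```

That works out to about 25,000 atoms per GPU and about 1,000 atoms per socket, the latter matching the Tri-Labs figure. Once each device holds that little work, fixed per-step communication and synchronization costs plausibly dominate, which is consistent with the plateaus described above.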

All of that brings us to the next problem, and one that we ribbed Cerebras co-founder and chief executive officer Andrew Feldman about in our briefing: What happens when you connect multiple waferscale engines together and try to run the same simulation? Feldman says no one knows yet.

The proprietary interconnect in the WSE-2 systems could scale to 192 devices, and with the WSE-3, that number was boosted by more than an order of magnitude to 2,048 devices. This is pretty decent weak scaling, of course, but we strongly suspect that the same scaling principles apply to WSEs as apply to GPUs and CPUs. You could do larger aggregations of atoms, but still only see tens of milliseconds into the future.
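The weak-scaling headroom is easy to bound at one atom per core, using the core counts and maximum cluster sizes quoted in this article. This is an upper bound on atom count only; it ignores SRAM per core and inter-wafer bandwidth, and it says nothing about timesteps per second, which is the article's point.

```python
# Upper bound on atoms at one atom per core, from the figures quoted here.
# Ignores SRAM limits and inter-wafer bandwidth.
def max_atoms(cores_per_wafer: int, wafers: int) -> int:
    """Total atoms if every core on every wafer holds exactly one atom."""
    return cores_per_wafer * wafers


print(f"192 x WSE-2:   {max_atoms(850_000, 192):,} atoms")
print(f"2,048 x WSE-3: {max_atoms(900_000, 2_048):,} atoms")
print(f"Cluster size growth: {2_048 / 192:.1f}x")
```

Going from roughly 163 million to roughly 1.8 billion atoms is a big jump in lattice size, but under weak scaling the per-timestep cost stays the same at best, so the simulated time window would not grow.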

Unless, of course, there was some way to lash WSEs together physically. Imagine, if you will, a bunch of square WSEs dovetailed at the edges like the drawers in Amish furniture or those interlocking rubber mats at the gym. You could make a stovepipe of squares of interconnected WSEs, where they link to each other at their edges, and run power on the inside of the stovepipe and cooling on the outside of the stovepipe. The effectiveness of strong scaling would be limited by the interconnects at the edges of the WSEs and the length of trace wires from the top of the pipe to the bottom of the pipe. But one thing we know for sure: This kind of configuration could not be worse than using InfiniBand or Ethernet to interlink CPUs or GPUs.
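The stovepipe thought experiment can be sketched as a grid of wafers that wraps around the circumference but not along the height. The grid shape, the edge-only links, and counting wafer-to-wafer hops as a proxy for "the length of trace wires from the top of the pipe to the bottom" are all our assumptions; no such product exists.

```python
# Hypothetical "stovepipe": wafers in a grid that wraps around the
# circumference (columns) but not along the height (rows), linked edge to edge.
def neighbors(row: int, col: int, height: int,
              circumference: int) -> list[tuple[int, int]]:
    """Edge-adjacent wafers of (row, col); assumes circumference >= 3."""
    result = [(row, (col - 1) % circumference), (row, (col + 1) % circumference)]
    if row > 0:
        result.append((row - 1, col))   # wafer below
    if row < height - 1:
        result.append((row + 1, col))   # wafer above
    return result


def diameter_hops(height: int, circumference: int) -> int:
    """Worst-case wafer-to-wafer hops: full height plus half the ring."""
    return (height - 1) + circumference // 2


print(neighbors(0, 0, height=8, circumference=6))
print(diameter_hops(8, 6))
```

A wafer on the bottom ring of an 8-high, 6-around pipe has three neighbors, and the worst-case path is 7 hops up plus 3 around, or 10. Wrapping the height dimension too would give the torus suggested in the comments, cutting the vertical term from height minus one to about half the height.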

I remain quite partial to the Denny’s stack-of-pancakes approach to 3-D extension of the WSE computational dish-plates (with engineered heat-removal strategy). A 900-high stack (below the WSE-3 limit) would essentially provide for a 900x900x900 prism of cores, to efficiently simulate 700 million tantalum atoms, from which to evaluate pre-asymptotic behavior (eg. pre-Fickian property transport, of maple-syrup flavor) — that could be useful to parameterize related multicontinuum approximations (Kac/Goldstein?) (ref: https://www.nextplatform.com/2024/03/14/cerebras-goes-hyperscale-with-third-gen-waferscale-supercomputers/ )

But, of course, a stove-pipe approach, laid on its side, and curved to form a torus, could be the practical winner here (like a computational Tokamak, a perfect domain for Conway’s game of Life, and rather tasty in its interpretation as a donut). In any instance, great job by Cerebras, and the TriLabs MAD scientists (MoleculAr Dynamics), in demonstrating the substantial speedup of dataflow archs in such multi-Hamiltonian computation (if I understood well)!

I like the toroid. You can put chocolate glaze on it, and then some sprinkles….

The trick with all these is power and cooling. And packaging. How small can we make cooling channels to remove heat, while at the same time bringing in power? Or do we also need to drop voltage? Where’s the next step below the 1.2V stuff I hear about? We’re slowly moving from 5V to 3.3V in hobbyist electronics, and I’m sure it’s lower elsewhere, but can we do some funky tricks where we give high voltage deep into the system, then drop it down efficiently and without much waste heat to then power just enough to make things work?

This is a total side step, but the comment on the 900 x 900 x 900 pancake stack made me think of the issues.

Heck, the torus (stovepipe style) could even have multiple layers of tori stacked around each other. Hmm… maybe not, hard to get power/cooling in and out efficiently.

I think hollow will cool easier than pancakes. Nested donuts… mmmm.

I don’t immediately know details about the supported scale and topologies, but my understanding was that Tesla’s Dojo wafer scale compute engines leverage a TSMC waferscale interconnect to achieve roughly what you discuss here, at least for a single-digit number of wafers. News stories around the Dojo launch cover this.

Typo: Femtosecond, not Femptosecond

Imagine stacking these like AMD does with X3D or like HBM. Perhaps even a cache+interconnect logic layer between each WSE, with vias through all layers that connect all of the interconnect wafers together like one big memory/cache controller.

Trash technology, they cannot power / cool well