At the ISC conference this week, HPC market analyst firm Hyperion Research offered its mid-year HPC market update, which recapped what happened in 2018 and provided some particularly interesting observations on the exascale space.

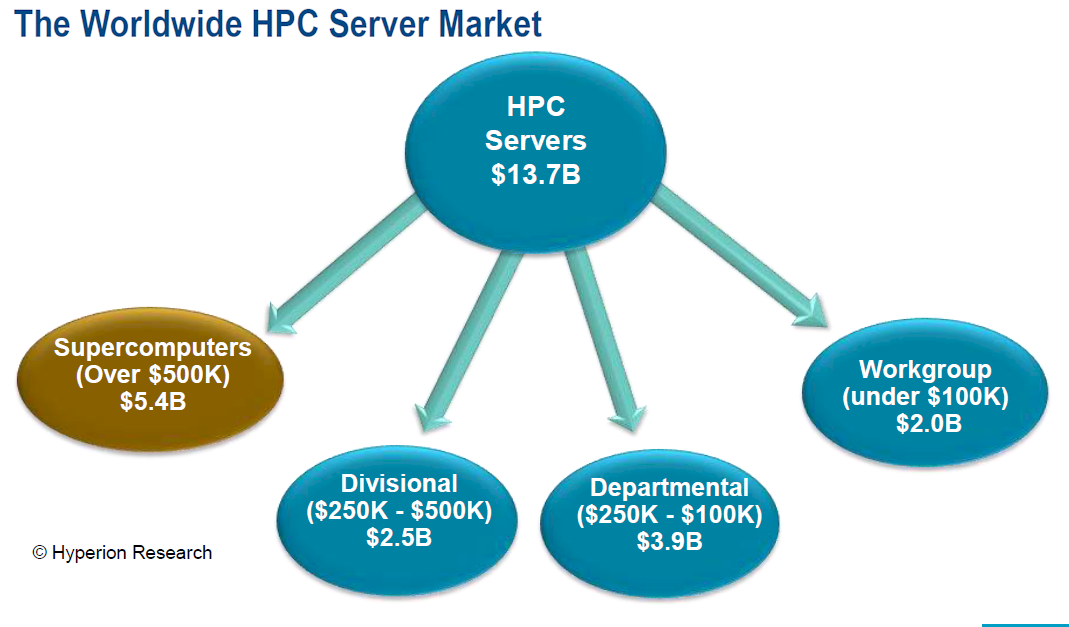

To begin with, according to Earl Joseph, chief executive officer at Hyperion, 2018 turned out to be an exceptionally good year for the high performance computing business. Server revenue for the year hit a record $13.7 billion, representing an annual growth rate of 15 percent. The supercomputer category, which Hyperion defines as systems costing more than $500,000, had a particularly robust year, growing 23 percent over 2017 to reach $5.4 billion.
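As a quick sanity check, the growth rates can be run backwards to recover the implied 2017 figures. This is a minimal sketch that assumes the percentages are simple year-over-year growth on the 2017 base. The results are derived here, not figures Hyperion published, and because the inputs are rounded they are only approximate.

```python
def implied_prior_year(current: float, growth_pct: float) -> float:
    """Back out last year's value from this year's value and its YoY growth rate."""
    return current / (1 + growth_pct / 100)

# 2018 figures cited by Hyperion, in billions of USD, with year-over-year growth
server_2018, server_growth_pct = 13.7, 15
super_2018, super_growth_pct = 5.4, 23

server_2017 = implied_prior_year(server_2018, server_growth_pct)
super_2017 = implied_prior_year(super_2018, super_growth_pct)
super_share_2018 = super_2018 / server_2018 * 100

print(f"Implied 2017 HPC server revenue: ${server_2017:.1f}B")          # ~$11.9B
print(f"Implied 2017 supercomputer revenue: ${super_2017:.1f}B")       # ~$4.4B
print(f"Supercomputer share of 2018 revenue: {super_share_2018:.0f}%")  # ~39%
```

In other words, systems costing more than $500,000 accounted for roughly two out of every five HPC server dollars in 2018.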

Those numbers were unchanged from what the company presented back in April at Hyperion’s HPC User Forum in Santa Fe, New Mexico. But good news is worth repeating, especially when you have the attention of an international audience.

“For almost five years, the high end of the market was flat,” Joseph told the ISC crowd. “So it’s good to see it back into a growth mode.” More significantly, based on what Hyperion is seeing with the pre-exascale and exascale deployments just over the horizon, Joseph thinks the industry is in store for an extended period of very healthy growth, probably lasting four or five years.

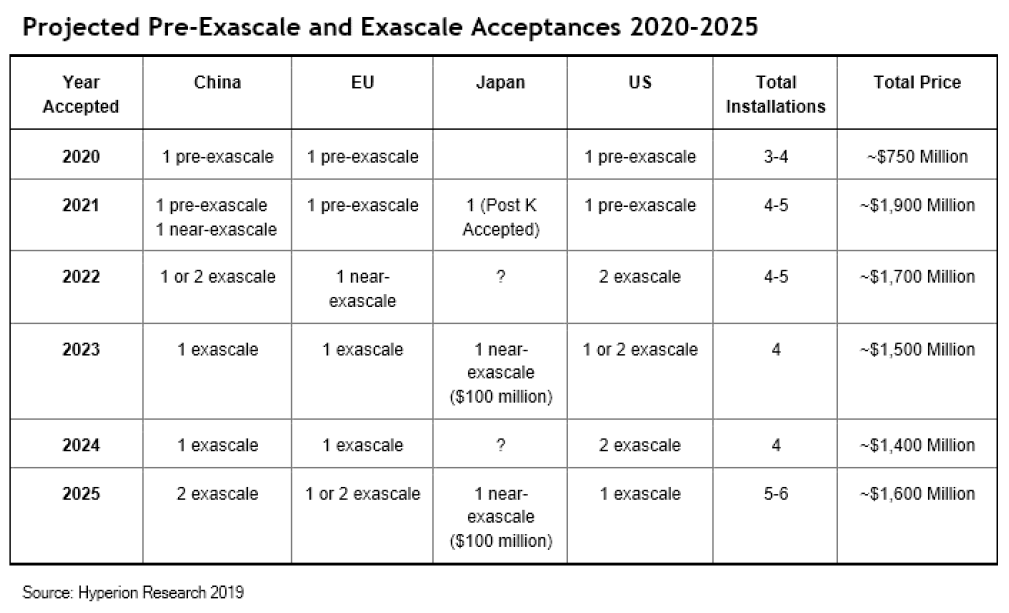

At this point, the company is tracking about $9 billion in the pipeline for pre-exascale and exascale systems that are scheduled to be installed between 2020 and 2025. That should really provide a big boost to the overall HPC market over this timeframe. However, because of the high price tags for the individual systems, it will drive some rather volatile financial results for individual vendors.

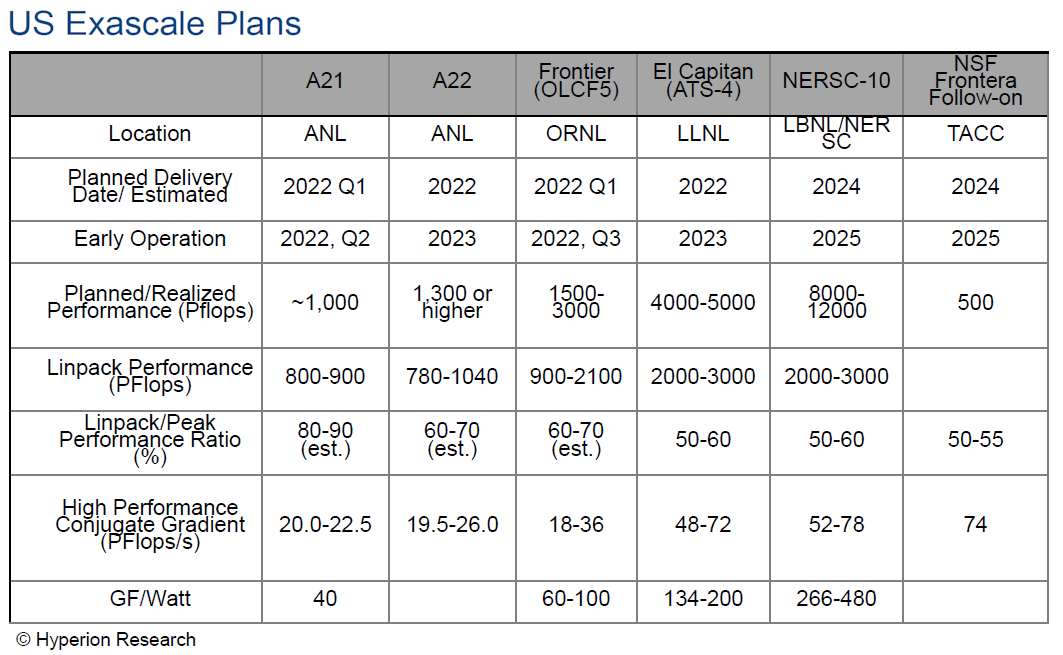

Take Cray, whose total revenue in 2018 was $456 million. The company was recently awarded the contract for Frontier, the exascale system slated to go to Oak Ridge National Lab in 2021. The contract value for that single machine was over $600 million, or about 30 percent more than Cray’s total revenue last year. Of course, by 2021, Cray will be part of HPE after being acquired for $1.3 billion, and HPE is a much larger company. But the financial impact will still be substantial, as it will be for the other exascale vendors.
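To put the Frontier deal in perspective, the ratio can be computed directly from the two figures cited above. Since the contract is described as “over $600 million,” $600 million is treated here as a lower bound, so the real ratio is somewhat higher.

```python
cray_revenue_2018 = 456  # millions of USD
frontier_contract = 600  # millions of USD; "over $600 million", so a lower bound

ratio = frontier_contract / cray_revenue_2018
pct_larger = (ratio - 1) * 100

print(f"Frontier contract is {ratio:.2f}x Cray's 2018 revenue "
      f"({pct_larger:.0f}% larger)")
# prints "Frontier contract is 1.32x Cray's 2018 revenue (32% larger)"
```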

That sounds like a good problem to have, but as Joseph pointed out, Wall Street is not a big fan of such volatility. Investors love to see their company double its size but will be less enthusiastic if they realize the following year the business gets sliced in half. Of course, that’s not going to dampen anyone’s enthusiasm for getting these machines out the door.
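The double-then-halve pattern is easy to reproduce with a hypothetical revenue series. This sketch assumes a flat $456 million base business and, purely for illustration, books an entire $600 million exascale contract in a single year; real revenue recognition is typically spread across delivery and acceptance milestones, so actual swings would be less extreme.

```python
def yoy_growth(revenues: list[float]) -> list[float]:
    """Year-over-year growth, in percent, for a list of annual revenues."""
    return [(curr - prev) / prev * 100 for prev, curr in zip(revenues, revenues[1:])]

# Hypothetical scenario (millions of USD): flat base, one-off contract in year 2
base = 456
revenues = [base, base + 600, base]

for year, growth in enumerate(yoy_growth(revenues), start=2):
    print(f"Year {year}: {growth:+.0f}%")
# Year 2: +132%
# Year 3: -57%
```

The underlying business is unchanged across all three years, yet the reported growth rate swings from more than doubling to losing more than half, which is exactly the kind of pattern investors dislike.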

As you can see in the chart below, Hyperion has Japan, with its “Fugaku” Post-K system, reaching the exascale milestone first, in 2021. In 2022, the United States should have its first two exascale machines, “Aurora” and “Frontier,” up and running, and at least one exascale system should be up and running in China. The first European exascale supercomputer isn’t expected until 2023. These dates reflect the year the machines are expected to go into production. At least some of these will be installed and running the Linpack test for their rankings the year before they are generally available to users.

The exascale plans for the US and China are particularly interesting, inasmuch as both countries will probably be deploying the largest number of these systems over the next several years. At this point, Hyperion is tracking potentially six US-based machines that will go into operation between 2022 and 2025. If the projected performance estimates are accurate, not all will achieve 1 exaflops on Linpack, so for some people, they won’t even be considered true exascale systems.

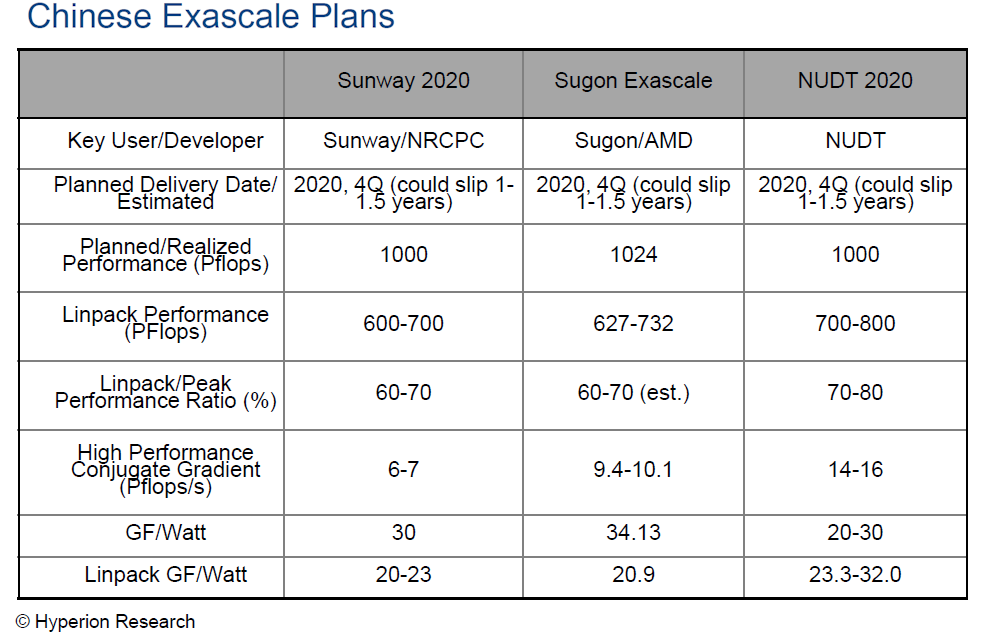

But we’re not going to quibble about that, especially considering that it’s possible none of the three Chinese exascale systems in the pipeline (from Sunway, Sugon, and NUDT) will reach 1 exaflops on Linpack either. For the past couple of years, Hyperion has been more skeptical of the Chinese exascale effort, in both reaching performance milestones and installation dates. While China was originally aiming to debut one or more of these machines in 2020, Hyperion is predicting a delay of up to one-and-a-half years for all of them. Considering that China has yet to demonstrate a pre-exascale system (at least publicly), such skepticism is probably warranted.

As arbitrary as the 1 exaflops performance milestone is, one thing is pretty clear: the effort to reach this level of performance is spurring a lot of new processor development. Joseph said the proliferation of general-purpose processors and accelerators is particularly interesting to Hyperion, and between the multiple x86, Arm, Power, and GPU implementations, as well as the custom HPC processors being developed in China and Europe, there is going to be plenty of diversity and choice for HPC customers in the next decade. “We are expecting that the typical-looking HPC system five years from now may have more than four different types of processors in it,” Joseph said.

And not just processors. Exascale, along with AI, is driving new memory technologies, processor packaging approaches, and interconnect capabilities, not to mention emerging platforms like quantum and neuromorphic computing. “There really is a whole new family of technologies coming together,” Joseph noted, “and that’s something that we will be tracking.”

This is the first time I’ve seen mention of an “A22” supercomputer at Argonne being delivered in 2022. Do you have any more information about it?

Well, that’s a rather nice bonus for the vendor that has begun to offer a more competitive product line in the x86 market, with a better CPU/motherboard platform feature set as well. And exascale market growth, on top of the cloud services and AI markets, makes for even better news for all concerned.

It also looks like renewed competition in the x86 market is forcing the incumbent leader to go to wider superscalar designs as well. This widening of x86 processor cores is becoming a back-and-forth, tit-for-tat race that will most likely push x86 further toward very wide superscalar core designs in the Power8/9 mold, with single-core IPC gains continuing even though clock speeds will remain largely stagnant for the foreseeable future, despite continued process node shrinks.

That’s great for exascale, where power usage is a major consideration and higher IPC, even at the same clock rate, gets more work done. Exascale computing is all about flops per watt (GFlops/W, actually), which determines how much performance fits within the space constraints and thermal budget of tightly packed cabinets. So going to wider superscalar cores for IPC gains may be the only relatively easy way to keep performance increasing, given the diminishing returns of process node shrinks, which no longer make large clock speed increases affordable on newer-generation processors.
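The flops-per-watt argument can be made concrete with a little arithmetic. This sketch assumes a roughly 20 MW power envelope for an exascale machine, a commonly cited US target that does not come from the article, and uses hypothetical core figures (2.5 GHz, 16 vs. 32 FLOPs per cycle) to show that doubling per-cycle work doubles peak throughput at an unchanged clock.

```python
def gflops_per_watt(flops_per_second: float, watts: float) -> float:
    """Efficiency in GFlops per watt."""
    return flops_per_second / watts / 1e9

def core_peak_gflops(clock_ghz: float, flops_per_cycle: int) -> float:
    """Peak per-core throughput: clock rate times work done per cycle."""
    return clock_ghz * flops_per_cycle

EXAFLOPS = 1e18
POWER_BUDGET_W = 20e6  # ASSUMPTION: ~20 MW envelope, not a figure from the article

required = gflops_per_watt(EXAFLOPS, POWER_BUDGET_W)
print(f"Needed for 1 exaflops in 20 MW: {required:.0f} GFlops/W")  # 50 GFlops/W

# Hypothetical cores at the same 2.5 GHz clock; only per-cycle work differs
narrow = core_peak_gflops(2.5, 16)
wide = core_peak_gflops(2.5, 32)
print(f"Narrow core: {narrow:.0f} GFlops, wide core: {wide:.0f} GFlops")  # 40 vs 80
```

In practice a wider core also draws more power, so the net improvement in GFlops/W is smaller than the raw doubling of peak throughput.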

For the incumbent, it’s about maintaining those high margins; for the competitor, it’s about growing its margins a bit more, even though its current, relatively lower margins have begun to produce some earnings per share. More investment will be required to stay in the race even after IPC parity has been reached. So for cloud, HPC, exascale, and any other use, a more competitive x86 market makes it easier to achieve the exascale goal while remaining within the tighter budget constraints imposed by the funding sources and their bean counters.

Chiplet-based processor designs, where core counts can be scaled up without adding more motherboard sockets or paying for new motherboards, are a very nice option for more affordable upgrades, especially where custom cooling solutions are bespoke affairs tailored to a specific motherboard’s layout and form factor.

There are some things to consider regarding motherboard compatibility with the latest PCIe 4.0 and 5.0 standards (1), but I would want any motherboard to be certified for PCIe 5.0 signaling even if I were only using a PCIe 4.0-capable processor. That may be feasible, considering how close the PCIe 4.0 standard is to the 5.0 standard, with the speed increase being, for the most part, the only difference between the two.

All the PCI-SIG standards are backward compatible, so any extra motherboard certification expense may be well worth the cost if it improves the upgrade-cycle portion of the TCO over the lifetime of an exascale system. These systems do have upgrade options that can extract more service and extra performance from the hardware before it reaches end of life at the close of its fully amortized life cycle.
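Backward compatibility works because of link training: each end of a PCIe link advertises the speeds and widths it supports, and the link settles on the best configuration both sides share. The sketch below simplifies that outcome to the minimum of each side’s maximum generation and width; the function name is hypothetical, and real link training can also fall back to a lower speed when signal integrity is marginal, which this ignores.

```python
# Per-lane raw data rates by PCIe generation, in GT/s
GEN_RATE_GT_S = {1: 2.5, 2: 5.0, 3: 8.0, 4: 16.0, 5: 32.0}

def negotiated_link(cpu_gen: int, slot_gen: int,
                    cpu_lanes: int, slot_lanes: int) -> tuple[int, int, float]:
    """Simplified link-training outcome: the highest generation and widest
    lane count that both ends support."""
    gen = min(cpu_gen, slot_gen)
    lanes = min(cpu_lanes, slot_lanes)
    return gen, lanes, GEN_RATE_GT_S[gen]

# A PCIe 4.0 processor in a motherboard slot certified for PCIe 5.0 signaling
gen, lanes, rate = negotiated_link(cpu_gen=4, slot_gen=5, cpu_lanes=16, slot_lanes=16)
print(f"Link trains at Gen{gen} x{lanes} ({rate} GT/s per lane)")
# prints "Link trains at Gen4 x16 (16.0 GT/s per lane)"
```

A later processor upgrade to a PCIe 5.0 part would then train at Gen5 in the same slot, which is the upgrade path the comment is arguing for.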

This video (1) explains the differences between PCIe 4.0 and PCIe 5.0. I have also listed its author below, since his credentials make the video especially relevant. In short, PCIe 5.0 appears to be PCIe 4.0 with a speed bump and some very minor additional tweaks.

The author’s info, as provided in the video’s YouTube entry:

“Keysight Technologies, Inc.

Published on Jun 12, 2019

Rick Eads, member of the PCI-SIG board of directors, explains in this tutorial video the goals that the consortium wants to reach with PCI Express 5.0. A big step lies in doubling signal speeds from 16 GT/s to 32 GT/s. The video sheds light on the differences between Gen4 and Gen5 and the implications.” (1)
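The doubling from 16 GT/s to 32 GT/s translates into usable bandwidth as follows. This is a minimal sketch that assumes the 128b/130b line encoding used by both PCIe 4.0 and 5.0 and ignores packet and protocol overhead, so real-world throughput will be somewhat lower.

```python
GEN_RATES_GT_S = {"PCIe 4.0": 16, "PCIe 5.0": 32}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b: 128 payload bits per 130 bits sent

def lane_bandwidth_gb_s(rate_gt_s: float) -> float:
    """Usable bandwidth per lane, per direction, in GB/s (one bit per transfer)."""
    return rate_gt_s * ENCODING_EFFICIENCY / 8

for gen, rate in GEN_RATES_GT_S.items():
    lane = lane_bandwidth_gb_s(rate)
    print(f"{gen}: {lane:.2f} GB/s per lane, {lane * 16:.1f} GB/s for x16 (each direction)")
# PCIe 4.0: 1.97 GB/s per lane, 31.5 GB/s for x16 (each direction)
# PCIe 5.0: 3.94 GB/s per lane, 63.0 GB/s for x16 (each direction)
```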

(1) “What are the goals of PCIe 5.0 and what the differences between PCIe 4.0 and PCIe 5.0?”

https://www.youtube.com/watch?v=35Bmq_NYg6Q&feature=youtu.be