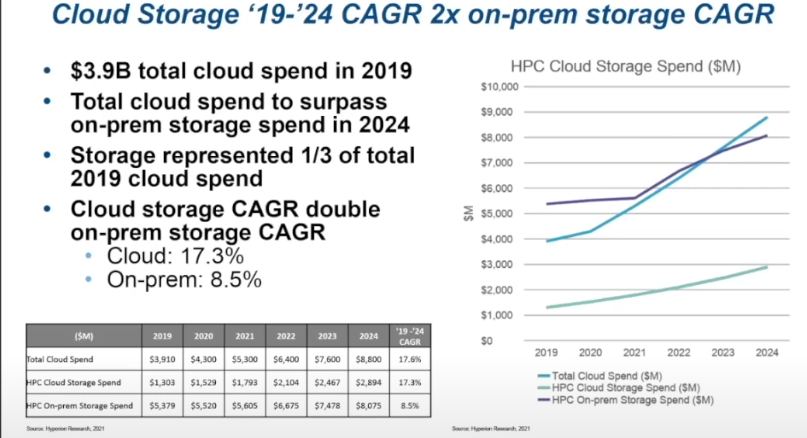

AI/ML, more sophisticated analytics, and larger-scale problems in high performance computing (HPC) all bode well for the on-prem HPC storage market, and they are an even bigger boon for cloud storage vendors.

It might be surprising, since HPC centers have been cloud-hesitant over the years, but according to Mark Nossokoff, Senior Analyst at Hyperion Research (the leading source for HPC market analysis), cloud storage is expected to grow even faster than the expanding on-prem market, reaching $3 billion by 2024 at double the growth rate of on-site storage. He points to emerging use cases with exabyte-class storage requirements, such as the UK Met Office’s weather operations, which run on Azure with over an exabyte of cloud storage backing weather simulations and research.

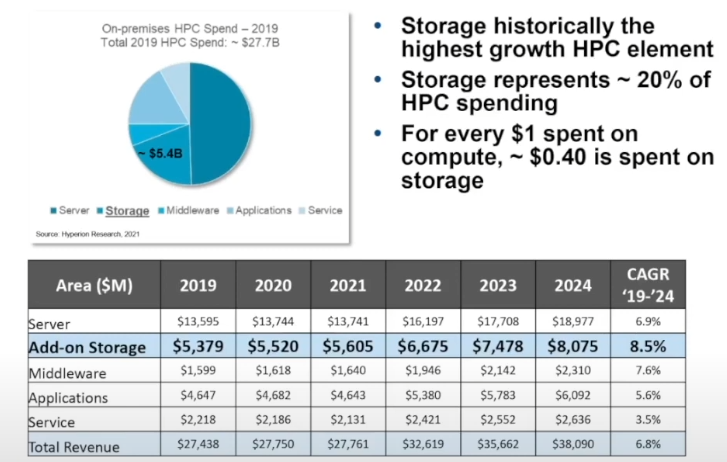

While this anticipated growth in cloud storage is striking, there will continue to be significant growth in the HPC on-prem storage market. “Storage continues to be the largest growth segment with forecast 8.5% growth over the next five years, hitting $8 billion in revenue by 2024,” Nossokoff says. “20% of overall user spend for on-prem HPC infrastructure is storage and for every dollar spent on-prem for servers, around 40 cents is on storage.”
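The quoted figures can be cross-checked with simple arithmetic. The sketch below is our own illustration, not Hyperion’s method. It assumes (the quote does not say so) that the 8.5% figure is a compound annual growth rate over 2019 to 2024, so the implied starting revenue is only indicative. It also shows that storage at 20% of spend, combined with 40 cents of storage per server dollar, implies that servers make up about half of on-prem spend. The helper names are hypothetical.

```python
# Illustrative sketch only. Assumptions (not from the source): 8.5% is a
# compound annual growth rate, and the five-year window is 2019 to 2024.

def implied_base(final_value: float, annual_rate: float, years: int) -> float:
    """Starting value that compounds to final_value at annual_rate over years."""
    return final_value / (1 + annual_rate) ** years


def implied_server_share(storage_share: float, storage_per_server_dollar: float) -> float:
    """Server share of total spend, given storage's share of total spend
    and the ratio of storage spend to server spend."""
    return storage_share / storage_per_server_dollar


base_2019 = implied_base(8.0, 0.085, 5)          # in $ billions
server_share = implied_server_share(0.20, 0.40)  # fraction of on-prem spend

print(f"Implied 2019 on-prem storage revenue: ${base_2019:.2f}B")
print(f"Implied server share of on-prem spend: {server_share:.0%}")
```

Under those assumptions, the starting point works out to roughly $5.3 billion. Servers account for about half of on-prem HPC infrastructure spend, which leaves roughly 30% for everything other than servers and storage.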

Nossokoff points to several shifts in the storage industry and among the top supercomputing sites, particularly in the U.S., that reflect changing priorities for storage technologies, especially given the mixed-file problems AI/ML workloads introduce into the traditional HPC storage hierarchy. “We’re seeing a focus on raw sequential large block performance in terms of TB/s, high-throughput metadata and random small-block IOPS performance, cost-effective capacity for increasingly large datasets in all HPC workloads, and work to add intelligent placement of data so it’s where it needs to be.”

Beyond the storage adjustments needed to serve AI/ML alongside traditional HPC, the vendor ecosystem has also shifted this year. These changes will likely affect what some of the largest HPC sites do over the coming years as they build and deploy their first exascale machines. Persistent memory is becoming more common, and companies like Samsung are moving from NVMe to CXL, an indication of where persistent memory might fit in the future HPC storage and memory stack. Vast Data, once seen as an up-and-coming player in on-prem storage hardware for HPC, has transformed into a software company, Nossokoff says.

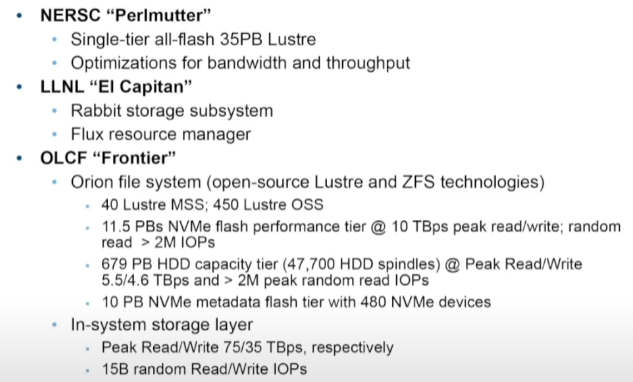

He also points to shifts in the HPC file system space, including a renewed zeal for Lustre. As we noted during our look at the 35-petabyte Perlmutter supercomputer’s storage stack, Lustre is being brought up to speed to handle massive system scale as well as the mixed workload demands of AI/ML alongside traditional HPC file types. ZFS is also being retrofitted to address mixed and emerging workloads.

Even though IBM’s system share in HPC has dropped below 4%, its Spectrum Scale (formerly GPFS) file system remains widely used in HPC because it has been a mainstay for years. Now HPE has embraced it and will give it continued life and, hopefully, a Lustre-like set of modifications to keep it relevant in the AI/ML era ahead.

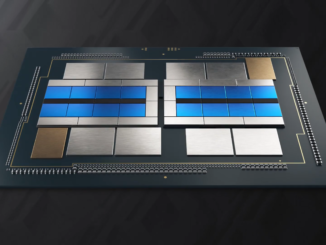

A look (above) at the scale and breadth of storage technologies behind some of the largest supercomputers reveals major investments by the labs in bolstering file systems and adopting all-flash storage, for instance, but this takes the resources of a large center to accomplish. For the rest of HPC, at the departmental level or below, the storage story looks quite different, and that may be as much a reason for the push toward higher cloud storage spending as anything else.

While flash prices have come down enough for systems like Perlmutter to take that major step, the average HPC shop is likely not making such strides. If use cases like the UK Met Office’s exabyte-class cloud storage prove compelling, they could push an even larger wave of storage toward the cloud, and at that point compute may quickly follow.
