There are two key barriers to fully exploring the emerging use of Field Programmable Gate Arrays (FPGAs) at the scale found in data centers: capital investment for private equipment, and gaining full, unfettered access to shared equipment.

Derek Chiou and his colleagues at the Texas Advanced Computing Center (TACC) are on a mission to change all that. They hope to spur groundbreaking research in applying FPGAs. Their initiative, the FPGA Research Infrastructure Cloud (FAbRIC), gives researchers access to multiple platforms so they can quantify the performance and portability of different approaches.

While FAbRIC offers unfettered access to the FPGAs, Derek was careful to explain that such access is only needed for fully exploring the use of FPGAs. Derek said, “While you can get plenty of research done running on top of a shell, you must port your code to those shell interfaces provided which can be less than ideal. In addition, you cannot do infrastructure work, that may require a different shell interface, or shell work that requires fully unfettered access.”

This concept of CPU + FPGA is different from other CPU + accelerator options because it combines highly optimized compute with reconfigurable compute that can be customized to meet a precise need. Other accelerators, including ASICs, GPUs, TPUs, and neuromorphic chips, do not offer the same level of reconfigurability as an FPGA. This is a prime area for developers and researchers to find innovative techniques for many application domains.

Thus far, FPGAs have not been a mainstream capability outside of hyperscale centers such as Microsoft and Amazon. There is great anticipation that this is changing; recent announcements of major OEM support for Intel FPGAs in OEM platforms indicate a level of confidence in this trend. Strong interest in FPGA usage in data centers comes from numerous published results showing substantial benefits from reconfigurable and customized computational designs.

Data movement: a key concern for FPGA users

Data movement is as much a challenge for FPGA systems as for any other system design. Truly remarkable results therefore depend on careful attention to communication with memory and to the interconnect between processors and FPGAs.
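To make the point concrete, here is a minimal sketch of how transfer time can erode an FPGA's raw speedup. It is an illustration, not a measurement: the function name `offload_speedup`, the simple additive model (no overlap of transfer and compute), and all the numbers in the example are assumptions chosen for clarity.

```python
def offload_speedup(bytes_moved, link_gb_per_s, cpu_seconds, fpga_seconds):
    """Speedup of offloading to an FPGA versus staying on the CPU.

    Assumes a simple model in which the data transfer and the FPGA
    computation do not overlap, so their times add.
    """
    transfer_seconds = bytes_moved / (link_gb_per_s * 1e9)
    return cpu_seconds / (fpga_seconds + transfer_seconds)


# Hypothetical kernel: 10x faster on the FPGA (1.0 s -> 0.1 s),
# but 4 GB of data must cross a link that sustains 8 GB/s.
speedup = offload_speedup(4e9, 8.0, cpu_seconds=1.0, fpga_seconds=0.1)
print(f"{speedup:.2f}x")  # prints "1.67x"
```

Under these assumed numbers, a kernel that is ten times faster in isolation delivers well under a 2x end-to-end gain, which is why control over where data lives and how it moves matters so much.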

Groundbreaking work with FPGAs must include consideration of data movement, and exploration of all the techniques that are possible. Unfortunately, public cloud access to FPGAs has been unable to offer unfettered access for exploring and experimenting with all the data movement controls possible with an FPGA. For instance, Amazon F1 instances broke new ground by offering FPGAs in the public cloud, but security and stability concerns prevent the full access that researchers may need. FPGA programmers using Amazon F1 instances have understandable restrictions that aim to protect Amazon and other users in a commercial production environment.

In addition to developers seeking to explore FPGA usage, there is a small community of researchers who want to develop effective ‘jailcells’ for FPGAs in the hope of improving public FPGA usage. Public clouds have relied heavily on hypervisors for CPU sharing, but there is no equivalent for FPGAs. One may exist someday, and some researchers hope to advance this area. Most such research requires bare-metal access to the FPGA.

FAbRIC: Access for Researchers to the Frontier of FPGA Development

Reconfigurable hardware offers performance and power efficiency, but the high cost of systems, tools, and low-level programming puts such systems out of reach of most researchers. To help solve this challenge and enable leading research, the FAbRIC (FPGA Research Infrastructure Cloud) project is working to acquire and maintain such systems and their tools at the Texas Advanced Computing Center for open use by all researchers.

“Having an open, shared resource of this kind makes some of the highest performance FPGA systems available to all researchers, regardless of their location or their ability to purchase and maintain such systems.” - Derek Chiou

The project team offers distributed classes for students and researchers to learn how to use such platforms. The project uses a default usage model of “open source to play,” which enables researchers who agree to open source their code to use the facility free of charge.

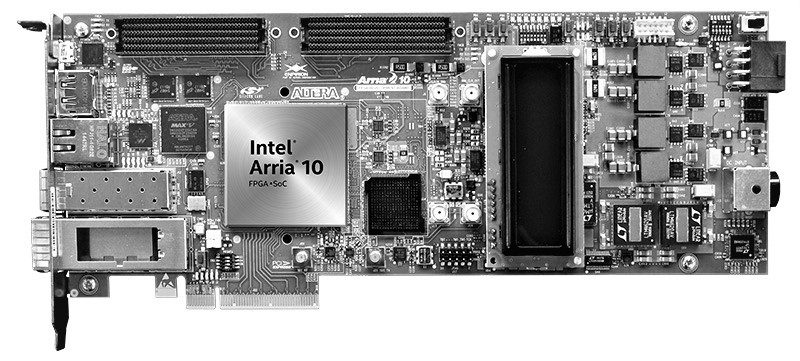

In just a few years, the project has already deployed four systems: a Microsoft Catapult cluster, an IBM POWER8+CAPI cluster, an Intel Xeon processor + FPGA cluster, and a Convey MX system.

Catapult: FPGA in the flow of data in the cloud

Project Catapult is the Microsoft initiative to transform cloud computing by augmenting CPUs with an interconnected and configurable compute layer composed of programmable silicon (FPGAs). Project Catapult began in 2010 when a small team started exploring alternative architectures and specialized hardware. They realized that FPGAs offer a unique combination of speed, programmability, and flexibility ideal for delivering cutting-edge performance and keeping pace with rapid innovation. Though FPGAs have been in use for decades, Microsoft pioneered their datacenter-scale use in cloud computing. Microsoft demonstrated that FPGAs could deliver efficiency and performance without the cost, complexity, and risk of developing custom ASICs.

Using the Catapult system design, Microsoft Azure and Bing servers are being deployed with FPGAs that sit both between the server and the data center network, and on the PCIe bus. The FPGAs help accelerate networking on Azure machines and searches on Bing machines, but could very quickly and easily be retargeted to other uses as needed.

The most recent addition for FAbRIC users is a Microsoft Catapult system consisting of 384 two-socket Intel Xeon processor-based nodes. Each node offers 64 GB of memory and an Intel/Altera Stratix V D5 FPGA with 8 GB of local DDR3 memory. The FPGAs communicate with the processors via a PCIe Gen3 x8 connection, providing up to 8 GB/s of bandwidth. Each FPGA can read and write data stored on its host node using this connection. The FPGAs are connected to one another via a dedicated network using high-speed serial links. This network forms a two-dimensional 6×8 torus within a pod of 48 servers and provides low-latency communication between neighboring FPGAs. This design supports the use of multiple FPGAs to solve a single problem, while adding resilience in the event of server or FPGA failures.
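The figures in this description can be checked with a little arithmetic. The sketch below models the 6×8 torus and the PCIe link; it is illustrative only. The (row, column) coordinate scheme and the function names are hypothetical and do not reflect how Catapult actually addresses its FPGAs. The PCIe calculation uses the standard Gen3 parameters of 8 GT/s per lane with 128b/130b encoding.

```python
ROWS, COLS = 6, 8  # torus dimensions within one pod, from the text


def torus_neighbors(row, col, rows=ROWS, cols=COLS):
    """Return the four neighbors of (row, col), following wraparound links."""
    return [
        ((row - 1) % rows, col),
        ((row + 1) % rows, col),
        (row, (col - 1) % cols),
        (row, (col + 1) % cols),
    ]


def torus_hops(a, b, rows=ROWS, cols=COLS):
    """Minimum hops between two FPGAs, taking the shorter way around each ring."""
    dr = abs(a[0] - b[0])
    dc = abs(a[1] - b[1])
    return min(dr, rows - dr) + min(dc, cols - dc)


def pcie_gen3_bandwidth_gb_s(lanes):
    """Theoretical one-direction PCIe Gen3 bandwidth in GB/s.

    8 GT/s per lane, 128b/130b line encoding, 8 bits per byte.
    """
    return lanes * 8e9 * (128 / 130) / 8 / 1e9


pods = 384 // (ROWS * COLS)          # 384 nodes at 48 per pod
print(pods)                           # 8
print(torus_neighbors(0, 0))          # [(5, 0), (1, 0), (0, 7), (0, 1)]
print(torus_hops((0, 0), (3, 4)))     # 7, the farthest pair in a 6x8 torus
print(round(pcie_gen3_bandwidth_gb_s(8), 2))  # 7.88
```

The wraparound links are what keep the worst-case distance at 7 hops instead of the 12 a plain 6×8 mesh would need, and the 7.88 GB/s theoretical figure is consistent with the "up to 8 GB/s" quoted above.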

TACC offering FAbRIC

All the FAbRIC platforms are equipped with FPGA accelerators and development servers in a cloud-based production environment. To be available for open use, FAbRIC systems are located in the Texas Advanced Computing Center (TACC) at The University of Texas at Austin. In addition to NSF support, Intel (formerly Altera), Nallatech, Xilinx, and Alpha Data have all committed FPGA/board donations.

If you qualify (start by being a university researcher), you can request access to use the FAbRIC system from the FAbRIC home page. The process starts by being a user of the Texas Advanced Computing Center. If you already have a TACC account, you are already part way there! If not, instructions on applying for a TACC account are supplied as well.

Links for Additional Reading and Follow-up

- FAbRIC home page, by Derek Chiou (sign up on this page)

- New to FPGAs? Read an Overview of FPGAs and visit the Intel FPGA Acceleration Hub

- Digging deeper on the Cloud use of FPGAs

- Microsoft Catapult: Microsoft Research Catapult home page, paper (IEEE), and video (YouTube)

- Inside the Microsoft FPGA based configurable cloud (YouTube)

- Intel FPGAs Power Microsoft Project Brainwave AI

- Supercharging Data Center Performance while Lowering TCO: Versatile Application Acceleration with FPGAs

- Microsoft* Turbocharges AI with Intel FPGAs. You Can, Too

- Intel FPGAs Bring Power to Artificial Intelligence in Microsoft Azure

- Intel Processors and FPGAs – Better Together

- Intel’s Hardware Acceleration Research Program (HARP) for researchers

- Texas Advanced Computing Center (TACC)

- Another Step Toward FPGAs in Supercomputing, by Nicole Hemsoth
