Hyperconverged storage is a hot commodity right now. Enterprises want to dump their disk arrays and find an easier, less costly way to scale the capacity and performance of their storage to keep up with application demands. Nutanix has become a significant player in a space where established vendors like Hewlett Packard Enterprise, Dell EMC, and Cisco Systems are broadening their portfolios and capabilities.

But as hyperconverged infrastructure (HCI) becomes increasingly popular and begins moving up from midrange environments into larger enterprises, challenges are becoming evident, from the need to bring in new – and at times expensive – infrastructure resources to datacenter sprawl. At the same time, organizations have to work out how to manage both their primary storage and their backup systems. A growing number of smaller startups – including ClearSky Data, Cohesity, and Rubrik – are aiming to solve some of these problems through a range of approaches, from software-defined and cloud-based storage to hybrid offerings.

Datrium belongs on that list. We first spoke with the company almost a year ago, after it had launched its DVX converged infrastructure platform (below). The platform includes software that runs on X86 systems, organized into DVX Compute Nodes and DVX Data Nodes that – in a key difference from traditional HCI – can scale independently of each other. The software can run on Datrium systems or on an enterprise’s existing X86 servers, and it echoes how blockchain works to ensure data integrity and security. The company was founded in 2012 by executives with broad experience in the storage field at vendors such as Data Domain (bought by EMC before EMC itself was bought by Dell), VMware, NetApp, IBM, Sun Microsystems, and Opsware (which was bought by Hewlett-Packard).

By the time The Next Platform first caught up with Datrium, it had launched converged primary and secondary storage systems – which handle both consolidation and backup of virtual machine (VM) workloads in a single system – and had increased scalability to up to a petabyte of capacity within a single Datrium system.

“We’ve got this architecture whereby all of your performance – both VM and IO performance – really lives on servers, and then all your durable capacity – your first copy and all subsequent copies – live in the data nodes on the network,” Craig Nunes, vice president of marketing, tells The Next Platform. “It’s very cost-effective for the data protection aspect of the platform.”

More recently, at the AWS re:Invent show last fall, Datrium pre-announced a cloud-native version of DVX, porting the software to the cloud provider and tapping into its EC2 and S3 resources to offer backup and restore as a service from Amazon Web Services. The service launched earlier this year and was followed this month by the release of DVX 4.0, which added support for Oracle RAC along with improvements to VM fault tolerance and to data and security backup. Datrium is seeing some momentum, with more than 300 DVX deployments in seven quarters and a customer list that includes Siemens, Oberto, and Osprey Packs. The list of ecosystem partners has grown from six to about 30, Nunes said.

“The fundamental thing we’re finding out there is there’s obviously a shift going on from traditional RAID-based infrastructure to something more modern in the form of X86-based architecture,” Nunes says. “HCI is the hottest thing, but when people are coming off these larger infrastructures to hyperconverged, hyperconvergence has been a tough sell as it relates to any consolidation at scale. It’s awesome for smaller workloads, for virtual desktop infrastructure, but you very quickly end up with many clusters in a traditional enterprise environment that creates a lot of management headache and pain. What we’ve positioned is, ‘Look HCI was an important step forward, but you really need to look at more of a tier-one scalable hyperconverged-like model.’ That’s precisely what we do, and we find a lot of folks who have been struggling with how to attack modernization with the HCI building blocks, and with Datrium they’re finding something that really does address what they need at scale.”

That scale continues to be an issue for enterprises that want to combine primary workloads with data protection for their backup workloads.

“When folks are thinking about it, not only do enterprises have 1,000 servers – so a bunch of eight-node clusters are going to kill them – but also the fact that we’re not just talking about storing the primary workloads and serving performance but also maintaining days, weeks, and months of snapshots, which are fundamentally synthetic full backups on our system, that need for capacity scale is big,” Nunes adds, noting the challenge of moving 150 TB of data off midrange or all-flash arrays and onto HCI. “If you were to move that straight across to hyperconverged storage, you would absolutely want to carve those up by workloads so you could size your nodes appropriately and manage them, and so forth. That’s not what anybody wants to do who’s running a Pure Storage array right now. If they want to move it to a comparable system that offers the same sorts of scale and performance characteristics that they’ve had, thus far there’s been very little choice for them but to stay with all-flash technology. This is a way forward.”
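To put rough numbers on the cluster-sprawl argument, the sketch below counts how many separately managed units 1,000 servers would form as eight-node HCI clusters, compared with systems at the 128-Compute-Node ceiling Datrium quotes for a single DVX system. It assumes, purely for illustration, that every server becomes exactly one node, and it ignores capacity, the 10-Data-Node limit, and any real sizing rules; `clusters_needed` is a hypothetical helper, not a Datrium tool.

```python
import math

def clusters_needed(servers: int, nodes_per_unit: int) -> int:
    """Separately managed units needed if every server becomes one node (hypothetical model)."""
    return math.ceil(servers / nodes_per_unit)

servers = 1_000  # the enterprise size in Nunes's example
print(clusters_needed(servers, 8))    # eight-node HCI clusters: 125
print(clusters_needed(servers, 128))  # systems at 128 Compute Nodes each: 8
```

Under those simplifying assumptions, the eight-node layout leaves 125 clusters to manage, which is the management headache Nunes is pointing at.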

The DVX system supports VMware’s ESXi and Red Hat’s KVM hypervisors, the Red Hat Enterprise Linux and CentOS operating systems, and Docker containers. A Datrium system can include up to 128 Compute Nodes and 10 Data Nodes, and users can combine the Data Nodes with their own X86 servers. Offering DVX as a service from AWS further strengthens the consolidation story Datrium is pushing.

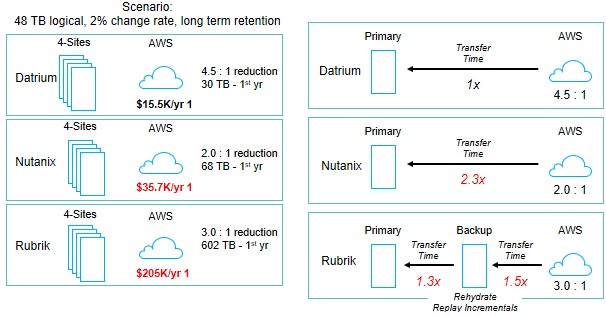

“The story with our Cloud DVX is the fact that the platform itself is very good from a data reduction perspective,” Nunes said. “On average our customers see about a three-to-one data reduction with the platform. But then, when you replicate that to cloud, Cloud DVX will again deduplicate across DVXes or across sites and we’re expecting about, on average, a 4.5 to 1 deduplication rate, so when I put something up on AWS, it’s taking less than 25 percent or 20 percent of the space of my actual data.”
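Nunes’s figures can be checked with simple arithmetic. The sketch below assumes the roughly 4.5:1 Cloud DVX ratio is measured against the original logical data rather than stacked on top of the 3:1 on-premises ratio (stacking would give 13.5:1, or about 7 percent, which does not match his 20-to-25-percent estimate). The 100 TB data set and the `footprint` helper are hypothetical.

```python
def footprint(logical_tb: float, reduction_ratio: float) -> float:
    """Physical capacity consumed by logical_tb of data at an N:1 reduction ratio."""
    if reduction_ratio <= 0:
        raise ValueError("reduction ratio must be positive")
    return logical_tb / reduction_ratio

logical = 100.0                       # hypothetical 100 TB of VM data
on_prem = footprint(logical, 3.0)     # ~3:1 average reduction on the platform
in_cloud = footprint(logical, 4.5)    # ~4.5:1 expected for Cloud DVX (assumed vs. logical data)
print(f"on-premises: {on_prem:.1f} TB ({on_prem / logical:.0%})")
print(f"in AWS:      {in_cloud:.1f} TB ({in_cloud / logical:.0%})")
```

At 4.5:1 the cloud copy occupies about 22 percent of the logical data, in line with Nunes’s estimate; at the platform’s 3:1 it occupies about a third.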

Given that AWS charges both for the capacity data occupies and for moving that data, keeping the footprint as small as possible matters financially and makes AWS more attractive for backup, not just development, he said. It also opens up more opportunities with larger enterprises that are looking to move away from traditional storage arrays to reduce costs and improve scalability and agility.

“If you call what we are doing hyperconverged – and architecturally it’s a little different, but trying to keep it simple for folks who are trying to figure the world out – hyperconverged as we know it today is pretty much dominated by the entry, smaller configurations,” Nunes said. “But where the $20 billion array market lives is mostly above that, and if they’re to modernize in an X86 way that facilitates consolidation with low-latency mission-critical performance, there’s a huge opportunity, a huge gap to fill for folks looking to take the next step.”

Datrium co-founder Sazzala Reddy, who was also a co-founder of Data Domain, recently raised the blockchain comparison during a customer meeting. The technology grew out of the cryptocurrency space but is now being pushed by the likes of IBM and HPE as a way of improving security, data integrity, and trust in online transactions. It uses distributed, cryptographically linked ledgers to keep track of data and transactions across geographically dispersed sites, cryptographic hashes to ensure immutability and data integrity, and a content-addressed system to store the hashed units.
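The integrity property borrowed from blockchain comes from hash chaining: each entry’s hash covers the previous entry’s hash, so altering any record invalidates everything after it. The sketch below shows only that principle using Python’s standard `hashlib`; it has no distribution or consensus, it is not Datrium’s code, and the names `Entry`, `append`, `verify`, and the sample events are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, replace

GENESIS = "0" * 64  # placeholder "previous digest" for the first entry

@dataclass(frozen=True)
class Entry:
    payload: str
    prev_digest: str
    digest: str

def entry_digest(payload: str, prev_digest: str) -> str:
    # Hashing the payload together with the previous digest makes each entry
    # commit to the entire history before it.
    data = json.dumps({"payload": payload, "prev": prev_digest}, sort_keys=True)
    return hashlib.sha256(data.encode()).hexdigest()

def append(chain: list[Entry], payload: str) -> None:
    prev = chain[-1].digest if chain else GENESIS
    chain.append(Entry(payload, prev, entry_digest(payload, prev)))

def verify(chain: list[Entry]) -> bool:
    """Recompute every digest and link; any edited entry breaks the chain."""
    prev = GENESIS
    for entry in chain:
        if entry.prev_digest != prev or entry.digest != entry_digest(entry.payload, prev):
            return False
        prev = entry.digest
    return True

# Usage: record three hypothetical events, then tamper with the middle one.
ledger: list[Entry] = []
for event in ["write block A", "replicate to site B", "snapshot vm-01"]:
    append(ledger, event)
print(verify(ledger))  # True
ledger[1] = replace(ledger[1], payload="replicate to site C")
print(verify(ledger))  # False
```

Even if a tamperer also recomputed the edited entry’s digest, the next entry’s stored `prev_digest` would no longer match, so verification would still fail.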

In a system like Datrium’s DVX platform – like in blockchain – data integrity is important, Nunes explains.

“The core construct of our approach is that we have used that crypto-hash algorithm for parts of data within a VM,” he said. “A VM is basically a list of crypto-hashes in our architecture, so a given VM is immutable. If a data block is unknowingly changed or corrupted, we know about it. In fact, we’re able to inspect those hashes multiple times a day to make sure something hasn’t happened to affect the integrity of that VM. Those hashes are at the core of our content-address file system and we actually leverage those hashes to provide dedupe within the system. Then we apply these data ledger concepts to when we move data from one site to another or one cloud to another because it will inspect what’s been sent and written and compare the crypto-hashes on either side and confirm that what was sent was what was written remotely.”
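The mechanics Nunes describes (a VM as a list of crypto-hashes, deduplication by hash, periodic re-hashing to catch corruption, and comparing hashes on both sides of a replication) can be sketched with Python’s standard `hashlib`. This is a minimal illustration, not Datrium’s implementation: the fixed 4 KB block size, SHA-256, and the names `BlockStore`, `write_vm`, `read_vm`, and `replicate` are hypothetical choices made for the example.

```python
import hashlib

BLOCK_SIZE = 4096  # hypothetical fixed block size; real systems may chunk differently

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

class BlockStore:
    """Content-addressed store: each unique block is kept once, keyed by its SHA-256 digest."""

    def __init__(self) -> None:
        self.blocks: dict[str, bytes] = {}

    def put(self, block: bytes) -> str:
        digest = sha256_hex(block)
        self.blocks.setdefault(digest, block)  # a duplicate block adds no new data (dedupe)
        return digest

    def scrub(self) -> list[str]:
        """Re-hash every stored block and return the digests whose content no longer matches."""
        return [d for d, b in self.blocks.items() if sha256_hex(b) != d]

def write_vm(store: BlockStore, image: bytes) -> list[str]:
    """Represent a VM image as the ordered list of its block digests."""
    return [store.put(image[i:i + BLOCK_SIZE]) for i in range(0, len(image), BLOCK_SIZE)]

def read_vm(store: BlockStore, vm: list[str]) -> bytes:
    """Reassemble the image by looking each digest up in the store."""
    return b"".join(store.blocks[d] for d in vm)

def replicate(src: BlockStore, dst: BlockStore, vm: list[str]) -> bool:
    """Copy only blocks the target lacks, then re-hash on the target to confirm
    that what was written matches the digests that were sent."""
    for d in vm:
        if d not in dst.blocks:
            dst.blocks[d] = src.blocks[d]
    return all(sha256_hex(dst.blocks[d]) == d for d in vm)

# Usage: a four-block image in which three blocks are identical.
store = BlockStore()
image = b"A" * BLOCK_SIZE * 3 + b"B" * BLOCK_SIZE
vm = write_vm(store, image)
print(len(vm), len(store.blocks))      # 4 2: four references, two stored blocks
remote = BlockStore()
print(replicate(store, remote, vm))    # True
store.blocks[vm[0]] = b"bit rot"       # simulate silent corruption on the source
print(store.scrub() == [vm[0]])        # True: the scrub flags the damaged block
```

Because a VM here is just a list of digests, changing any data produces a new list rather than modifying the old one, which is the sense in which a given VM version is immutable. And because replication transfers only digests the target does not already hold, the same hashes that provide integrity also reduce what has to be sent.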

Datrium didn’t cut and paste the blockchain algorithm, but the DVX platform does leverage the principles of the technology for end-to-end data integrity, Nunes says.
