Amazon Web Services essentially sparked the public cloud race a dozen years ago when it launched the Simple Storage Service (S3) and then in short order the Elastic Compute Cloud (EC2) service, giving enterprises access to the kind of large-scale compute and storage resources that its giant retail business leaned on.

Since that time, AWS has grown rapidly in the number of services it offers, the number of customers it serves, the amount of money it brings in and the number of competitors – including Microsoft, IBM, Google, Alibaba, and Oracle – looking to chip away at Amazon’s dominant 44 percent market share.

Given the growth and the number of top-tier vendors taking aim, many in the industry have wondered when AWS would start to tail off, whether through slowing revenue or customer growth or through competitors looming larger in the rear-view mirror. That day will most likely come, but it hasn’t happened yet. As we reported in The Next Platform, AWS now has more than a million organizations running part or all of their businesses on its cloud. That includes Cox Automotive and Shutterfly, both of which said this week that they are migrating most of their infrastructure to the cloud provider. For Cox – whose 20 or so brands include AutoTrader, Kelley Blue Book, and Dealer.com – that means closing 40 datacenters and moving those workloads onto AWS. Shutterfly is putting its 75 PB image library onto the cloud. As of the fourth quarter of 2017, AWS had an annual run rate of more than $20 billion, which was a 45 percent year-over-year increase. The company continues to see accelerating growth.
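For readers who want to unpack the run-rate figure, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope illustration, not AWS's own accounting: it assumes the usual convention that an annual run rate is the latest quarter's revenue multiplied by four, and it treats the "more than $20 billion" figure as a flat $20 billion, so the results are rough lower bounds. The function name `implied_prior_value` is ours.

```python
def implied_prior_value(current: float, yoy_growth: float) -> float:
    """Back out last year's value from this year's value and the YoY growth rate."""
    return current / (1.0 + yoy_growth)


# Figures from the article, in billions of dollars (rounded down to a flat 20).
run_rate_now = 20.0
yoy_growth = 0.45

# Assumption: run rate = latest quarterly revenue x 4.
quarterly_revenue_now = run_rate_now / 4
run_rate_year_ago = implied_prior_value(run_rate_now, yoy_growth)

print(f"Implied quarterly revenue: about ${quarterly_revenue_now:.1f} billion")
print(f"Implied run rate a year earlier: about ${run_rate_year_ago:.1f} billion")
```

Under those assumptions, the business added roughly $6 billion in annualized revenue in a single year.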

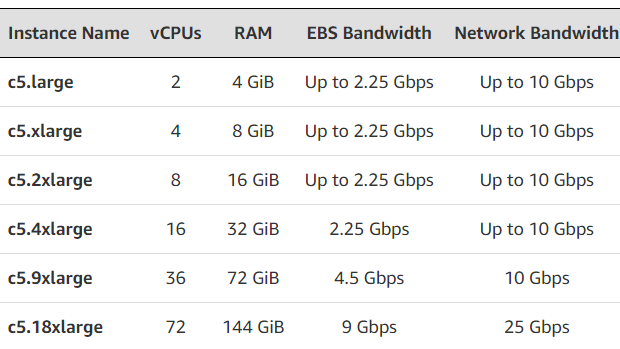

Certainly AWS has been helped along by rising trends within the industry, from the growth of data to the rise of the Internet of Things (IoT) to a reinvigorated push around artificial intelligence (AI) and machine learning, all of which have increased demand among enterprises, financial services firms, research institutions and government agencies for the kind of IT scale that would be too expensive to buy themselves but which is available through the public cloud. At the same time, AWS engineers have been aggressive in rapidly growing the number of services, tools and compute instances (as seen below) that are available through the company, an expansion that was on display this week at the AWS Summit in San Francisco.

In front of more than 9,000 attendees at the event, Werner Vogels, vice president and chief technology officer, and Matt Wood, general manager of AI and deep learning, unveiled a range of services – some just announced and others that are now available after being hinted at in the past – that touched on everything from AI and machine learning to database, storage and security.

“Machine learning is experiencing a renaissance in the cloud,” Wood said. “Customers from virtually every industry – tens of thousands of app developers – are using machine learning today across virtually every industry and across every size of every organization. So tens of thousands of customers are running machine learning on AWS. But for many companies, it’s pretty early, so what we’ve had to do over the past five to ten years at AWS is build an entirely new software stack to drive machine learning for customers.”

That has included providing open source frameworks such as TensorFlow and MXNet as well as SageMaker, introduced last year as a managed service that customers can use to build, train and host custom models in the cloud and then deploy them for inference and at the edge. Developers can choose their algorithms and leverage a model chosen by SageMaker that best fits their needs, and then they can run whatever data they want. For organizations that don’t want to build their own custom models, AWS offers a range of machine learning services that touch on everything from natural language (Comprehend) and speech (Polly) to video (Rekognition Video) and chat (Lex). The company last year previewed two other services, Transcribe (voice into text) and Translate (exactly what the name suggests), and Wood said that they are now generally available.

AWS also introduced the latest stable versions of TensorFlow (1.6) and MXNet (1.1), which will be available in SageMaker. The service uses containers to house its algorithms, and customers can bring their own machine learning algorithms to it via containers. In another move, AWS is “taking the containers that we use under the hood to drive this first-class support and making them open source, and this allows you to take those containers, customize them, add any packages and methods and models, and put them back into SageMaker – and with those same 20 lines of Python code, you can drive the training at basically any scale,” Wood said.

In addition, Wood introduced a local mode for SageMaker, enabling developers to use their local notebooks to train machine learning models before deploying them for training on notebook instances.

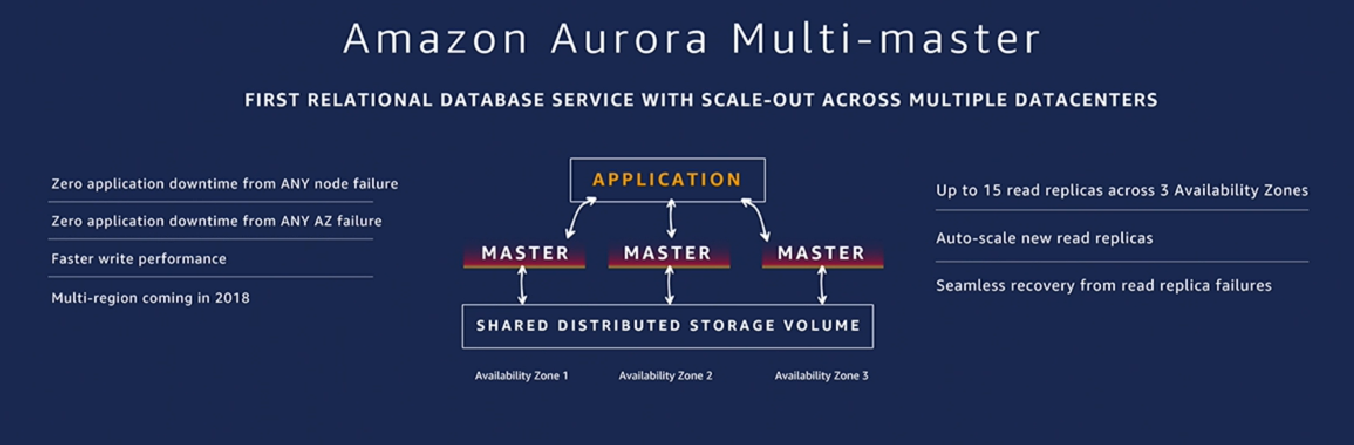

AWS is also expanding the capabilities within its Aurora relational database, which now has tens of thousands of users, more than double the number of a year ago. The database is compatible with both MySQL and PostgreSQL and, according to Vogels, is the fastest-growing service in the history of the company. Adding to the service, AWS unveiled Aurora Serverless – serverless computing for the database – and Aurora Multi-Master, which enables customers to scale database write operations across multiple datacenters to improve scalability and availability.

“Data is still at the core of what most of our businesses do,” Vogels said. “One of the things that cloud computing has done is [give us] access to the same computers. We all have access to the same storage. We all have access to the same algorithms. So what makes our businesses unique now is the data that we have, and the quality of the data and how we operate on that data. That makes the storage for our data increasingly important, because that is really where the pot of gold of your business sits.”

Much of that data doesn’t sit in data lakes, but instead in databases, which are coming over during the migration to the cloud, he said. In two years, the company has migrated more than 60,000 databases through its Database Migration Service.

In terms of security, AWS announced AWS Secrets Manager, through which organizations can store and retrieve application secrets – think passwords, API keys or database credentials. Customers can access Secrets Manager through either an API or a CLI. The tool means that developers no longer have to embed secrets in their code, enabling them to build more secure systems, Vogels said.
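To make the idea concrete, here is a minimal Python sketch of code fetching database credentials at run time rather than carrying them in source. It is an illustration under stated assumptions, not AWS's reference code: the helper `load_db_credentials`, the stand-in `FakeSecretsClient`, and the secret name `prod/db` are all hypothetical, and the secret is assumed to be stored as a JSON string. The only service call it relies on is `get_secret_value(SecretId=...)`, which the boto3 Secrets Manager client exposes and which returns text secrets in a `SecretString` field.

```python
import json


def load_db_credentials(client, secret_id):
    """Fetch a JSON-encoded secret and return it as a dict.

    `client` is expected to behave like boto3.client("secretsmanager");
    only its get_secret_value(SecretId=...) method is used.
    """
    response = client.get_secret_value(SecretId=secret_id)
    # Text secrets come back in the "SecretString" field of the response.
    return json.loads(response["SecretString"])


class FakeSecretsClient:
    """Hypothetical stand-in so the sketch runs without AWS access."""

    def __init__(self, secrets):
        self._secrets = secrets

    def get_secret_value(self, SecretId):
        return {"Name": SecretId, "SecretString": self._secrets[SecretId]}


# Example run against the stand-in; the values are placeholders.
fake = FakeSecretsClient(
    {"prod/db": '{"username": "app", "password": "example-only"}'}
)
creds = load_db_credentials(fake, "prod/db")
print(creds["username"])
```

Against the real service, the same helper would be called with `boto3.client("secretsmanager")` in place of the stand-in, assuming boto3 is installed and AWS credentials and a region are configured. Passing the client in as a parameter keeps the lookup logic testable without network access.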

Also launched was Firewall Manager, which gives customers greater control over security policies and helps them detect and fix compliance issues. Private Certificate Authority, a new feature in AWS Certificate Manager, enables customers to manage certificates in a pay-as-you-go fashion, sharply reducing the cost in money and time of using certificates.
