Absorbing a collection of new processing, memory, storage, and networking technologies quickly into a complex system is no easy task for any system maker or end user creating their own infrastructure, and it takes time even for a big company like Oracle to gather all the pieces and weld them together seamlessly. But the time it takes is shrinking, and Oracle has absorbed a slew of new tech in its latest Exadata X6-2 platforms.

The updated machines are available only weeks after Intel launched its new “Broadwell” Xeon E5 v4 processors, which have been shipping to hyperscalers and cloud builders for the past four months or so and which were originally anticipated to launch around last September or so, a year after the launch of their predecessor “Haswell” Xeon E5 v3 chips. The Broadwell chips deliver somewhere between 20 percent and 45 percent better performance on various workloads, and with faster memory and more cores should deliver a substantial performance boost per node in the Exadata clusters, which employ Oracle’s Linux variant, its 12c in-memory database, its Real Application Clustering (RAC) for gluing together distributed databases, its Exadata storage servers for accelerating database queries, and of course a mix of disk and flash memory and InfiniBand networking to accelerate all of that software.

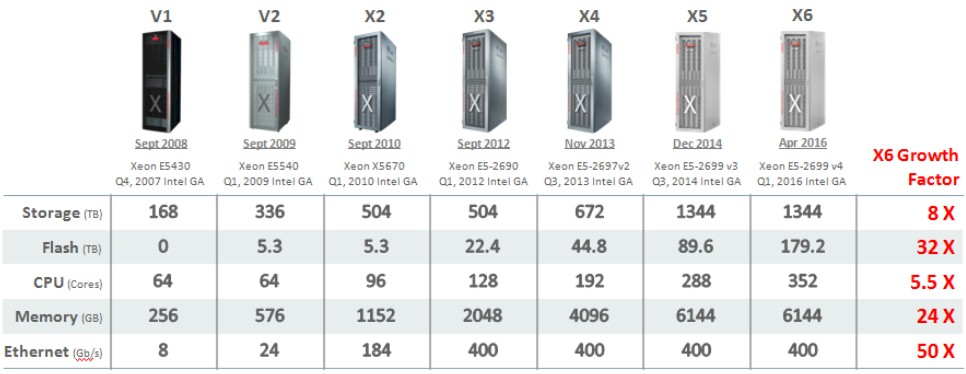

The Exadata X6-2 is the seventh generation of homegrown Oracle database machines, the first of which was announced back in September 2008 in a partnership with Hewlett-Packard Enterprise to much fanfare because it expressed Oracle’s desire to engineer a complete hardware-software stack and to deliver, as what is in essence an appliance, what had previously been cobbled together by customers or partners. After Oracle moved to buy Sun Microsystems in early 2009 (which it accomplished a year later), it shifted to Sun iron for the Exadata clusters and over the past eight years has kept up a pretty steady pace of upgrades on the systems. But Exadata is about more than just having a preconfigured system that is easier to install. The idea is to have a complete software stack supported by Oracle that is cheaper for both Oracle and customers to support and to use the collective experience of all Exadata customers (including Oracle itself) to improve the operation and speed of patching of the collective system.

This is, in fact, the right way to go about building such a complex system and one that mirrors the way that Cray, SGI, and IBM have always built their capability-class supercomputers. To a certain way of looking at it, you can think of an Exadata as a breed of supercomputer with its own parallel file system designed to speed up SQL accesses by prechewing data in parallel down where it is stored and sending summary results back up to database nodes, which think they are doing all the work. In the Exadata machine, the database and its storage engines are both parallelized, and they are parallelized independently, so each can be scaled as needed for both capacity and performance for different aspects of database processing.

In the early years, it took Oracle quite some time to get new processing technologies into the field with the Exadata, somewhere between 8 and 12 months, depending on the generation. But now Oracle is more tightly engaged with Intel (as are other hyperscale and HPC customers) and the gap between when Intel has launched a new Xeon and when it is available in the Exadata iron is essentially zero.

Just like with HPC clusters running simulation and modeling workloads, customers do not have to upgrade all of the nodes in an Exadata cluster so that every node is running exactly the same hardware in order to run a parallel database. In the past, with parallel databases, this was not always the case, and there may still be good reasons to upgrade to new iron to get balanced performance out of a cluster. But it is not a requirement. Furthermore, the current Exadata software stack that came out with the X6-2 systems – including the 12c database, RAC, and Exadata storage servers – is compatible with the previous six generations of Exadata iron. Oracle may not make every future release available on early Exadata iron going forward, of course. But for now, this is the case, and incidentally, customers can mix and match different database and Exadata releases on their iron, too. All of this allows customers to choose when and how to upgrade both the software and the hardware in their database clusters.

The interesting bit for us and the important thing for customers is that Oracle has been able to scale up the performance of a rack of machines over those many years and has, by and large, kept its prices for a rack of Exadata fairly constant. Those of you who buy Oracle software know that the company generally sets a price and, at least at the list price level, doesn’t change it very frequently. So most of the Moore’s Law improvements in the system are therefore converted directly to increases in price/performance on behalf of customers. Oracle is making a bet that customers will be happy and add racks and racks more as their applications, and therefore their databases, grow and that it will be able to make it up in volume. With thousands of customers and the Exa line driving billions of dollars in sales per year, say what you will, it is working even if it is not taking over the world.

The capacity increases are quite large on the cores, memory, flash, storage, and networking vectors, as shown in the table above. And we think with next year’s “Skylake” Xeon E5 v5 processors and possibly the addition of 3D XPoint main memory and SSDs to Exadata X7 clusters, the jump in core counts will be modest (about 27 percent, from 22 cores per socket with the new X6-2 machines to 28 cores per socket with the X7-2s), but the boost in non-volatile memory could be substantial. We also think that the InfiniBand interconnect could get a boost. As far as we know, Oracle is using Mellanox InfiniBand SwitchX ASICs in its X6-2 clusters, but it is working on its own InfiniBand chips running at 100 Gb/sec speeds and could very well use these in the next iteration of the Exadata machines.

For the database servers in the Exadata X6-2 clusters, the nodes are configured with Xeon E5-2699 v4 processors, which have 22 cores running at 2.2 GHz and which are the priciest versions on the public SKU list from Intel. The nodes come with 256 GB of main memory (which can be expanded to 768 GB) and have four 600 GB 10K RPM disk drives for local data storage (expandable to eight drives). These database nodes do not have any flash storage, and they each have two 40 Gb/sec InfiniBand ports to link to storage servers and five 10 Gb/sec Ethernet ports to link to the outside world.

The Exadata storage servers come in two flavors, but both are based on a pair of ten-core Xeon E5-2630 v4 processors running at 2.2 GHz. The High Capacity (HC) variant has four PCI-Express flash cards with 3.2 TB of capacity each plus a dozen 8 TB 7.2K RPM drives (the ones filled with helium to make them heat up less) for database storage. The Extreme Flash (EF) variant of the Exadata storage server has no disk drives at all and has eight PCI-Express flash drives, each with the same 3.2 TB of capacity. The Flash Accelerator F320 cards that Oracle has manufactured on its behalf employ 3D NAND flash – Samsung is supplying the chips – and they make use of the NVM-Express software stack over PCI-Express to more directly link the flash to the processing complex in the storage servers.

The Exadata engineered system is not a static machine, but one that can have different numbers of database and storage nodes depending on the needs of the workload. The specs allow for anywhere from 2 to 19 database servers and from 3 to 18 storage servers per rack, which works out to up to 836 cores and 14.6 TB of memory for database and up to 360 cores and either 460 TB of flash or 1.7 PB of disk per rack. With the hybrid columnar compression that Oracle invented for its database way back in 2008 with the original Exadata iron, Oracle has made improvements such that it can now get somewhere between 10X and 15X data compression ratios for databases, which allows it an effective capacity of somewhere between 4.6 PB and 25.5 PB, depending on the media and the compression it achieves.
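The per-rack maximums above follow from simple multiplication of the per-server specs, and a minimal sketch of that arithmetic is shown here. It assumes two sockets per database server (inferred from the quoted core totals, not stated outright), decimal units (1 TB = 1,000 GB), and the fully expanded 768 GB memory option; the function and constant names are illustrative only.

```python
# Per-rack limits and per-server specs quoted in the text (X6-2 generation).
MAX_DB_SERVERS = 19
MAX_STORAGE_SERVERS = 18
DB_CORES_PER_SERVER = 2 * 22       # assumption: two 22-core sockets per database server
DB_MAX_MEMORY_GB = 768             # expanded memory configuration
STORAGE_CORES_PER_SERVER = 2 * 10  # a pair of ten-core processors
EF_FLASH_TB_PER_SERVER = 8 * 3.2   # Extreme Flash: eight 3.2 TB flash drives
HC_DISK_TB_PER_SERVER = 12 * 8     # High Capacity: twelve 8 TB disk drives


def rack_maximums():
    """Return the largest per-rack totals implied by the quoted specs."""
    return {
        "db_cores": MAX_DB_SERVERS * DB_CORES_PER_SERVER,
        "db_memory_tb": MAX_DB_SERVERS * DB_MAX_MEMORY_GB / 1000,
        "storage_cores": MAX_STORAGE_SERVERS * STORAGE_CORES_PER_SERVER,
        "ef_flash_tb": MAX_STORAGE_SERVERS * EF_FLASH_TB_PER_SERVER,
        "hc_disk_tb": MAX_STORAGE_SERVERS * HC_DISK_TB_PER_SERVER,
    }


def effective_capacity_pb(raw_tb, compression_ratio):
    """Effective capacity in PB for a raw capacity in TB and a compression ratio."""
    return raw_tb * compression_ratio / 1000


if __name__ == "__main__":
    totals = rack_maximums()
    for name, value in totals.items():
        print(f"{name}: {value:,.1f}")
    low = effective_capacity_pb(totals["ef_flash_tb"], 10)
    high = effective_capacity_pb(totals["hc_disk_tb"], 15)
    print(f"effective capacity: {low:.1f} PB to {high:.1f} PB")
```

The unrounded disk total is 1,728 TB, so the upper bound computed this way comes out slightly above the 25.5 figure quoted above, which corresponds to rounding the raw disk capacity to 1.7 PB before applying the 15X ratio.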

That compression – and the ability to do scans and queries on compressed data and to move around compressed data within the cluster – is a key component of affordability of the Exadata machine. Without such aggressive compression, Oracle would not be able to provide a step function in performance and price/performance over running big relational databases on more commonplace NUMA iron like its own Sparc M series or IBM’s Power Systems servers. (We do not believe that new customers are buying HPE’s Itanium-based Superdome Integrity servers, but these could also run Oracle databases on Unix and were once a popular platform on which to do so.)

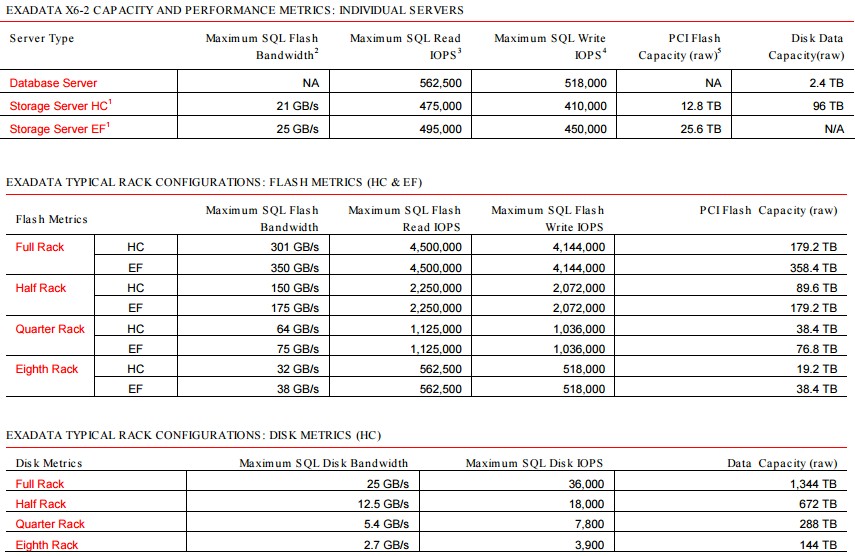

Here are some generic performance specs on the Exadata X6-2 clusters:

Oracle is not supplying a lot of benchmark data for the Exadata X6-2 machines, but says that on a setup with eight database servers and fourteen all-flash storage servers (a full rack), the system can do 350 GB/sec of analytical scans from SQL on the database and that it can achieve a 0.25 millisecond I/O latency with the flash handling an aggregate of 2.4 million I/O operations per second (IOPS). The disk variant is no slouch by the way, so don’t think you need to be afraid of disk. The HC variant (which has some flash to accelerate performance) could handle 301 GB/sec of analytic scan bandwidth with 2 million IOPS out of the flash across the rack and the same 0.25 millisecond average latency for the SQL queries. So going all flash only boosted the database throughput by 16.3 percent and the IOPS by 20 percent. It is a testament to the engineering that Oracle has done in the Exadata system that all flash does not boost performance by all that much.
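The relative gain from going all flash can be recomputed directly from the figures quoted above. The short sketch below does that; the helper name `percent_uplift` is illustrative, and the inputs are the rounded numbers from the text rather than any independently verified benchmark data.

```python
def percent_uplift(new, old):
    """Percentage by which `new` exceeds `old`."""
    return (new / old - 1.0) * 100.0


# Full-rack figures as quoted in the text.
EF_SCAN_GBPS, HC_SCAN_GBPS = 350.0, 301.0  # analytic scan bandwidth, GB/sec
EF_IOPS, HC_IOPS = 2.4e6, 2.0e6            # flash I/O operations per second

if __name__ == "__main__":
    print(f"scan bandwidth uplift: {percent_uplift(EF_SCAN_GBPS, HC_SCAN_GBPS):.1f}%")
    print(f"IOPS uplift: {percent_uplift(EF_IOPS, HC_IOPS):.1f}%")
```

Because the IOPS inputs are rounded to one or two significant figures, the computed IOPS uplift is only as precise as those inputs.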

If Oracle were charging a premium for the all-flash Exadata EF storage nodes compared to the HC nodes, this would be a big issue. But, as it turns out, each Exadata storage node, whether EF or HC, costs the same $48,000, according to the company’s public price list. (Yes, unlike all of its competitors, Oracle publishes its prices for its Exa line of engineered systems, which includes Exalogic machines for application servers and Exalytics machines for data analytics.) The Exadata storage servers do not include the licenses for the Exadata storage software, which costs $10,000 per node. The database servers – which include Linux but which do not have licenses for the Oracle databases – cost $40,000 a pop. A full Exadata X6-2 rack as outlined above in the benchmark tests costs $1.1 million. Premium support for the systems costs 12 percent of the list price of the iron per year, plus another 8 percent per year to support the underlying operating system.

Adding in the cost of licensing for the database and storage software can radically increase the price of the system, obviously. But Oracle database software is not free on any other hardware, either. The 12c Enterprise Edition database costs $47,500 per core for a perpetual license, and even with a 0.5 processor scaling factor that chops that price in half on the Xeon chips, a rack of Exadata with eight database servers has 352 cores to license at a cost of $8.36 million, plus another $140,000 for the Exadata storage software on a rack with 14 of the storage servers. The software bill is 7.7X higher than the hardware bill. Just what you would expect, in fact, from Oracle.
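The software-to-hardware ratio above can be reproduced with a few lines of arithmetic. The sketch below uses the article's list prices and its per-node storage software figure as defaults; the function name and the `licenses_per_storage_server` parameter are illustrative additions, included so that a different licensing unit (such as the per-drive basis raised by readers below) can be substituted without changing the code.

```python
def software_bill(db_servers=8, cores_per_server=44, price_per_core=47_500,
                  core_factor=0.5, storage_servers=14,
                  storage_license_price=10_000, licenses_per_storage_server=1):
    """Estimate list-price software licensing for one rack.

    Defaults follow the figures in the text; licenses_per_storage_server=1
    models the per-node assumption and is a hypothetical knob, not an
    Oracle pricing term.
    """
    licensed_cores = db_servers * cores_per_server
    database = licensed_cores * core_factor * price_per_core
    storage = storage_servers * licenses_per_storage_server * storage_license_price
    return {
        "licensed_cores": licensed_cores,
        "database": database,
        "storage": storage,
        "total": database + storage,
    }


if __name__ == "__main__":
    HARDWARE_LIST_PRICE = 1_100_000  # full rack, as quoted in the text
    bill = software_bill()
    print(f"cores: {bill['licensed_cores']}")
    print(f"database licenses: ${bill['database']:,.0f}")
    print(f"storage software: ${bill['storage']:,.0f}")
    print(f"software / hardware: {bill['total'] / HARDWARE_LIST_PRICE:.1f}X")
```

Calling `software_bill(licenses_per_storage_server=12)` models twelve per-drive licenses on each HC storage server instead, which raises the storage software line considerably.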

>>>The Flash Accelerator F320 cards that Oracle has manufactured on its behalf employ 3D NAND flash – the company does not reveal who is supplying the chips>>>

Oracle’s website clearly states it is Samsung:

https://docs.oracle.com/cd/E65386_01/html/E65387/z40001d91389212.html#scrolltoc

https://docs.oracle.com/cd/E65386_01/html/E65387/gokdw.html#scrolltoc

3D V-NAND chips more specifically, from Samsung (as you state)

“plus another 8 percent per year to support the underlying operating system.”

Oracle does not charge additional support for OS and virtualization on Exadata or any Oracle HW.

In your last example, the storage server software is $10,000 per drive (for HC) – so for 14 storage servers it’s not an additional $140,000 but another $1,120,000

Regarding your statement in the article above: “The Exadata storage servers do not include the licenses for the Exadata storage software, which costs $10,000 per node.”

Note that, per my understanding, Exadata Storage Server Software licenses are charged on a per-drive basis (i.e. HDD = $10K; SSD = $20K)…not “per node” – please see page-3 at http://www.oracle.com/us/corporate/pricing/exadata-pricelist-070598.pdf.

I want to add the fact that each node has 4 (not 5) 10GbE ports to connect to outside networks.

The 12% of the HW price, which represents Premier Support, covers support of HW+OS.

The all flash (EF) storage cells are $20k list per PCI Flash card and there are 8 per storage cell –> $160k per storage cell and that times 14 in a full standard rack is: $2,240,000 at list.

The Flash/spinning disk (HC) storage cells are $10k per disk and there are 12 disks per storage cell –> $120k per storage cell and that times 14 in a full standard rack is: $1,680,000 at list.

Typical maintenance per year is then 22% of that $$.