One look at the architectural ambitions for next-generation supercomputers in the United States versus those in Europe reveals an expected, but no less striking, emphasis on ARM systems for high performance computing applications.

While ARM in high performance computing is still emerging (in part because 64-bit ARM processors have not been around for long), Europe is pushing a great deal of investment toward its native processor powerhouse. Elsewhere in that ecosystem, Qualcomm, Cavium, Applied Micro, AMD, and others are swiftly moving beyond small devices and into hyperscale datacenters, a trend that we expect will play out in full force beginning this year. In the U.S., the next-generation pre-exascale and future systems are already being cobbled together in theory to include OpenPower and X86 architectures with various accelerators and all of the future interconnect, memory, and storage elements coming down the line this year and beyond. For Europe, however, there’s a broader view for architectures, and it’s one that will take some legwork, on the software side in particular. The hardware story is far easier to piece together given what we already understand about how the handful of major ARM vendors and their integration partners are tackling the market with cores and capabilities.

What all of this means is that there will need to be a broad push to build an ecosystem, especially for a segment as niche (and important) as HPC. We have already reported in depth about new projects featuring ARM, including the Mont Blanc project at the Barcelona Supercomputing Center, the potential for ARM to tackle data-intensive workloads at CERN, work at the UK’s Hartree Centre using Lenovo ARM machines, and efforts to firm up the code base for future HPC applications running on the ARM architecture, among others. One can expect that more projects supported by Europe’s Horizon2020 program will continue to push new use cases in HPC to the fore, including, most recently, the ExaNest project.

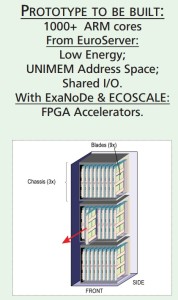

The goal of ExaNest under the Horizon2020 FET-HPC funding program is to develop and test new and emerging technologies in interconnects, storage, and cooling, which in this case apply to ARM-based machines. While the first prototype won’t be incredibly large, ExaNest coordinator (and professor of computer science at the University of Crete) Manolis Katevenis tells The Next Platform, it will set the stage for an ARM-focused view into what European pre-exascale and exascale machines might look like. The system, which sports a still inexact number of ARM cores (more than 1,000 ARM cores is the official word, which means around 40 nodes or so is our guess), will be designed by members of the related ExaNode team based out of the University of Manchester, which is focused on developing ARM-based HPC microserver designs.

“ARM solves the problem, or at least goes a long way toward that end, of reducing energy consumption,” Katevenis says. “Once that is done, it’s possible to put the processors closer to each other and when that’s done, and cooling has been improved, that means far more processors in the total energy budget.” As core counts increase according to plan (eventually up to 96 per chip), the work done on the research side will continue to evolve, notes Katevenis. The overall package the ExaNest team is developing also covers other elements, including in-node storage, different interconnect strategies, and the potential for Xilinx FPGAs to fit into future production systems, but for now, hardware-level tweaks to bolster power efficiency are at the core.

ExaNest partner and cooling vendor Iceotope is providing the fully immersed cooling solution for the prototype and will be developing its next generation of technology with the team to target up to 240 kW per rack, up from the existing 60 kW per rack. The ExaNest project has gathered several other collaborators for different aspects of the prototype build and deployment, which Katevenis says will be matched against real-world applications to feed a better understanding of how the system might perform at larger scale in the future.

Among other partners for this most recent prototype ARM system for HPC are a number of software tooling vendors, including Allinea, which is supplying the ARMv8-A profiling and debugging tools, and Enginsoft, which works with key industrial simulation codes in materials science, among other areas. Other tool vendors that want to get an early edge in helping HPC end users port their codes to the architecture, including eXact-lab, are also on the list of collaborators, along with MonetDB, which is focused on business analytics. Katevenis says that he considers high performance databases to be part of HPC, especially as the range of data-intensive computing problems continues to grow. UPV-GAP will be providing the research and development for the interconnect network and has been selected to deliver the final photonic solutions for the full system. Other collaborators, including Fraunhofer Institute with its BeeGFS parallel file system, will be working on the project as well to understand how that file system might work with ARM at scale for HPC applications.

European institutions will be contributing specific elements to the project, including the Italian Research Institute in Astronomy and Astrophysics, which will feed into the network and storage design concepts. According to Giuliano Taffoni, a PI at the center, their codes will “contribute to the design and testing of the network and storage infrastructure and in turn, access to ExaNest’s prototype resources will provide an opportunity for computational cosmology.” Another lead at INFN says that the prototype will also allow the teams there to “explore and validate innovative architectural solutions required to speed the execution of large-scale scientific applications.”

Although we are still some time away from seeing powerful ARM-based machines topping the Top 500 list of the fastest supercomputers, momentum is building in Europe to create an environment rich with options (including FPGAs as a central feature, according to researchers in one Horizon2020 camp we’ll be talking to next week).
