If complex artificial intelligence applications could easily be run on the fly across hundreds of thousands of compute cores around the world, and at a cost that significantly undercuts the top public cloud providers, a new world of potential uses and applications would certainly flourish. So, too, would the company capable of providing such a paradigm.

Long the domain of big supercomputing sites in research and commercial spheres, AI at this scale has been off limits for all but the largest institutions. But one startup, not long out of stealth and armed with the creators of Siri on the software side, some mysterious hardware infrastructure, and $143 million to put toward its own massive grid of compute, is aiming to make this a reality. The interesting thing is that the company has already shown how this can work in the context of some unique use cases, but the question is how it can actually compete on price, performance, and scalability.

While Sentient Technologies is not in the business of selling cycles for AI workloads, the infrastructure angle is worth noting, in part because it’s using an older approach to a much newer array of problems. (Sentient is not saying precisely how it is aggregating its compute capacity or where it is getting it from, so we are trying to puzzle it out.)

In the days before the public clouds provided access to many thousands of nodes nearly instantly, big distributed problems required either a dedicated supercomputer or the much slower grid approach that tapped into remote clusters, workstations, or PCs. The grid approach would seem to be too slow for all but the most parallel of problems, because far too much data has to be moved between sites. At least, that’s what one might think.

It turns out that the same concepts that underpin large-scale distributed computing projects like Folding@Home, which taps into unused computing power, are at the core of Sentient’s push to provide millions of cores for large-scale financial, medical, and other AI projects. The question, however, is why a user with access to the “unlimited” resources of large public cloud providers would choose to carry out a massive-scale AI project using this approach, especially when Amazon Web Services, Google Cloud Platform, Microsoft Azure, and other providers have standardized hardware, an exact view of system performance, a sense of where the workloads are running, and what is essentially a straightforward pricing model?

As one might imagine, the answer comes down to cost. But the next logical question is how anyone can run hundreds of thousands of cores more cheaply than a web-scale giant with all the coherent middleware, network, and other features that are necessary. We thought Sentient might be tapping into cycles from Google, Amazon, and other cloud providers and competing on price by using something akin to AWS Spot Instances, which is essentially a bidding-based system that lets savvy users score compute cycles a bit cheaper as demand ebbs and flows.

Adam Beberg, co-creator of large-scale distributed computing projects like Folding@Home and principal architect at Sentient, assured The Next Platform that AWS Spot Instances and other low-cost cloud compute capacity are not part of its distributed AI approach, but he said that it is something Sentient has evaluated and will continue to evaluate. So then, how on earth is Sentient undercutting the giants and running at a potential million-node level for AI, which is both compute-intensive and data-intensive?

Following his large-scale grid computing research at Stanford University, which includes creating distributed.net and other commercial grid computing projects, Beberg joined forces with Sentient, the software star-studded San Francisco distributed AI startup backed by Tata Communications, among others. The goal was to create a computing resource for addressing AI problems at scale, an aim that the company has succeeded in proving through a number of early-stage use cases, from large predictive blood pressure studies to helping doctors spot deadly sepsis infections before they could take root.

All of this comes at a time when artificial intelligence, which encompasses a broad range of potential uses, is finding its way into common vernacular following popular uses such as IBM’s Watson question-answer system and advances in natural language processing that many encounter daily. The Sentient team includes the Siri talent as well as other notable AI researchers, among them Babak Hodjat, who has extensive patents and publications around agent-oriented software engineering and distributed artificial intelligence. The rest of the development team are software innovators who have been plucked from pharma, finance, and medicine. In other words, all the right ingredients are here, but how can hardware be standardized, packaged together, and built to run on the fly?

The Compute Is “Free” But Data Movement Is Not

Distributed computing for artificial intelligence across a large pool of remote, diverse resources (not in the monolithic cloud sense) might sound like a grand challenge on the compute side, but Beberg says new middleware and container tools are making this a possibility, even for massive jobs that require many thousands of compute cores.

While it’s tempting to detail the AI stack that Sentient has built, a lot of that is homegrown and secret sauce, says Beberg. The use cases are noteworthy, too, but the question is how the scale required for these massive distributed workloads is achieved given the performance and data movement that demanding AI applications need. While the processing power of the CPU cores is important here, it isn’t just about raw floating point capability. Because of the data movement issues inherent to any distributed computing problem, the company’s approach is not a good fit for classic data-intensive problems with massive file sizes, but Sentient says it is already utilizing petaflops of computing capacity, even if the datasets are at terabyte scales. In other words, for compute-intensive workloads, which certainly describes AI, the approach the company has come up with can be a useful way to scale and retain performance.

Sentient has found some interesting ways to get around that data movement problem, which are derived from the middleware, scheduling, and application delivery and packaging platforms that Beberg has helped develop. At the highest level, Sentient has built some clever caching and pre-fetching tools, which are essential to countering the data movement bottleneck. A large amount of the data is used over and over again, with new writes happening as “learning” takes place in the software. Reusing cached data cuts down on the constant back and forth and streamlines the read/write process, clearing the way for optimization of how data is managed. Of course, given the mixed resources and other demands, the story does not end there.
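Sentient has not published how its caching and pre-fetching tools work, so the following is only a minimal sketch of the general idea described above: keep frequently reused data blocks near the compute and pull blocks in before they are requested. The class `PrefetchingCache`, the `fake_remote_fetch` function, and the least-recently-used eviction policy are all assumptions made for illustration, not Sentient’s design.

```python
from collections import OrderedDict
from typing import Callable, Iterable


class PrefetchingCache:
    """Small LRU cache with an explicit prefetch step (hypothetical sketch)."""

    def __init__(self, fetch: Callable[[str], bytes], capacity: int = 4) -> None:
        self._fetch = fetch            # stand-in for a slow remote read
        self._capacity = capacity
        self._blocks: "OrderedDict[str, bytes]" = OrderedDict()
        self.remote_reads = 0          # counts trips across the network

    def _load(self, key: str) -> bytes:
        self.remote_reads += 1
        data = self._fetch(key)
        self._blocks[key] = data
        if len(self._blocks) > self._capacity:
            self._blocks.popitem(last=False)   # evict the least recently used block
        return data

    def prefetch(self, keys: Iterable[str]) -> None:
        """Pull blocks in before the compute step asks for them."""
        for key in keys:
            if key not in self._blocks:
                self._load(key)

    def get(self, key: str) -> bytes:
        if key in self._blocks:
            self._blocks.move_to_end(key)      # mark as recently used
            return self._blocks[key]
        return self._load(key)


def fake_remote_fetch(key: str) -> bytes:
    # Assumed placeholder: a real system would read from remote storage here.
    return key.encode() * 2


cache = PrefetchingCache(fake_remote_fetch, capacity=4)
cache.prefetch(["a", "b", "c"])
for _ in range(3):                             # the same data is reused repeatedly
    for block_id in ["a", "b", "c"]:
        cache.get(block_id)
```

In this toy run the nine reads after the prefetch are all served locally, so `cache.remote_reads` stays at three. That is the effect the article describes: repeated reads of the same data stop costing network round trips.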

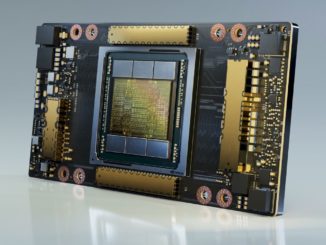

Part of the overall performance for the Sentient AI stack comes from the use of GPUs. Although Beberg couldn’t be specific about what these GPUs are (consumer/gaming chips or GPGPU cards like those found in Top 500 supercomputers), they add a performance benefit even if they also created some challenges on the software side initially. In terms of regulating across different resources, which use both CPUs and GPUs, Beberg says Sentient has “minimum system requirements and typically, these are multicore systems.” Beyond that, he says Sentient uses virtual machines and Linux cgroups for containers (not Docker for “good reasons” but they are looking to Rocket in the future). In essence, this combination allows Sentient to make all the diverse compute look somewhat homogeneous.

Sentient’s system takes care of scheduling the jobs according to available resources and optimizes the data flows in an effort to get peak utilization of the mystery capacity. “This is harder to do than it sounds because a lot of times you’re waiting for data. A lot of what we have to do in terms of data flow optimizing and getting the capacity boils down to pipelining and pre-fetching,” Beberg explains. “We do the scheduling at a larger-grain level, which means we can optimize the data flows. Those data flows are the major bottleneck, just as they have been for the last twenty years I’ve been doing this. The compute is free, moving the data is the problem.”
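The pipelining Beberg mentions can be illustrated with a generic pattern: a background thread fetches the input for the next work unit while the current one is being computed, so the compute step spends less time waiting on data. This is a rough sketch under our own assumptions, not Sentient’s scheduler; the names `pipelined` and `slow_fetch` and the `depth` parameter are hypothetical.

```python
import queue
import threading
import time
from typing import Callable, Iterable, Iterator, List

_DONE = object()   # sentinel marking the end of the work list


def pipelined(fetch: Callable, work_ids: Iterable, depth: int = 2) -> Iterator:
    """Yield fetched inputs in order while a background thread fetches ahead.

    At most `depth` fetched inputs wait in the buffer at any time.
    """
    buffer: queue.Queue = queue.Queue(maxsize=depth)

    def producer() -> None:
        try:
            for work_id in work_ids:
                buffer.put(fetch(work_id))   # blocks while the buffer is full
            buffer.put(_DONE)
        except Exception as exc:             # hand fetch errors to the consumer
            buffer.put(exc)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buffer.get()
        if item is _DONE:
            return
        if isinstance(item, Exception):
            raise item
        yield item


def slow_fetch(n: int) -> List[int]:
    time.sleep(0.01)                         # pretend this is a remote transfer
    return list(range(n))


# The compute step (sum) overlaps with the fetch of the next chunk.
results = [sum(chunk) for chunk in pipelined(slow_fetch, range(5))]
```

The bounded buffer is the design choice worth noting: it lets the fetcher run ahead of the compute step without pulling in more data than the node can hold.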

The other potential bottleneck is on the other end. Although it’s not clear where all of these resources are coming from, they are from machines that are handling other production workloads—whether on workstations, PCs, or servers inside cloud datacenters. “We are congestion aware,” Beberg told The Next Platform. “We don’t want to impact local resources at all if possible but we’re in situations where we don’t want to saturate bandwidth due to other users. We’re doing work to reduce the impact on the local networks.”
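Beberg does not say how the congestion awareness is implemented. One common way to keep a background job from saturating a shared link is a token bucket, sketched below purely as an illustration; the `TokenBucket` class, its parameters, and the numbers used are assumptions rather than anything Sentient has described.

```python
class TokenBucket:
    """Caps average send rate while allowing short bursts (hypothetical sketch)."""

    def __init__(self, rate_bytes_per_s: float, burst_bytes: float, now: float = 0.0) -> None:
        self.rate = rate_bytes_per_s
        self.burst = burst_bytes
        self.tokens = burst_bytes    # start with a full bucket
        self.last = now

    def delay_for(self, nbytes: int, now: float) -> float:
        """Reserve `nbytes` and return how many seconds the sender should wait."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.burst, self.tokens + elapsed * self.rate)
        self.last = now
        self.tokens -= nbytes        # may go negative: that is the debt to wait out
        if self.tokens >= 0:
            return 0.0
        return -self.tokens / self.rate


# Assumed limit: 1000 bytes/s on average with bursts of up to 500 bytes.
bucket = TokenBucket(rate_bytes_per_s=1000, burst_bytes=500)
first_wait = bucket.delay_for(500, now=0.0)    # fits in the burst allowance
second_wait = bucket.delay_for(250, now=0.0)   # exceeds it, so the sender must pause
```

The caller passes in the current time and sleeps for the returned delay before sending. A congestion-aware system could go further and lower `rate` when it observes other traffic on the local network, which this sketch does not attempt.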

It will be interesting to see how this scales on larger problems, but more interesting to see how users choose between infrastructure options for their AI workloads. While the end users of AI represent a growing base, the benefits of using a system that pulls resources from several places for scale are clear. But what about users who want to know where their data is located? This seems to be especially concerning in the financial and medical spheres where some early use cases have run.

Beberg says that for some customers, they can show where the resources are located but at this stage, most projects are using open datasets so security and issues of location are not particularly important. Still, he says they are evaluating different security models for those who are location-sensitive.

Sentient has managed to undercut large public cloud providers like Amazon Web Services on price, and significantly so even against reduced-price Spot Instances, and there has to be a reason why this is the case. While Beberg could not comment on how this is achieved, it’s hard not to speculate on where the resources are coming from. One guess is that Sentient has a number of corporate partners for resources, possibly even one of its backers, Tata Communications, with the kind of big spare cycles required for at least some of the workload. Where the rest is coming from is a mystery.

Still, it’s notable that there is currently a petascale-class distributed computing project running real workloads for medicine and finance, and that the middleware, virtual machine, and application portability has come far enough since the old “grid” days to make something of this scale possible.
