Some Surprises in the 2018 DoE Budget for Supercomputing

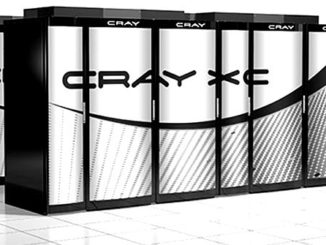

The US Department of Energy fiscal year 2018 budget request is in. …

GPU computing has deep roots in supercomputing, but Nvidia is using that springboard to dive head first into the future of deep learning. …

During the five years that Red Hat has been building out its OpenShift cloud applications platform, much of the focus has been on making it easier to use by customers looking to adapt to an increasingly cloud-centric world for both new and legacy applications. …

Chip maker Intel takes Moore’s Law very seriously, and not just because one of its founders observed the consistent rate at which the price of a transistor scales down with each tweak in manufacturing. …

It is hard to tell which part of the systems market is lumpier – that for traditional HPC systems like supercomputers or that for massive cluster deployments for the hyperscalers that run public clouds and public-facing applications on a massive scale. …

It has just been announced that there has been a shift in thinking among the exascale computing leads in the U.S. …

When it comes to supercomputing, you don’t only have to strike while the iron is hot, you have to spend while the money is available. …

The ultimate success of any platform depends on the seamless integration of diverse components into a synergistic whole – well, as much as is possible in the real world – while at the same time being flexible enough to allow for components to be swapped out and replaced by others to suit personal preferences. …

In Supercomputing Conference (SC) years past, chipmaker Intel has always come forth with a strong story, either as an enabling processor or co-processor force, or more recently, as a prime contractor for a leading-class national lab supercomputer. …

The future “Summit” pre-exascale supercomputer that is being built out in late 2017 and early 2018 for the US Department of Energy for its Oak Ridge National Laboratory looks like a giant cluster of systems that might be used for training neural networks. …

All Content Copyright The Next Platform