There is no question that the combination of Nvidia and Arm Holdings would have been a powerful one in the datacenter. This is something that we have discussed at length before Nvidia launched its $40 billion acquisition of Arm Holdings, when Nvidia actually launched the takeover a year and a half ago, and when we had a number of conversations with Nvidia co-founder and chief executive officer Jensen Huang about Nvidia’s aspirations in the datacenter, like this one.

But that deal has now been quashed by regulators in the United Kingdom, where Arm Holdings is still mostly located, by regulators in China, who have their own concerns as well as a trade war running with the United States, and by all of the other Arm licensees who depend on Arm technology a lot more than Nvidia does.

The important thing to sort out now is what Arm Holdings will do. Ironically, Rene Haas, who was just named the new chief executive officer of Arm Holdings, the chip design division of Japanese conglomerate SoftBank Group, is going to be tasked with figuring that out.

We say ironically because Haas was vice president and general manager of Nvidia’s Computing Products business unit during its formative years of 2006 through 2013, when HPC shops first deployed GPU accelerated computing and then machine learning training took off using the same technology to great effect. Haas then moved over to Arm Holdings, where he was vice president of strategic alliances for a few years, chief commercial officer for a few years, and most recently in charge of its intellectual property group. Haas takes over from Simon Segars, who managed the acquisition by SoftBank more than five years ago and who is leaving as the Nvidia deal has come unwound.

There was plenty of talk last summer that if the Nvidia acquisition didn’t happen, Masayoshi Son, who controls SoftBank, would try to take Arm Holdings public in an effort to raise money to cover some bets that SoftBank has made in recent years, such as WeWork and Alibaba, which have seen their market capitalizations implode.

Now, there is no question that Arm Holdings will be going public, since this was disclosed in the joint statement put out by Nvidia and SoftBank. The plan is to do so before the end of Arm Holdings’ fiscal year in March 2023, which is a reasonable pace for a return to the public markets. There is chatter that the company will list on the Nasdaq, but it would seem more appropriate (had it not been for Brexit at least) for Arm Holdings to return to the London Stock Exchange. We shall see.

The important thing is for Arm Holdings to have access to capital, and whether it is in the City or on Wall Street doesn’t really matter all that much.

“Even during the five years or so that Arm has been private under SoftBank’s ownership, we have been self-funded,” Inder Singh, chief financial officer at Arm Holdings, explained in a conference call in the wake of the announcements. “The difference, of course, is that during the private years, we were able to bring the profit margins down during the funding phase, and then we were looking for the return on some of those investments we were making and now we expanded margins. So we are back to where the margins were before and we maybe will aim higher in future years. And over the next five to seven years, being a public company again, gives us access to capital should we need it, and we have more degrees of freedom. The deal with Nvidia would have helped, where we would have been part of the corporation and able to tap its balance sheet. As an independent company, we will be able to tap capital markets.”

And given the margins Arm Holdings is now enjoying, Singh adds that the company is in “a very good place” to drive future growth.

The trick to growing Arm is the same for any ecosystem and any platform – it needs to be extended to new use cases and markets, as Haas explained on the call. Haas said that Arm Holdings will be returning to its IP licensing roots, having spun off some uncore business back into SoftBank, and seeking out new markets as it has been able to do with hyperscalers, cloud builders, and car manufacturers. Those new markets are approachable because of the vast ecosystem that the Arm architecture has, with an estimated 15 million developers and over 10 million applications running on every major client, server, and embedded system operating system.

“Beyond IoT and the metaverse, we have got some other things up our sleeves, but there will be a right time and place to talk about these,” Haas hinted. “I have been at Arm for nearly nine years, and one of the things I found about working for Arm is that there literally is not a technological space or place we cannot participate in. I think our challenge over the next couple of years is making wise choices on which markets to invest in.”

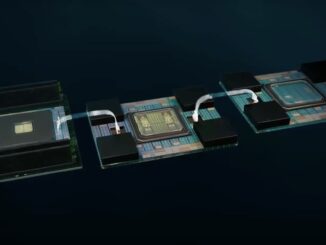

That got us to thinking about things other than CPUs that could be licensed and put in the datacenter, and we asked Haas if there was any chance that Arm Holdings would think about making beefier Mali GPU designs and maybe even DPU designs in its own right and maybe even NPU matrix math accelerators to boost AI training or inference – all licensable and customizable, not just buyable as a finished product.

“Your commentary is an interesting one,” Haas said. “Because today, Mali is largely known for our footprint in the mobile, and it’s the most popular GPU on the planet. If you start to fast forward into the hyperscalers, and you start to fast forward into the cloud, you are getting some really interesting opportunities relative to the CPU and the GPU. Where is the machine learning taking place? Is it a standalone NPU? Can you do something else relative to that? I can tell you that we have got a lot of people looking at that. And I think it’s one of the biggest opportunities for us, I think, in terms of where the puck goes, if you will. And it’s a playbook, again, that is very relevant from cloud to hyperscaler and into autonomous vehicles, all of which needs an accelerator to do offload from the CPU. So, there’s nothing I can tell you yet about product plans, but I can tell you that we have a lot of smart people inside the company spending a lot of cycles, thinking about different things we can do in that space.”

Well, good. That’s what we like to hear.

Whatever Nvidia does in the wake of this deal with Arm Holdings falling apart, it had better move fast. Because if Nvidia doesn’t license its GPU technology through the Arm channel, as it has said that it would do, then given the amount of compute that is being done by accelerators in the datacenter and at the edge, Arm Holdings will have no choice but to create its own designs and license them.

And then Nvidia will be facing a difficult GPU moment just like Intel has been facing difficult CPU moments in the datacenter for the past three years. (These issues will come home to roost soon, regardless of Intel’s recent financials.)

When Intel was worried about AMD Epyc chips, what it really needed to worry about was boosting price/performance to keep Arm CPUs out of the hyperscaler and cloud builder datacenters. And if Nvidia is worried about AMD GPUs – as it should be given the strength of the “Aldebaran” Instinct MI200 – and to a much lesser extent about Intel GPUs – the “Ponte Vecchio” Xe HPC is not as impressive on many fronts, and we have yet to see any pricing at all – maybe it should be more worried about discrete, beefy, and licensable Mali GPU complexes designed by Google, Amazon, Microsoft, and maybe even Facebook, Alibaba, Tencent, and Baidu.

These companies are sitting around waiting for each generation of discrete GPUs from Nvidia, AMD, and Intel to come out when they – meaning not the customers, but the chip designers – need them to enter the market, when what they could be doing is designing such GPU accelerators themselves and having Taiwan Semiconductor Manufacturing Co build them to their schedule. These seven companies have half the volume of CPUs and probably more than half the volume of GPU compute engines, too. And could the timing be any worse or the ramp of products be any slower than what they are seeing now with the proprietary vendors in control?

Probably not. Amazon Web Services has Graviton Arm server chips on a pretty regular schedule, and you can bet Masayoshi’s last yen that Graviton4 will debut at re:Invent 2022 this year.

Don’t get us started about networking. Maybe we need licensable Ethernet switch ASICs, licensable network interface chips, and licensable DPUs that mix up various kinds of compute and offload them from the CPUs. But everything we just said about compute applies equally well to networking, as Nvidia knew when it talked about pushing its intellectual property through the Arm licensing channel.
