Enterprises are said to be awash in data, and one of the problems posed by all this data is not just storing it, but processing it. Storage architectures have by and large been keeping up with the capacity problem, while the introduction of flash has also given storage a much-needed speed-up over the past decade, significantly boosting the performance of transactional workloads such as databases as well as file, block, and object storage.

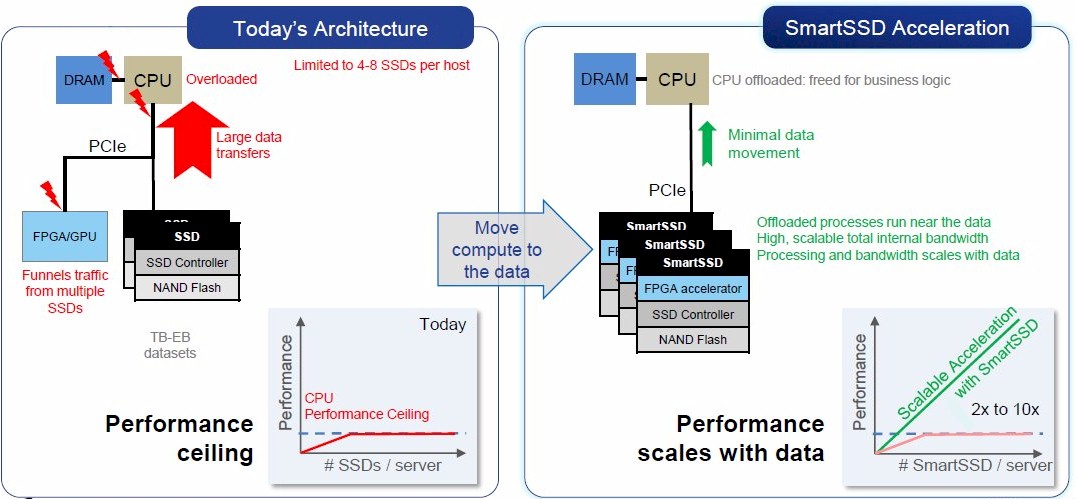

However, as datasets continue to grow and workloads start to incorporate techniques such as advanced analytics and AI, storage is coming under new pressure. There is both a cost and a time factor involved in moving data from where it is generated to where it is processed, and with certain classes of computational problems being very I/O bound, it makes more sense to perform the processing as close as possible to where the data is stored, rather than shuffling gigabytes or terabytes of information around.

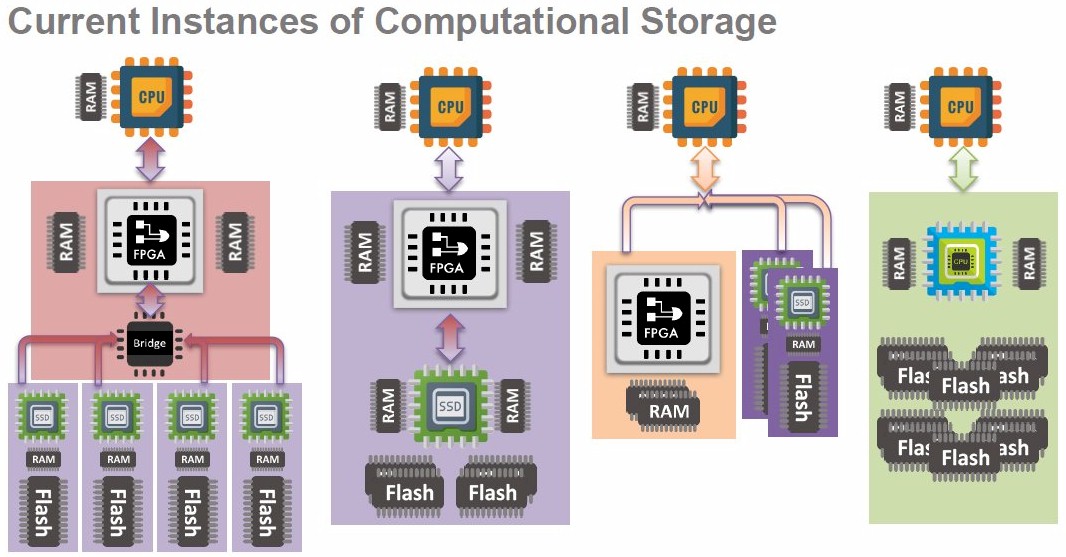

This has become known as “in-situ processing” or “computational storage” and is an emerging field that is chiefly being pioneered by a small number of startup companies. These have adopted several differing approaches to computational storage, from integrating processing power into individual drives, to accelerators that sit on the storage bus but do not contain any storage themselves. Most revolve around NVM-Express SSDs.

While this is still a developing area of technology, the Storage Networking Industry Association (SNIA) stepped in and formed a Computational Storage Technical Work Group (TWG) in 2018 to define interface standards and to promote the interoperability of computational storage across different vendors.

As the SNIA itself noted, all those startup companies doing one-off proof-of-concept deployments are unlikely to get the kind of traction their investors would like without there being a larger market to tap into.

A year on, and the SNIA TWG has come up with a set of definitions that cover the component parts of all the computational storage architectures and their relationships with each other, plus draft specifications for the architectural models and a programming model for those architectures and services.

The SNIA definitions include Computational Storage Processor (CSP), Computational Storage Service (CSS), Computational Storage Drive (CSD), and Computational Storage Array (CSA), which is defined as a collection of computational storage devices, along with control software and optional storage devices.

SNIA also differentiates between Fixed Computational Storage Services (FCSS), those designed to fulfil a well-defined function or related set of functions, such as compression, erasure coding, or encryption; and Programmable Computational Storage Services (PCSS), which are dynamically reprogrammable by the end user.
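As a rough illustration of how these terms relate to one another, the taxonomy can be modeled as a few small data types. This is only a sketch: the class and field names (`ServiceKind`, `Service`, `Device`, `Array`, `capacity_tb`) are invented for the example and are not taken from the SNIA drafts, and treating "has storage capacity" as the line between a CSD and a CSP is a simplification.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class ServiceKind(Enum):
    FIXED = "FCSS"          # well-defined function such as compression
    PROGRAMMABLE = "PCSS"   # reprogrammable by the end user


@dataclass
class Service:
    """A Computational Storage Service (CSS)."""
    name: str
    kind: ServiceKind


@dataclass
class Device:
    """A computational storage device: a CSP or a CSD."""
    label: str
    services: List[Service] = field(default_factory=list)
    capacity_tb: float = 0.0    # hypothetical field; 0 means no storage on board

    @property
    def device_type(self) -> str:
        # Simplification: a device with its own storage is a CSD, otherwise a CSP.
        return "CSD" if self.capacity_tb > 0 else "CSP"


@dataclass
class Array:
    """A Computational Storage Array (CSA)."""
    devices: List[Device]
    plain_drives_tb: List[float] = field(default_factory=list)  # optional storage devices


if __name__ == "__main__":
    drive = Device("drive-0", [Service("compression", ServiceKind.FIXED)], capacity_tb=8.0)
    accel = Device("accel-0", [Service("user code", ServiceKind.PROGRAMMABLE)])
    array = Array([drive, accel], plain_drives_tb=[4.0, 4.0])
    for dev in array.devices:
        kinds = ", ".join(s.kind.value for s in dev.services)
        print(dev.label, dev.device_type, kinds)
```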

The TWG’s goal is to revise the architecture specifications until they are polished enough to be considered a 1.0 version (the current version is 0.3), at which point the group aims to pass it to an existing standards body that will manage it going forwards. Current expectations are that this will be the NVM-Express body or the PCI-SIG, because of the central role PCI-Express and NVM-Express now have as storage interfaces.

“We know that we have to involve things like NVM-Express or PCI-SIG for things like how to discover, how to manage and how to configure. Telling people how to do that is great, but we need a protocol to do that with, it’s not going to be something that we’re going to write from new,” says Scott Shadley, co-chair of the SNIA Computational Storage TWG and also vice president of marketing for NGD Systems, a company that manufactures SSDs capable of computational storage.

Shadley expects that support for computational storage devices will become a core part of the NVM-Express specification, so that they can be exposed to the host system. A computational storage service driver could then configure the discovered computational storage service and prepare it for use by applications. “So the idea is you plug it in and it just works,” he says.

Putting Cores Inside SSDs

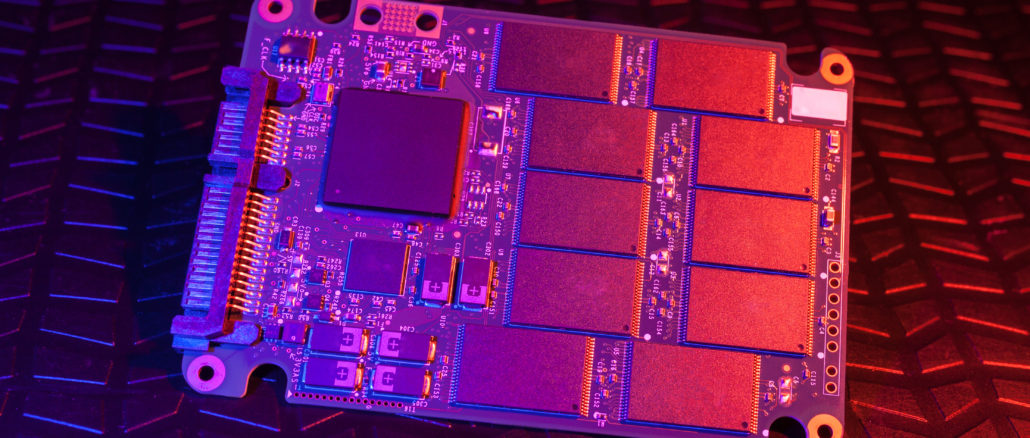

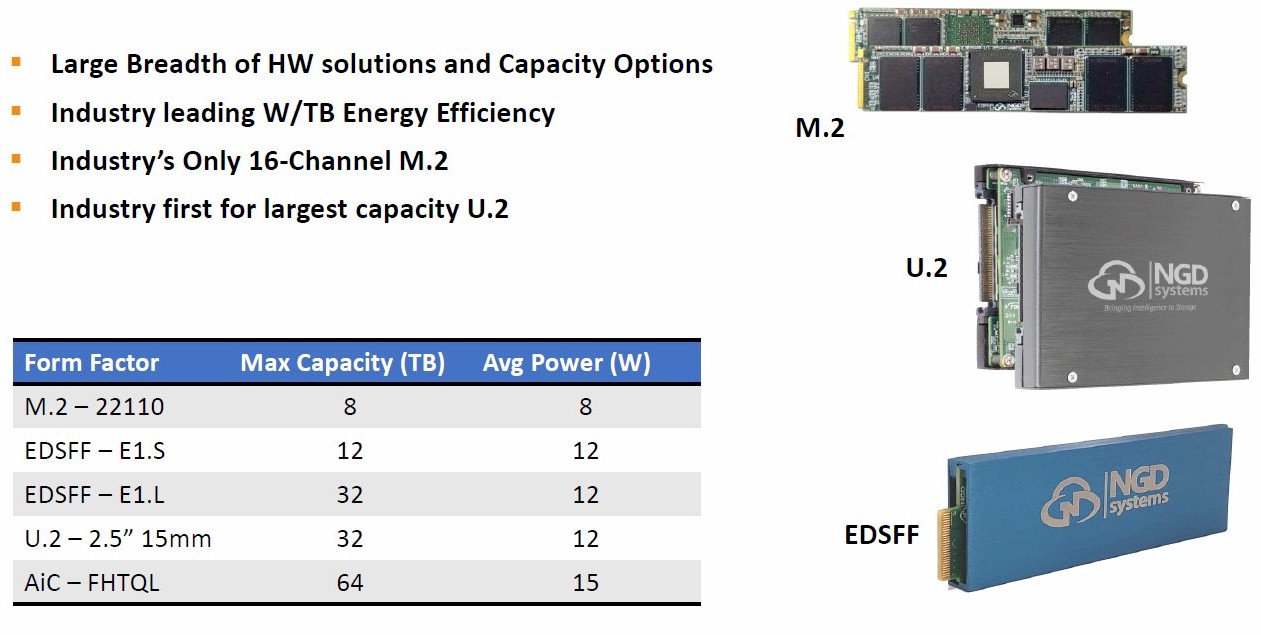

NGD’s technology is a good place to start for an introduction to computational storage. NGD is one of several vendors in the field developing CSDs that integrate processing capability into an SSD storage device. With Newport, the third iteration of its platform, the firm has developed a custom ASIC that incorporates both the SSD controller functions and a quad-core ARM Cortex-A53 CPU block, making it a Programmable Computational Storage Service (PCSS) product. The block diagram on the right-hand side of the SNIA chart above depicts this architecture.

The beauty of NGD’s design is that its products can be used either as a computational device or as a straight SSD. In fact, Shadley claims that some NGD customer wins were on the basis of storage capacity first (it offers SSDs up to 32 TB), with the ability to do computational storage only being discussed later.

Embedding compute into the SSD controller means it has direct access to the NAND flash chips inside the drive. The Common Flash Interface (CFI) channels connecting the SSD controller to the NAND flash each have a bandwidth of 1.6 GB/sec (for a 16-bit channel), and a typical SSD controller sports eight channels for a total bandwidth of 12.8 GB/sec. In comparison, an enterprise SSD with a U.2 host connector has four lanes of PCI-Express 3.0, which adds up to 3.94 GB/sec of bandwidth, less than a third as much.
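The arithmetic behind that comparison can be checked in a few lines. The channel count and per-channel rate come from the figures above; the PCI-Express 3.0 numbers (8 GT/sec per lane, 128b/130b encoding) are the standard signaling parameters, and protocol overhead beyond line encoding is ignored here. The constant and function names are made up for the example.

```python
# Figures quoted in the text: eight flash channels at 1.6 GB/sec each.
CHANNELS = 8
CHANNEL_GB_PER_SEC = 1.6

# PCI-Express 3.0 signaling: 8 GT/sec per lane with 128b/130b encoding.
PCIE3_GT_PER_SEC = 8.0
PCIE3_ENCODING = 128 / 130
LANES = 4  # a U.2 drive uses four lanes


def internal_bandwidth() -> float:
    """Aggregate controller-to-NAND bandwidth in GB/sec."""
    return CHANNELS * CHANNEL_GB_PER_SEC


def host_bandwidth() -> float:
    """Host link bandwidth in GB/sec, counting only encoding overhead."""
    # Each transfer moves one bit per lane; divide by 8 to get bytes.
    return LANES * PCIE3_GT_PER_SEC * PCIE3_ENCODING / 8


if __name__ == "__main__":
    inside = internal_bandwidth()
    outside = host_bandwidth()
    print(f"internal flash channels: {inside:.1f} GB/sec")
    print(f"PCI-Express 3.0 x4 link: {outside:.2f} GB/sec")
    print(f"host link as a fraction of internal: {outside / inside:.2f}")
```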

Meanwhile, the SSD controller is paired with enough DRAM (up to 16 GB can be fitted if required) for the ARM cores to run a version of Ubuntu Linux, greatly simplifying the development and deployment of applications. Microsoft’s Azure IoT Edge service is also supported, and NGD has also been talking to VMware about running the ESXi hypervisor on the ARM cores, enabling virtual machines to run inside its SSDs, completely independently of the host processor.

Edge scenarios are a market where NGD has spotted an opportunity and is running proof of concept trials, according to Shadley, who cites the example of a convenience store chain that wanted to deploy an object detection and facial recognition platform in stores to help combat crime.

“They put the whole thing together in the lab and tested it, went out to a store and found that the power and the footprint they needed to install it doesn’t exist in that convenience store. They don’t have a closet where they can put a half rack of compute and storage; they have room for an edge server or a gateway type platform, and that’s where we started talking to them,” Shadley explains.

NGD Systems announced in January that it had secured $20 million in Series C investment to fund expansion, with money coming from MIG Capital and Western Digital Capital, among others. This investment is a sign that NGD is “at a point where we’ve reached a production level solution with our product,” according to Shadley, making its technology among the most mature in this market.

CSDs from other vendors are largely based around FPGAs instead of integrating CPU cores, and so may be considered less flexible in that instead of running applications, they are typically configured by programming and reprogramming the FPGA to accelerate specific functions. Where FPGAs are used, those from Xilinx mostly seem to be the choice.

One example is ScaleFlux, which offers its CSD 2000 Series SSD hardware in PCI-Express add-in-card and U.2 form factors with up to 8 TB of capacity. The Xilinx FPGA is typically used to accelerate functions such as in-line data compression and decompression, erasure coding, and database analytic functions. ScaleFlux claims that wherever possible, it uses existing APIs so that the computational storage functions are transparent to the application on the host system that is being accelerated. Among the applications it can accelerate are Aerospike, MySQL, and PostgreSQL.

The ScaleFlux hardware is effectively an open-channel SSD; the FPGA does not implement the flash translation layer (FTL) that lives in the SSD controller in standard drives. Instead, the FTL functions are implemented in software that runs on the host system.
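To make the idea of a host-resident FTL concrete, here is a deliberately tiny model of the core job an FTL performs: mapping logical block addresses to physical flash pages and writing out of place. It is a teaching sketch only. The class name `ToyHostFTL` and its fields are invented, and nothing here reflects how ScaleFlux's software is actually built; wear leveling, garbage collection, and error handling are all left out.

```python
class ToyHostFTL:
    """Keeps the logical-to-physical page map in host memory."""

    def __init__(self, num_pages: int) -> None:
        self.flash = [None] * num_pages   # stand-in for raw NAND pages
        self.l2p = {}                     # logical block -> physical page
        self.stale = set()                # pages holding superseded data
        self.next_free = 0                # pages are consumed in order

    def write(self, lba: int, data: bytes) -> int:
        """Write data for a logical block and return the physical page used."""
        if self.next_free >= len(self.flash):
            raise RuntimeError("no free pages; a real FTL would garbage-collect")
        old = self.l2p.get(lba)
        if old is not None:
            # NAND pages cannot be overwritten in place, so the old copy
            # is only marked stale and the new data goes to a fresh page.
            self.stale.add(old)
        page = self.next_free
        self.flash[page] = data
        self.l2p[lba] = page
        self.next_free += 1
        return page

    def read(self, lba: int) -> bytes:
        """Return the most recent data written to a logical block."""
        return self.flash[self.l2p[lba]]


if __name__ == "__main__":
    ftl = ToyHostFTL(num_pages=4)
    ftl.write(7, b"v1")
    ftl.write(7, b"v2")          # same logical block, new physical page
    print(ftl.read(7), ftl.l2p, ftl.stale)
```

In an open-channel arrangement, this bookkeeping consumes host memory and CPU cycles rather than controller resources, which is the trade-off the paragraph above describes.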

ScaleFlux claims that its hardware cuts in half the cost per usable GB of flash by compressing the data on the fly and exposing the space savings to the file system.
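The relationship behind that claim is simple division: if data compresses by some ratio, the effective cost per usable GB falls by the same ratio. The sketch below assumes a 2:1 ratio, which is what a halving implies, and the $0.20 per GB price is purely a placeholder rather than a ScaleFlux figure.

```python
def cost_per_usable_gb(cost_per_raw_gb: float, compression_ratio: float) -> float:
    """Effective cost per GB once compression savings are exposed.

    compression_ratio is logical bytes divided by physical bytes stored,
    so 2.0 means the data shrinks to half its original size.
    """
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return cost_per_raw_gb / compression_ratio


if __name__ == "__main__":
    raw_price = 0.20  # hypothetical price in dollars per raw GB
    for ratio in (1.0, 1.5, 2.0):
        effective = cost_per_usable_gb(raw_price, ratio)
        print(f"{ratio:.1f}:1 compression -> ${effective:.3f} per usable GB")
```

The actual saving depends entirely on how compressible the workload's data is; already-compressed or encrypted data would see a ratio close to 1.0.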

The company raised $25 million in Series B funding back in 2018, led by Shunwei Capital and several Tier-1 strategic corporate investors.

Netint is another company with an ASIC that combines an SSD controller with compute power. In this case, the company is focused chiefly on multimedia production, and its Codensity G4 SSD Controller SoC offers video compression using H.265 encode/decode engines. The SoC is used in the Codensity D400 Series of SSDs, which have a capacity of up to 16 TB and support NVM-Express over PCI-Express 4.0, in an add-in card or U.2 formats. Netint was founded by several storage SoC veterans and is venture-funded, but exact figures on the investment involved are not available.

Samsung is using a Xilinx FPGA for its CSDs, known as SmartSSDs. This design is a PCI-Express add-in-card with up to 8 TB of capacity, made up of Samsung’s own V-NAND flash. Here, the flash is managed by a Samsung SSD controller as normal, front-ended by the Xilinx FPGA.

Like ScaleFlux, Samsung sees acceleration of storage functions such as compression, decompression, and erasure coding as opportunities, plus specific functions for application frameworks, such as video encoding, database acceleration, search, and machine learning. One partner using SmartSSDs is Nimbix, which has made them available as part of its Nimbix Cloud. Here they are used to accelerate Apache Spark, running queries up to six times faster when using software from Bigstream.

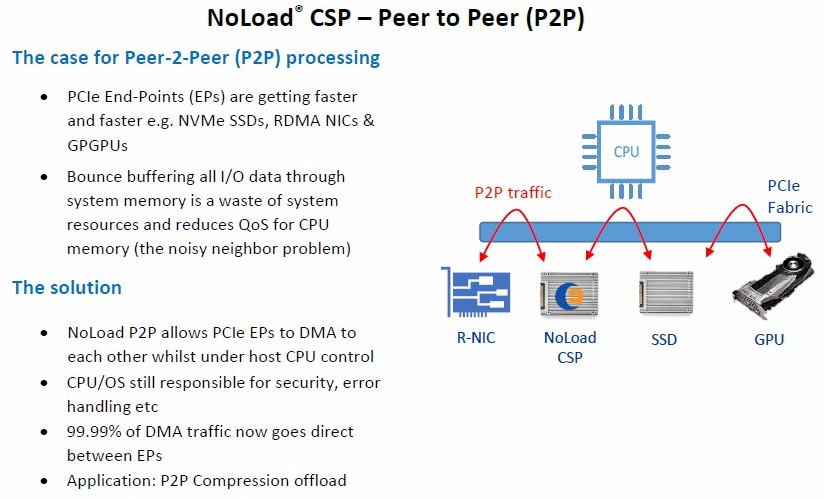

Peer To Peer Via NVM-Express

Other computational storage products are classified as Computational Storage Processors (CSPs) rather than CSDs. These are typified by products from vendors such as Eideticom and Pliops, which provide compute power to offload from the host processor, but do not include any persistent data storage, unlike a CSD.

Eideticom’s NoLoad CSP devices are available in a U.2 form factor that looks like a drive enclosure, a PCI-Express add-in card, or the newer Enterprise and Datacenter SSD Form Factor (EDSFF) derived from Intel’s Ruler format. They contain an FPGA-based processor and DRAM, but they fetch and process data stored elsewhere on SSDs using NVM-Express, or an NVM-Express-oF network connection. This architecture is depicted by the second from right block diagram in the SNIA chart above.

This arrangement means that NoLoad devices are limited by the bandwidth of the PCI-Express Gen4 interface to access data from storage, but Eideticom claims that it allows processing and storage to be scaled independently of each other, by adding additional NoLoad devices or SSDs. Data transfers are peer to peer, and therefore impose little or no load on the host processor. Support for NVM-Express-oF also means that NoLoad devices can be located in an external chassis, such as a storage enclosure (Eideticom has validated NoLoad with Broadcom, Mellanox, and QLogic RDMA NICs).

Applications for Eideticom’s NoLoad devices cover both storage and compute applications again, with the company stating it supports computational accelerators for compression, encryption, erasure coding, deduplication, data analytics, AI and ML workloads.

Eideticom announced last year that it had worked with the Los Alamos National Laboratory to develop a storage system using a Lustre/ZFS-based parallel filesystem with NoLoad performing compression, erasure coding, checksumming, and dedupe functions.

Eideticom received seed and strategic financing from Inovia Capital and Molex Ventures in 2019, but exact figures have not been disclosed.

Another solution from Pliops sounds similar on the surface. The Pliops Storage Processor (PSP) is a software accelerator that uses a Xilinx FPGA sitting on a PCI-Express add-in card to accelerate storage functions. However, Pliops is focused on storage-intensive applications such as databases, and is developing PSP instances that replace the storage engine that underpins specific database systems (such as InnoDB for MySQL) with its own storage engine implemented using the FPGA.

In tests using the dbbench benchmark, Pliops claims to have demonstrated a 13X boost in performance versus the RocksDB storage engine, both using a single 1 TB NVM-Express SSD. Pliops has to date received $40 million in funding, including $30 million in Series B funding led by Softbank Ventures Asia, plus investors from the previous round of funding such as Intel Capital, Western Digital Capital, and Xilinx.

One company that has followed a slightly different path is Nyriad. The New Zealand firm is a spinout from the Square Kilometre Array radio observatory, formed to commercialise technology developed for storing and processing the data the observatory produces. That data comprises over 160 TB/sec of astronomical data in real time, with over 50 PB of data per day stored at its regional supercomputing center in Perth, Australia.

The platform is called Nsulate, and uses GPUs to handle the storage processing required for erasure coding schemes to provide fast and reliable storage for hyperscale, big data and high performance computing installations. It is claimed to offer high performance, even with substantial degradation of storage components, and enables highly parallel arrays with hundreds of devices. (A whitepaper on Nsulate is available here.) Nsulate is exposed to the application layer as a Linux block device, making it compatible with Linux filesystems and applications. The company states that the GPUs can be used simultaneously for other workloads such as machine learning. The platform is currently offered as part of pre-built systems by partners such as Boston Limited and EchoStreams, the latter supplying it as part of a 1U server holding up to half a petabyte of storage, capable of data encryption/decryption speeds of up to 50 GB/sec.
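For readers unfamiliar with erasure coding, the simplest possible form is a single XOR parity block, as used in RAID 5: any one lost block in a stripe can be rebuilt from the survivors. The sketch below shows only that basic idea in plain Python on the CPU. It is not Nyriad's algorithm, which is not described here, and schemes that tolerate several simultaneous failures across hundreds of devices need considerably heavier arithmetic, which is presumably where GPU offload earns its keep. The function names are invented for the example.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, one byte column at a time."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def encode(data_blocks):
    """Return the data blocks followed by a single parity block."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def recover(stripe, lost_index):
    """Rebuild the block at lost_index from every surviving block."""
    survivors = [block for i, block in enumerate(stripe) if i != lost_index]
    return xor_blocks(survivors)


if __name__ == "__main__":
    stripe = encode([b"abcd", b"efgh", b"ijkl"])
    # Pretend the device holding block 1 has failed.
    rebuilt = recover(stripe, lost_index=1)
    print(rebuilt == b"efgh")
```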

Nyriad has had over $30 million of funding from investors such as IDATEN Ventures, and at present its website states that the company is preparing for a commercial launch into the market in early 2020.

Computational storage is still a developing field, and it is just one of many emerging technologies aimed at boosting performance in key areas. It is not a panacea for all applications, and it is unlikely to replace GPUs and FPGA accelerators on the PCI-Express bus.

However, it is likely to gain traction if it can prove useful in specific scenarios. These include workloads such as AI inferencing and distributed machine learning training, processing data using Hadoop, or edge deployments where power and space constraints apply.

What we can see already is that solutions are converging on the NVM-Express protocol as the unifying technology, which is hardly surprising given its ascendancy in high performance storage over the past several years. If the SNIA TWG has its way, this will provide a standard way for host systems to discover and configure any computational storage resources they have.

Currently, most vendors in this sector are focused on delivering specific functionality, typically accelerating storage layer features such as compression or erasure coding, making them more of a Fixed Computational Storage Service (FCSS), with perhaps the ability to add further capabilities if the customer desires.

The exception appears to be NGD Systems, which has embedded general purpose compute power into each of its SSDs, effectively turning each drive into a quad-core server in its own right, when combined with the right software. This means that one host server or even an external NVM-Express storage array can become a compute cluster for distributed processing.
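The appeal of pushing work into the drives can be shown with a toy model that counts how many bytes cross the host link in each case. Everything here is illustrative: drives are modeled as plain Python lists of records, the function names are invented, and the 8-byte result size is an arbitrary assumption. It says nothing about how NGD's software actually dispatches work.

```python
def host_side_count(drives, predicate):
    """Host pulls every record over the bus, then filters it locally."""
    moved = 0
    matches = 0
    for records in drives:
        for record in records:
            moved += len(record)          # every record crosses the host link
            if predicate(record):
                matches += 1
    return matches, moved


def in_situ_count(drives, predicate, result_size=8):
    """Each drive filters its own records and returns only a small count."""
    moved = 0
    matches = 0
    for records in drives:
        # This line stands in for code running on the drive's own cores.
        local = sum(1 for record in records if predicate(record))
        moved += result_size              # only the result crosses the host link
        matches += local
    return matches, moved


if __name__ == "__main__":
    drives = [[b"error: disk", b"ok"], [b"ok", b"error: fan", b"ok"]]

    def is_error(record):
        return record.startswith(b"error")

    print("host-side:", host_side_count(drives, is_error))
    print("in-situ:  ", in_situ_count(drives, is_error))
```

With only a handful of tiny records the difference is small, but the host-side traffic grows with the size of the dataset while the in-situ traffic grows only with the number of drives.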

NGD’s Shadley explains it like this: “If you have a large amount of stored data that you need to do something with, our tagline for moving forward is – we store massive amounts of data, and we return just the value of that data to you.”
