It is hard to say what will happen first: Switching and routing will merge, or an independent networking operating system that can do both will emerge. If Arrcus, which dropped out of stealth mode last July with a shiny new network operating system that can provide both switching and routing functions on merchant silicon, has anything to say about it, both will happen at the same time. And it won’t matter which is the primary cause.

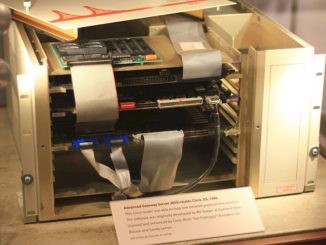

Switching was invented first for telephone networks back in the 1920s, but datacenter switching as we know it today got its start with ARPANET in 1969 and was used to link multiple computers together so they could share data. The router, which was created independently at Stanford University and MIT back in 1981, is used to link multiple networks together – a kind of switch for switches, as it were, but one that operates at Layer 3 of the network protocol stack instead of Layer 2 where switching plays.

Since that time, switching and routing have been at war with each other. In recent years, router functionality has been pulled into switch ASICs and their operating systems, mostly because hyperscalers and cloud builders have much more complex dataflows than the typical enterprise. They are sick and tired of paying huge sums of money for router backbones when switches with Layer 2 functions gussied up with Layer 3 routing capabilities can do the job for a lot less money.

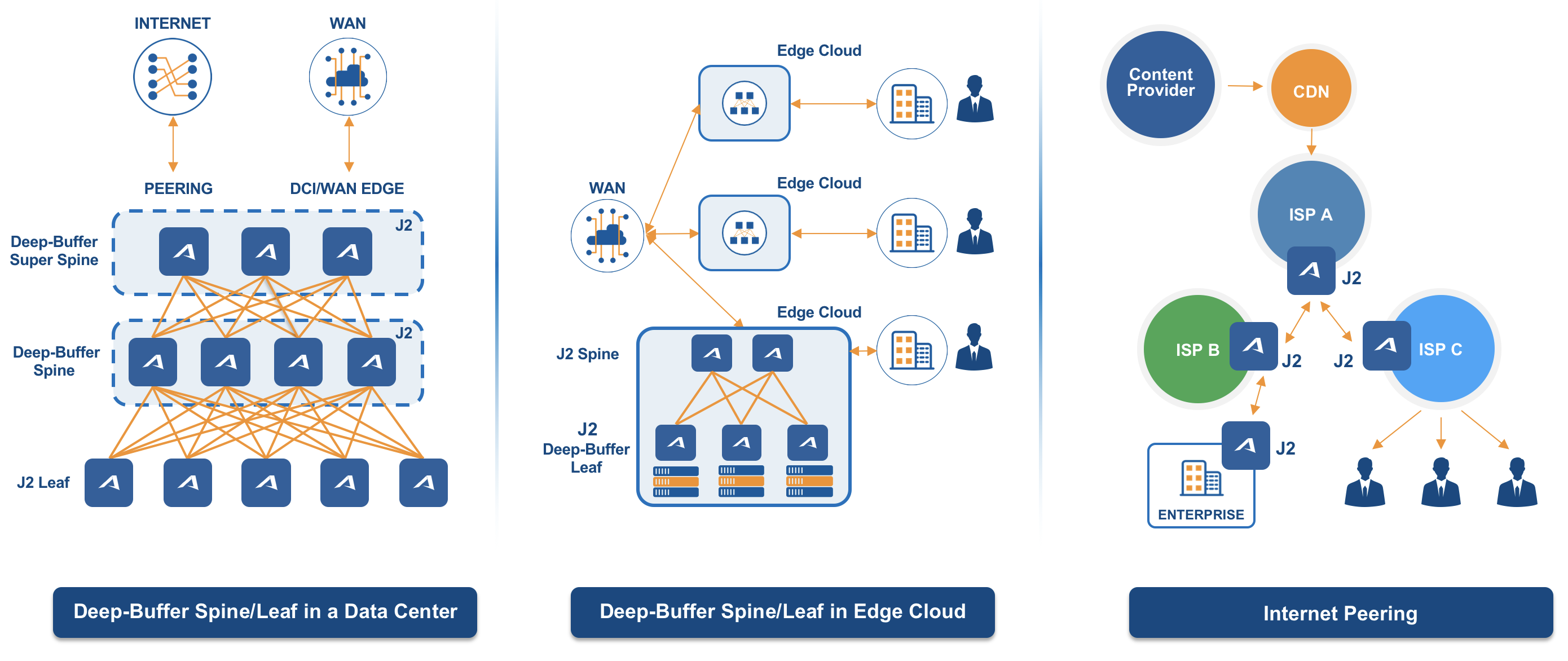

In essence, the datacenter is starting to look more like our homes when it comes to connectivity: You have one big pipe coming in from the Internet, and you use wired and wireless routers to link devices to it directly. The old adage, switch when you can and route when you must, could get turned on its head with emerging network architectures, which could look more like route when you can and switch when you must. Or, even more precisely, use shallow ASIC buffers when you can and use deep buffers when you must, because the latency requirements of the applications, be they on the edge or in the datacenter, are what will drive architectural decisions and ASIC selection. Switch and router vendor choice will be tertiary.

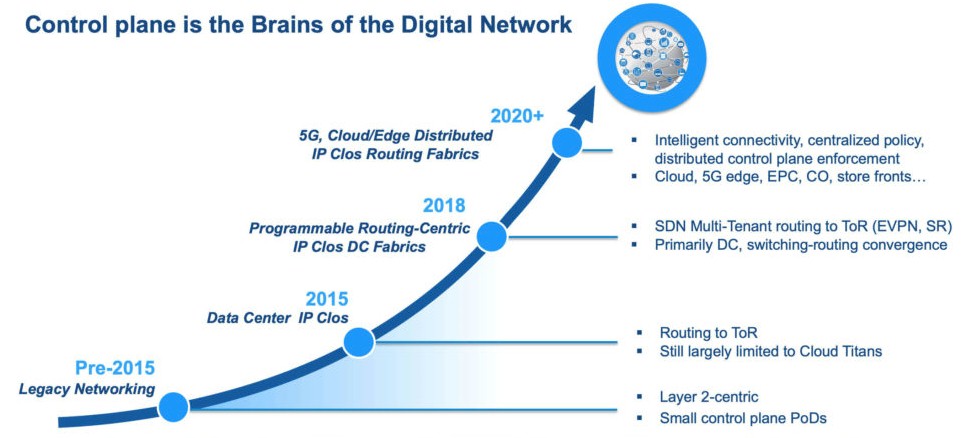

This radical change in network architecture has been made possible through the evolution of routing functions in merchant switching silicon, something that has absolutely been commanded from on high by the hyperscalers and cloud builders who were not going to spend many hundreds of thousands of dollars for routers to interlink their vast compute and storage farms. They want wider and flatter Clos networks that mix Layer 2 switching and Layer 3 routing in a more dynamic fashion and across multiple ASICs where necessary.

And if they had the choice, they would have a single stack of switching and routing software running across hardware from multiple OEMs or ODMs. This is the founding principle of Arrcus and the design goal of its ArcOS network operating system, which we detailed here earlier this year when it was ported to the “Tomahawk 3” Ethernet switch ASIC from Broadcom, which was designed for hyperscalers and cloud builders to provide cheaper 100 Gb/sec ports that are often carved down to 25 Gb/sec links to the server. ArcOS supported the enterprise-class “Trident 3” chips from Broadcom initially, which have a deeper protocol set and low latency, and added support for the “Jericho+” deep buffer ASICs from Broadcom in October last year. And now, this week, ArcOS has been added to the “Jericho 2” deep buffer switch, which can have gobs of HBM memory hooked to it for very deep buffers indeed and which supports 100 Gb/sec and 400 Gb/sec ports. We think that once the impending “Trident 4” ASICs, which were unveiled in June, ship in products, it won’t be too long before Arrcus supports them, too.

“For the first time, both enterprises and service providers have the full toolkit to pick and choose the combination that they want for the specific use cases that they are trying to address,” Murali Gandluru, vice president of product management at Arrcus, tells The Next Platform. “Where there is a requirement for high fidelity storage – in traditional datacenters or at the edge close to the user, it doesn’t matter – they will require a Jericho-based platform. They may build a spine using high density switching ASICs to interconnect those, like one of our customers is actually doing, or they may need the deep buffer all the way to the edge of that site because they are combining peering capabilities onto that spine. There is going to be very many interesting things happening, and for the first time in the merchant silicon toolkit you actually have the full range of possibilities and flexibility. And ArcOS is unique in that it can span it all. For example, you can actually build a full, end to end, cookie cutter edge site with ArcOS – from superspine to spine to leaf – without having to go through multiple operating systems.”

As we have pointed out before, the changes coming to datacenter networking are not just about disaggregating the network operating system from the underlying hardware and exposing the APIs in that operating system to the outside world. This is a big part of it, and there are those that think the future network operating system in the datacenter should be open source, creating a base of commodity hardware and probably a single dominant vendor of support for that open source network software stack. Arrcus certainly agrees that there should be one NOS that can span all of the important hardware. So in the end, it’s not so much about switching versus routing, but getting proper routing support – in terms of the silicon’s capabilities and the software’s ability to exploit it – into switch ASICs.

So what took so long to get here, and why have Cisco Systems and Juniper Networks ruled routing for so long?

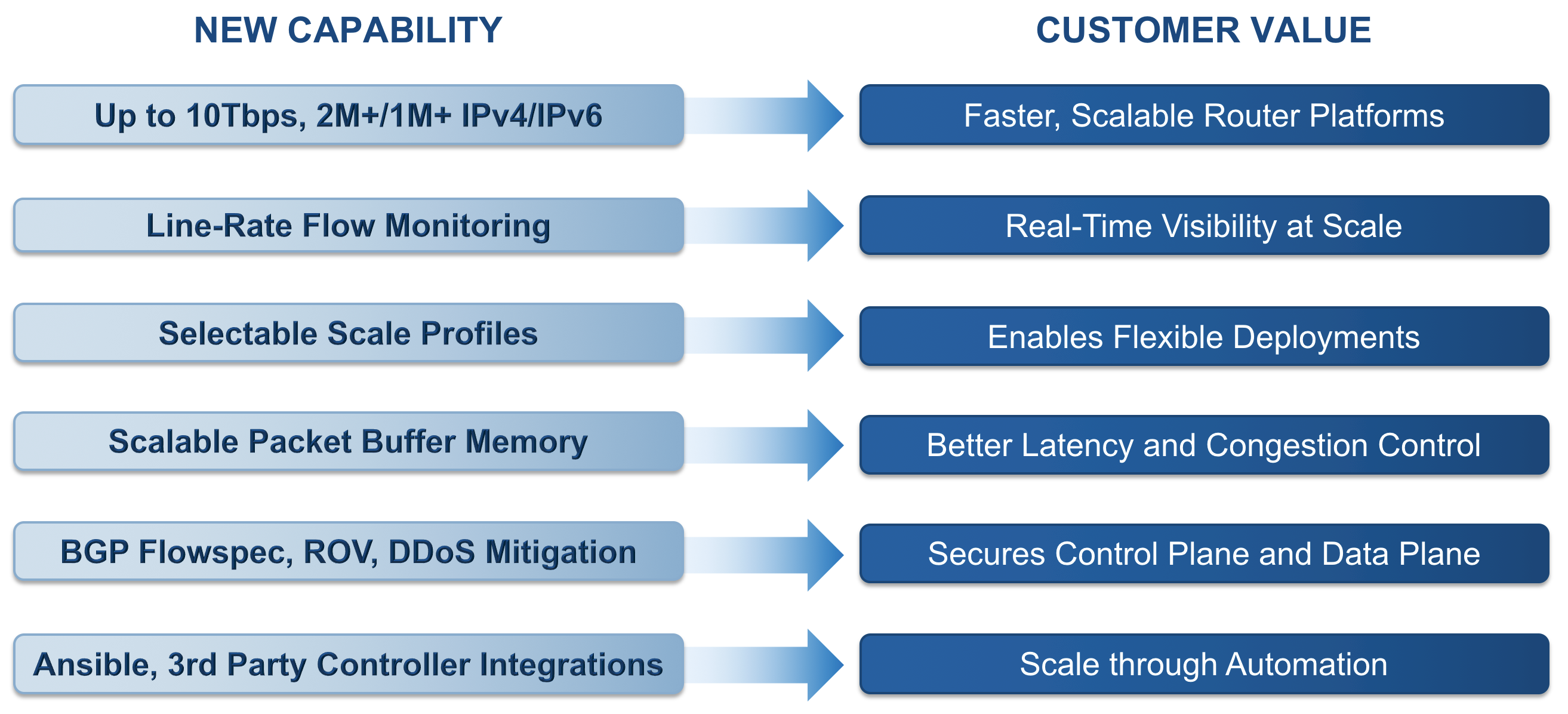

“The reason it has not happened until now is because there was no alternative,” explains Gandluru. “We are at an inflection point where Clos architectures using spines and leaves are now being mimicked in both switching and routing, and merchant silicon combined with ArcOS has a great shot of competing with the custom ASICs from Cisco, Juniper, and others. So now we can go on the offense and provide customers with routing platforms that are a fraction of the cost of what Cisco or Juniper charge. You could not do that before. The prior generation of the routing silicon did not have many routing capabilities, like line rate flow modeling, which is absolutely required in the Internet and in the backbone. Now you have them. This comes in parallel with other events. First, the routing control plane came from the backbone or the edge into the datacenter to make the application interaction easy. Then the chipsets in the merchant silicon side caught up and exceeded the custom silicon. Now you have the control plane plus that combination of better ASICs addressing all of these edge environments. That is the big thing that will take down the cost of ownership for networks.”

Here is a list of the routing capabilities that have been added to ArcOS to exploit the routing functions in the Jericho 2 chips:

The ODMs are catching on fast to this wave, and are adopting ArcOS as an option on the switches (and switch/router hybrids) that are based on the Broadcom chips that Arrcus supports. Celestica, Edgecore, and Delta are supporting ArcOS on the Broadcom chips, with Quanta Computer soon to follow, we reckon, based on the fact that it supports Trident 3 ASICs already and will want to add others.

The venture capitalists that have been funding Arrcus have also seen this pattern, and have just kicked in another $30 million in Series B funding. This round was led by Lightspeed Venture Partners, as was its Series A round last year, bringing its total to $49 million. General Catalyst and Clear Ventures have also kicked in some money. This Series B round was oversubscribed and all of the investors who kicked in funds the first time wanted to do so again, which is always a good sign. The money will be used to build out engineering, sales, and marketing to expand the capabilities of ArcOS and, presumably, to make it available on ASICs outside of those from Broadcom. The Tofino chipsets from Barefoot Networks (soon to be part of Intel) and the Teralynx chipsets from Innovium are obvious choices, but there are others.

According to Devesh Garg, co-founder and chief executive officer at Arrcus, the company now has around 50 employees and will be doubling that between now and the end of the year. A key new hire is Arthi Ayyangar, who has worked at Juniper and who more recently was director of product management at Arista Networks in charge of its Extensible Operating System (EOS) for its switches.

ArcOS is in production at a handful of service providers and currently it has a “double digit” count of total customers, with “four or five handfuls” of proofs of concept running and an even broader pipeline. This is about what you expect for a ramp of a company selling systems software on a select number of pieces of hardware this early in ramping up the hockey stick to an acquisition or an initial public offering.

This week, rounding out the switching and routing software stack from Arrcus, the company is announcing a freestanding add-on tool called ArcIQ, which is an AI-driven network operations center (NOC) platform akin to those that hyperscalers, cloud builders, and service providers have coded for themselves. ArcIQ does all kinds of higher level management and monitoring, including watching network health across edge, cloud, and datacenter network devices and tracking assets in these facilities. The AI comes into play in taking telemetry pulled off the network and using it to shape traffic as it changes. ArcIQ can also help mitigate distributed denial of service attacks using the FlowSpec feature of the BGP protocol that is widely deployed by hyperscalers and cloud builders. By the way, you need line rate flow streaming to do this, which is only becoming available with the latest Broadcom ASICs.
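
To make the FlowSpec idea a little more concrete, here is a minimal Python sketch of how a flow rule of that general shape can pick out attack traffic and decide what to do with it. It is an illustration only, not how ArcIQ or ArcOS works. The `Packet` and `FlowRule` classes, the `decide` function, and the example addresses are all hypothetical names invented for this sketch, and the rule carries only a few of the match fields that real FlowSpec rules can use. The one convention borrowed from the FlowSpec specification is that a traffic rate of zero means discard.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import List, Optional


@dataclass(frozen=True)
class Packet:
    """Hypothetical, minimal view of a packet header."""
    src: str
    dst: str
    protocol: int   # IP protocol number, for example 6 for TCP, 17 for UDP
    dst_port: int


@dataclass(frozen=True)
class FlowRule:
    """Hypothetical, simplified stand-in for a FlowSpec-style rule."""
    dst_prefix: str
    protocol: Optional[int] = None   # None means "match any protocol"
    dst_port: Optional[int] = None   # None means "match any port"
    rate_limit_bps: float = 0.0      # 0 means discard the matching traffic

    def matches(self, pkt: Packet) -> bool:
        # Every field that is set on the rule must match the packet.
        if ip_address(pkt.dst) not in ip_network(self.dst_prefix):
            return False
        if self.protocol is not None and pkt.protocol != self.protocol:
            return False
        if self.dst_port is not None and pkt.dst_port != self.dst_port:
            return False
        return True


def decide(rules: List[FlowRule], pkt: Packet) -> str:
    """Return the action of the first matching rule, else forward."""
    for rule in rules:
        if rule.matches(pkt):
            return "discard" if rule.rate_limit_bps == 0 else "rate-limit"
    return "forward"


# Example: drop a UDP flood aimed at port 53 of one prefix,
# and throttle everything headed to a second prefix.
rules = [
    FlowRule(dst_prefix="203.0.113.0/24", protocol=17, dst_port=53),
    FlowRule(dst_prefix="192.0.2.0/24", rate_limit_bps=1_000_000.0),
]
attack = Packet("198.51.100.7", "203.0.113.10", 17, 53)
normal = Packet("198.51.100.7", "203.0.113.10", 6, 443)
throttled = Packet("198.51.100.7", "192.0.2.5", 6, 80)

for pkt in (attack, normal, throttled):
    print(pkt.dst, pkt.dst_port, decide(rules, pkt))
```

In a real network, rules like these are distributed to routers over BGP and enforced in the forwarding hardware rather than evaluated in software one packet at a time, which is why line rate support in the ASIC matters.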

The updated ArcOS will be in production this quarter, with ArcIQ starting to ship to early adopters this quarter and general availability in the fourth quarter.

Note: This and other topics in storage/networking will be the subject of The Next I/O Platform on September 24, 2019 in San Jose, CA. Learn more about the technical conversation-driven day.

When I mainly used routers, I ran into many problems. Then I switched to the hyperscaler approach, which is better than a switch/router. You can also find more suggestions about this on the internet.

The last I heard, there are no ASICs that can perform NAT. Switches have traditionally done things using ASICs and routers have relied on their CPU. Unless you’re saying there’s an ASIC that does NAT, nothing has changed.