Tech vendors often like to boast about being first movers in a particular market, saying that leading the charge puts them at a great advantage over their competitors. It doesn’t always work that way, but sometimes it does.

A case in point is Amazon Web Services (AWS), which officially launched in 2006 with the release of the Simple Storage Service (S3) after several years of development and with it kicked off what is now the fast-growing and increasingly crowded public cloud space. Eleven years later, AWS owns just over 44 percent of the market, according to CEO Andy Jassy, pointing to numbers from market research firm Gartner. The next nine combined – which include Microsoft Azure, Google Cloud Platform, Alibaba Cloud and Oracle Cloud – account for less than half that number.

In the most recent financial quarter, AWS saw revenue jump 81 percent, to $1.82 billion, with income rising 407 percent, to $391 million. By most measures, AWS is the undisputed king of the space, and at this week’s AWS re:Invent conference in Las Vegas, Jassy and the rest of the company are pressing their advantage.

The cloud provider is unleashing a broad array of new services that touch on everything from compute and databases to data analytics, machine learning and the internet of things (IoT), most of which were introduced during Jassy’s lengthy keynote address. Still, the CEO made sure to briefly highlight the scope of the company’s dominance, noting its $18 billion run rate, 42 percent growth rate and the millions of customers that are leveraging AWS’ infrastructure-as-a-service (IaaS), which include many of the largest players in multiple industries.

“What you’ll see is most ISVs will adapt their software to work on one technology infrastructure platform,” Jassy said. “Some will do two, very few will do three, and they all start with AWS because we’re such a significant market leader. We have very significant market share and leadership position.”

What followed was more than two hours of introductions of new services that Jassy said add capabilities that other providers can’t compete with. The services are designed to give customers more options in the instances they use, to derive value faster from data that they can now more easily move and manage, and to leverage emerging technologies to get more value from the workloads they want to run.

Technology builders “want to be free from the abusive relationships that stop them from doing what they want to do,” he said. “For the technology builders, it’s the old guard technology providers optimizing for themselves. For technology builders, AWS … radically changes what’s possible, not just vs. other alternatives that are out there today, but vs. what’s been possible in the past.”

Among the new offerings was the public preview of bare metal instances in AWS’ Elastic Compute Cloud (EC2) service, which will enable customers to run their applications – primarily workloads that aren’t virtualized, need specific hypervisors or aren’t allowed to use virtualization – directly on the Intel Xeon-powered hardware. They get more control over the servers they use in the AWS cloud while also retaining access to such features as Amazon Elastic Block Store (EBS) volumes, Virtual Private Cloud, Elastic IP addresses and Elastic Load Balancers. The initial bare metal instances will be in AWS’ i3 instance lineup, with plans to expand to other instance families in the future.

Other new instances include the H1 family of EC2 storage-optimized instances aimed at data-intensive workloads like MapReduce, distributed file systems, Apache Kafka and big data clusters. They’re powered by Xeon “Broadwell” chips and offer up to 64 vCPUs, 16TB of storage and 256GB of DRAM. The M5 lineup is the latest generation of EC2 general-purpose instances and is based on Intel’s Xeon SP Platinum 8000 “Skylake” processors.

The cloud provider earlier this month unveiled new C5 instances powered by Intel’s Skylake chips, and in October became the first to introduce Nvidia’s Volta GPU accelerators into the infrastructure.

AWS also is building on the container service it began working on in 2014, eventually launching the Elastic Container Service (ECS), which is integrated with many of the other services from the cloud provider. The service is growing, Jassy said, with hundreds of millions of containers being launched every week. Now the company is bringing in support for the Kubernetes container orchestration technology in its ECS for Kubernetes (EKS) service, making it a fully managed service. Kubernetes has become the top orchestration technology in a relatively short amount of time.

“Over the last 18 to 24 months, a lot of people have become interested in Kubernetes,” Jassy said. “The majority of Kubernetes that runs in the cloud today runs on top of AWS. And yet, for customers who want to run Kubernetes on top of AWS, there’s work to do. You have to deploy a Kubernetes master and Kubernetes workers, and if you want high availability, you have to do that across multiple availability zones, you’ve got to configure them to talk to each other, and load balance and fail over. It’s just work.”

EKS will automatically deploy Kubernetes masters across multiple availability zones to avoid a single point of failure. It provides automatic patching and upgrades while leaving customers in control of when they happen, and it currently integrates with some ECS features, with more integration coming in the future. Another new service is Fargate, which enables customers to run containers without having to manage server clusters. Rather than having to do such tasks as choosing an instance type or deciding when to scale the clusters, customers define their applications as a Task, which includes lists of containers, CPU, memory and networking information and identity and access management policies, and then launch the Task. It’s available now on ECS and will come to EKS next year.
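To make the Task idea concrete, here is a minimal Python sketch of what such a definition might look like as data. It is an illustration only: the field names are modeled on the ECS task definition format and should be checked against current AWS documentation, and the helper `build_task_definition`, the image name, the account number and the role ARN are all hypothetical. Nothing here calls AWS; it only assembles and prints the description.

```python
import json


def build_task_definition(family, containers, cpu, memory, task_role_arn):
    """Assemble a Fargate-style task description as a plain dictionary.

    Field names are assumptions modeled on ECS task definitions.
    """
    return {
        "family": family,                        # name of the application
        "requiresCompatibilities": ["FARGATE"],  # no instances to pick or manage
        "networkMode": "awsvpc",                 # networking information
        "cpu": str(cpu),                         # CPU units for the whole task
        "memory": str(memory),                   # memory in MiB for the whole task
        "taskRoleArn": task_role_arn,            # identity and access management role
        "containerDefinitions": containers,      # the list of containers
    }


# Hypothetical container and role, for illustration only.
web = {
    "name": "web",
    "image": "example/web:latest",
    "portMappings": [{"containerPort": 80}],
}
task = build_task_definition(
    family="demo-app",
    containers=[web],
    cpu=256,
    memory=512,
    task_role_arn="arn:aws:iam::123456789012:role/demo-task-role",
)

print(json.dumps(task, indent=2))
```

The point of the sketch is what is absent: there is no instance type, cluster size or scaling rule anywhere in the description, only the containers and the resources they need.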

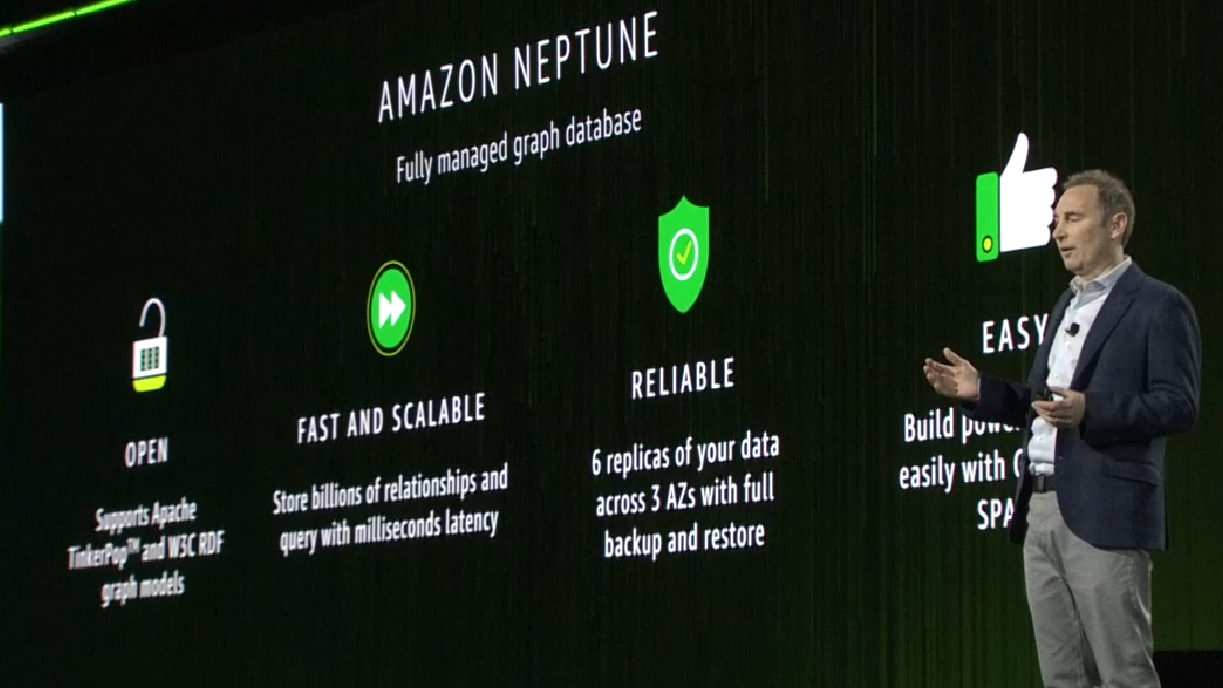

Databases also were a focus, with the Amazon Aurora database now able to scale both database reads and writes across multiple datacenters through its new multi-master feature. Jassy also introduced Amazon Aurora Serverless, eliminating the need for customers to provision or manage database capacity. Instead, they specify the capacity needs of the application and the database automatically starts, scales and shuts down based on the specifications, and they pay by the second for the database capacity they use. Global Tables enables the Amazon DynamoDB managed service to offer multi-master, multi-region reads and writes, while DynamoDB Backup and Restore provides on-demand continuous backup. In addition, Jassy introduced the public preview of Neptune, a fully managed graph database service. It allows users to store billions of relationships and query them with millisecond latency.

With Neptune, AWS makes six replicas of the data across three availability zones and continually backs the data up to S3. The service will support both the Gremlin and SPARQL query languages.

“The landscape of the way people use databases is really different than what’s been the case over the last number of years,” he said. “You don’t use relational databases for every application. That ship has sailed. Modern companies that use modern technologies are not only going to use multiple databases in all their applications, but many are going to use these multiple types of databases in a single application.”

In storage, Jassy unveiled S3 Select, an API that enables customers to select what data to retrieve from an object, and Glacier Select, which allows queries to run on data in AWS’ Glacier archival storage technology.
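The idea behind a select-style API can be sketched locally in a few lines of Python. This is not the S3 Select API and it does not talk to AWS; the function `select_from_object`, the sample CSV and its column names are hypothetical stand-ins meant only to show the difference between fetching a whole object and getting back just the rows and columns that were asked for.

```python
import csv
import io

# Hypothetical object contents: a small CSV standing in for a stored object.
OBJECT_BODY = """id,region,bytes
1,us-east-1,120
2,eu-west-1,300
3,us-east-1,80
"""


def select_from_object(body, columns, predicate):
    """Return only the requested columns of the rows that match the predicate."""
    reader = csv.DictReader(io.StringIO(body))
    return [
        {column: row[column] for column in columns}
        for row in reader
        if predicate(row)
    ]


# Ask for two columns of the rows from one region instead of the whole object.
selected = select_from_object(
    OBJECT_BODY,
    columns=["id", "bytes"],
    predicate=lambda row: row["region"] == "us-east-1",
)
print(selected)
```

In a real service the filtering would happen on the storage side, so only the selected subset would cross the network; here both steps run in one process purely for illustration.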
