Twice a year, the TOP500 project publishes a list of the 500 most powerful computer systems, aka supercomputers. The TOP500 list is widely considered to be HPC-related, and many analyze the list statistics to understand the HPC market and technology trends.

As the rules of the list do not preclude non-HPC systems from being submitted and listed, various OEMs have regularly submitted non-HPC platforms in order to improve their apparent market position in the HPC arena. Thus, the task of analyzing the list for HPC markets and trends has grown more complicated.

In 2007, I published an article titled The New Face of the TOP500, in which I described the historical evolution of the TOP500 supercomputers list: what had started out in 1993 as a representation of the high-performance computing (HPC) market had since evolved into a list that included around 40 percent non-HPC systems. At the time, as a young HPC evangelist focusing on high-performance computing, high-speed interconnects, leading-edge technologies, and performance characterizations, I felt that the TOP500 list should be dedicated to HPC platforms, as had been stated in its charter at its creation.

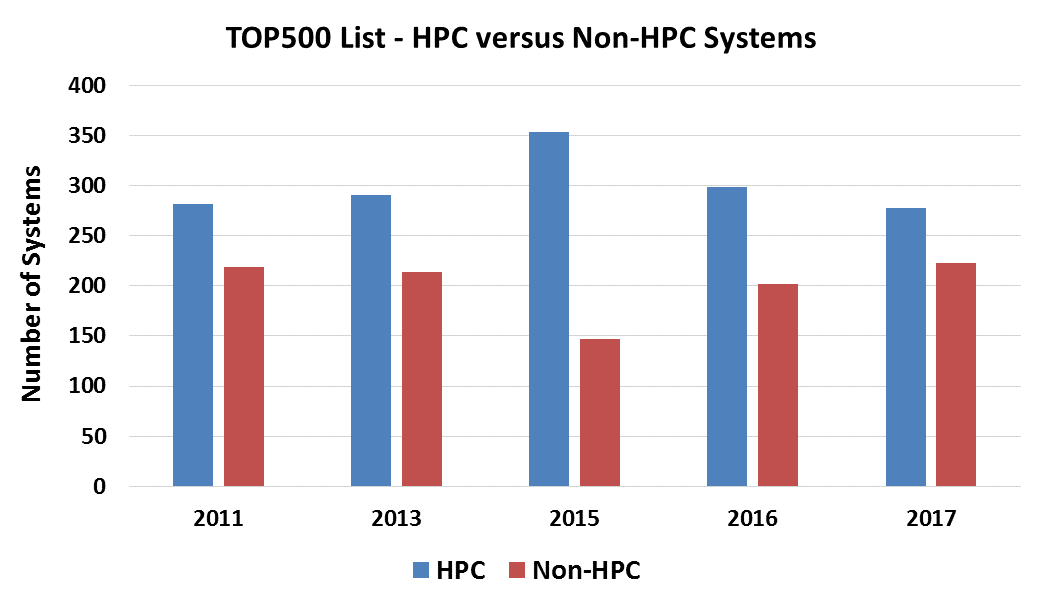

An interesting change occurred at the end of 2014, when IBM sold its System x x86 server business to Lenovo. The number of non-HPC system submissions to the TOP500 list decreased significantly in 2015, and non-HPC systems represented only 30 percent of the systems on the TOP500 that year. However, this trend did not last long, and once again we are witnessing an increase in the number of non-HPC system submissions. In the November 2017 list, non-HPC platforms account for more than 45 percent of the TOP500 systems, as shown in the figure below:

For several years now, a Chinese HPC organization has published the China TOP100 Supercomputers list. In the most recent list, published in October 2017, apart from a few top HPC systems, the majority of the systems are related to Chinese hyperscale and cloud companies, making the list less relevant to the HPC market. As a result, the China TOP100 authors have announced that the list will not be carried forward and that the October 2017 release will be the last one.

It is probably common knowledge that if US hyperscale companies were to submit their systems to the TOP500 list, they could probably take over the entire list. This has not happened yet, but it clearly could. [Editor’s note: We did that math in our own coverage of the TOP500 rankings here. And yes, collectively they could do this with their approximately 8 million server nodes.]

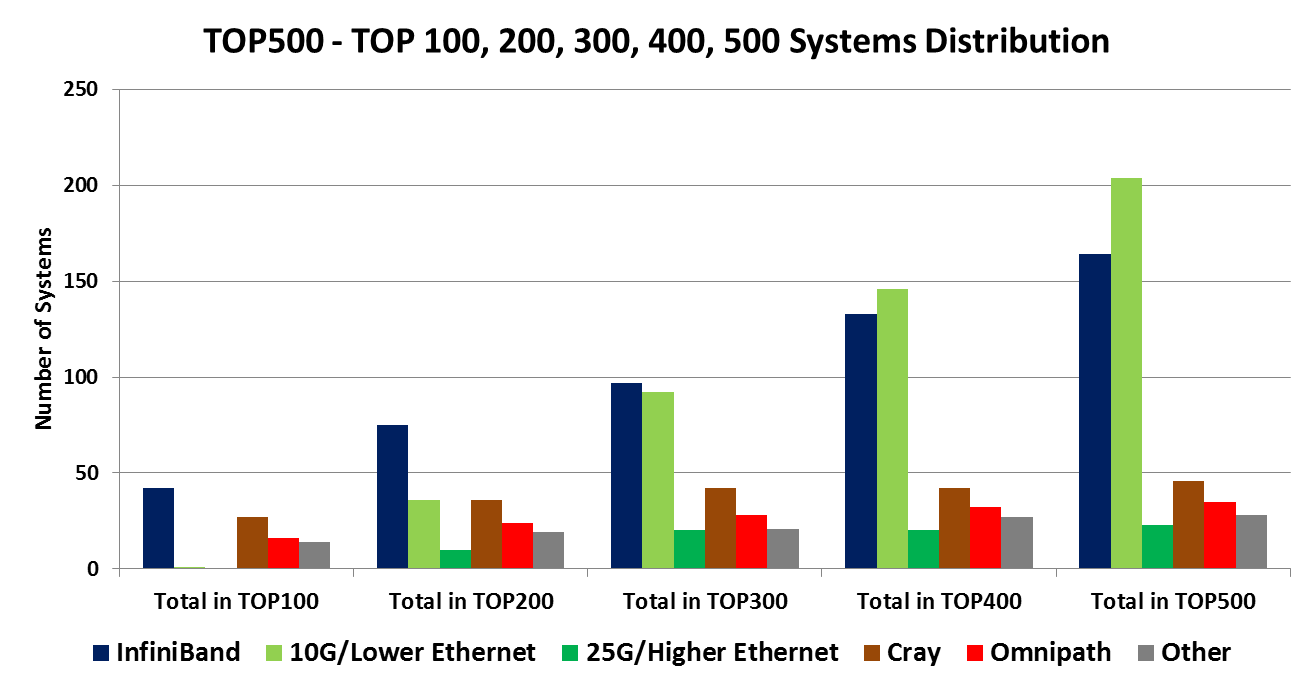

So what can we actually learn from the TOP500 list regarding the HPC market? To do the right analysis, we need to avoid looking at the list as a whole; rather, we need to analyze subsets of the information in depth and compare those results with the corresponding information from previous lists. Not doing so can lead to erroneous conclusions. For example, the November 2017 TOP500 list includes, for the first time, 19 systems based on 25 Gigabit Ethernet, all of which are manufactured by a single OEM and installed at a single hyperscale company in China. (By the way, all 19 systems use Mellanox 25 Gigabit Ethernet solutions.) This of course does not mean that only one OEM has deployed 25 Gigabit Ethernet, nor that only one hyperscale company uses it. The inclusion of these 19 systems on the TOP500 list has further skewed the appearance of HPC platforms on the list; it is the main reason for the reduction in the number of listed InfiniBand systems, Cray systems, Omni-Path systems, and other platforms with proprietary interconnects.
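The difference between a whole-list view and a subset view can be sketched in a few lines of Python. This is a minimal illustration, not the method used for the figures in this article: the records are made-up toy data, and the `segment` field is an assumption, since the TOP500 list does not label systems as HPC or non-HPC and an analyst would have to classify each entry by hand.

```python
def interconnect_share(systems, interconnect, segment=None):
    """Fraction of systems using `interconnect`, optionally within one segment.

    `segment` is a hypothetical analyst-assigned label ("hpc" or "non-hpc").
    """
    subset = [s for s in systems if segment is None or s["segment"] == segment]
    if not subset:
        return 0.0
    hits = sum(1 for s in subset if s["interconnect"] == interconnect)
    return hits / len(subset)


# Toy records for illustration only; these are NOT real TOP500 entries.
previous_list = [
    {"name": "A", "interconnect": "InfiniBand", "segment": "hpc"},
    {"name": "B", "interconnect": "InfiniBand", "segment": "hpc"},
    {"name": "C", "interconnect": "Ethernet", "segment": "non-hpc"},
]
current_list = [
    {"name": "A", "interconnect": "InfiniBand", "segment": "hpc"},
    {"name": "D", "interconnect": "Ethernet", "segment": "non-hpc"},
    {"name": "E", "interconnect": "Ethernet", "segment": "non-hpc"},
]

# Whole-list view: the InfiniBand share appears to fall between lists.
whole_prev = interconnect_share(previous_list, "InfiniBand")
whole_curr = interconnect_share(current_list, "InfiniBand")

# HPC-only view: the share within the HPC subset is unchanged.
hpc_prev = interconnect_share(previous_list, "InfiniBand", segment="hpc")
hpc_curr = interconnect_share(current_list, "InfiniBand", segment="hpc")

print(f"whole list: {whole_prev:.2f} -> {whole_curr:.2f}")
print(f"HPC only:   {hpc_prev:.2f} -> {hpc_curr:.2f}")
```

In the toy data, a fixed-size list that gains non-HPC Ethernet entries shows a falling InfiniBand share overall, even though nothing changed among the HPC systems that remain listed.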

Looking at the TOP500 list’s top 100 systems reveals that InfiniBand connects two of the top five systems (#1 from China and #4 from Japan); moreover, nearly 45 percent of the systems are InfiniBand connected. Only a single system is Ethernet connected, in the lower ranking of the top 100 systems, and it is related to a Chinese hyperscale company.

The figure below provides a mapping of the different interconnects in the top 100, 200, 300, 400 and 500 systems on the TOP500 list. The majority of the proprietary interconnects appear higher on the list, and the majority of Ethernet interconnects appear lower on the list. In most cases, HPC sites prefer to be listed as high as possible on the list, whereas non-HPC platforms are often submitted to increase the number of entries. InfiniBand systems are spread across the entire list due to the vast adoption of the technology and its ability to support both large-scale and departmental-scale compute platforms very efficiently.
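A cumulative per-tier breakdown of this kind can be sketched as follows. The field names `rank` and `interconnect` are assumptions about how an analyst might store the list, the records are toy data rather than real TOP500 entries, and the tier boundaries are shrunk to 2, 4 and 6 so the example stays readable.

```python
from collections import Counter


def interconnect_by_tier(systems, tiers=(100, 200, 300, 400, 500)):
    """For each cutoff N in `tiers`, count interconnects among the top N systems."""
    return {
        cutoff: dict(
            Counter(s["interconnect"] for s in systems if s["rank"] <= cutoff)
        )
        for cutoff in tiers
    }


# Toy records for illustration only; these are NOT real TOP500 entries.
toy_list = [
    {"rank": 1, "interconnect": "Custom"},
    {"rank": 2, "interconnect": "InfiniBand"},
    {"rank": 3, "interconnect": "InfiniBand"},
    {"rank": 4, "interconnect": "Custom"},
    {"rank": 5, "interconnect": "Ethernet"},
    {"rank": 6, "interconnect": "Ethernet"},
]

breakdown = interconnect_by_tier(toy_list, tiers=(2, 4, 6))
for cutoff, counts in breakdown.items():
    print(f"top {cutoff}: {counts}")
```

Because each tier is cumulative, an interconnect that shows up only in the last tier, as Ethernet does in the toy data, is concentrated at the bottom of the list.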

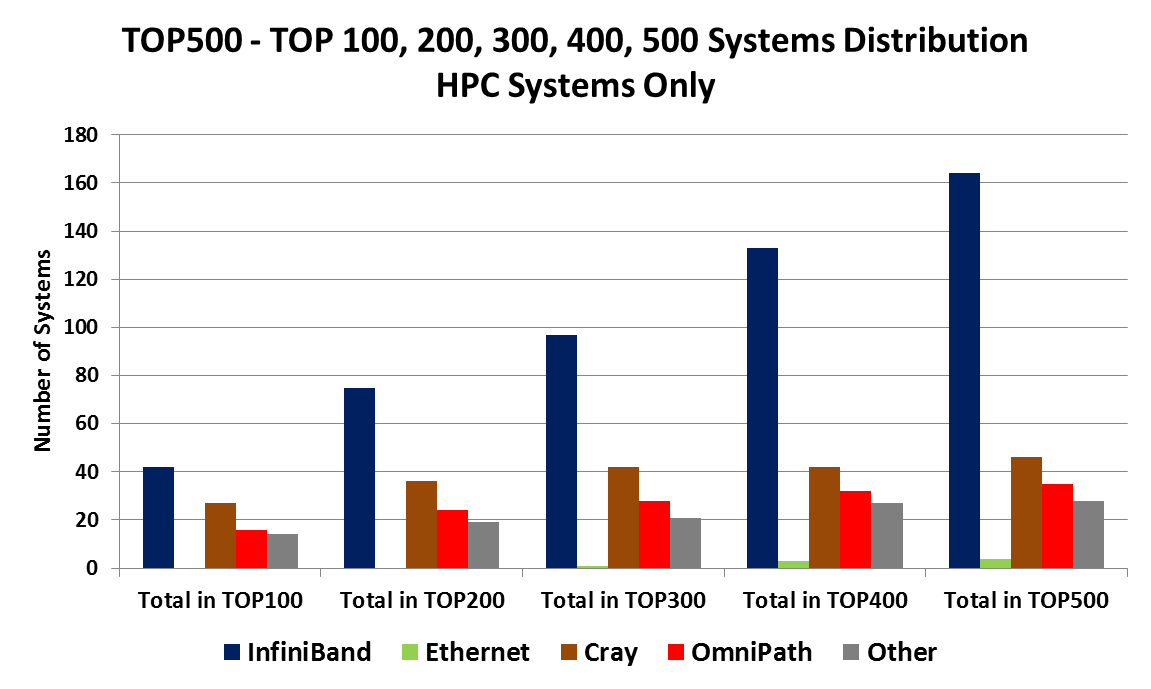

Figure 3 below shows the same information as Figure 2, but only for the HPC platforms appearing on the TOP500 list (excluding non-HPC platforms).

To extract the more interesting information, one needs to look at the systems newly added to the TOP500 list. This can be a tedious task, as the list includes systems that have been on it for several years. (Most of the Ethernet systems do not stay on the list for long, but the higher-ranked HPC platforms can remain for several years.) The November 2017 list includes HPC systems that were deployed in 2010.

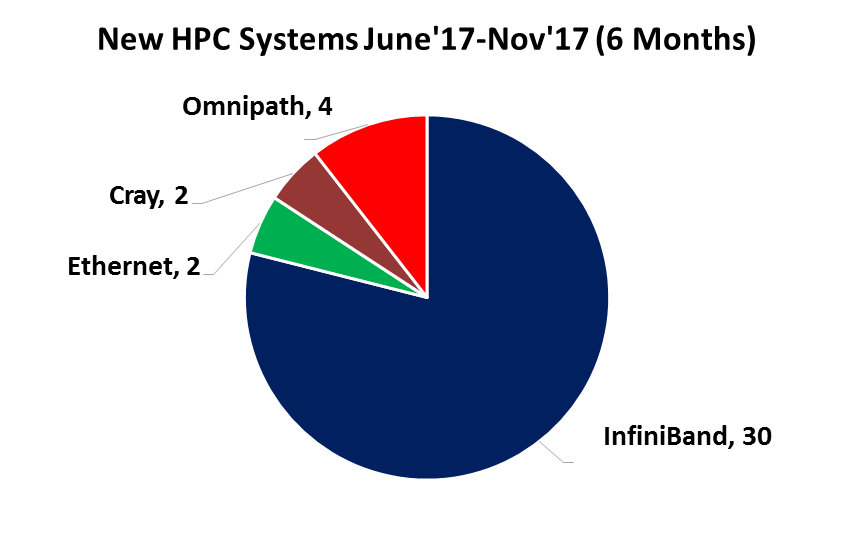

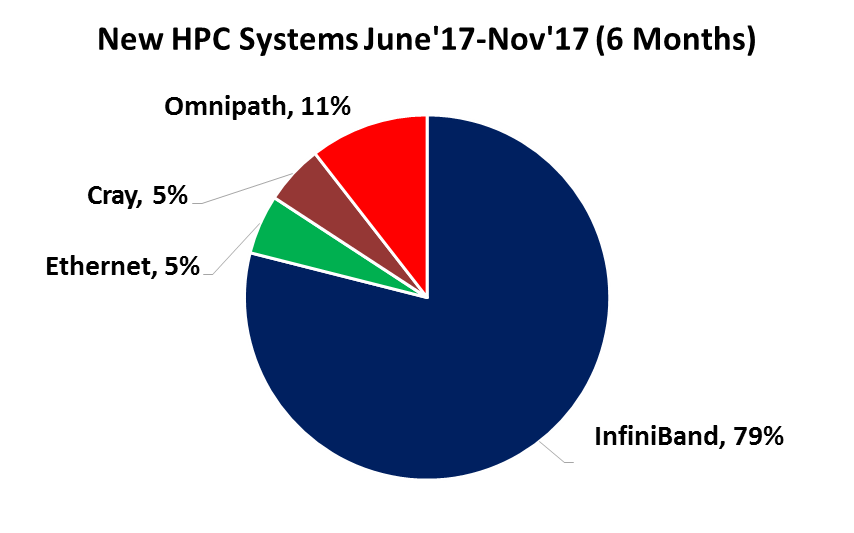

Scanning for the new HPC systems that have been added during the last six months (from June 2017 to November 2017) reveals 30 new InfiniBand systems, four new Omni-Path systems, two new Cray custom interconnect systems and two new Ethernet systems (see Figure 4 and Figure 5).
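The scan for new entries amounts to a set difference between two consecutive lists. The sketch below assumes each record carries a stable `system_id` that persists from one list to the next, plus an analyst-assigned `segment` label; both fields are hypothetical, and the records are toy data, not the systems counted above.

```python
from collections import Counter


def new_systems(previous, current, key="system_id"):
    """Return the entries of `current` whose identifier is absent from `previous`."""
    seen = {s[key] for s in previous}
    return [s for s in current if s[key] not in seen]


# Toy records for illustration only; these are NOT real TOP500 entries.
june_list = [
    {"system_id": "s1", "interconnect": "InfiniBand", "segment": "hpc"},
    {"system_id": "s2", "interconnect": "Ethernet", "segment": "non-hpc"},
]
november_list = [
    {"system_id": "s1", "interconnect": "InfiniBand", "segment": "hpc"},
    {"system_id": "s3", "interconnect": "InfiniBand", "segment": "hpc"},
    {"system_id": "s4", "interconnect": "Omni-Path", "segment": "hpc"},
    {"system_id": "s5", "interconnect": "Ethernet", "segment": "non-hpc"},
]

added = new_systems(june_list, november_list)

# Keep only the new entries that the analyst classified as HPC.
new_hpc_counts = dict(
    Counter(s["interconnect"] for s in added if s["segment"] == "hpc")
)
print(new_hpc_counts)
```

Matching on an identifier rather than on rank matters here, because a system that stays on the list usually changes rank between releases.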

The TOP500 list aims to rank the 500 highest performing supercomputers in the world, but the list has shifted away from focusing only on HPC systems to also represent systems used for other purposes. We should expect this trend to increase in the future due to hyperscale infrastructures that allow OEMs to expand their share of the list in a simpler way than before.

As shown above, we can no longer perform a simple review of the TOP500 statistics to gain a true understanding of HPC market trends. Therefore, to analyze the vital information in the recent TOP500 list and extract the real technology and market trends, one needs to perform a tedious data analysis. Hopefully, in the future some of this work can be done by an HPC deep learning system. We can also hope that the TOP500 authors will find a way to focus on the HPC supercomputers on the list.

Gilad Shainer has served as Mellanox’s vice president of marketing since March 2013. Previously, he was Mellanox’s vice president of marketing development from March 2012 to March 2013. Shainer joined Mellanox in 2001 as a design engineer and later served in senior marketing management roles between July 2005 and February 2012. He holds several patents in the field of high-speed networking and contributed to the PCI-SIG PCI-X and PCIe specifications. Shainer holds an MSc degree (2001, Cum Laude) and a BSc degree (1998, Cum Laude) in Electrical Engineering from the Technion Institute of Technology in Israel.

Seems to only be a problem for Mellanox, Cray, Intel and others to claim network victory. That’s what this is really about, right? Calming investors.

Seems like the TOP500 submission criteria can be adjusted to keep non-HPC systems from climbing in. I don’t understand why this hasn’t changed anyway. Everyone has been barking about it forever. Well, at least the marketing folks at all the server OEMs and more.