Hyperscalers and cloud builders are different in a lot of ways from the typical enterprise IT shop. Perhaps the most profound one that has emerged in recent years is something that used to be only possible in the realm of the most exotic supercomputing centers, and that is this: They get what they want, and they get it ahead of everyone else.

Back in the day, before the rise of mass customization of the Xeon product line by chip maker Intel, it was HPC customers who were often trotted out as early adopters of a new processor technology and usually a new interconnect, too – more times than not from Mellanox Technologies. But with cloud service providers (a term that Intel uses to describe both hyperscalers and public cloud) and now communications service providers (the parallel IT organizations that are part of telcos, cable companies, and such) growing to account for more of the world’s servers (and hence its processors), these companies are not just ahead of others in the line to get access to CPUs, they are actually shaping the Intel Xeon product line itself in terms of feeds and speeds.

At its recent Cloud Day in San Francisco, Intel talked about the progress that it has been making in the past year in accelerating the deployment of public and private clouds to help make IT shops more efficient and to keep its Data Center Group gravy train running down the rails faster and faster. As Jason Waxman, general manager of the Cloud Platforms Group within the Data Center Group at Intel, said during his keynote at the Open Compute Summit a few weeks ago, by 2025 Intel anticipates that 70 percent to 80 percent of all servers shipped will be deployed in large scale datacenters, which Intel defines as those with thousands of systems that are engaged in infrastructure, platform, or software services. This includes the big cloud providers as well as smaller players, the service providers and telcos that compete against them, and the hyperscalers that provide their own infrastructure for their consumer and customer facing applications. Last year, Intel’s cloud server chip business grew by more than 40 percent, with sales of CPUs aimed at enterprise customers down slightly and the remaining hodge podge of HPC, workstation, networking, and storage collectively growing at around 10 percent. Each of these segments is projected to account for about a third of CPU revenues this year and Intel is forecasting that cloud sales (using the broadest definition as above) will see more than a 20 percent growth rate annually through 2019.

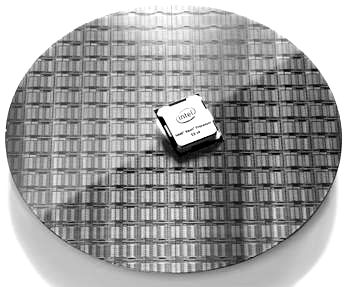

These are Intel’s most advanced customers, and they are driving the designs of the new “Broadwell” Xeon E5 v4 processors, launched at the end of March, and the future “Skylake” Xeon E5 v5 processors, expected sometime by the end of 2017. Of the top seven hyperscalers and cloud builders – Google, Amazon, Facebook, Microsoft, Baidu, Tencent, and Alibaba – five are in Intel’s early ship program and five have custom processors; four of them are sampling hybrid Broadwell-FPGA units and two are looking at Intel’s implementation of silicon photonics.

To take the pulse of the hyperscalers and big cloud builders, who are secretive about their infrastructure except when it suits their needs, The Next Platform sat down with Waxman to get some perspective on what is driving these customers and how they are in turn driving Intel. The first thing that Waxman did was caution us about making generalities.

“The trap about cloud service providers,” explains Waxman, “is that depending on who you talk to, you will get a completely different view of the world. You can talk to a Google or a Facebook, but we have to look at it as sort of the whole in an aggregate.”

The first thing that happened when the hyperscalers and cloud builders started to get really large and needed to work more closely with Intel is that everybody went down the wrong technology roadmap for a bit – even big IT organizations with the smartest people in the world weave when they should bob sometimes.

“About eight years ago, we saw that some companies were emerging like Google – Amazon was still nascent and still kicking the tires on what AWS was going to become – and what we heard from them back then was that power was everything and that they need lower power,” Waxman says. “So we went into this nosedive of how we can create the lowest-powered CPU that we could. And then we scribbled some math on the wall and showed them that if we did lower power, we were leaving performance on the table, and they were trading that off. But if you looked at it at a systems level, you would need fewer systems to get there and they could save the overall power that way. So we talked to the big folks, and we never get the ‘Oh, yes, you are right’ from any of them. They thank us for sharing and walk out of the room. But a year later, we started to hear that they needed more cores and we needed to increase our power envelope.”
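
The math on the wall that Waxman describes can be sketched in a few lines of Python. This is only an illustration of the systems-level argument, not Intel's actual model: the throughput and wattage figures are hypothetical, and the function names are ours.

```python
def servers_needed(target_throughput: int, throughput_per_server: int) -> int:
    """Smallest number of servers that meets the target (ceiling division)."""
    return -(-target_throughput // throughput_per_server)


def fleet_power_watts(target_throughput: int, throughput_per_server: int,
                      watts_per_server: int) -> int:
    """Total power drawn by a fleet sized to meet the target throughput."""
    count = servers_needed(target_throughput, throughput_per_server)
    return count * watts_per_server


# Hypothetical figures, chosen only to illustrate the trade-off.
TARGET = 10_000  # arbitrary units of work per second for the whole fleet

# Low-power CPU: less work per server, less power per server.
low_power = fleet_power_watts(TARGET, throughput_per_server=10, watts_per_server=200)
# Higher-power, higher-core-count CPU: more work per server, more power per server.
high_core = fleet_power_watts(TARGET, throughput_per_server=16, watts_per_server=260)

print(f"low-power fleet: {servers_needed(TARGET, 10)} servers, {low_power / 1000:.1f} kW")
print(f"high-core fleet: {servers_needed(TARGET, 16)} servers, {high_core / 1000:.1f} kW")
```

With these made-up inputs, the low-power fleet needs 1,000 servers and draws 200 kW, while the higher-power fleet needs 625 servers and draws 162.5 kW. The result flips if the performance gain per server does not outpace the increase in power per server, which is why the argument has to be made at the systems level.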

And so the Xeon product roadmap got a revamp, and if you look closely, Intel invented a ring architecture for the Xeon E5s that allowed for more cores and caches to be on a die while at the same time creating three different general SKUs that had low, middle, and high core counts. This is the model that has persisted to this day, as the Broadwell Xeons show, and there are really still three different Xeon E5 processors that share a common socket, not one processor. In the case of the Broadwells, as we explained in detail, the LCC model has ten or fewer cores, the MCC model has a maximum of 15 cores, and the HCC model has up to 24 cores. The top bin parts in the Broadwell Xeon E5 line have 20 or 22 cores active, but we suspect that there are some 24 core parts shipping to hyperscalers (those running search engines or selling VM slices are the obvious candidates).

“It started with a couple of the bleeding edge companies, but now, the vast majority of the cloud service provider volume is moving to our latest generation CPU and not just buying at the top of the stack, but sometimes over the top of the stack – even before we broadly launch the product,” says Waxman. “Most of them have had Broadwell Xeons for three or four months ahead of launch, and with every generation they want that gap to be wider. And I can tell you on Skylake Xeons, the competition to get out the door first and fastest CPU is fierce. I don’t know if this is bragging rights or just sheer efficiency, but either way, this concept of early ship is now becoming the fastest route to market for our technology.”

Waxman thinks that telecommunication companies building clouds for their own use or for public consumption as infrastructure or platform clouds could start wanting top bin parts, too.

“For the most part, it might be different in terms of the optimization point,” Waxman elaborates when pressed on the differences between the hyperscalers and the cloud builders from each other and from other datacenter users. “The majority of the volume that we have as part of this early ship program is what I would call top bin plus. It is either the top bin SKU that you are seeing, or slightly enhanced above the top bin for their unique demands. One may say they want 20 cores and they want to push the frequency envelope. Another one might want 22 cores and are fine with the frequencies, but they may want slightly different thermals. In general, I would say that if your application looks like some sort of high performance computing application or you are trying to do a lot of high throughput search queries, or you are trying to drive as many VMs per system as you can, these lend themselves to a very high core count. There are some customers who just want high frequency, and we are just talking about putting an FPGA on Broadwell.”

All of the other standard SKUs in the Broadwell line – Intel did not divulge how many custom parts it is making that are “off roadmap,” as it calls the custom parts, but it is probably a few dozen chips at least – are used in a variety of enterprise machines, including tower servers used by SMBs and HPC boxes that need a different price and performance point than the top speed or high core parts offer. The main thing is that over the past several years, the drift toward chips with either higher core count or higher clock speed has been pushing Intel’s average Xeon chip price upwards. As we explained in our analysis of Xeon bang for the buck from the “Nehalem” Xeon 5500 back in 2009 through today’s Broadwell, those new top-bin parts are not cheap, but the cost per unit of performance is coming down across the generations of Xeon chips (by about 50 percent) as the performance has risen by a factor of 6.3X.
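
Those two figures imply a third one that is not stated outright: how much more a top-bin part costs. The sketch below is back-of-the-envelope arithmetic only, and it assumes that the roughly 50 percent drop in cost per unit of performance and the 6.3X performance gain describe the same Nehalem-to-Broadwell comparison; the function name is ours.

```python
def implied_price_ratio(performance_ratio: float, cost_per_perf_ratio: float) -> float:
    """Price equals performance times cost per unit of performance,
    so the generation-over-generation ratios multiply."""
    return performance_ratio * cost_per_perf_ratio


# 6.3X the performance at about half the cost per unit of performance.
# Both inputs are the approximate figures quoted above.
ratio = implied_price_ratio(performance_ratio=6.3, cost_per_perf_ratio=0.5)
print(f"implied top-bin price ratio: about {ratio:.2f}X")
```

Under that assumption, the chip price works out to a little more than three times what it was, which is consistent with the upward drift in average Xeon prices even as each unit of performance gets cheaper.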

It doesn’t hurt that there is not much in the way of direct competition with the Xeons at this point in the IT industry – although that could change next year with AMD’s plans for its “Zen” Opterons.

No Time To Wait For Skylake

With only minor signs of a possibility of a recession out there over the next few years and infrastructure busting out of the datacenter seams, hyperscalers and cloud providers are not sitting on the sidelines and waiting for the Skylake Xeons next year, which will be worth the wait for sure and which will possibly represent as big of a leap for Intel’s Xeon CPUs as the “Nehalem” Xeon 5500s did back in March 2009, when AMD was for the most part vanquished from the datacenter.

“I think the behavior has changed for these customers,” says Waxman. “If you go back four years, they would tell us when they wanted to launch their instance, and now they are lining up their roadmaps a bit more and aligning to our tick-tock cycle. I had a meeting with a big customer who runs everything in a cloud, and they want to know everything about the cadence so they can work with their service provider and line everything up. And it used to be we had to figure out when to work these new chips in, but now they are working with us to figure out when their ODMs need things to get stuff to market. We have helped them to do it, and it is good for everybody. Their customers get access to technology earlier than they might otherwise, and for us, it is a great ramp for the latest technologies.”

These high end customers are growing their workloads linearly or exponentially, and they cannot wait another 18 months or so for Skylake Xeons to come out officially. The question now is how much earlier hyperscalers and cloud builders will get access to early Skylake chips. If it was four months for Broadwell, it might be six months for Skylake. And that is a big advantage for those who get in the front of the line.

All of this will have a tendency to make the public clouds and the hyperscaler services a little better off than other IT organizations, at least where early access to the best compute is critical.

“That underlying dynamic is the world that we are moving forward in,” explains Waxman. “There are going to be fewer end users, and you will either have scale in your datacenter five or ten years from now or you will be using someone else’s scale. The hyperscale datacenter is the future, and that means they are optimizing for somebody for their particular thing, whether it means a search implementation versus a media streaming implementation versus an IoT connected car implementation, they are going to be different and we are trying to figure out how to live in that world of mass customization.”

A few years ago, before hyperscalers and cloud builders became the bleeding edge for certain kinds of compute, HPC customers got the Intel parts early. But they have budget constraints and precise times to spend their money, while the cloud service providers (as Intel calls them collectively) are always spending. So they are ready to take the new thing whenever that happens.

“Hyperscalers have multi-billion dollar businesses at stake and they are spending billions on infrastructure, and a 10 percent shift in performance – or in some cases a 20, a 30, or a 40 percent shift – on a billion dollars of infrastructure is hundreds of millions of dollars saved. But here’s another thing,” says Waxman. “When we go through debug with Broadwell, they are smart as all hell and the other thing that is interesting is they find things because they test at scale when a lot of our validation systems test on a single system. When you put a thousand systems together, there is sometimes something strange occurring because we didn’t have that kind of scale in our clusters.”
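
The scale of the savings Waxman is talking about can be roughed out as follows. This is a simplified sketch under our own assumptions, not Intel's accounting: it treats infrastructure spending as proportional to the number of servers needed for a fixed amount of work, so a performance gain of g shrinks the required spend by a factor of 1 / (1 + g). The function name and the $1 billion baseline are illustrative.

```python
def capex_saved(baseline_spend: float, performance_gain: float) -> float:
    """Spend avoided when each server does (1 + gain) times the work,
    assuming spend scales linearly with the number of servers."""
    return baseline_spend - baseline_spend / (1.0 + performance_gain)


BASELINE = 1_000_000_000  # one billion dollars of infrastructure (illustrative)

for gain in (0.10, 0.20, 0.30, 0.40):
    saved = capex_saved(BASELINE, gain)
    print(f"{gain:.0%} more performance: about ${saved / 1e6:,.0f} million saved")
```

Under this model, a 10 percent gain on $1 billion saves roughly $91 million and a 40 percent gain roughly $286 million, so the hundreds of millions arrive at the larger gains or at multi-billion dollar spending levels. The model ignores power, space, and networking, which would change the totals.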
