When many people think of the Internet of Things (IoT) and connected vehicles from a compute perspective, the first thing that springs to mind is likely the small processors that are hard at work sensing, analyzing, and feeding data to remote systems. This is certainly a large part of IoT, but many of the most ambitious, complex applications require supercomputing resources for development, larger-scale analysis, and advanced machine learning.

Connected vehicles require deep research in entirely new areas, including remote sensing and artificial intelligence. These efforts demand extensive computational and research resources, which not all automakers have available for building next-generation intelligent vehicles.

With this in mind, an ideal center of research into the future of connected and autonomous vehicles would have computing capabilities that scale up to supercomputer class while supporting research efforts to develop much smaller IoT or sensing devices. Such a center would also have to offer a world-class emphasis on multidisciplinary research that can support a wide breadth of projects in terms of funding, available technology, and collaboration.

As it turns out, The University of Michigan has all of these key elements covered, particularly in terms of research empowerment. It is the largest public research institution in the U.S. in terms of dollars spent, with $1.5 billion going toward a staggering array of initiatives in countless fields. As an added bonus, it is centrally located in the heart of the U.S. auto industry, which has made opportunities for partnerships far easier.

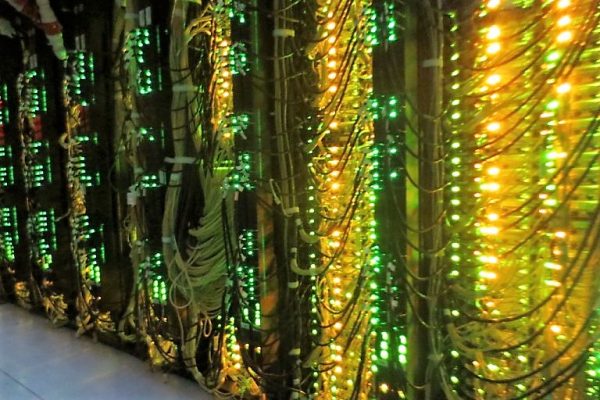

The University of Michigan is also home to world-class supercomputing resources to drive the future of connected vehicle research. From exploration of the tiny devices that connect, analyze, and transmit individual car information, to machine learning algorithms that are trained on its GPU-backed systems, to simulations of more efficient traffic flows at scale, the university is at the fore of what is next in smart, sensing vehicles.

We will explore the high performance computing (HPC) connection and why it is crucial to the success of this kind of automotive and IoT research in a moment. First, however, it is worth pointing out some of the projects that have already been enabled by the university’s MCity initiative.

Smart Cars, Smarter Cities: A Research View

MCity is the University of Michigan’s broad, multidisciplinary program to develop smarter cities with an emphasis on the role of intelligent, connected vehicles. These are a key element of smart cities as they promise to reduce traffic congestion, improve safety, and even lower carbon impacts by cutting idle time in traffic jams, among other benefits.

The program is driven by a wide range of research groups at The University of Michigan in areas as diverse as economics, public health, engineering, and cybersecurity, to name just a few. Industry partners include Honda, Ford, GM, and Toyota, as well as insurance companies, including State Farm, in addition to technology partners.

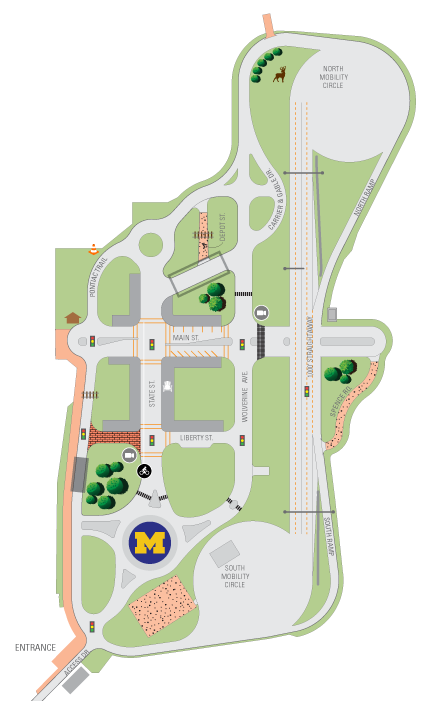

One of the central features of MCity is a 32-acre test facility with more than 16 acres of roads and much of the infrastructure drivers would encounter, including hazards and GPS black spots from simulated tree cover. It is here that many of the real-world and simulated test conditions for autonomous vehicles are studied.

There are countless research projects based on the data collected as smart vehicles roam the test infrastructure. One team focused on computer vision algorithms that take cues from human observations in near real time, while another concentrated on pedestrian positioning systems that classify pedestrian behavior and feed it into cars so they can more accurately predict pedestrian activity. Others refined intelligent transportation systems using volumes of mobility data, and still others used data to better understand how drivers might interact with warning systems inside connected vehicles.

The highway that all of these research projects travel is the set of computational resources provided by the university. At the core of these and future programs is the forthcoming ‘Great Lakes’ supercomputer, which Dell EMC helped The University of Michigan plan and test.

Innovative Research Takes Careful Focus on Compute Infrastructure

Given the diverse needs of the MCity program and those in the many research departments campus-wide, Dell EMC and The University of Michigan had to partner closely to determine what would provide the most value to the widest set of applications with the ‘Great Lakes’ cluster.

One of the growing requirements for MCity in particular was the need for resources to augment traditional modeling and simulation with AI workloads. Simply training a deep learning model to recognize street signs, pedestrians, and hazards can be a compute-intensive task, and one that the massive parallelism of GPUs is known to handle efficiently.

The ‘Great Lakes’ supercomputer will provide 44 of Nvidia’s highest-performing GPUs, the V100 with Tensor Cores, which are designed to excel at deep learning training problems by tackling inputs and layers with dense multiply/accumulate capabilities. These GPUs can process the training data for AI models that are then deployed on devices inside smart and connected vehicles to provide near real-time intelligence. Research into how these algorithms function and how common AI frameworks like TensorFlow and PyTorch can be adapted for autonomous vehicle inference deployments would be far less efficient without high performance, scalable GPUs inside the ‘Great Lakes’ machine.
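To make the "dense multiply/accumulate" idea concrete, here is a minimal sketch in plain Python of what a single fully connected neural network layer computes. The function names and the toy numbers are hypothetical and chosen only for illustration. This is not how a GPU or any framework implements the operation. It only shows the arithmetic pattern that such hardware is built to run in parallel.

```python
def dense_layer(inputs, weights, biases):
    """Compute outputs[j] = biases[j] + sum over i of inputs[i] * weights[i][j]."""
    outputs = []
    for j, bias in enumerate(biases):
        acc = bias  # the accumulator starts at the bias term
        for i, x in enumerate(inputs):
            acc += x * weights[i][j]  # one multiply-accumulate (MAC) step
        outputs.append(acc)
    return outputs


def count_macs(n_inputs, n_outputs):
    """Number of multiply-accumulate steps one dense layer needs per input sample."""
    return n_inputs * n_outputs


# Toy example (hypothetical values): 3 inputs feeding 2 outputs.
inputs = [1.0, 2.0, 3.0]
weights = [[1.0, 0.0],
           [0.0, 1.0],
           [1.0, 1.0]]
biases = [0.5, -0.5]

result = dense_layer(inputs, weights, biases)
print(result)            # [4.5, 4.5]
print(count_macs(3, 2))  # 6
```

Every multiply-accumulate step in the inner loop is independent across output positions, which is why this kind of workload maps well onto massively parallel hardware. Real layers involve thousands of inputs and outputs, so the count grows quickly.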

Dell EMC worked closely with networking partner Mellanox to deliver another key feature for large-scale research efforts like MCity. Many applications for the test track (not to mention the university’s 2,500 other active users) will require high bandwidth to keep the high performance GPU and CPU cores fed. In addition to being at the forefront of autonomous driving and smart cities research, the University of Michigan is also leading the world in network performance as the first production customer to have Mellanox HDR 200 Gb/s InfiniBand. This means projects that require multiple nodes, including those that are GPU enabled, will have access to the fastest data transfer speeds (and thus application performance) on the planet.
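For a rough sense of what a 200 Gb/s link means in practice, the back-of-the-envelope sketch below converts a link rate into an ideal transfer time. The helper name and the 1 TB dataset size are hypothetical. The calculation assumes the full line rate with no protocol overhead, storage limits, or contention, so real transfers would take longer.

```python
def transfer_seconds(size_bytes, link_gbps):
    """Ideal time to move size_bytes over a link rated at link_gbps gigabits per second."""
    bits = size_bytes * 8
    bits_per_second = link_gbps * 10**9
    return bits / bits_per_second


# Hypothetical example: a 1 TB (10**12 bytes) dataset over a 200 Gb/s link.
one_terabyte = 10**12
print(transfer_seconds(one_terabyte, 200))  # 40.0
```

Put another way, 200 Gb/s corresponds to 25 GB/s at line rate, which is why such a network helps keep multi-node GPU and CPU jobs supplied with data.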

“Users of Great Lakes will have access to more cores, faster cores, faster memory, faster storage, and a more balanced network,” according to Brock Palen, who directs the Advanced Research Computing and Technology Services division at The University of Michigan.

Novel Research Requires Strong Partnerships

The success of MCity initiatives hinges on robust partnerships between academia and industry as well as among technology providers.

While the core technologies inside the connected vehicles are what will drive the future forward, the research and development that backs those applications is the first priority. The ‘Great Lakes’ cluster was the result of a collaboration that looked at the MCity efforts as well as the entire campus-wide network of requirements to build the perfect system for IoT, HPC, and AI research at The University of Michigan.

Palen adds that Dell EMC proved to be a remarkable partner as his team sought to serve traditional HPC workloads and emerging applications to feed connected vehicle and smart vehicle research. Without the compute capabilities to model and develop concepts for IoT devices and how they communicate with each other over a network, for example, the MCity program would be more limited in scope.

With AI as a central feature of most of what is critical for autonomous cars, from computer vision applications to inferring human interaction with smart vehicles, the program would be limited if it did not have a world-class supercomputer with AI-optimized GPUs on board and the fastest network in research to date.

It is an exciting time to see how disparate areas in compute infrastructure are coming together. From accelerated supercomputers like ‘Great Lakes’ to the tiny devices that transmit signals and data to feed the networks of other smart cars, this convergence will continue, creating smart cars, smarter cities, and intelligent machines that can continue to pave the way.
