The Ohio Supercomputer Center’s mission to supply supercomputing capabilities to educational institutions and companies throughout the state is about to get a significant boost in the form of a powerful and highly efficient cluster based on Dell EMC servers and using liquid-cooling technology from CoolIT.

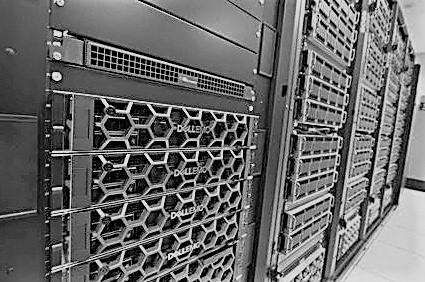

The organization, which already houses a parallel processor cluster, a vector processor machine, and a mid-size shared memory processor system, this month will deploy a new 260-node cluster that will include Dell EMC PowerEdge C6420 systems equipped with CoolIT’s Direct Contact Liquid Cooling (DCLC), which brings warm water to particular areas of the system. In addition, the Pitzer Cluster, which is named after Russell Pitzer, emeritus professor of chemistry and one of the founders of the Ohio Supercomputer Center back in 1987, will include PowerEdge R740 systems, 528 “Skylake” Xeon SP-6148 Gold chips from Intel, and 64 Nvidia Tesla “Volta” V100 GPU accelerators. We have come a long way from the Cray machines that Pitzer had back in the day.

Connectivity for the Pitzer Cluster will come from Mellanox Technologies’ 100 Gb/sec EDR InfiniBand network, which delivers a message rate of 200 million messages per second. In all, the Pitzer Cluster will deliver about 1.3 petaflops of peak performance and up to 7 petaflops of peak performance for mixed-precision artificial intelligence workloads.
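As a rough back-of-the-envelope check, the sketch below rebuilds those peak figures from the chip and GPU counts given above. The per-device values are assumptions that do not come from OSC or Dell EMC: 20 cores and a 2.4 GHz base clock for each Xeon SP-6148 Gold, 32 double-precision operations per core per cycle with AVX-512, and roughly 7 teraflops of double precision and 112 teraflops of Tensor Core throughput for each Tesla V100. The function names are illustrative only.

```python
# Back-of-the-envelope peak estimate for the Pitzer Cluster.
# Counts come from the article; per-device figures are ASSUMPTIONS.

CPU_CHIPS = 528            # from the article
GPUS = 64                  # from the article

CORES_PER_CHIP = 20        # assumed: Xeon Gold 6148 core count
BASE_CLOCK_GHZ = 2.4       # assumed: base clock, not turbo
DP_FLOPS_PER_CYCLE = 32    # assumed: two AVX-512 FMA units per core
V100_FP64_TFLOPS = 7.0     # assumed: PCIe V100, double precision
V100_TENSOR_TFLOPS = 112.0 # assumed: PCIe V100, mixed precision


def cpu_peak_tflops(chips=CPU_CHIPS):
    """Theoretical double-precision CPU peak in teraflops."""
    gigaflops = chips * CORES_PER_CHIP * BASE_CLOCK_GHZ * DP_FLOPS_PER_CYCLE
    return gigaflops / 1000.0


def gpu_peak_tflops(per_gpu_tflops, gpus=GPUS):
    """Aggregate GPU peak in teraflops for a given per-GPU rate."""
    return gpus * per_gpu_tflops


total_fp64_pflops = (cpu_peak_tflops() + gpu_peak_tflops(V100_FP64_TFLOPS)) / 1000.0
mixed_precision_pflops = gpu_peak_tflops(V100_TENSOR_TFLOPS) / 1000.0

print(f"CPU + GPU double precision: {total_fp64_pflops:.2f} petaflops")
print(f"GPU mixed precision:        {mixed_precision_pflops:.2f} petaflops")
```

Under these assumptions the estimate lands near 1.26 petaflops for double precision and 7.17 petaflops for mixed precision, which is in line with the quoted "about 1.3" and "up to 7" petaflops. Different clock or per-GPU assumptions would shift the result.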

The goal is to deploy a dense, high-performance system to run data-intensive workloads such as DNA sequencing, as well as deep learning algorithms and AI applications, according to OSC executive director David Hudak.

“The Pitzer Cluster follows the long-running HPC trend of higher performance in a smaller footprint, offering clients nearly as much performance as the center’s most powerful cluster, but in less than half the space and with less power,” Hudak said in a statement. “This valuable new addition to our data center allows OSC to continue addressing the growing computational, storage and analysis needs of our client communities in academia, science and industry.”

The cluster will replace the center’s six-year-old Oakley Cluster, a system built by the pre-split Hewlett-Packard with 694 nodes, 8,328 Intel Xeon E5 cores and 128 Nvidia Tesla M2070 GPU accelerators, with a peak performance of just over 154 teraflops. At the center, Pitzer will join the Ruby Cluster, which was named after actress (and Ohio native) Ruby Dee and deployed in 2015. Ruby is a 240-node system built by HP and powered by Intel Xeon chips. The system had used Xeon Phi co-processors, but those were removed from service in 2016. Twenty of the nodes also include Nvidia’s Tesla K40 GPU accelerators and two more use K80 GPUs. Ruby offers a peak performance of 125 teraflops.

Also on the OSC datacenter floor will be the Dell EMC-built Owens Cluster, launched last year with 824 nodes powered by 14-core “Broadwell” Xeon E5 processors and Nvidia Tesla P100 “Pascal” GPU accelerators. The system has a total of 23,392 cores and a CPU-only peak of 750 teraflops.

Dell EMC for several years has been working to expand its HPC portfolio with the aim of making such high-performance capabilities, and modern workloads like those using AI, available to mainstream businesses. The company has developed its PowerEdge C-Series modular servers for HPC clusters and has built supercomputers for institutions such as the Texas Advanced Computing Center (TACC) at the University of Texas at Austin and the Jülich Supercomputing Centre in Germany.

This summer, we talked about how TACC will be home to the “Frontera” system, the first portion of which will deliver two to three times the application performance of the five-year-old Blue Waters supercomputer at the University of Illinois. Dell EMC will provide the primary computing system based on its PowerEdge C6420 systems, with DataDirect Networks (DDN) supplying primary storage, Mellanox the high-performance interconnect, and CoolIT the direct liquid cooling. TACC’s Frontera will be one of the first machines to get 200 Gb/sec HDR InfiniBand inside a cluster.

More recently, the University of Michigan in October announced Dell EMC as the lead vendor for its upcoming $4.8 million Great Lakes cluster, which again will include Mellanox for the networking and DDN for storage. The cluster, which will become available to users on campus in the first half of next year, will include PowerEdge C6420 compute nodes, PowerEdge R640 high-memory nodes and PowerEdge R740 GPU nodes.

The Pitzer Cluster at Ohio State will offer similar technologies and capabilities to the Great Lakes machine at Michigan. (Perhaps OSC and ARC-TS will simulate The Game. . . .)

“The Pitzer Cluster brings to bear a multitude of new technologies to help OSC and its researchers more quickly and efficiently tackle immense challenges, using artificial intelligence and deep learning to ultimately drive human progress,” Thierry Pellegrino, vice president of Dell EMC’s HPC business, said in a statement.

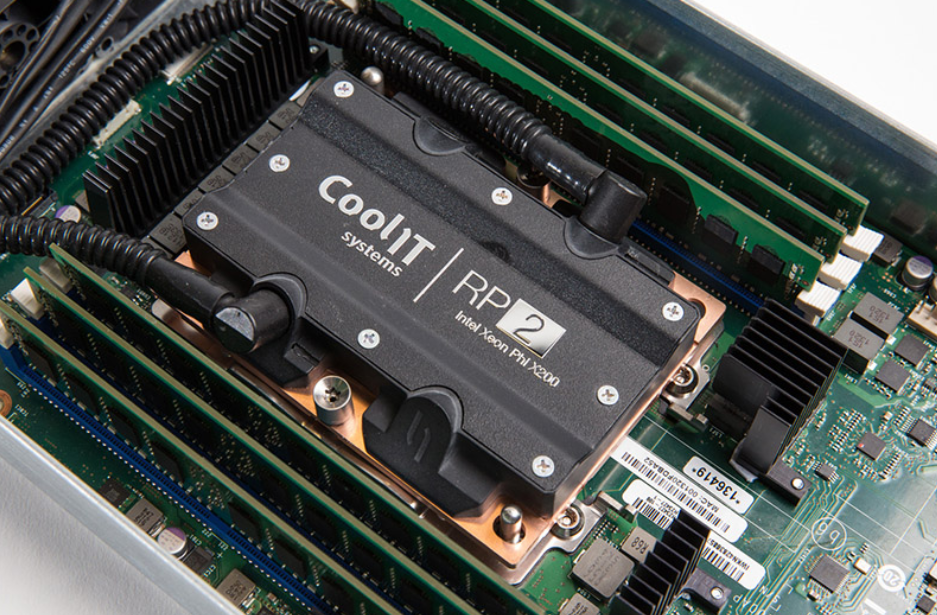

That includes CoolIT’s DCLC, a modular rack-based cooling system. On the C6420 servers it will use the company’s Passive Coldplate Loop to deliver dedicated liquid cooling to the Intel processors in each of the 256 CPU nodes, and it will be managed by a stand-alone central pumping CHx650 Coolant Distribution Unit.

In addition, the Intel chips feature integrated six-channel memory controllers, which will improve memory bandwidth by 50 percent compared to the processors in the Owens Cluster.
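The 50 percent figure is consistent with a simple channel-count calculation, sketched below. This assumes that the Broadwell processors in Owens have four memory channels per socket (a detail not stated in the article) and that both generations run memory at the same transfer rate. The DDR4-2400 rate used here is a placeholder for illustration, not a confirmed configuration of either cluster.

```python
# Theoretical peak memory bandwidth per socket from channel count.
# The channel count for Owens and the transfer rate are ASSUMPTIONS.

BYTES_PER_TRANSFER = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer


def peak_bandwidth_gbs(channels, mega_transfers_per_sec):
    """Peak bandwidth in GB/s for a socket with the given memory channels."""
    return channels * mega_transfers_per_sec * BYTES_PER_TRANSFER / 1000.0


def percent_gain(new, old):
    """Relative improvement of new over old, in percent."""
    return (new / old - 1.0) * 100.0


ASSUMED_RATE_MTS = 2400  # hypothetical DDR4-2400 on both systems

skylake = peak_bandwidth_gbs(6, ASSUMED_RATE_MTS)    # six channels (article)
broadwell = peak_bandwidth_gbs(4, ASSUMED_RATE_MTS)  # four channels (assumed)

print(f"Six-channel socket:  {skylake:.1f} GB/s")
print(f"Four-channel socket: {broadwell:.1f} GB/s")
print(f"Gain from channels alone: {percent_gain(skylake, broadwell):.0f} percent")
```

If the newer nodes also run faster memory than the older ones, the real gain per socket would be larger than what the channel count alone suggests.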

Through the Pitzer Cluster, users will have access to four large-memory PowerEdge R940 nodes, with up to 3 TB of memory per node, which OSC said will be aimed at data-intensive applications. The GPU nodes based on the R740s will include the Tesla V100 GPUs, which provide 50 percent better power efficiency than the previous generation of GPUs along with improvements in speed, both of which will be important for projects involving deep learning algorithms and AI.
