After years of planning, and delays that followed a massive architectural change, the Blue Waters supercomputer at the National Center for Supercomputing Applications at the University of Illinois finally went into production in 2013, giving scientists, engineers and researchers across the country a powerful tool for running the most complex and challenging applications in a broad range of scientific areas, from astrophysics and neuroscience to biophysics and molecular research.

Users of the petascale system have been able to simulate the evolution of space, determine the chemical structure of diseases, model weather, and trace how virus infections propagate via air travel. The most recent allocations on the system, announced last week, run from 25,000 to 600,000 node-hours of compute time, and they now include some deep learning jobs as well as more traditional scientific modeling and simulation workloads.

Most recently, the Blue Waters system made news in the areas of supercell and tornado research and natural gas and oil exploration. Scientists at the University of Wisconsin-Madison used 20,000 processing cores in Blue Waters over three days to simulate supercell storms to help find out why some produce tornados, crunching huge amounts of data about such factors as temperatures, air pressure, wind speed and moisture. As we have talked about at The Next Platform, engineers at ExxonMobil used 716,800 cores on the supercomputer to run a proprietary application that did complex oil and gas reservoir simulations, an effort that resulted in data output that company officials said was thousands of times faster than similar simulations in the industry.

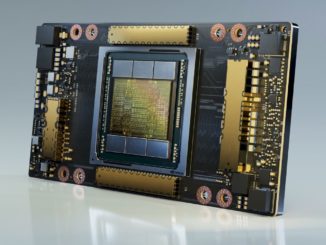

Those workloads are just a couple of examples of the broad array of applications that run on the Blue Waters supercomputer, which is housed at the University of Illinois at Urbana-Champaign and supported by the National Science Foundation. The system boasts 22,640 Cray XE6 nodes and 4,228 XK7 nodes – powered by AMD processors and using GPUs from Nvidia – more than 1.5 petabytes of memory, more than 25 petabytes of disk storage and up to 500 petabytes of tape storage. The two types of nodes are connected by a single Cray Gemini high-speed network in a large-scale 3D torus topology. The supercomputer delivers a sustained speed of more than 1 petaflops (quadrillion floating point calculations per second) and a peak of more than 13 petaflops. Blue Waters runs the Cray Linux Environment.

Recently, a group of researchers from the Center for Computational Research at the University at Buffalo and the NCSA turned their attention to the supercomputer itself, conducting a study of the workloads that are running on Blue Waters in the hope of not only making the system work even better but also fueling research into future computer designs.

“Given the important and unique role that Blue Waters plays in the U.S. research portfolio, it is important to have a detailed understanding of its workload in order to guide performance optimization both at the software and system configuration level as well as inform architectural balance tradeoffs,” the researchers wrote in their lengthy paper, Workload Analysis of Blue Waters. “Furthermore, understanding the computing requirements of the Blue Water’s workload (memory access, IO, communication, etc.), which is comprised of some of the most computationally demanding scientific problems, will help drive changes in future computing architectures, especially at the leading edge.”

The study’s authors wanted to better understand the disparate use patterns and performance requirements of the jobs that run on Blue Waters, focusing on differences in such areas as average workload size, the use of GPU accelerators, memory, I/O, and communication. They studied the use of numerical libraries and the parallelism technologies that were employed, such as MPI and threads. They also looked at how the supercomputer had been used over the first three years of its life to identify any changes in requirements, which they said could impact future architectural designs.

This analysis of the workloads on Blue Waters posed its own computational challenges. It required more than 35,000 node-hours – and more than 1.1 million core-hours – on Blue Waters to analyze about 95 TB of data from more than 4.5 million jobs that ran on the system between April 2013 and September 2016, work that itself generated about 250 TB of data across 100 million files. That was all put into a MongoDB and a MySQL data warehouse for searching, analysis, and display in Open XDMoD, an open source technology for managing HPC systems that offers data in such areas as the number of computational jobs that have been run, the resources used – such as processors, memory, storage and network – and job wait times.

The researchers also arranged for data from future jobs on the supercomputer to be loaded automatically into the Open XDMoD data warehouse for faster analysis.
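As a rough sanity check on the node-hour and core-hour figures quoted for the analysis, the conversion can be sketched in a few lines of Python. The 32-cores-per-node value is an assumption made here for illustration (it is not stated in the article), and the function names are hypothetical.

```python
# Assumption (not stated in the article): each XE node exposes 32 cores.
CORES_PER_XE_NODE = 32


def core_hours(node_hours, cores_per_node=CORES_PER_XE_NODE):
    """Convert node-hours to core-hours for whole-node allocations."""
    return node_hours * cores_per_node


def nodes_for_cores(cores, cores_per_node=CORES_PER_XE_NODE):
    """Number of whole nodes that a given core count would correspond to."""
    return cores // cores_per_node


# 35,000 node-hours for the workload analysis itself.
print(core_hours(35_000))        # 1120000, in line with "more than 1.1 million"

# The 716,800-core reservoir simulation mentioned earlier.
print(nodes_for_cores(716_800))  # 22400
```

Under that assumption, the two figures in the paragraph above agree with each other, and the 716,800-core run would correspond to 22,400 nodes.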

The researchers had a list of questions they wanted answered, including whether the proportional mix of scientific sub-disciplines is changing – including across the XE CPU nodes and XK hybrid CPU-GPU nodes – what the top algorithms running on the nodes are, and how much of Blue Waters’ resources are being used by high-throughput applications. They also wanted to know whether job sizes are changing, what I/O patterns and bottlenecks are popping up, and whether memory use is increasing or decreasing.

Among the key findings is that more than two-thirds of all node-hours are being used by the mathematical and physical sciences (MPS) and biological sciences groups, and that the number of fields of science accessing Blue Waters has increased during the supercomputer’s first three years of operation – more than doubling since its first year. It’s “further evidence of the growing diversity of its research base,” the authors wrote. Along the same lines, the applications running on the system also are becoming more diverse, from the wide use of community codes to more specific workloads for scientific sub-disciplines. The top ten applications used on Blue Waters consume about two-thirds of all node-hours, with the top five – NAMD, CHROMA, MILC, AMBER, and CACTUS – using about half.

There is a broad range in the size of jobs running on the supercomputer, from single-node tasks to workloads that leverage more than 20,000 nodes. Single-node jobs account for less than 2 percent of the total node-hours used on Blue Waters. On the XE nodes, all of the top science disciplines run an array of job sizes, and all have workloads that use more than 4,096 nodes. The largest 3 percent of jobs – determined by node-hours – accounted for 90 percent of the node-hours consumed, and most node-hours are used running parallel jobs that use some form of message passing, they found. At least a quarter of the workloads used some sort of threading technology.
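To make the concentration figure concrete, here is a minimal Python sketch of how such a statistic could be computed from per-job node-hour totals. The function name and the sample workload are hypothetical illustrations; they are not taken from the paper or from Open XDMoD.

```python
def top_fraction_for_share(node_hours, share=0.90):
    """Return the smallest fraction of jobs, taken largest-first by
    node-hours, whose combined node-hours reach `share` of the total."""
    if not node_hours:
        raise ValueError("need at least one job")
    ordered = sorted(node_hours, reverse=True)
    target = share * sum(ordered)
    running = 0.0
    for count, hours in enumerate(ordered, start=1):
        running += hours
        if running >= target:
            return count / len(ordered)
    return 1.0


# Hypothetical workload: 3 very large jobs and 97 small ones.
jobs = [3000.0, 3000.0, 3000.0] + [10.0] * 97
print(top_fraction_for_share(jobs))  # 0.03
```

In this made-up workload, 3 percent of the jobs account for at least 90 percent of the node-hours, which is the shape of distribution the researchers describe.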

On the XK nodes, the molecular biosciences, chemistry, and physics groups were the largest users, with NAMD and AMBER the most used applications. The largest 7 percent of the workloads accounted for 90 percent of the hours consumed on the XK nodes. The use of GPUs also varied by application, they found. On the XE nodes, most of the jobs used less than half of the memory available, while most on the XK nodes used less than 25 percent. In terms of I/O, the supercomputer’s three file systems have balanced read/write ratios with significant fluctuations, with the volume of traffic on the largest file system peaking at 10 PB per month. There also is a wide range of I/O patterns. Jobs spend only a small fraction of their time in file system I/O operations – 0.04 percent of runtime for 90 percent of jobs – and many workloads use specialized libraries for their I/O operations, with about 20 percent using MPI-IO, HDF5, and NetCDF.
