One of the challenges vendors are trying to address when it comes to artificial intelligence is expanding the technology and its elements of machine learning and deep learning beyond the realm of hyperscalers and some HPC centers and into the enterprise, where businesses can leverage them for such workloads as simulations, modeling, and analytics.

For the past several years, system makers have been trying to crack the code that will make it easier for mainstream enterprises to adopt and deploy traditional HPC technologies, and now they want to dovetail those efforts with the expanding AI opportunity. The difference with enterprises is that they are deploying HPC and now AI to adapt to the rapid changes roiling their industries, including cloud computing, mobility and computing at the network edge.

As we at The Next Platform have noted, system vendors like IBM, Lenovo, and Hewlett Packard Enterprise have all been pushing to build out their HPC capabilities and bring those capabilities to the masses. HPE has been a player in the HPC and supercomputer realm for years, and bulked up those efforts last year when it bought SGI for $275 million. For its part, Lenovo also expanded its presence in the space when it bought IBM’s System x server business in 2014 for $2.1 billion and licensed such software as the Platform Computing middleware stack. While IBM’s HPC focus with the X86 server unit was on the high-end systems, Lenovo said last year that it has seen increasing success with smaller systems that can help bring HPC capabilities to mainstream businesses.

More recently, IBM last month expanded its own efforts in this area by integrating its PowerAI deep learning enterprise software with its Data Science Experience, both of which are designed to reduce the challenges for enterprises interested in using advanced AI technologies and enable developers and data scientists to develop and train machine learning models. Software vendors like Microsoft and SAP also are pushing to make it easier for enterprises to adopt AI and deep learning technologies.

Similarly, for years, Dell EMC has been growing its HPC product portfolio with an eye toward getting the technologies into the mainstream. That has included innovations around its PowerEdge C-Series modular servers for HPC clusters and efforts with such supercomputing sites as the Texas Advanced Computing Center (TACC) at the University of Texas at Austin and the Jülich Supercomputing Centre in Germany. In 2015, the vendor launched its portfolio of HPC systems, which includes its Ready Bundle for HPC, pre-configured and pre-validated engineered systems that include the compute, storage, network, services and software support needed for enterprises to quickly deploy and use HPC technologies in their datacenters. The lineup includes Ready Bundles for HPC optimized for NFS and Lustre storage.

Dell EMC also offers a range of HPC services that touch on everything from deployment and management to the cloud and finances and support for various HPC software stacks. The goal is to deliver the same kinds of capabilities that at one time had only been afforded to research and educational institutions and large businesses, according to Ravi Pendekanti, senior vice president for product management and marketing for Dell EMC’s Server Solutions business.

“HPC started off as more of a scientific and research kind of oriented effort,” Pendekanti said in a recent press conference. “Over the last couple of years, it has transformed itself into being maybe one of the pivotal things in the financial industry. If you look at any of the emerging workloads – machine learning, deep learning and edge computing – they’ve all got something to do with HPC. The workloads that we talk about today, when we talk about things like machine learning and deep learning, we weren’t talking about 10 years ago. We’re now talking about how HPC has moved into the mainstream from the back alley of research and the scientific community.”

At the SC17 supercomputing conference in Denver this week, Dell EMC is unveiling efforts to help bring AI, deep learning and machine learning to enterprises in much the same way that it’s been pushing HPC into the mainstream, through bundled engineered systems. At the same time, the company is upgrading its current HPC Ready Bundle offerings with the latest-generation PowerEdge C-Series servers.

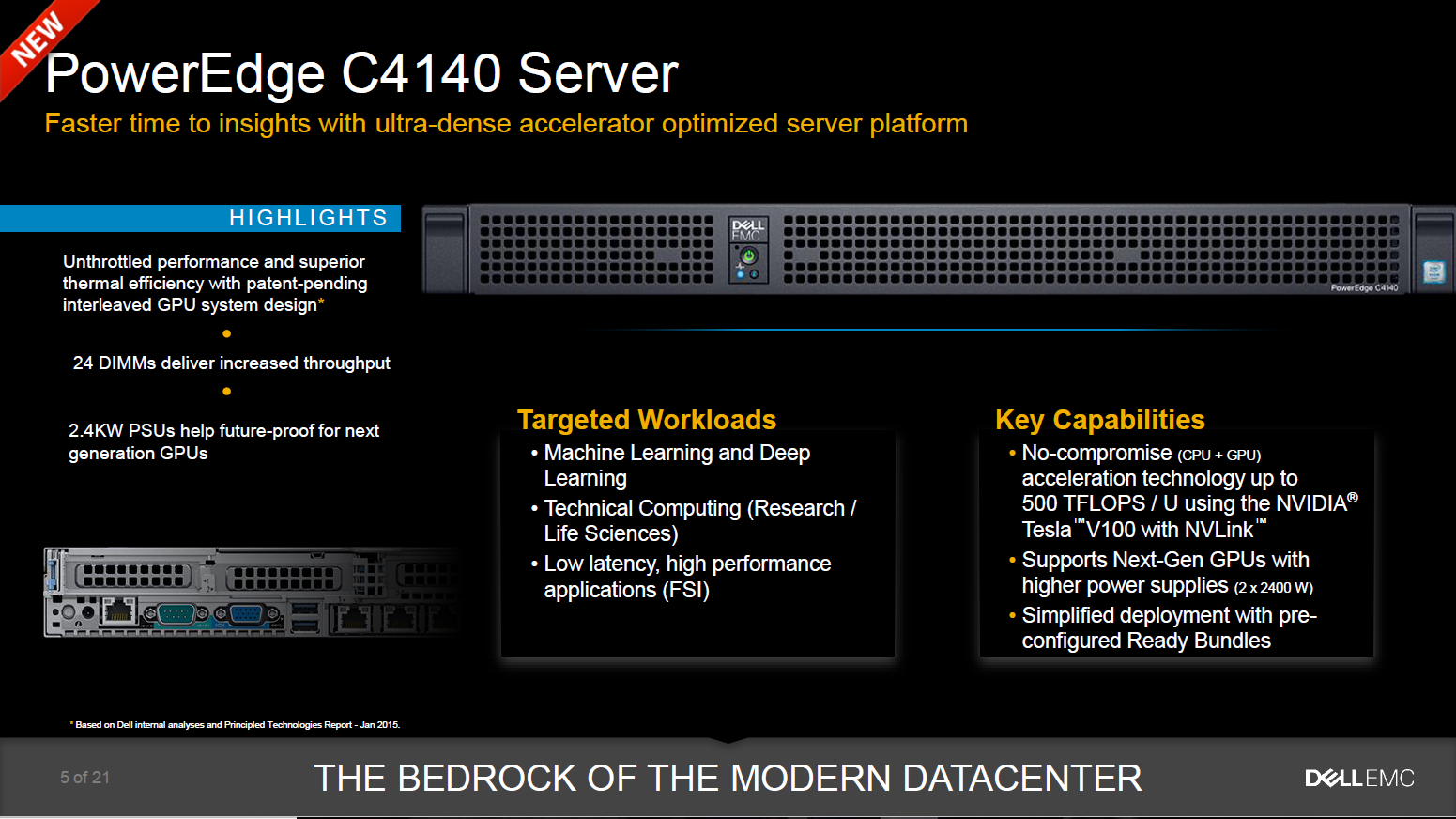

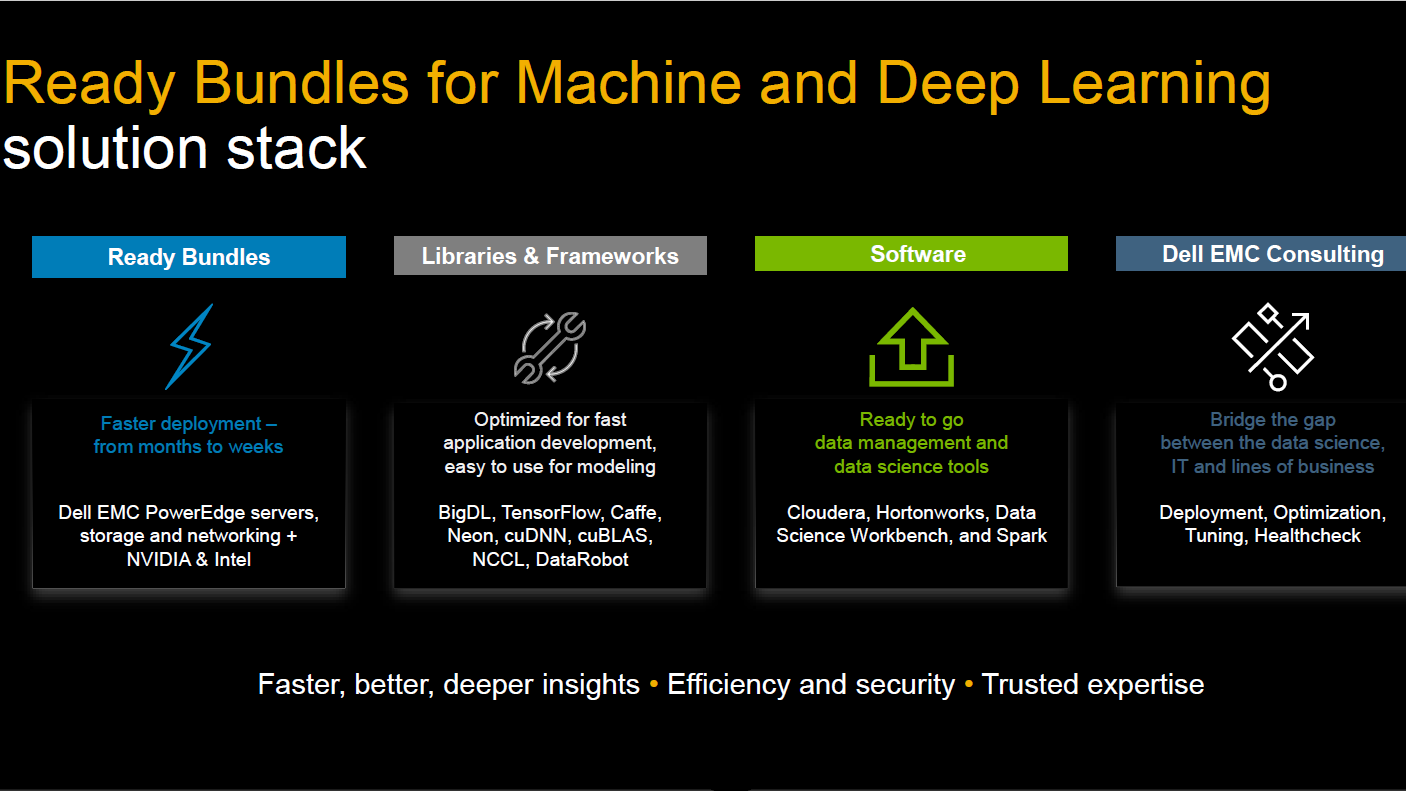

The AI bundles are designed to enable enterprises to more quickly analyze the massive amounts of data being collected and generate insights that can be applied to their businesses. The Ready Bundles for Deep Learning with Nvidia, Deep Learning with Intel and Machine Learning with Hadoop, which will become available in the first half of 2018, will be based on Dell EMC’s new PowerEdge C4140, an ultradense system that is part of Dell’s 14th Generation PowerEdge lineup. The bundles also include libraries and frameworks around such open-source HPC technologies as TensorFlow, Caffe, BigDL and cuBLAS and support for such software platforms as Cloudera, Hortonworks and Spark. The bundled systems follow Dell’s announcement at the ISC 17 show in June of a strategic partnership with Nvidia in which the companies will jointly develop new products around HPC, data analytics and AI. Dell has a similar collaboration with Intel around AI, machine learning and deep learning solutions.

The C4140 includes two of Intel’s 14 nanometer “Skylake” Xeon SP processors – each chip will have up to 20 cores – and four Nvidia Tesla V100 GPU accelerators with PCI-Express and NVLink interconnect technology to link the compute elements together. The 1U C4140, which will be available in December, delivers up to 500 teraflops through the Tesla V100 GPUs, which are pooled using the NVLink interconnect, and provides 2,400 watts in its power supplies to support next-generation GPUs. The system offers 24 DIMMs for up to 1.5 TB of DDR4 memory on the Xeon SPs for faster throughput and supports Linux distributions from Red Hat, Canonical and SUSE. The GPU accelerators also speed up the processing of HPC workloads while driving down costs, according to Dell EMC, which estimated that for molecular dynamics applications, a single C4140 can do the work of 19 CPU-only servers and deliver a 12-fold cost saving. For financial services workloads, by the same estimate, the server can equal the work of eight CPU-only servers and deliver a five-fold cost saving.
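To put the headline numbers in per-component terms, here is a minimal back-of-the-envelope sketch in Python that uses only the figures quoted above. The function names are illustrative, and two things are assumptions rather than statements from Dell EMC: that the 500 teraflops is a peak figure split evenly across the four GPUs, and that 1.5 TB means 1,536 GB spread evenly over the 24 DIMM slots.

```python
# Figures quoted in the article for the PowerEdge C4140.
GPUS_PER_NODE = 4          # Nvidia Tesla V100 accelerators
NODE_PEAK_TFLOPS = 500     # "up to 500 teraflops" for the whole node
DIMM_SLOTS = 24
MAX_MEMORY_GB = 1536       # assumption: 1.5 TB counted as 1.5 * 1024 GB


def per_gpu_tflops(node_tflops: float, gpus: int) -> float:
    """Even split of the node's quoted peak across its GPUs (assumed)."""
    return node_tflops / gpus


def per_dimm_gb(total_gb: int, slots: int) -> float:
    """DIMM size needed to reach the maximum with every slot filled."""
    return total_gb / slots


if __name__ == "__main__":
    print(f"Per-GPU share of peak: {per_gpu_tflops(NODE_PEAK_TFLOPS, GPUS_PER_NODE)} TFLOPS")
    print(f"DIMM size at max memory: {per_dimm_gb(MAX_MEMORY_GB, DIMM_SLOTS)} GB")
```

Under those assumptions each V100 accounts for 125 teraflops and each slot holds a 64 GB DIMM. The article does not state the numeric precision behind the 500-teraflop figure, so the per-GPU number should be read as a quoted peak, not as sustained application throughput.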
