Nuclear fusion, the prospect of harnessing the power of the stars, has been a dream of humanity since around the time of the Manhattan Project. But while building bombs was achieved relatively quickly, controlling thermonuclear fusion for civilian use has proved frustratingly elusive.

Nuclear fusion could be a clean, safe and practically limitless energy source, but despite decades of research, it is difficult to sustain in any meaningful way. Put simply, the challenge is to confine the particles involved, control the temperature and harvest the energy in a process where even the smallest change can have huge consequences. For now, the energy needed to drive a fusion reaction far outweighs the energy it produces.

There’s only one way to model the kind of nonlinear interactions produced by magnetically confined burning plasmas – including processes like microturbulence, mesoscale energetic particle instabilities, radio frequency waves, macroscopic magnetohydrodynamic modes and collisional transport. And that way involves supercomputers and some very cool coding.

The Gyrokinetic Toroidal Code (GTC) is one such scientific code, and it happens to be one of 13 projects chosen by the Center for Accelerated Application Readiness (CAAR) to run on the brand-new “Summit” supercomputer at Oak Ridge National Laboratory, one of the key supercomputing centers run by the US Department of Energy (DoE).

Summit is the new jewel in the crown of US supercomputing. It succeeds Titan, which was the most powerful machine in the world five years ago and, with 27 petaflops of peak performance, is currently ranked seventh. Summit targets 200 petaflops of peak performance at 13 megawatts of power for traditional HPC simulations, and more than 3 exaflops for machine learning codes, which should make it the fastest and smartest supercomputer in the world. It’s the US answer to China’s Sunway TaihuLight.

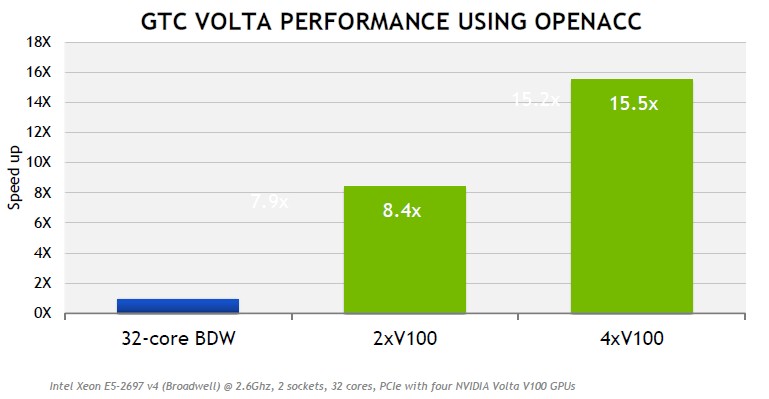

Summit is being built by IBM, Nvidia, and Mellanox Technologies for the DoE’s Oak Ridge Leadership Computing Facility (OLCF). Like Titan, Summit is a hybrid CPU-GPU system: 4,608 nodes, each with two IBM Power9 processors and six Nvidia Volta V100 GPU accelerators. You can see the performance benchmarks in the chart below. Each node will have a large coherent memory, 512 GB of DDR4 plus 96 GB of HBM, all directly addressable from both the CPUs and the GPUs, and an additional 1,600 GB of NVRAM that can be configured either as a burst buffer or as extended memory.

Summit will be a powerhouse for scientific supercomputing, with projects already underway that use machine learning to help select the best cancer treatment for a given patient and to classify the types of neutrino tracks seen in experiments. Porting GTC to the new supercomputer was an easy choice too.

“We look at different things when we make these decisions and a very important one is the science domain that the application supports,” says Tjerk Straatsma, the OLCF’s Scientific Computing Group leader, explaining why GTC was chosen for Summit.

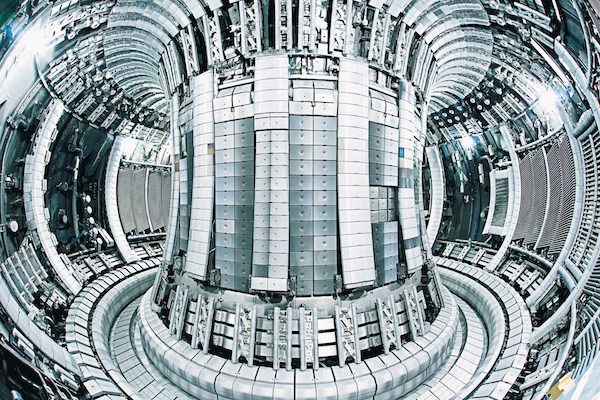

The DoE is also one partner in the huge international consortium building the ITER fusion reactor in France, a multi-billion-pound bid to create the first large-scale fusion reactor to sustain a burning plasma that produces more energy than it consumes. GTC plays an integral role in getting that hope off the ground by simulating plasma turbulence within tokamak fusion devices.

A tokamak uses magnetic fields to confine the plasma within a donut-shaped (toroidal) chamber, where the charged particles circulate around the torus. GTC simulates the motions of these particles through the donut using a particle-in-cell (PIC) algorithm. During each PIC time step, the charge of the particles is interpolated onto a grid, the electric field is computed on that grid, the field is interpolated from the grid back to the particles, and the particles’ phase-space coordinates are updated according to that field.
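To make the four stages of a PIC time step concrete, here is a minimal sketch in C of a one-dimensional, electrostatic PIC step in a periodic box. It is not GTC’s method: GTC is written in Fortran and evolves gyrokinetic particles in toroidal geometry. The grid size `NG`, the `Particle` struct, the linear (cloud-in-cell) weighting, the simple 1D field solve and all function names are illustrative assumptions.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical toy setup: a 1D periodic box with NG grid points in
   normalized units (epsilon_0 = 1). GTC itself is 3D and toroidal. */
#define NG 16

typedef struct {
    double x; /* position, kept in [0, NG*dx) */
    double v; /* velocity */
} Particle;

/* Stage 1: deposit each particle's charge q onto its two nearest grid
   points using linear (cloud-in-cell) weights. */
void deposit(const Particle *p, size_t np, double q, double dx, double rho[NG]) {
    for (int j = 0; j < NG; j++) rho[j] = 0.0;
    for (size_t i = 0; i < np; i++) {
        assert(p[i].x >= 0.0);        /* particles must lie inside the box */
        double s = p[i].x / dx;
        int j = (int)s;               /* left grid point (s >= 0, so truncation = floor) */
        double w = s - j;             /* fractional distance past grid point j */
        rho[j % NG]       += q * (1.0 - w) / dx;
        rho[(j + 1) % NG] += q * w / dx;
    }
}

/* Stage 2: integrate dE/dx = rho on the grid, assuming a uniform
   neutralizing background and a zero-average field (periodic box). */
void solve_field(const double rho[NG], double dx, double E[NG]) {
    double mean = 0.0;
    for (int j = 0; j < NG; j++) mean += rho[j];
    mean /= NG;
    E[0] = 0.0;
    for (int j = 1; j < NG; j++)      /* trapezoidal integration of rho - mean */
        E[j] = E[j - 1] + 0.5 * dx * ((rho[j - 1] - mean) + (rho[j] - mean));
    double emean = 0.0;
    for (int j = 0; j < NG; j++) emean += E[j];
    emean /= NG;
    for (int j = 0; j < NG; j++) E[j] -= emean;
}

/* Stages 3 and 4: interpolate E back to each particle with the same
   weights, then update velocity and position (charge-to-mass ratio qm). */
void gather_and_push(Particle *p, size_t np, double qm, double dx, double dt,
                     const double E[NG]) {
    const double L = NG * dx;
    for (size_t i = 0; i < np; i++) {
        double s = p[i].x / dx;
        int j = (int)s;
        double w = s - j;
        double Ep = (1.0 - w) * E[j % NG] + w * E[(j + 1) % NG];
        p[i].v += qm * Ep * dt;
        p[i].x += p[i].v * dt;
        while (p[i].x < 0.0) p[i].x += L;   /* wrap into the periodic box */
        while (p[i].x >= L) p[i].x -= L;
    }
}

/* One full PIC time step: deposit, field solve, gather, push. */
void pic_step(Particle *p, size_t np, double q, double qm, double dx, double dt) {
    double rho[NG], E[NG];
    deposit(p, np, q, dx, rho);
    solve_field(rho, dx, E);
    gather_and_push(p, np, qm, dx, dt, E);
}
```

Using the same linear weights for the charge deposit and the field gather is a common design choice in PIC codes.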

That’s a lot of moving parts, which is why massively parallel systems are so instrumental in advancing this science, and Summit represents a huge step change in how accurately GTC can simulate plasma turbulence.

“The confinement of the alpha particles involves many physical processes and each process involves different spatial scales and timescales,” explains Zhihong Lin, professor of physics and astronomy at the University of California, Irvine (UCI), and the creator of GTC.

“In the past, we could only simulate one process at a time in order to resolve both spatial scale and timescale for that particular process. Now with more powerful computers, we can use more particles, have higher resolution and run a longer time simulation,” he says.

All this modelling should help researchers understand how turbulence transports the alpha particles (the helium nuclei produced by fusion reactions) in the tokamak and ultimately drives them out of the fusion device, a result nobody wants. With that understanding, engineers can develop the technology needed to confine the alpha particles over extended periods.

GTC has been kicking around for more than 20 years and is used by a variety of researchers on different systems across the world. Because it was originally written in Fortran 90/95, porting it to new hybrid supercomputers while maintaining as few versions of the source code as possible has been challenging. To run on Summit, OLCF and the GTC team chose PGI compilers and OpenACC to offload the work to the GPUs without rewriting the original code.

“They decided to go with OpenACC, which is a directive-based method to offload work to GPUs. You can actually use your original code but tell the compiler that certain loops should be offloaded to the GPU to make it faster,” says Straatsma.

“The advantage to this is that it can run on machines that have these GPU accelerators, but also still runs on machines without. You would get a slightly better performance out of it if you recoded everything in CUDA, but it would only run on NVIDIA GPUs, so it would be harder to port it from one machine to the other. In order to be portable, they use OpenACC.”
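As a rough illustration of what Straatsma describes, the C sketch below marks one loop for GPU offload with an OpenACC directive. GTC itself is Fortran, where the equivalent directive is written as a `!$acc parallel loop` comment line; the function `push_positions` and its arguments are hypothetical, and the loop stands in for the much larger particle loops in a real code.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical example: advance particle positions by one time step.
   Every iteration is independent, which makes the loop a candidate
   for offload. */
void push_positions(double *restrict x, const double *restrict v, size_t n, double dt) {
    assert(n == 0 || (x != NULL && v != NULL));
    /* An OpenACC-enabled compiler (for example PGI with -acc) generates GPU
       code for this loop; copy/copyin move the arrays between host and
       device memory. A compiler invoked without OpenACC support ignores the
       pragma and runs the same loop on the CPU. */
#pragma acc parallel loop copy(x[0:n]) copyin(v[0:n])
    for (size_t i = 0; i < n; i++) {
        x[i] += v[i] * dt;
    }
}
```

Because the directive is only a hint to the compiler, the same source file builds for machines with or without GPUs, which is the portability trade-off against a CUDA rewrite that Straatsma points to.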

With the help of GTC, ITER aims to create its first plasma in 2025 and scale up to maximum power output by 2035. Its success would revolutionize world energy production and make the price tag of over £16 billion well worth it to the international consortium of the US, the EU, India, Russia, China, South Korea, and Japan. But it’s not the only group with skin in the game.

Late in 2015, a €1 billion reactor in Germany produced its first helium plasma, which lasted one tenth of a second at a temperature of around one million degrees. The reactor is a stellarator, in which the plasma ring is twisted into a shape often compared to a Möbius strip rather than a simple donut.

Even Google is in on the action, having developed a new algorithm with leading fusion firm Tri Alpha Energy, backed by Microsoft co-founder Paul Allen. The Optometrist algorithm aims to combine HPC with human judgment to find better solutions for the problems of controlling plasma reactions.

But every attempt to make the dream of clean, usable fusion energy a reality has something in common. We are going to need next-generation supercomputers and portable coding to make it so.
