The Barcelona Supercomputing Center is one of the flagship HPC facilities in Europe, and it arguably has the most elegant and spiritual datacenter in the world, housed inside the Torre Girona Chapel. While BSC has been a strong proponent of IBM’s Power processors in past systems, for the core MareNostrum 4 contract that went out for bid in 2016, the center went with Intel’s “Skylake” Xeon SP processors as the core compute. It also hedged its bets by mixing in a fair amount of other architectures, all adding up to 13.7 petaflops, with the core cluster linked by Intel’s 100 Gb/sec Omni-Path interconnect.

IBM is the prime contractor for the MareNostrum 4 system, which is more than an order of magnitude more powerful than its predecessor, a cluster based on 56 Gb/sec InfiniBand interconnects with “Sandy Bridge” Xeon E5 processors and a smattering of “Knights Corner” Xeon Phi 5110P parallel X86 coprocessors. That primary MareNostrum 4 cluster, which is ranked number 16 on the November 2017 Top 500 list, became operational back in June 2017, and we profiled the core machine in the autumn when it started doing real work, not just attaining its Linpack Fortran benchmark rating for the Top 500 supercomputer rankings. Just to review, that core machine is actually built by Lenovo (which acquired the former System x server business from Big Blue) and has 3,456 nodes (48 racks), each with a pair of 24-core Xeon SP-8160 Platinum processors running at 2.1 GHz, for a total of 165,888 cores. The Linpack rating is based on 153,216 of those cores (3,192 nodes), which have a peak performance of 10.3 petaflops and delivered a sustained 6.47 petaflops on the Linpack test, for a computational efficiency of 62.8 percent.
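The core-count and efficiency figures can be checked with a few lines of arithmetic. This is a back-of-the-envelope sketch that assumes (this is not stated in the text) 32 double-precision FLOPs per core per cycle for the Xeon SP-8160, that is, two AVX-512 FMA units. Under that assumption, the 153,216-core count reproduces the 10.3 petaflops peak and 62.8 percent efficiency, and it covers 3,192 of the 3,456 nodes.

```python
# Back-of-the-envelope check of the core MareNostrum 4 Xeon SP cluster figures.
# ASSUMPTION (not from the text): each Xeon SP-8160 core retires 32
# double-precision FLOPs per cycle (two AVX-512 FMA units x 8 doubles x 2 ops).

NODES = 3_456
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 24
CLOCK_GHZ = 2.1
DP_FLOPS_PER_CYCLE = 32  # assumed, see comment above

LINPACK_CORES = 153_216  # core count behind the Top 500 rating
RMAX_PFLOPS = 6.47       # sustained Linpack result


def peak_pflops(cores: int) -> float:
    """Theoretical peak: cores x GHz x FLOPs/cycle gives gigaflops; /1e6 gives petaflops."""
    return cores * CLOCK_GHZ * DP_FLOPS_PER_CYCLE / 1e6


cores_per_node = SOCKETS_PER_NODE * CORES_PER_SOCKET
full_cores = NODES * cores_per_node
linpack_nodes = LINPACK_CORES // cores_per_node
rpeak = peak_pflops(LINPACK_CORES)
efficiency = RMAX_PFLOPS / rpeak

print(f"cores in all {NODES:,} nodes: {full_cores:,}")
print(f"nodes behind {LINPACK_CORES:,} cores: {linpack_nodes:,}")
print(f"peak of those cores: {rpeak:.2f} PF")
print(f"Linpack efficiency: {efficiency:.1%}")
```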

As we explained two years ago, BSC would have considered a Power-based core system if IBM had been able to deliver its “Nimbus” Power9 chip on a schedule similar to that of Intel with the Skylake Xeons. As it turns out, both chips arrived later than Intel and IBM, respectively, had planned, but Skylake made it to market considerably earlier than Nimbus.

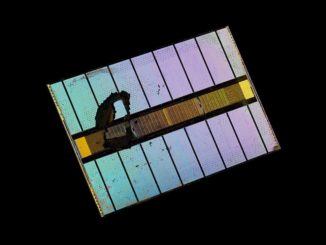

Just the same, BSC hedged some of its bets, and it bought three racks of IBM’s “Newell” Power AC922 systems, the same nodes used in the “Summit” supercomputer at Oak Ridge National Laboratory and the companion “Sierra” machine at Lawrence Livermore National Laboratory. BSC is the first supercomputing center in Europe to get its hands on the Newell machines, which support either four or six of Nvidia’s top-end “Volta” V100 GPU accelerators. Those three racks hold a total of 54 hybrid CPU-GPU Power AC922 nodes, each with a pair of 20-core Power9 chips running at 2.1 GHz, 512 GB of DDR4 main memory, 6.4 TB of flash, and four Volta accelerators with 16 GB of frame buffer memory apiece. Add it all up, and the 54 nodes have 2,160 cores, 27 TB of DDR4 memory, and 345.6 TB of flash on the CPU side, and 216 GPUs with 17,280 streaming multiprocessors and 3.4 TB of frame buffer memory on the GPU side. All of the nodes are connected by 100 Gb/sec InfiniBand from Mellanox Technologies.
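The partition totals are simply the per-node specs multiplied by 54. The sketch below rolls them up; the 80 streaming multiprocessors per V100 is Nvidia’s published figure for the Tesla V100 rather than something stated here, and memory is converted at 1 TB = 1,024 GB, which is how the 27 TB and 3.4 TB figures round out.

```python
# Roll the per-node specs of the Power AC922 partition up to partition totals.
# Per-node numbers come from the text; 80 SMs per Tesla V100 is Nvidia's
# published SM count for that part.

from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    sockets: int
    cores_per_socket: int
    dram_gb: int
    flash_tb: float
    gpus: int
    sms_per_gpu: int
    hbm_gb_per_gpu: int


AC922 = NodeSpec(sockets=2, cores_per_socket=20, dram_gb=512,
                 flash_tb=6.4, gpus=4, sms_per_gpu=80, hbm_gb_per_gpu=16)
NODES = 54


def totals(node: NodeSpec, count: int) -> dict:
    """Multiply each per-node resource by the node count."""
    gpus = node.gpus * count
    return {
        "cores": node.sockets * node.cores_per_socket * count,
        "dram_tb": node.dram_gb * count / 1024,      # binary TB
        "flash_tb": node.flash_tb * count,
        "gpus": gpus,
        "sms": gpus * node.sms_per_gpu,
        "hbm_tb": gpus * node.hbm_gb_per_gpu / 1024,  # binary TB
    }


partition = totals(AC922, NODES)
for name, value in partition.items():
    text = f"{value:,.1f}" if isinstance(value, float) else f"{value:,}"
    print(f"{name}: {text}")
```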

Here’s the funny bit, and why many (including us) are firm believers in accelerated computing. The MareNostrum 3 system, which was no slouch in its day, weighed in at 1.1 petaflops peak and had 3,056 nodes. The 54 nodes in the Power9-V100 portion of the MareNostrum 4 hybrid cluster weigh in at 1.48 petaflops peak, according to BSC, which is a 35 percent increase in performance with a 98.2 percent contraction in node count.
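The 35 percent and 98.2 percent figures are plain percent-change arithmetic on the peak and node counts quoted above. The sketch below also derives the peak-per-node ratio, which the comparison implies but does not state.

```python
# Compare the 54-node Power9-V100 slice with all of MareNostrum 3,
# using the peak figures quoted in the text.

MN3_PEAK_PF, MN3_NODES = 1.1, 3_056
P9_PEAK_PF, P9_NODES = 1.48, 54


def pct_change(old: float, new: float) -> float:
    """Signed percent change from old to new."""
    return (new - old) / old * 100


perf_gain = pct_change(MN3_PEAK_PF, P9_PEAK_PF)
node_change = pct_change(MN3_NODES, P9_NODES)
per_node_ratio = (P9_PEAK_PF / P9_NODES) / (MN3_PEAK_PF / MN3_NODES)

print(f"peak performance: {perf_gain:+.1f}%")
print(f"node count: {node_change:+.1f}%")
print(f"peak per node: {per_node_ratio:.0f}x")
```

The per-node ratio of roughly 76X is the number that really makes the case for accelerated computing.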

Based on early benchmark results, the Power9-V100 portion of MareNostrum 4 has a rating of 1.01 petaflops on the Linpack parallel benchmark, or a computational efficiency of 69.2 percent. This part of the cluster was rated at 85.8 kilowatts, which works out to 11.77 gigaflops per watt. That’s about the same power efficiency as several DGX-1 systems on the Green 500 list, which ranks machines by their energy efficiency rather than their computational throughput. The most energy efficient machine tested so far is the Shoubu System B at RIKEN in Japan, a ZettaScaler system from ExaScaler that uses PEZY-SC2 accelerators from PEZY Computing to achieve 17.01 gigaflops per watt.
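The Green 500 metric is just sustained Linpack flops divided by the power drawn during the run. This sketch converts the quoted petaflops and kilowatts into gigaflops per watt; it does not model the Green 500 power measurement rules themselves.

```python
# Energy efficiency in the Green 500 sense: sustained Linpack flops per watt.


def gflops_per_watt(rmax_pflops: float, power_kw: float) -> float:
    """Convert petaflops and kilowatts into gigaflops per watt."""
    gflops = rmax_pflops * 1e6  # 1 petaflops = 1,000,000 gigaflops
    watts = power_kw * 1e3
    return gflops / watts


mn4_p9 = gflops_per_watt(1.01, 85.8)
SHOUBU_B = 17.01  # gigaflops per watt, as quoted in the text

print(f"MareNostrum 4 Power9-V100: {mn4_p9:.2f} GF/W")
print(f"Shoubu System B advantage: {SHOUBU_B / mn4_p9:.2f}x")
```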

On the much more strenuous High Performance Conjugate Gradients (HPCG) benchmark, the GPU-enhanced Power9 systems ran at a mere 27.5 kilowatts and delivered 28 teraflops of oomph, which works out to about 1.02 gigaflops per watt and a 2.77 percent computational efficiency against the Linpack rating. This horrible level of performance is actually typical on the HPCG test, so don’t be alarmed. The old K supercomputer at RIKEN, which cost a cool $1.2 billion, mind you, has a peak performance of 11.28 petaflops and a sustained Linpack rating of 10.51 petaflops, and it delivered 0.603 petaflops on HPCG, or 5.3 percent of peak and 5.7 percent of its Linpack rating.
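HPCG results are usually quoted as a fraction of peak, while the MareNostrum figure above is against Linpack, so it helps to compute both for both machines. This sketch takes the 28 teraflops and 27.5 kilowatts at face value; the K computer’s 11.28 petaflops peak comes from its Top 500 entry, and the 1.48 petaflops peak for the Power9-V100 slice is BSC’s figure quoted earlier.

```python
# HPCG results as a fraction of Linpack and of peak, plus HPCG energy
# efficiency, using the figures quoted in the text (teraflops, kilowatts).
# K computer peak of 11.28 petaflops is from its Top 500 entry.


def pct(part: float, whole: float) -> float:
    """part as a percentage of whole."""
    return part / whole * 100


# MareNostrum 4 Power9-V100 partition
p9_hpcg_tf, p9_linpack_tf, p9_peak_tf, p9_hpcg_kw = 28.0, 1_010.0, 1_480.0, 27.5
# K computer
k_hpcg_tf, k_linpack_tf, k_peak_tf = 603.0, 10_510.0, 11_280.0

p9_vs_linpack = pct(p9_hpcg_tf, p9_linpack_tf)
p9_vs_peak = pct(p9_hpcg_tf, p9_peak_tf)
p9_gf_per_w = (p9_hpcg_tf * 1e3) / (p9_hpcg_kw * 1e3)  # gigaflops / watts
k_vs_linpack = pct(k_hpcg_tf, k_linpack_tf)
k_vs_peak = pct(k_hpcg_tf, k_peak_tf)

print(f"Power9-V100: {p9_vs_linpack:.2f}% of Linpack, {p9_vs_peak:.1f}% of peak, "
      f"{p9_gf_per_w:.2f} GF/W")
print(f"K computer: {k_vs_linpack:.1f}% of Linpack, {k_vs_peak:.1f}% of peak")
```

On a like-for-like basis, the K machine’s custom interconnect and balanced memory design roughly doubles the HPCG fraction of the GPU nodes, which is the architecture-versus-economics trade-off in a nutshell.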

The test drives the architecture, remember. And so do the economics. It is a lot cheaper to buy Power AC922 nodes than it was to buy K supercomputer nodes, even if you adjust for crazy pricing on GPUs and for inflation.

As part of its hedge on future architectures, the MareNostrum 4 cluster was supposed to get a 500 teraflops slice of the pre-exascale “Aurora” system that was commissioned from Intel in partnership with supercomputer maker Cray and that was to be based on Intel’s now defunct “Knights Hill” Xeon Phi processors and its 100 Gb/sec Omni-Path interconnect. That original Aurora system was canceled and is now being re-architected with a different Intel processor and presumably at least a 400 Gb/sec Omni-Path interconnect (it is hard to imagine that the 200 Gb/sec of the second generation Omni-Path, which has yet to be announced, will be enough). It is unclear if MareNostrum will get a slice of this Aurora A21 machine, due in 2021, but it seems likely. MareNostrum 4 will also include more than 500 teraflops of the “Post-K” Arm-based compute nodes being built for RIKEN by Fujitsu. That will let BSC play around with three other architectures over the next few years in addition to the core Xeon SP cluster. Consider it a way to get aggressive pricing and good treatment from Intel and its partners.

We live in a data-rich digital era, and competition drives us to innovate and excel. Hybrid infrastructure will only increase our efficiency in that landscape.