Networking has always been the laggard in the enterprise datacenter. As servers and then storage appliances became increasingly virtualized and disaggregated over the past 15 years or so, the network stubbornly stuck with the appliance model, closed and proprietary. As other datacenter resources became faster, more agile, and easier to manage, many of those efficiencies were hobbled by the network, which could take months to reprogram and could require new hardware before any significant change could be made.

However slowly, and thanks largely to the hyperscalers and now telcos and other communications service providers, that has begun to change. The rise of software defined networking (SDN) and network function virtualization (NFV) has forced significant changes in networks, which have increasingly become based on open software.

SDN decoupled the control plane in networks from the underlying hardware, making networks easier to program, more flexible and scalable, more adaptive to current conditions, and less costly to acquire and operate. With virtualized network functions, tasks such as load balancing, routing, and intrusion detection and prevention were moved off proprietary switches and into software that could run on industry-standard systems, which are much less expensive than proprietary switches. New network operating systems have been introduced, and white-box switch makers are becoming a larger part of the networking hardware mix. Hyperscalers such as Facebook, with its Open Compute Project initiative, and Google, determined to make their massive datacenters more efficient and cost-effective, helped fuel the drive for more efficient, software-based networking through internal efforts to build their own networking hardware and software.
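To make the split between control plane and hardware more concrete, here is a minimal sketch, assuming a hypothetical controller that holds a global view of a small four-switch topology. The controller computes every switch's next hop toward every destination with a breadth-first search and produces per-switch forwarding tables that would then be pushed down to the switches, which only perform lookups. The topology, switch names, and the `compute_forwarding_tables` function are illustrative assumptions, not OpenFlow's or any vendor's API.

```python
from collections import deque

# Hypothetical topology: switch -> directly connected neighbors (links assumed bidirectional).
TOPOLOGY = {
    "s1": ["s2", "s3"],
    "s2": ["s1", "s4"],
    "s3": ["s1", "s4"],
    "s4": ["s2", "s3"],
}


def compute_forwarding_tables(topology):
    """Centralized control plane: derive each switch's next hop to every destination.

    The switches (data plane) run no routing logic; they just look up these tables.
    """
    tables = {switch: {} for switch in topology}
    for dst in topology:
        # BFS outward from the destination; the node we arrived from is the next hop toward dst.
        next_hop = {dst: None}
        queue = deque([dst])
        while queue:
            node = queue.popleft()
            for neighbor in sorted(topology[node]):
                if neighbor not in next_hop:
                    next_hop[neighbor] = node
                    queue.append(neighbor)
        for switch, hop in next_hop.items():
            if hop is not None:  # a switch needs no entry for itself
                tables[switch][dst] = hop
    return tables


tables = compute_forwarding_tables(TOPOLOGY)
print(tables["s1"])  # {'s2': 's2', 's3': 's3', 's4': 's2'}
```

Because the logic lives in one program with a full view of the network, changing routing behavior means changing the controller, not touching each box individually.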

The drive for more efficient and responsive networks also has had a determinedly open source strain throughout it, particularly with the launch of OpenFlow in 2011. Soon after SDN and NFV became the talk of the networking world, multiple open-source projects, many of them driven by established vendors, cropped up to create common frameworks and platforms on which other capabilities could be built and that could accelerate adoption of the technologies. Those included such efforts as OpenDaylight (whose founding members included Microsoft, Cisco Systems, and IBM), Open Networking Automation Platform (ONAP), and Open Platform for NFV (OPNFV). In addition, networking vendors began donating their own SDN technologies to open-source communities, with Juniper Networks and its OpenContrail being the latest example. Juniper in December 2017 moved its OpenContrail codebase to the Linux Foundation, which has become the home for the bulk of open networking efforts (nine of the ten largest such initiatives). In fact, the Linux Foundation had so many such projects under its umbrella that earlier this month the group created the LF Networking Fund to house them all and make it easier for the various communities to collaborate.

The hyperscalers and other service providers such as AT&T and Verizon have embraced SDN and NFV in their networks as they look to make their infrastructures faster and more responsive. Enterprise adoption, however, has been slow due to the added complexity that network virtualization can bring, as well as a lack of skills at many of these companies. Still, the development of software-centric approaches has given rise to the latest step in the evolution of networks: intent-based networking. The idea has been talked about for the past couple of years, but it was pushed to the forefront last year when Cisco, with its large megaphone, kicked off its initiative.

The idea behind intent-based networking is essentially to create an environment where a network administrator defines the desired state of a network and the network software automatically implements the policies and procedures needed to achieve it. Intent-based networking is getting attention even though the technology itself isn't fully baked, for three reasons: the goal of greater automation and more proactive networks is attractive to enterprises, underlying technologies such as machine learning algorithms are gaining wider use, and vendors are beginning to push its merits and roll out the first products in their portfolios.

“The big problem we are trying to solve is the ability of networks today to solve problems proactively,” Sundar Iyer, distinguished engineer at Cisco, tells The Next Platform. “If you look at the past couple of decades of networking, we do day-to-day operations and things break. We do security and a security issue occurs. What’s needed to fix that? If a compliance issue is found, that’s a year after things break. We do changes, and change may occur every week, every day. Sometimes we make mistakes in these changes, then we have to go back in and fix those.”

Having a networking system that can anticipate security issues, other problems, or necessary changes, and that automatically implements the intent of the network administrator, can address many of those problems, Iyer says. Cisco estimates that IT staffs spend 43 percent of their time troubleshooting, while network operators spend four times as long collecting data as analyzing it when troubleshooting.

Established networking vendors such as Cisco, Juniper Networks, and Extreme Networks, along with smaller companies such as Apstra and Forward Networks, are touting intent-based networking as the next logical step in the software-centric drive in networks. Juniper in December 2017 rolled out a set of Juniper Bots, based on its Contrail SDN controller, that are designed to bring greater automation to networks. The bots automate network peering for improved policy enforcement and on-demand scaling, network auditing and provisioning changes, and network health tracking, taking those tasks out of human hands. Last year, Apstra unveiled the latest version of its AOS network operating system, which brings greater automation to networks by collecting and storing configuration, telemetry, and intent information and using APIs and tools to ensure that the network is always in the state defined by network administrators.

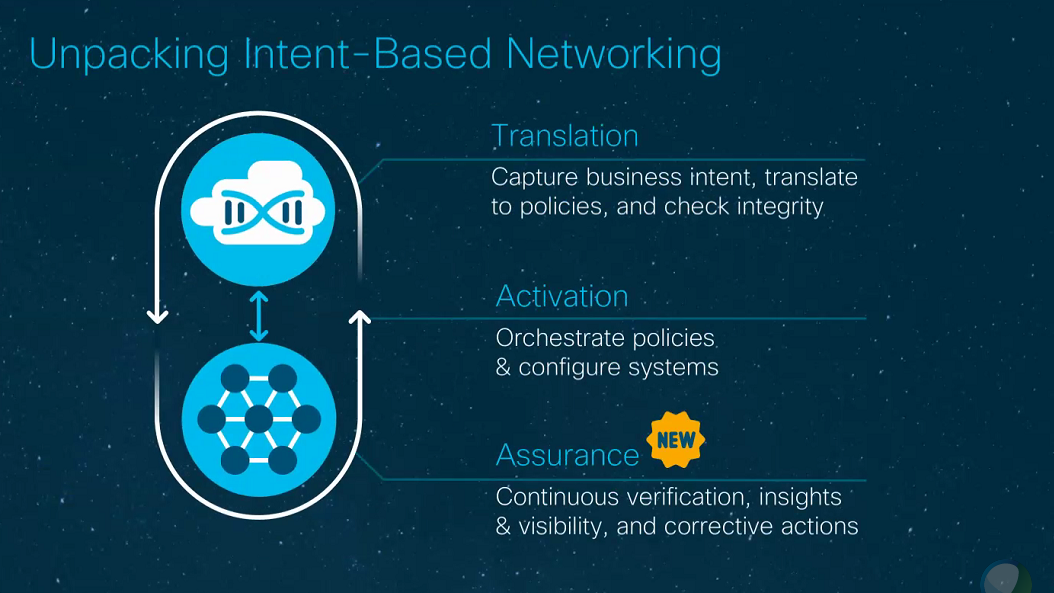

For Cisco, the intent-based networking initiative is part of a larger strategy to move away from its history as a hardware seller and become more of a software and services vendor that relies on subscription sales and recurring revenue rather than one-time upfront infrastructure sales. Over the past several years, Cisco has rolled out its Application Centric Infrastructure (ACI) for software-centric networking based on its Nexus 9000 switches, and Tetration for real-time datacenter analytics, both of which are key parts of its intent-based networking strategy. At its Cisco Live show this week in Barcelona, the company unveiled the third leg of the stool: assurance capabilities that verify the network is running in accordance with set policies and make recommendations when something needs to be fixed or changed.

Last year, Cisco addressed the other two legs of the stool: translation, which captures the intent of network administrators and translates it into policies, and activation, which orchestrates those policies and sets configurations to meet the intent.
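The translation and activation steps can be illustrated with a minimal sketch, assuming a hypothetical intent format in which an administrator states only which endpoint groups may talk to each other and on which port. Translation expands each intent into concrete allow rules; activation assigns those rules to the devices that must enforce them, producing per-device configurations an orchestrator would push. The `Intent` class, the group and device names, and the rule syntax are all illustrative assumptions, not Cisco's DNA Center or ACI data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intent:
    """Hypothetical high-level intent: 'src_group may reach dst_group on port'."""
    src_group: str
    dst_group: str
    port: int


# Hypothetical inventory: group membership, and which switch each host is attached to.
GROUPS = {"web": ["10.0.1.10", "10.0.1.11"], "db": ["10.0.2.20"]}
ATTACHMENT = {"10.0.1.10": "leaf1", "10.0.1.11": "leaf1", "10.0.2.20": "leaf2"}


def translate(intents):
    """Translation: expand each intent into concrete (src_ip, dst_ip, port) allow rules."""
    rules = set()
    for intent in intents:
        for src in GROUPS[intent.src_group]:
            for dst in GROUPS[intent.dst_group]:
                rules.add((src, dst, intent.port))
    return rules


def activate(rules):
    """Activation: place each rule on the switch attached to its destination host.

    Returns device -> list of config lines; a real orchestrator would push these.
    """
    configs = {}
    for src, dst, port in sorted(rules):
        configs.setdefault(ATTACHMENT[dst], []).append(f"permit {src} -> {dst}:{port}")
    return configs


intents = [Intent("web", "db", 5432)]
for device, lines in activate(translate(intents)).items():
    print(device, lines)
```

Enforcing each rule at the destination's switch is just one simple placement choice made for the sketch; the administrator states one line of intent, and the expansion into per-host, per-device rules is left to software.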

With assurance, “we can continuously verify that what you intended to do is actually being delivered,” Sachin Gupta, senior vice president of product management for enterprise networks at Cisco, explains. “‘I programmed the memory, the forwarding logic, in the network to do something. Is it correct? Is it doing what I expect? Am I compliant? Is the configuration of all my network nodes compliant?’ We also need to get insights and visibility into the infrastructure to monitor and troubleshoot and remediate much more quickly. The third part is, how do we take the decades of knowledge Cisco has with networks in working with our customers to automatically identify issues and help you with corrective actions? You’re not just looking at raw data; the system is actually telling you, ‘We believe this is the issue that’s facing you and here are the steps you can take to mitigate.’”
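At its simplest, the "Am I compliant?" question Gupta poses is a comparison of intended state with observed state. A minimal sketch, assuming both are available as sets of rule strings per device (the `check_compliance` function, device names, and rules are hypothetical; commercial assurance products work from far richer telemetry and models):

```python
def check_compliance(intended, observed):
    """Compare intended vs. observed rules per device and suggest corrective actions.

    intended, observed: dict of device name -> set of configured rule strings.
    Returns device -> list of findings; compliant devices are omitted.
    """
    findings = {}
    for device in sorted(set(intended) | set(observed)):
        want = intended.get(device, set())
        have = observed.get(device, set())
        issues = []
        for rule in sorted(want - have):
            issues.append(f"missing '{rule}': re-push intended config")
        for rule in sorted(have - want):
            issues.append(f"unexpected '{rule}': review and remove")
        if issues:
            findings[device] = issues
    return findings


intended = {"leaf1": {"permit web -> db:5432"}, "leaf2": {"deny any -> db:22"}}
observed = {"leaf1": {"permit web -> db:5432"}, "leaf2": {"permit any -> db:22"}}
print(check_compliance(intended, observed))
```

Running such a check on a schedule, or whenever new configuration or telemetry arrives, is what turns a one-time audit into the continuous verification described above, and each finding already carries a suggested next step rather than raw data.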

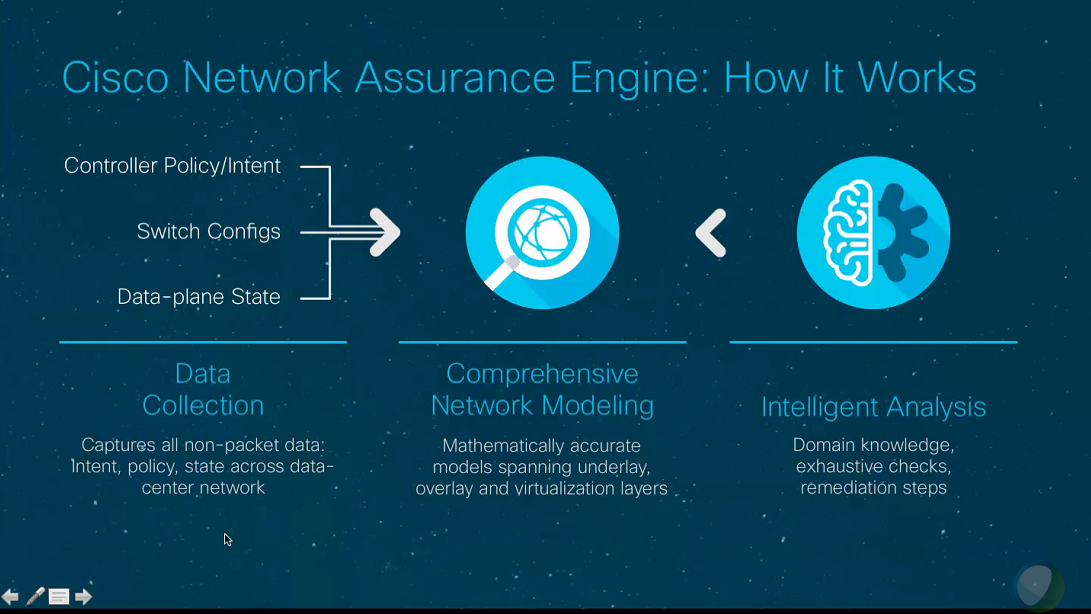

Cisco’s assurance capabilities come in part through DNA Center Assurance, software that uses data from across datacenters to find problems and recommend fixes. The company’s Meraki Wireless Health software plays the same role in wireless networks. Another key part of the assurance play is Cisco’s Network Assurance Engine, which runs in a virtual machine and is used in datacenters that run ACI.

“What the network assurance engine does is build a precise mathematical model of the entire datacenter,” Cisco’s Iyer says. “We read policies, configurations, security information, routes, IP addresses, VLANs – virtually every piece of metadata is read in to build this comprehensive model. The concept of modeling is not new. We’ve had many fields that have done models for the last twenty years. We use models when we do hardware verification. Once you have a model, you have a map of how networks behave. What changes the game when it comes to networking is the ability to look at every conceivable possibility in the network. The beauty of mathematical modeling is the ability to argue over every conceivable possibility. With models, it means you can do a number of interesting things – you can ask questions from it, you can do analysis, you can essentially look at it and ask did it do what was intended.”
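Iyer's point, that a model lets you check every possibility instead of waiting for live traffic to expose a problem, can be shown with a toy example: a first-match ACL modeled as a function and evaluated against an intent over every (source group, destination group, port) combination in a small finite space. Everything here is a hypothetical illustration using brute-force enumeration over a tiny space; it does not reflect how the Network Assurance Engine works internally, and real tools need far more scalable techniques to cover full address spaces.

```python
from itertools import product

# Hypothetical first-match ACL: (src_group, dst_group, port or None for any, action).
ACL = [
    ("web", "db", 5432, "permit"),
    ("app", "any", None, "permit"),  # a well-meant change that also opens app -> db
    ("any", "db", None, "deny"),
    ("any", "any", None, "permit"),
]

GROUPS = ["web", "app", "db"]
PORTS = [22, 443, 5432]


def evaluate(src, dst, port):
    """Model of the device: return the action of the first matching ACL entry."""
    for rule_src, rule_dst, rule_port, action in ACL:
        if rule_src in ("any", src) and rule_dst in ("any", dst) and rule_port in (None, port):
            return action
    return "deny"  # implicit deny when nothing matches


def intent(src, dst, port):
    """Hypothetical intent: only 'web' may reach 'db', and only on port 5432."""
    if dst == "db":
        return "permit" if (src == "web" and port == 5432) else "deny"
    return "permit"


# Exhaustively compare the model with the intent over the whole (small) space.
violations = [
    (src, dst, port)
    for src, dst, port in product(GROUPS, GROUPS, PORTS)
    if evaluate(src, dst, port) != intent(src, dst, port)
]
print(violations)  # [('app', 'db', 22), ('app', 'db', 443), ('app', 'db', 5432)]
```

No packet ever has to flow for the check to catch that the second rule lets the `app` group reach the database on every port, which is the kind of "did it do what was intended" question a model makes answerable.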
