InfiniBand and Ethernet are in a game of tug of war and are pushing the bandwidth and price/performance envelopes constantly. But the one thing they cannot do is get too far out ahead of the PCI-Express bus through which network interface cards hook into processors. The 100 Gb/sec links commonly used in Ethernet and InfiniBand server adapters run up against bandwidth ceilings with two ports running on PCI-Express 3.0 slots, and it is safe to say that 200 Gb/sec speeds will really need PCI-Express 4.0 slots to have two ports share a slot.
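To put rough numbers on that ceiling, here is a minimal sketch that compares slot bandwidth against port demand. It assumes an x16 slot (the article does not say which slot width it has in mind) and counts only the 128b/130b line encoding, ignoring packet and protocol overhead, so real usable throughput would be somewhat lower. The function names are illustrative.

```python
# Sketch: PCI-Express slot bandwidth versus network port demand.
# Assumptions: x16 slot, only 128b/130b line encoding overhead is counted.

PCIE_GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0}  # gigatransfers/sec per lane
ENCODING_EFFICIENCY = 128 / 130  # both generations use 128b/130b encoding


def slot_gbps(generation: str, lanes: int = 16) -> float:
    """Per-direction slot bandwidth in Gb/sec after line encoding."""
    return PCIE_GT_PER_LANE[generation] * lanes * ENCODING_EFFICIENCY


def ports_fit(generation: str, ports: int, port_gbps: float, lanes: int = 16) -> bool:
    """True if every port can run at line rate through a single slot."""
    return ports * port_gbps <= slot_gbps(generation, lanes)


if __name__ == "__main__":
    for gen in ("3.0", "4.0"):
        print(f"PCIe {gen} x16: {slot_gbps(gen):.1f} Gb/sec per direction")
    print("Two 100 Gb/sec ports on PCIe 3.0 x16:", ports_fit("3.0", 2, 100))
    print("One 200 Gb/sec port on PCIe 4.0 x16:", ports_fit("4.0", 1, 200))
```

Under these assumptions a PCI-Express 3.0 x16 slot tops out at roughly 126 Gb/sec per direction, which is why two 100 Gb/sec ports cannot both run flat out through it.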

This, more than any other factor, is why Mellanox Technologies, which has been champing at the bit to double up the speed of its InfiniBand switching, is not expected to ramp up the Quantum InfiniBand switch chips until the middle of next year. Mellanox unveiled the Quantum InfiniBand ASICs at the SC16 supercomputer conference last November, which we analyzed here, and at this year’s SC17 event in Denver, the high performance networking company is rolling out the details on its forthcoming Quantum top-of-rack and modular switches.

As has been the case with prior generations of devices across every switch maker, customers do not always have to use the top speed per port that is available when they buy a switch. Rather, they can use cable splitters to cut the bandwidth per port by a factor of two or sometimes four and jack up the effective port density in the switch and therefore the rack. And, we think a lot of customers who only need 100 Gb/sec networking will do just that, and thereby also position themselves to switch to 200 Gb/sec ports in the future when and if they need to by swapping out the splitter cables for single-port cables.

But in the end, we think that bandwidth will be what customers really want (provided they can get low and consistent latency, that is) and that many clustered systems built using the forthcoming Quantum InfiniBand ASICs will push the ports to 200 Gb/sec, with customers soon wondering when 400 Gb/sec ports will be available. There is generally a two year cadence between speed bumps, so look for an announcement in November 2018 on that and deployments maybe 12 months to 18 months later, depending on where the CPU and PCI-Express roadmaps are in their cycles.

The Quantum ASICs represent a big leap in performance, with 16 Tb/sec of aggregate switching bandwidth and latencies on a port-to-port hop down below 90 nanoseconds. The main innovation here is that the serializer/deserializer (SERDES) communications circuits that are the key part of the switch have signaling that runs at 50 Gb/sec per lane. This is twice the 25 Gb/sec per lane that the Switch-IB chip from 2014 and Switch-IB 2 chip from 2015 had, and it is also twice the signaling rate of the 100 Gb/sec Spectrum Ethernet ASICs that Mellanox has created. (Both the Switch-IB and Switch-IB 2 ASICs also supported 100 Gb/sec ports.) All three of the InfiniBand chips (Switch-IB, Switch-IB 2, and Quantum) have 144 SERDES blocks on them, and with HDR InfiniBand, four lanes of 50 Gb/sec are grouped together to create a 200 Gb/sec port on the switch.
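The lane arithmetic can be sketched in a few lines. The 40-port count is the one the article gives for the QM8700, and counting both directions of every port toward the aggregate figure is an assumption about how the 16 Tb/sec number is derived, not something the article states.

```python
# Sketch: per-port and aggregate bandwidth from lane counts.
# Assumption: aggregate bandwidth counts both directions of every port.

def port_gbps(lanes_per_port: int, lane_gbps: int) -> int:
    """Bandwidth of one port built from ganged SERDES lanes."""
    return lanes_per_port * lane_gbps


def aggregate_tbps(ports: int, gbps_per_port: int, bidirectional: bool = True) -> float:
    """Total switching bandwidth in Tb/sec across all ports."""
    directions = 2 if bidirectional else 1
    return ports * gbps_per_port * directions / 1000


if __name__ == "__main__":
    hdr = port_gbps(lanes_per_port=4, lane_gbps=50)  # HDR: 4 x 50 Gb/sec
    edr = port_gbps(lanes_per_port=4, lane_gbps=25)  # EDR: 4 x 25 Gb/sec
    print(f"HDR port: {hdr} Gb/sec, EDR port: {edr} Gb/sec")
    print(f"40 HDR ports: {aggregate_tbps(40, hdr)} Tb/sec aggregate")
```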

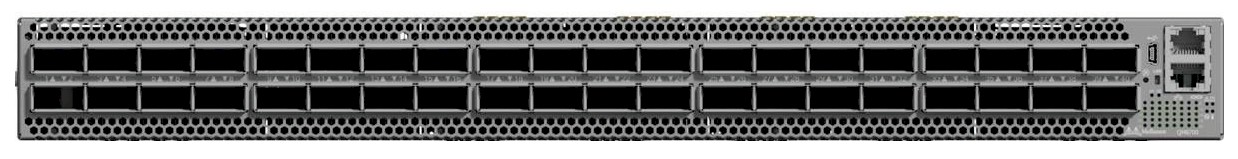

Here is the neat bit. On the new Quantum QM8700 top of rack switch, which was just unveiled, the base configuration comes with 40 ports running at 200 Gb/sec. And yes, you can use cable splitters to cut that down to 100 Gb/sec (or even 50 Gb/sec) per port to get 80 ports or 160 ports, respectively, out of a single device. But Mellanox is also allowing switch ASIC customers to slice the lane groups down in the machine and gang up only two 50 Gb/sec lanes if they want, literally making an 80 port, 100 Gb/sec switch. By doing so, either Mellanox or its switch customers could jack up the port density on the device by 2X and cut the bill of materials by a lot, because it would otherwise take two 40 port switches with two ASICs, two power supplies, and two enclosures to do the same task.
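The port counts for each split follow directly from the lane budget. This is a small sketch of that bookkeeping, assuming the 160 lanes implied by 40 ports of four lanes each; the function name is illustrative and this is not a Mellanox configuration interface.

```python
# Sketch: port count and speed for each way of grouping a fixed lane budget.
# Assumption: 40 ports x 4 lanes = 160 lanes of 50 Gb/sec are available.

def split_modes(total_lanes: int, lane_gbps: int, widths=(4, 2, 1)):
    """Return (ports, Gb/sec per port) for each lanes-per-port width."""
    return [(total_lanes // width, width * lane_gbps) for width in widths]


if __name__ == "__main__":
    for ports, speed in split_modes(total_lanes=160, lane_gbps=50):
        print(f"{ports} ports at {speed} Gb/sec")
```

Whichever width is chosen, the product of ports and per-port speed stays constant, which is why halving the port speed doubles the port count.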

The Quantum switch also has a “Broadwell” Xeon E5 processor embedded on it that supports the subnet manager software stack and can handle up to 2,000 nodes in the cluster fabric from that processor. The MLNX-OS network operating system has the congestion control and adaptive routing necessary for HPC workloads, and also has built in functions to accelerate the MPI and SHMEM protocols commonly used in HPC and in some machine learning setups. The switch can be used in traditional fat tree topologies, but also supports Dragonfly+, SlimFly, and 6D torus topologies.

While the QM8700 top of rack switch has only one Quantum ASIC per box, the CS8510 and CS8500 modular switches gang up bunches of them. The big, bad CS8500 director class switch running the HDR InfiniBand protocol has a total of 60 of the Quantum ASICs to deliver its 800 ports running at 200 Gb/sec. Each leaf in the modular switch has a single Quantum ASIC, with 20 ports facing out to the servers and storage and 20 facing inwards to the backplane of the switch. That backplane is a fully connected fat tree topology that is implemented using 20 of the Quantum ASICs. It is, in essence, like a two-layer leaf-spine network with no oversubscription of ports in a single box, and several of these director switches can be linked to each other to create a three-layer network. The CS8510 is, oddly enough, the smaller director switch, and it is one quarter the size of the big bad one at 200 ports running at 200 Gb/sec.
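The ASIC count for the big director switch can be checked with a short sketch of a non-blocking two-layer design. It assumes each leaf ASIC splits its 40 ports evenly between outward and inward links and that every spine ASIC uses all 40 of its ports for leaf uplinks; the function name is illustrative.

```python
# Sketch: ASIC count for a non-blocking two-layer chassis.
# Assumptions: radix-40 ASICs, leaves split ports 50/50, spines fully used.
import math


def chassis_asics(external_ports: int, radix: int = 40):
    """Return (leaf ASICs, spine ASICs, total ASICs) for a given port count."""
    down = radix // 2                       # leaf ports facing servers and storage
    up = radix - down                       # leaf ports facing the backplane
    leaves = math.ceil(external_ports / down)
    spines = math.ceil(leaves * up / radix)  # every uplink lands on a spine port
    return leaves, spines, leaves + spines


if __name__ == "__main__":
    leaves, spines, total = chassis_asics(800)
    print(f"800 ports: {leaves} leaf + {spines} spine = {total} ASICs")
```

With 800 external ports this yields 40 leaf ASICs and 20 spine ASICs, matching the 60 ASICs cited for the CS8500.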

Anything that a modular switch can do can be done with a group of the top of rack switches set up on a network with one, two, or three layers, Gilad Shainer, vice president of marketing for the HPC products at Mellanox, tells The Next Platform. “You have to deal with fewer cables with a modular switch,” he says, “and once a modular switch is scaled to about 90 percent of its capacity, it is priced competitively with a group of top of rack switches.” In some cases, it is just easier to put a modular switch in the middle or end of a row and be done with it, and some HPC shops do that. Those that are building very large networks, with thousands to tens of thousands of nodes, often use a mix of top of rack and modular switches.

The other big deal with the Quantum HDR InfiniBand switches is that, thanks to the higher port count per ASIC, the doubling of bandwidth, and the possibility of running everything at 100 Gb/sec speeds, they allow InfiniBand networks to scale a lot further than was possible with the prior 100 Gb/sec Switch-IB and Switch-IB 2 chips. In a single-tier EDR setup, one switch with 36 ports is all you get, whereas a Quantum ASIC can be split with cables or at the ASIC down to 80 ports at 100 Gb/sec. That is a factor of 2.2X more ports. With a two-tier network, EDR could have a mix of leaf and spine switches to create a setup with 648 ports at 100 Gb/sec, but the HDR setup can do 3,200 ports, or 4.9X more. And with a three-tier network, EDR topped out at 11,664 ports all interconnected at 100 Gb/sec, but HDR can stretch to 128,000 ports, or a factor of 11X more.
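These scaling figures follow from the standard capacity formula for a non-blocking fat tree, in which a network of n tiers built from radix-k switches supports 2 × (k/2)^n end ports. A minimal sketch, assuming that formula is what underlies the numbers here and that the radix is even:

```python
# Sketch: maximum end ports of a non-blocking fat tree.
# Assumption: standard formula 2 * (radix / 2) ** tiers, with an even radix.

def max_ports(radix: int, tiers: int) -> int:
    """End ports supported by a non-blocking fat tree of the given depth."""
    if radix % 2 != 0 or tiers < 1:
        raise ValueError("radix must be even and tiers must be at least 1")
    return 2 * (radix // 2) ** tiers


if __name__ == "__main__":
    for tiers in (1, 2, 3):
        edr = max_ports(36, tiers)   # 36-port EDR switch
        hdr = max_ports(80, tiers)   # Quantum split to 80 ports at 100 Gb/sec
        print(f"{tiers} tier(s): EDR {edr:,} vs HDR {hdr:,} ({hdr / edr:.1f}X)")
```

Because the radix is raised to the power of the tier count, the advantage of the 80-port configuration compounds with every tier added.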

The other thing that Quantum chips will have in their favor, of course, is that the cost per bit has to go down. That is just the way the network is. If history is any guide, then HDR InfiniBand will carry a 30 percent to 40 percent premium over EDR InfiniBand, but deliver twice the bandwidth per port. That still works out to a 30 percent to 35 percent drop in the cost per bit, if you do the math, but that also translates into a 30 percent to 35 percent drop in the cost per port if you run the HDR switch at 100 Gb/sec.
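The math works out as follows. This sketch treats the premium as applying to the price of a port and assumes the bandwidth per port exactly doubles; both the function name and that per-port interpretation are assumptions for illustration.

```python
# Sketch: drop in cost per bit when price rises by a premium
# while bandwidth per port doubles.

def cost_per_bit_drop(premium: float, bandwidth_ratio: float = 2.0) -> float:
    """Fractional drop in cost per bit relative to the older generation."""
    return 1.0 - (1.0 + premium) / bandwidth_ratio


if __name__ == "__main__":
    for premium in (0.30, 0.40):
        drop = cost_per_bit_drop(premium)
        print(f"{premium:.0%} premium -> {drop:.0%} lower cost per bit")
```

The same ratio applies to the cost per 100 Gb/sec port when each 200 Gb/sec port is split in two, since the price of one HDR port is then spread over two ports.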

The Quantum switches from Mellanox will be shipping around the middle of next year, and the question now is whether the ramp will be faster or slower than the ramps for 56 Gb/sec FDR and 100 Gb/sec EDR InfiniBand. “I think the ramp is going to go faster, and that is because HDR InfiniBand allows you to drop back to 100 Gb/sec,” says Shainer. “Being able to do 80 ports at 100 Gb/sec, and they will get the same speed as EDR but do so cheaper and the HDR switches will go faster, too.”
