Intel is not the only system maker that is looking to converge its processor lines to make life a bit simpler for itself and for its customers as well as to save some money on engineering work. Oracle has just announced its Sparc M8 processor, and while this is an interesting chip, what is also interesting is that a Sparc T8 companion processor aimed at entry and midrange systems was not already introduced and does not appear to be in the works.

There is plenty that is a little weird here. The new Sparc T8 systems are, in fact, going to be using the Sparc M8 processors, which should mean that they are not really T8 systems at all, but rather just smaller M8 systems. It is also a bit strange that Oracle decided to launch these systems two weeks before its own OpenWorld user and partner conference held in San Francisco, and did so without much warning. And it is odd that the M8 chip was not rolled out at the annual ISSCC or Hot Chips conferences if it was impending here in the late summer of 2017.

Subsequent to the publication of this story, Oracle tells The Next Platform that there never was a plan for a T8 chip and that it had always planned to converge the T and M processors. To be precise, this was the message from the Oracle team:

“There was never a plan for a T8 chip. We converged to one chip for both T and M servers with M7. T7 and M7 servers all had the same M7 processor. Same goes for M8 processor-based systems. Whether the server is a T- or M-, it has the same M8 processor in it. The T- and M- server naming is a bit of a holdover from a long time ago when we originally did use different processors for each line.”

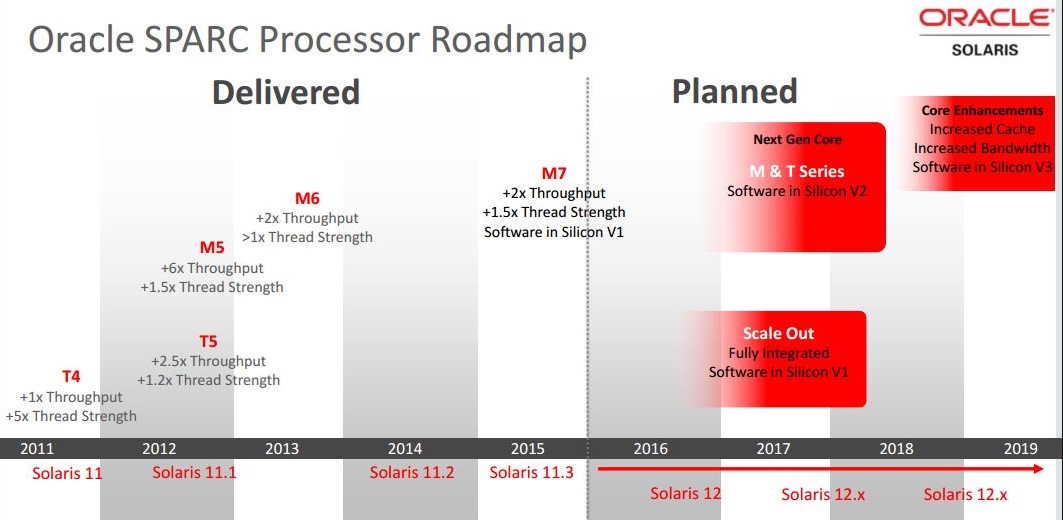

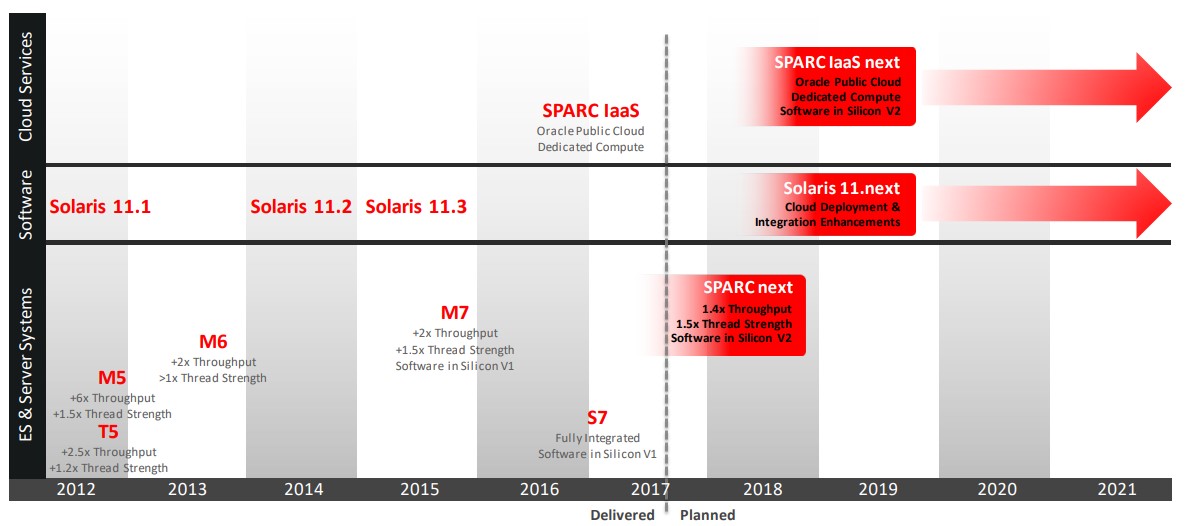

While it is certainly true that Oracle did create T7 and M7 servers using the M7 processor last year, the company’s roadmap and naming conventions absolutely gave the impression that there were T and M processors due in 2017:

It also showed there was something cooking for the scale-out part of the market, which was the “Sonoma” Sparc S7 chip, which was unveiled in August 2015 and which launched in June 2016 in two-socket servers that were squarely aimed at the workhorse Intel Xeon systems commonly used in the datacenter for all manner of workloads. It looks like Oracle pulled the Sonoma chip in early. The S7 chips were unique in the server space in that they included on-chip InfiniBand ports, but these were never activated in the S7 servers, which was odd. (Oracle is an investor in InfiniBand networking upstart Mellanox Technologies, but did not license the InfiniBand tech from that company.)

There are no Sparc T8 processors on the current Oracle roadmap, and in fact, there is nothing beyond the “Sparc Next” chip, which is being delivered as the Sparc M8 processor. This is in stark contrast to the kind of roadmaps that Oracle put out in the wake of its $7.4 billion acquisition of Sun Microsystems back in early 2010, when it laid out a five year processor, server, and Solaris operating system roadmap that, to its credit, it largely stuck to and delivered on. The roadmap is looking a little thin going forward, which has prompted rumors of Oracle cutting back on Sparc and Solaris development. Oracle has indeed had layoffs, and also apparently has a Solaris/Sparc emulator in the works for X86 gear, which is not a very good sign for the Sparc M9, M10, and M11. In the Sparc M8 webcast given by the server top brass, Ed Screven, chief corporate architect at Oracle and second only to Oracle co-founder and chief technology officer Larry Ellison, stressed that Solaris would be supported to at least 2034, and he said “at least 2034” twice to make sure everybody heard it. But having an operating system supported is not the point. Having a steady stream of improving hardware that makes the platform worth investing in is just as important as having someone answer the phone when something goes awry.

If this Sparc/Solaris emulator comes to pass, it will not be the first one and we will have plenty to say about it. (Oracle has provided some clarification about this, and says that the emulator is coming from Stromasys, not Oracle.)

The Tock Without A Tick

In the old Intel “tick-tock” parlance, the Sparc M8 would be a tock, meaning it has some big architectural changes and is not just a process shrink with a few tweaks here and there.

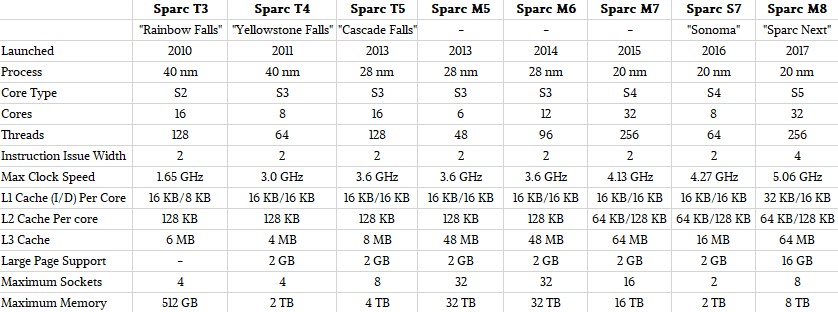

That said, Oracle has been able to make some pretty dramatic improvements in the year between the M7 and the M8, and all on the same 20 nanometer process from fab partner Taiwan Semiconductor Manufacturing Corp. This is in part because Oracle is moving to a new S5 core that can issue four instructions per cycle, twice that of the prior seven Sparc T, Sparc M, and Sparc S processors that were based on the S2, S3, and S4 cores.

Even though the S5 cores used in the Sparc M8 chips only run at a 22.5 percent higher clock speed than the S4 cores used in the Sparc M7 (5.06 GHz versus 4.13 GHz, respectively), the S5 cores are delivering a 50 percent performance boost. As you can see from the table above, there is a slight boost in the L1 instruction cache, and large page memory support has been expanded from 2 GB pages with the prior generations of Sparc chips made by Oracle to 16 GB pages.
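A quick back-of-the-envelope check shows how much of that 50 percent has to come from the core design rather than from the clock. This is only a sketch: the 4.13 GHz figure for the M7 is taken as the clock that reproduces the quoted 22.5 percent uplift, and the 50 percent is treated as a simple per-core throughput ratio, which is an assumption about how Oracle measured it.

```python
# Figures quoted in the article. The 4.13 GHz M7 clock is an assumption:
# it is the value that reproduces the quoted 22.5 percent clock uplift.
M8_CLOCK_GHZ = 5.06
M7_CLOCK_GHZ = 4.13
PERF_RATIO = 1.50  # S5 core versus S4 core, per the 50 percent claim

clock_ratio = M8_CLOCK_GHZ / M7_CLOCK_GHZ
# Whatever the clock does not explain must come from work done per cycle.
per_clock_ratio = PERF_RATIO / clock_ratio

clock_uplift_pct = (clock_ratio - 1.0) * 100.0
per_clock_uplift_pct = (per_clock_ratio - 1.0) * 100.0

print(f"clock uplift:     {clock_uplift_pct:.1f} percent")
print(f"per-clock uplift: {per_clock_uplift_pct:.1f} percent")
```

Under those assumptions, the clock and the per-cycle gains contribute roughly equally, each a bit over 22 percent, and they multiply to the 50 percent total.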

Oracle did not divulge the transistor count of the Sparc M8 processor, but it is our guess that it is not very different from the Sparc M7, which weighed in at 10 billion transistors across its 32 cores. The Sparc M8 has 32 cores as well, and essentially the same cache structure and “Bixby” family of on-chip NUMA interconnects. (We drilled down into the Sparc M7 chip and its M7 class systems back in October 2015.) The M8 chips have eight threads per core, something Sun Microsystems perfected a long time ago and that Oracle has stuck with ever since. Each Sparc M8 socket has faster main memory, running 12.5 percent faster (2.4 GHz versus 2.13 GHz for the Sparc M7), which helps boost the memory bandwidth per socket. As it turns out, the Sparc M8 chip delivers 185 GB/sec of memory bandwidth per socket, which is 16 percent higher than that of the Sparc M7 at 160 GB/sec. Clearly, to get that 50 percent improvement in single thread performance, Oracle has radically tweaked the caching and pipelines in the S5 cores, and the wonder is why it did not move to the S5 cores a long time ago. (Wink.) Masood Heydari, senior vice president of Sparc development at Oracle, tossed out this other statistic in his part of the presentation: The Sparc M8 offers 145 GB/sec of I/O bandwidth into and out of its PCI-Express controllers.
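To put the bandwidth figures in per-core and per-thread terms, here is a small sketch that uses only the socket-level numbers quoted above. Dividing bandwidth evenly across cores and threads is a simplification for illustration, not something Oracle has stated.

```python
CORES_PER_SOCKET = 32
THREADS_PER_CORE = 8
M8_MEM_BW_GBS = 185.0  # GB/sec per socket, Sparc M8
M7_MEM_BW_GBS = 160.0  # GB/sec per socket, Sparc M7

threads_per_socket = CORES_PER_SOCKET * THREADS_PER_CORE
bw_uplift_pct = (M8_MEM_BW_GBS / M7_MEM_BW_GBS - 1.0) * 100.0

# Even split of socket bandwidth; real workloads will not be this uniform.
bw_per_core = M8_MEM_BW_GBS / CORES_PER_SOCKET
bw_per_thread = M8_MEM_BW_GBS / threads_per_socket

print(f"threads per socket:    {threads_per_socket}")
print(f"bandwidth uplift:      {bw_uplift_pct:.1f} percent")
print(f"bandwidth per core:    {bw_per_core:.2f} GB/sec")
print(f"bandwidth per thread:  {bw_per_thread:.2f} GB/sec")
```

The uplift works out to a little under 16 percent, which is well short of the 50 percent gain per core, so each S5 core has proportionally less memory bandwidth to feed it than an S4 core did.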

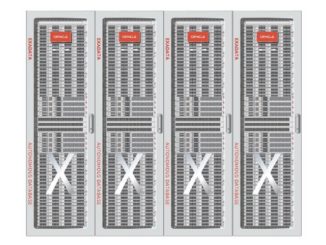

The very weird trend in the M series systems (until you think about it) is that as core counts and/or performance per core have expanded, the NUMA scalability of the boxes has been cut. The original “Bixby” interconnect that was used with the Sparc M4 systems, which were renamed the Sparc M5 machines when they launched to get the name in synch with the T5 chip, was technically able to scale to 96 sockets and 96 TB of main memory. Oracle never pushed this, but did put out machines with 32 sockets for these generations. It capped the NUMA scalability at 32 sockets as well with the Sparc M6 machines in 2014, but when it made the jump to 32 cores with the Sparc M7 chip in 2015, it cut the officially supported Bixby-2 NUMA interconnect back to 16 sockets. With the Sparc M8, Oracle is cutting back the NUMA scale to eight sockets, and the memory capacity is cut back by the same proportion, from 32 TB with the Sparc M5 and M6 and 16 TB with the Sparc M7 to only 8 TB with the Sparc M8. This is perplexing, and we will ponder this when we get into chatting about the Sparc M8 systems in a separate article.
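The scaling trend is easier to see with the numbers lined up. The sketch below uses only the socket and memory limits quoted above, plus the 32 cores per socket of the M7 and M8. The "M7-core equivalents" figure is a rough illustration that assumes the 50 percent per-core gain applies uniformly, which will not hold for every workload.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MSeries:
    name: str
    max_sockets: int
    max_memory_tb: int

    @property
    def tb_per_socket(self) -> float:
        return self.max_memory_tb / self.max_sockets


GENERATIONS = [
    MSeries("Sparc M5", 32, 32),
    MSeries("Sparc M6", 32, 32),
    MSeries("Sparc M7", 16, 16),
    MSeries("Sparc M8", 8, 8),
]

for gen in GENERATIONS:
    print(f"{gen.name}: {gen.max_sockets} sockets, "
          f"{gen.max_memory_tb} TB, {gen.tb_per_socket:.0f} TB per socket")

CORES_PER_SOCKET = 32      # both the M7 and the M8
S5_VS_S4_PER_CORE = 1.5    # assumed uniform 50 percent per-core gain

m7_cores = 16 * CORES_PER_SOCKET
m8_cores = 8 * CORES_PER_SOCKET
m8_equiv = m8_cores * S5_VS_S4_PER_CORE  # expressed in M7-core equivalents

print(f"largest M7 system: {m7_cores} cores")
print(f"largest M8 system: {m8_cores} cores, about {m8_equiv:.0f} M7-core equivalents")
```

Memory per socket stays flat at 1 TB across all four generations, so the capacity cut is purely a function of the socket count. On these assumptions, the largest M8 machine also has less aggregate compute than the largest M7 machine did.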

As has been the case with the prior Sparc chips from Oracle, if single thread performance is important for a workload (or a portion of it), the thread count can be dynamically scaled back to boost the speed of any one thread by making more registers, buffers, cache, and such available to it. This works in conjunction with dynamic voltage scaling, which allows the Sparc cores to boost their clocks if other threads or cores don’t need the juice, and thereby boost the single threaded oomph.

The Sparc M8 is, of course, binary compatible with prior Sparcs going all the way back to the UltraSparc family a zillion years ago. It can issue four instructions per cycle using the out-of-order processing that has been common with RISC processors for a long, long time now, and the chip can have up to 192 instructions in flight at any time.

Oracle did not provide a die shot of the Sparc M8 chip, but we do have a block diagram that shows how all of the features of this monster chip fit together. Take a gander:

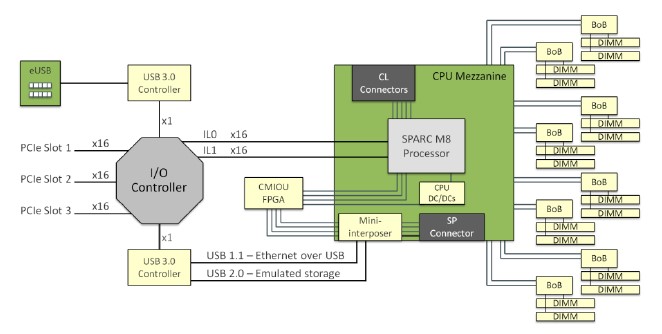

The M8 chip is organized into two halves, each with 16 cores and 32 MB of L3 cache; these two halves are actually made up of blocks of eight cores and two L3 banks, with each bank holding an 8 MB segment of L3 cache. Each M8 core has 32 KB of L1 instruction cache and 16 KB of L1 data cache plus a 128 KB L2 data cache. Every group of four cores has a shared 256 KB L2 instruction cache, so to a certain way of looking at it, this is sort of like a baby NUMA server with eight four-core S5 clusters all interconnected. Each quarter of the chip has its own memory controller and two memory buffer chips (buffer on board, or BoB, in the diagram), each of which links out to two memory sticks, for a total of 16 sticks per socket, and importantly, one for every two cores to keep processing and memory bandwidth somewhat in step. Each processor has eight of the second generation of Data Analytics Acceleration (or DAX) units that allow for in-memory processing on the Sparc chips, plus four coherency interconnect units and two coherency crossbars on the die.
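The layout described above can be tallied up with a small model. This is a sketch built only from the counts in the text; the `Quadrant` class and its field names are made up for illustration and do not come from any Oracle documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quadrant:
    """One quarter of the M8 chip, using the counts given in the article."""
    cores: int = 8
    l3_banks: int = 2
    l3_bank_mb: int = 8
    memory_controllers: int = 1
    buffer_chips: int = 2       # "BoB" in the block diagram
    dimms_per_buffer: int = 2

    @property
    def l3_mb(self) -> int:
        return self.l3_banks * self.l3_bank_mb

    @property
    def dimms(self) -> int:
        return self.buffer_chips * self.dimms_per_buffer


QUADRANTS_PER_CHIP = 4
CORES_PER_CLUSTER = 4  # cores sharing one 256 KB instruction cache

quad = Quadrant()
total_cores = QUADRANTS_PER_CHIP * quad.cores
total_l3_mb = QUADRANTS_PER_CHIP * quad.l3_mb
total_dimms = QUADRANTS_PER_CHIP * quad.dimms
clusters = total_cores // CORES_PER_CLUSTER
cores_per_dimm = total_cores // total_dimms

print(f"cores: {total_cores}, L3: {total_l3_mb} MB, DIMMs: {total_dimms}")
print(f"four-core clusters: {clusters}, cores per DIMM: {cores_per_dimm}")
```

The totals line up with the figures in the text: 32 cores, 64 MB of L3 cache, 16 memory sticks, and one stick for every two cores.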

We begin to see why NUMA scalability has been cut back by a factor of four. In essence, the Bixby interconnect has been pulled back into the M8 package, and we would not be at all surprised to find out that the M8 is really a multichip module with two 16-core or more likely four eight-core processors inside a package. AMD is using four eight-core chiplets in the Epyc 7000 series of server processors, and has moved its Infinity fabric back into the package as well as between sockets. This would explain why we are not seeing a die shot from Oracle. A move to chiplets would be obvious from a die shot, and immediately so. It also makes sense if Oracle wants to keep die costs down and still offer a big fat footprint. So, to be really precise, it would not surprise us at all if the M8 is really four of the S7 chips implemented with the new S5 core and with their InfiniBand ports excised. And if it is a monolithic chip with the Bixby interconnects used as we suggest, it comes to the same thing in terms of limiting the NUMA scalability, and then the issue is why didn’t Oracle do a quad MCM and save some money and boost yields?

If we were Oracle, and we wanted to make Sparc chips cheaper, that is what we would do.

There is plenty of innovation in the Sparc M8 chip, and it is clear that it was created by the owner of the Oracle database and the Java programming language and runtime. The DAX functions include a rev of the SQL in silicon features that debuted in the Sparc M7 and S7 chips two years ago.

With all of the security breaches in the past several years, businesses are understandably interested in having end-to-end encryption, for data at rest and data in motion, on the systems that underpin their applications. And Oracle is working, like other chip makers, to do this in a transparent fashion that also does not put a lot of load on the general purpose cores that do the real work.

Heydari said that the goal was to have the performance penalty from encryption be “so small that you can have it on all the time,” and then trotted out a hybrid benchmark running Java on the Web and application tiers and Oracle 12c on the database tier, with a single-socket M8 machine for the back-end database. On that 32-core system, with all of the encryption turned on and being processed by coprocessors, encryption only incurred a 2 percent performance hit. That is pretty negligible.

With the SQL accelerators, and there is one associated with each core, selected SQL functions are run at memory speed and are very close to memory, and importantly, they also decompress data as it comes out of main memory to be chewed on. This work would otherwise consume CPU core cycles, and having the decompression offloaded and in-line with the memory bus makes data compression (which helps speed database scalability) a whole lot more attractive.

The more aggressive compression algorithms, as Heydari explained, take a lot more CPU to compress and decompress, so offloading to an accelerator is key, particularly for decompression. (You can always do the compression when you get around to it, but the decompression has to be done during a transaction.) The DAX units actually grab the data out of main memory, decompress it, do the SQL processing on it, and drop the results in the L3 cache of the chip, cutting out some latency. These DAX functions are not just available for the Oracle database, but also for the Java Streams analytical engine.
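To make the decompress-then-scan flow concrete, here is a purely software analogy of it in Python. Nothing here is the DAX interface: the function names are hypothetical, zlib stands in for whatever compression the database actually uses, and on a general purpose CPU this work burns exactly the core cycles that the accelerator is meant to save.

```python
import zlib
from array import array


def compress_column(values):
    """Pack integers as machine ints and compress them (done ahead of time)."""
    return zlib.compress(array("i", values).tobytes())


def scan_compressed(blob, predicate):
    """Decompress a column and filter it in one pass, returning the matches."""
    column = array("i")
    column.frombytes(zlib.decompress(blob))
    # Only the (usually much smaller) result set is handed back to the caller,
    # loosely mirroring the way results are dropped into the L3 cache.
    return [value for value in column if predicate(value)]


blob = compress_column(range(1_000))
matches = scan_compressed(blob, lambda value: value % 250 == 0)
print(matches)
```

The shape is the interesting part: compression happens off the critical path, while decompression and filtering are fused into the read, which is the step that has to be fast during a transaction.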

Another key feature in the DAX accelerators is Silicon Secure Memory, which provides encryption with separate keys for segments of main memory. Here is how it works, conceptually:

“In large pieces of software, you can use this to make sure that you don’t have pointers aiming at wrong areas of memory and overstepping data and corrupting it or other areas of the application,” Heydari said. “Or, you can protect against malicious attacks, like Heartbleed and Venom. The beauty of this is that all of this is running at normal speed, and there is no overhead associated with it.”
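One way to picture the pointer checking that Heydari describes is as a tag attached to each segment of memory that has to match a tag carried by the pointer. The toy model below is only that, a conceptual sketch with made-up class and method names. It says nothing about how the hardware actually stores or compares its keys, and in hardware the check would be done on every access without software involvement.

```python
class TaggedMemory:
    """Toy model: every segment carries a tag, and every access must present it."""

    def __init__(self, segment_size, segments):
        self.segment_size = segment_size
        self.data = bytearray(segment_size * segments)
        self.tags = [0] * segments

    def allocate(self, segment, tag):
        """Tag a segment and return a 'pointer' as a (tag, base address) pair."""
        self.tags[segment] = tag
        return (tag, segment * self.segment_size)

    def store(self, pointer, offset, value):
        """Write one byte, refusing the access if the tags do not match."""
        tag, base = pointer
        address = base + offset
        if self.tags[address // self.segment_size] != tag:
            raise MemoryError(f"tag mismatch at address {address}")
        self.data[address] = value


mem = TaggedMemory(segment_size=64, segments=2)
buf_a = mem.allocate(segment=0, tag=0x3)
buf_b = mem.allocate(segment=1, tag=0x7)

mem.store(buf_a, 0, 42)        # in bounds: tags match, write succeeds

try:
    mem.store(buf_a, 64, 99)   # runs off the end of buf_a into buf_b
    overrun_blocked = False
except MemoryError:
    overrun_blocked = True

print("overrun blocked:", overrun_blocked)
```

The overrun is caught because the stray address falls in a segment with a different tag, so the neighboring buffer is never touched. That is the kind of bug, and the kind of attack, that the quote is referring to.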

The encryption is key, of course, and each M8 core has its own encryption engine associated with it, and a slew of encryption and hashing algorithms are encoded in the circuits: AES, Camellia, CRC32c, DES, 3DES, DH, DSA, ECC, MD5, RSA, SHA-1, SHA-3, SHA-224, SHA-256, SHA-384, and SHA-512. The encryption bandwidth matches the I/O bandwidth of the cores and scales with the number of cores, and that is why the encryption penalty is so low.

All of these factors, said Heydari, contribute to the real goal of the M8 architecture, which is predictable performance under heavy load.

> “at least 2034”

So no commitment on fixing Y2038 bugs…

It says support until 2034. It doesn’t say that they fix bugs. Oracle has extended support for many of their EOL products: it is very expensive, best-effort support without fixing new bugs. (I think that it must be a monkey searching in internal Metalink.)

Past experience with Maintenance Contracts (not Oracle or Sun) we learned that vendors had a habit of pricing support through the roof when they really did not want to support the product any longer. Best one was the vendor that initially said no more support then wanted I believe it was a 700% increase for remote tech support only, no hardware. We had seen massive price increase from them on enough times to know it was their way of saying they did not want to support. They had the only software for accessing the hardware and even though they did not want to support they refused to sell my employer the software. They lost on both counts, no Maintenance Contract no software sale.

I knew it I have been following this from day one announcement finally someone was able to connect the dots…the Sparc m8 is the box that wins the Next Gen future proof… CHOICE winner at the heart of all networks. Yaaaa I we did not want to commit to M8. Now the data is in and I at Transfer Data Comm owner can commit and move forward. Spec it in everywhere.. wall street here we come.

Said Heydarki enhanced future proof goal of the m8 architecture which is

predictable performance under heavy loads. Now proven data

Where can I find the Oracle SPARC Architecture 2017 specification?

Oracle SPARC Architecture 2017 was created internally at Oracle (I was involved, so know that for a fact). However, it seems that Oracle never published that specification. The SPARC M8 processor conformed to the Oracle SPARC Architecture 2017 specification.