The ubiquity of the Xeon server has been a boon for datacenters and makers of IT products alike, creating an ever more powerful platform on which to build compute, storage, and now networking, or a mix of the three all in the same box. But that universal hardware substrate cuts both ways, and IT vendors have to be clever indeed if they hope to differentiate themselves from their competitors.

So it is with the “Wolfcreek” storage platform from DataDirect Networks, which specializes in high-end storage arrays aimed at HPC, webscale, and high-end enterprise workloads. DDN started unveiling the Wolfcreek system last June, and since that time has brought out variants of the machine that support block storage and file systems, another that is tuned up for object storage, and a special version, called the Infinite Memory Engine, which is a burst buffer that sits between compute and primary storage to deal with I/O spikes and accelerate the performance of the underlying file systems.

In a sense, the new Flashscale array that DDN is launching today takes that IME burst buffer, backs it off a notch, and configures the Wolfcreek platform as an all-flash array that can compete on price against machines that rely on de-duplication and data compression to get the cost of usable capacity down. Data reduction is all well and good, but it also tends to sacrifice performance. DDN wants to provide supercomputer-class performance and scale, but at prices that all-flash arrays from Pure Storage, EMC, and SanDisk can’t match with similar metrics.

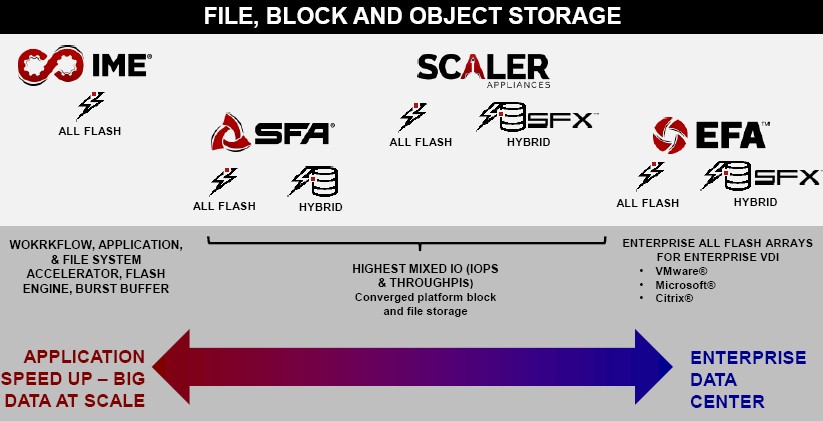

The way DDN sees flash, it is used across a spectrum of storage to solve different problems. While DDN doesn’t talk about it much, it does sell a hyperconverged storage platform called the Enterprise Fusion Architecture (EFA), which has heavy de-duplication and compression algorithms and is designed for supporting virtual desktop architecture and VMware virtualization workloads, where driving down the cost per gigabyte is critical and where de-duplication is a big part of that.

This is where flash got its start in the enterprise, with a slew of companies offering products that had tens of terabytes of capacity that could be racked and stacked to offer cheap and fast virtual PC images or storage for selected virtualized workloads that needed higher IOPS than disk-based arrays could deliver. (Hyperscalers were rich enough to make their own flash controllers and to be early adopters of very expensive PCI-Express flash cards for accelerating their database and caching workloads.) As the cost of flash has come down, it is being optimized for both PCI-Express cards and SSDs, and now with NVM-Express form factors you can get a hybrid that brings the familiarity of the SSD form factor and the directness of a PCI card.

Moving to the left on that flash spectrum are the Scaler appliances, which are based on the Wolfcreek platform as well and which are more generic all-flash or hybrid flash-disk arrays that are tuned up to run file systems. The Exascaler variants run the Intel variant of the Lustre parallel file system, the GridScaler variants run IBM’s GPFS parallel file system, and the MediaScaler variants run multiple protocols aimed at shops that need mixed protocols for more generic file serving.

Going all-flash is not necessary to boost performance, and Molly Rector, chief marketing officer at DDN, brings up an example of this to make her point. “GridScaler comes in all flash or hybrid versions, and on the hybrid side, we wrote software to be able to use SSDs not just for metadata performance but also as cache and it is kind of interesting from a historical perspective that when we went all flash with Scaler systems three years ago and were first running benchmarks for ourselves and our customers, the flash did not speed up the system at all. Our disk-based SFA systems were faster than the flash-based systems. So the notion of just throwing flash at a performance problem is something that is misdirected and that customers struggle with.”

Sometimes, when customers buy an all flash array to solve a performance issue, it doesn’t fix it, and that can be because the real problem is in their applications or in their networks, or that their storage layer doesn’t know how to take proper advantage of flash.

The other thing to note as you move right to left on the DDN product spectrum is that the data reduction techniques start peeling off, and that is done because performance starts taking precedence over capacity and cost as you move to the left. So the EFA arrays have de-dupe and compression, while the Scaler machines have just compression. The Flashscale all flash arrays, which are in the Storage Fusion Architecture (SFA) family and which are being announced today, have neither technology, and the IME burst buffer doesn’t support de-dupe or compression either. The reason is simple: these data reduction features slow down performance because of the compute and latency overheads they impose.

Getting Flashy With Wolfcreek

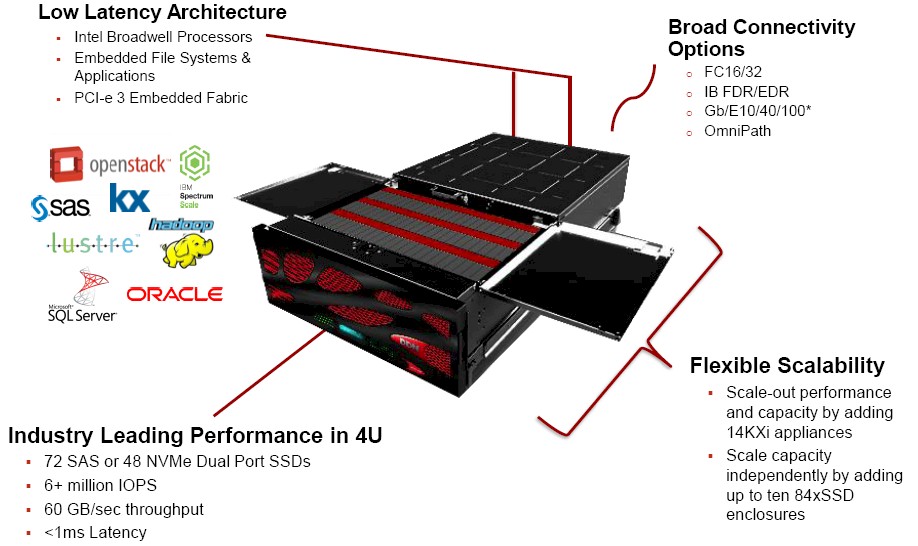

The basic feeds and speeds of the Flashscale SFA14KXi all flash appliances will be familiar from other DDN products based on the Wolfcreek platform. The 4U chassis has two “Haswell” Xeon E5 v3 processors from Intel, which will be upgraded to the latest “Broadwell” Xeon E5 v4 processors by the time they ship later this year. The chassis has 72 drive bays, which can be loaded up with SAS SSDs, or if you want extra performance, you can put up to 48 dual-port NVM-Express drives in the chassis. DDN is using dual-port NVM-Express drives from OCZ at the moment, but Intel is getting ready to launch its own dual-port unit with NVM-Express links directly back to the PCI-Express controllers in the processing complex, so it will soon have a second source. As for SAS SSDs that link more indirectly to the processors through a SAS controller, Rector tells The Next Platform that DDN has a mix of suppliers, with SanDisk and Toshiba topping the list for these units.

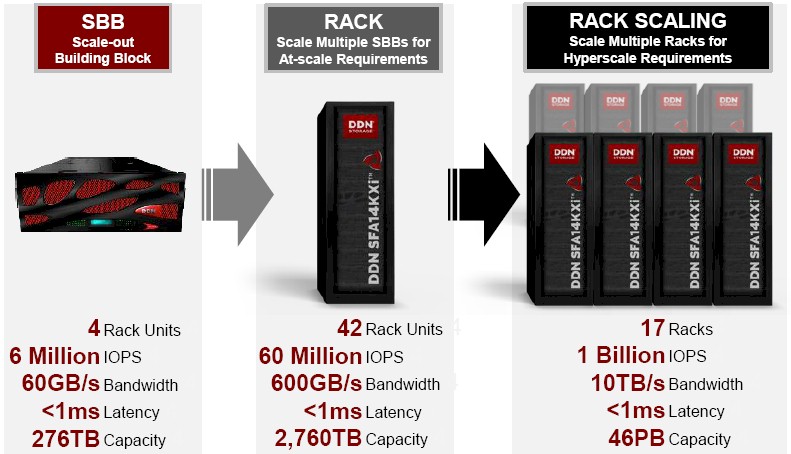

With the 48 NVM-Express flash drives and another 24 regular SAS flash drives, the Wolfcreek platform can deliver more than 6 million aggregate IOPS and deliver 60 GB/sec of peak throughput, and while the presentations promise average latencies of under 1 millisecond, Rector says that the benchmarks that are hot off the presses show that it can deliver average latency of around 100 microseconds. As these things go, that is pretty fast.

Being aimed at hyperscale, HPC, and enterprise workloads, the Flashscale arrays are able to expand both up and out as the compute and storage needs dictate. Compute performance can be scaled out by adding SFA14KXi nodes, and each Wolfcreek node can scale up its storage with up to ten 84-drive SSD enclosures. Combine the two, scaling up the storage on the nodes and scaling out the number of nodes, and you can create a truly monstrous flash array.

Rector says that the top end configuration it needed to certify for the Flashscale arrays came from a financial services firm running high frequency trading applications that requires at least 1 TB/sec of aggregate bandwidth to run its Kx databases. (Kx Systems is a supplier of real-time databases that have been the backends of trading systems for many, many years, and it doesn’t talk much about itself.) In any event, DDN likes to demonstrate that it can meet the needs of its top end customer by a factor of 10X with each generation of its product, and this is how you reach that 10 TB/sec of bandwidth on a flash array:

At that 17 rack capacity required to meet that 10X ceiling, the system has 10 TB/sec of bandwidth and the setup has a whopping 46 PB of capacity and delivers 1 billion IOPS across its 170 nodes.
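The arithmetic behind that top-end configuration can be checked with a short sketch. It assumes that the per-node figures quoted earlier (6 million IOPS and 60 GB/sec) scale linearly across nodes, which is a simplification; the function name `cluster_totals` is made up for illustration and is not anything DDN publishes.

```python
# Per-node figures quoted in the article for a fully loaded Flashscale node.
NODE_IOPS = 6_000_000        # "more than 6 million aggregate IOPS"
NODE_BANDWIDTH_GB_S = 60     # "60 GB/sec of peak throughput"


def cluster_totals(nodes: int) -> dict:
    """Scale the per-node figures linearly across a cluster.

    Linear scaling is an assumption made for this sketch; real clusters
    lose some efficiency to networking and software overheads.
    """
    return {
        "iops": nodes * NODE_IOPS,
        "bandwidth_tb_s": nodes * NODE_BANDWIDTH_GB_S / 1000,  # decimal TB
    }


totals = cluster_totals(170)
print(f"IOPS: {totals['iops']:,}")
print(f"Bandwidth: {totals['bandwidth_tb_s']:.1f} TB/sec")

# Capacity per node implied by the quoted 46 PB total (decimal units).
implied_tb_per_node = 46_000 / 170
print(f"Implied capacity per node: {implied_tb_per_node:.1f} TB")
```

Under those assumptions, 170 nodes come out slightly above the 10 TB/sec and 1 billion IOPS marks, which is consistent with the rounded figures quoted.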

As for pricing, Rector says that with the Wolfcreek platform configured with SAS flash drives, DDN can deliver capacity for as low as $1 per GB, and switching in 48 NVM-Express drives to boost the IOPS to the peak level specified above raises the cost to around $1.50 per GB or so. This, mind you, is without de-duplication and compression, which are not particularly useful for performance-minded customers like those who will be shopping for SFA Flashscale arrays and also kicking the tires on competitive products.

With 72 of the SAS flash drives in the chassis and with the expected 8 TB drives that DDN will be shipping in the third quarter, if you do the math a single enclosure will have 576 TB of capacity, double what it can ship now with 4 TB units. (This also implies that the Flashscale with the current 4 TB SAS drives will cost around $288,000 fully loaded with only those SAS drives.) What is not clear is what happens to latency and IOPS in a configuration that does not use any NVM-Express flash drives, but clearly the IOPS and bandwidth will be lower. For the NVM-Express variant, DDN will be offering a 6.4 TB capacity drive in the third quarter, double the capacity of the NVM-Express drives it is currently selling to early adopter customers. Using the existing 3.2 TB NVM-Express flash units from OCZ, capacity would top out at 48 of these drives for 153.6 TB, plus another two dozen 4 TB SAS drives for 96 TB, for a total of 249.6 TB. At around $1.50 per GB, DDN’s statements imply that this configuration (the one that delivers the 6 million IOPS) would cost around $375,000.
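For readers who want to redo that math, here is a minimal sketch of the capacity and price calculations. It assumes decimal units (1 TB = 1,000 GB) and treats the quoted per-GB prices as applying to raw capacity; the helper names are invented for this example.

```python
def capacity_tb(drives: int, tb_per_drive: float) -> float:
    """Raw capacity of a set of identical drives, in TB."""
    return drives * tb_per_drive


def cost_usd(tb: float, usd_per_gb: float) -> float:
    """Implied price at a given cost per GB (assumes 1 TB = 1,000 GB)."""
    return tb * 1000 * usd_per_gb


# All-SAS chassis: 72 bays with today's 4 TB drives or the expected 8 TB drives.
sas_now_tb = capacity_tb(72, 4)
sas_future_tb = capacity_tb(72, 8)

# Mixed chassis: 48 NVM-Express drives at 3.2 TB plus 24 SAS drives at 4 TB.
mixed_tb = capacity_tb(48, 3.2) + capacity_tb(24, 4)

print(f"All SAS, 4 TB drives: {sas_now_tb} TB, ${cost_usd(sas_now_tb, 1.00):,.0f}")
print(f"All SAS, 8 TB drives: {sas_future_tb} TB")
print(f"NVMe plus SAS mix:    {mixed_tb:.1f} TB, ${cost_usd(mixed_tb, 1.50):,.0f}")
```

The mixed configuration works out to $374,400, which the text rounds to about $375,000.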

Even though DDN sources some of its flash SSDs from SanDisk, it ends up competing against SanDisk’s InfiniFlash arrays, which are based on a SanDisk flash card instead of the Optimus Max SSDs used by DDN. The IF700 array from SanDisk (now part of Western Digital) has 64 of these cards, and delivers on the order of 1 million IOPS and 12 GB/sec of bandwidth in a 3U enclosure; it only has SAS links to the servers, however, while the DDN Wolfcreek can link to servers using 16 Gb/sec Fibre Channel or 100 Gb/sec EDR InfiniBand links, and later this year will be able to link to systems via 100 Gb/sec Omni-Path links. The Wolfcreek machine also has five times the bandwidth and six times the IOPS of the IF700.

As for the Pure Storage FlashBlades, which were previewed back in March, these use 40 Gb/sec Ethernet as the backplane linking the blades to each other and linking multiple enclosures to each other; the compute and storage elements of the blades have a modified version of PCI-Express hooking them together with very low latencies. The FlashBlades scale to two enclosures today, with up to ten enclosures promised down the road. Pure Storage says it can sell the FlashBlades for under $1 per effective GB, or about $1.6 million for 792 TB raw and 1.61 PB usable. We have no data on IOPS and bandwidth rates for the FlashBlades, but measured against raw capacity, that is more like $2 per GB. It is hard to believe the FlashBlades will hit anything like 100 microseconds of average latency.
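The gap between those two FlashBlade prices comes down to which capacity figure goes in the denominator. A quick sketch, assuming decimal units and the roughly $1.6 million price quoted above (the function name is hypothetical):

```python
def usd_per_gb(price_usd: float, capacity_tb: float) -> float:
    """Price per GB for a given capacity, assuming 1 TB = 1,000 GB."""
    return price_usd / (capacity_tb * 1000)


PRICE_USD = 1_600_000   # approximate figure quoted for the configuration
RAW_TB = 792            # raw flash capacity
USABLE_TB = 1_610       # 1.61 PB usable, as quoted

per_usable_gb = usd_per_gb(PRICE_USD, USABLE_TB)
per_raw_gb = usd_per_gb(PRICE_USD, RAW_TB)

print(f"Per usable GB: ${per_usable_gb:.2f}")
print(f"Per raw GB:    ${per_raw_gb:.2f}")
```

The same price tag lands just under $1 per GB against the usable figure and just over $2 per GB against raw flash, and the raw number is the one to set against DDN’s $1 to $1.50 per GB.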

The DSSD unit of EMC, which has a very high performance D5 all flash appliance that looks like local PCI-Express flash cards to the servers it attaches to, has about 50 microsecond average latency thanks to that PCI-Express extended switching between servers and the flash array. (EMC is only saying under 100 microseconds officially.) The DSSD unit also offers 10 million IOPS, and like the Flashscale from DDN does not use de-dupe or compression because it is also all about high performance. The DSSD D5, however, tops out at 100 TB of effective capacity, and with a new two-node configuration that was previewed at EMC World two weeks ago, that is still only doubled up to 200 TB. A DSSD D5 with 144 TB of capacity (100 TB usable) costs under $3 million. A single node of the Flashscale will offer 60 percent of the IOPS, maybe twice the latencies, and three times the capacity for one-eighth the dough.
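Those ratios can be reproduced from the figures quoted in this article. The sketch below makes several assumptions: it uses the 249.6 TB, roughly $375,000 NVM-Express configuration for the Flashscale, the unofficial 50 microsecond latency for the D5, and the $3 million ceiling as the D5 price, so the price ratio is a lower bound rather than an exact figure.

```python
# Figures as quoted in the article; see the assumptions in the lead-in.
flashscale = {"iops": 6_000_000, "latency_us": 100, "capacity_tb": 249.6, "price_usd": 375_000}
dssd_d5 = {"iops": 10_000_000, "latency_us": 50, "capacity_tb": 100, "price_usd": 3_000_000}

# Each ratio is Flashscale divided by DSSD D5.
ratios = {key: flashscale[key] / dssd_d5[key] for key in flashscale}

print(f"IOPS:     {ratios['iops']:.0%} of the D5")
print(f"Latency:  {ratios['latency_us']:.1f}x the D5")
print(f"Capacity: {ratios['capacity_tb']:.2f}x the D5 usable capacity")
print(f"Price:    {ratios['price_usd']:.3f}x the D5 price ceiling")
```

With these inputs the capacity ratio is about 2.5X; it gets closer to three times if the all-SAS 288 TB configuration is used instead, though that configuration would not deliver the peak IOPS.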

There is another big difference between the Flashscale and the all flash arrays mentioned above. The Flashscales have their own compute, and it can be put to work. With the addition of Broadwell Xeons when the Flashscale arrays ship in volume in August of this year, the machines will have enough processing oomph to allow customers to fire up KVM hypervisors on the processors that are running the DDN software stack and have plenty of cores left over to run actual applications.

In one case being deployed by a beta tester, a financial services company is firing up Kx real-time databases on the Flashscale storage, creating what is essentially a convergence between compute and a high performance block storage device that links SSDs over a PCI-Express fabric. Rector says that companies will look to embed other distributed databases and data stores as well as various kinds of distributed file systems and OpenStack clouds on the Flashscale arrays given all of the compute available on the nodes.

Drawing the line between the server and the storage is getting tougher and tougher. In some sense, DDN is now a server vendor.

You should check out the benchmarks run by STAC Research (talk to Peter Lankford there). They include vendors like DDN, IBM and so on. Most recent ones have the previous DDN/Kx numbers being beaten by IBM/extremedb using POWER8 and IBM’s FlashSystem 900:

https://stacresearch.com/news/2016/05/09/XTR160413