LRZ Adopts Nvidia Engines For €250 Million “Blue Lion” Supercomputer In 2027

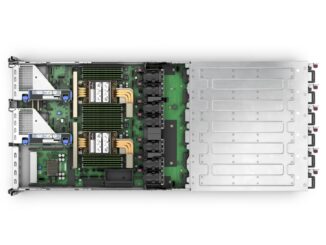

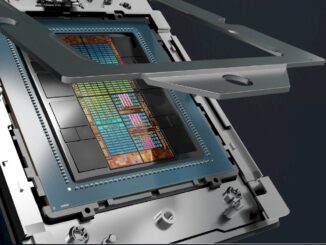

The expansion of computing capacity in Europe for both traditional HPC simulation and AI training and modeling continues apace, with the Leibniz-Rechenzentrum lab in Germany announcing late last week (when we took a day of holiday) that it would be shelling out €250 million – about $262.7 million at current exchange rates – to build a hybrid CPU-GPU cluster based on Nvidia compute engines to tackle both kinds of high-performance computing. …