Sponsored

If you have a hundred or a thousand machines that must work in concert to run a simulation, a model, or a machine learning training workload that no single machine could physically handle, you build a distributed systems cluster. There are all kinds of well-known tools to manage the underlying server nodes, to create the overarching computing environment, and then to carve it up into pieces and push work through it.

As it is currently evolving, the edge is taking distributed computing up another notch. Now, instead of having all of the server nodes that represent the cluster sitting in one place running one big job or several smaller ones that can be managed by a job scheduler and a workload and configuration manager, the cluster will be spread across hundreds, or thousands, or potentially tens of thousands of physically distinct and very likely latency-isolated compute and storage elements. These elements could be baby clusters or single nodes – it doesn’t really matter. What matters is that many of the same techniques that are used to manage a traditional HPC cluster can be extended out to manage the edge, which will very likely be running latency-sensitive AI workloads that are, for all practical purposes, best thought of as HPC.

“Given all of these possible scenarios, having a consistent management layer no matter the consumption model or the location eliminates some of the complexity of this different kind of massive scale,” says Bill Wagner, chief executive officer at Bright Computing. “We don’t look at the edge in isolation because we don’t think it exists in isolation. If you think about what the edge is actually doing, it is serving as an accelerant for machine learning analytics, ultimately. And so, edge is a component in a bunch of architectures for the datacenter and outside of the datacenter that are all converging from an infrastructure perspective.

“Organizations want to tackle all this stuff in a consistent way and not have siloed approaches because they know they are going to need – and will have – infrastructure in many more places than they typically have today. Fast forward a couple of years and companies will have a hybrid infrastructure that is on premises, cloud, and edge. All this complexity relating to location is building and infrastructure is spreading out all over the place. Complexity is multiplying naturally at the same time, as new applications come into the fold and new technologies – new types of compute, storage, interconnects, and abstraction layers like containers – get deployed.”

Because complex problems usually require complex answers, organizations also have to reckon with public clouds and bring them into the mix, too. In some cases, cloud infrastructure will be used exactly like an on-premises HPC cluster, built from compute, storage, and networking services on one of the major public clouds in an ephemeral or reserved manner, depending on the circumstances.

In other cases, given the distributed nature of the largest public clouds, which have dozens of regions and hundreds of datacenters plus thousands of other points of presence – AWS calls them CloudFront Edge locations, Google Cloud calls them Network Edge locations or content delivery network points of presence (CDN POPs), and Microsoft calls them Azure Edge Zones – the public cloud will function like edge locations for organizations.

The complexity is just a little more, well, complex than even this. The lines between what is an edge, what is a datacenter, and what is in between – and there will be points of presence in between, for sure – are blurring. And a common language has not developed to accurately describe this.

The various layers of edge are just another flavor of distributed computing, where workloads have to be run closer to the source of data because latency has to be low or because datasets are too big to be transmitted from so many sites back to the datacenter, or both. Scale is going to explode on many different dimensions – within each location and across locations. It is like a fractal topology. But it is even stranger than that. In some cases, the public cloud will be your edge, or one of your edges. You will still need management. In other cases, the public cloud will be your datacenter. Still need management. Their clouds are your edge. Still need management. Their edge is your edge. Still need management. Those clouds are your datacenter. Still need management. Your datacenter is your datacenter and your edges are your edges. Still need management.

There will be many types of edges, but the 5G wireless network where many applications will live is a good example. Applications running on 5G base stations will be enhanced with machine learning inference and possibly localized machine learning training to provide all of us services through our smartphones and maybe our laptops and tablets and VR headsets once we have a consistent, fairly cheap, high bandwidth, low latency network in place.

The magnification of scale at the edge will be immense, which is why we are calling it hyperdistributed computing here at The Next Platform, as we see a complex of compute and memory and storage inside a server within a rack, across a row, spanning a datacenter, and stretching across the vastness of the edge out to tens of billions and eventually trillions of devices.

“The telco example is telling,” says Wagner, reminding everyone that 4G signals can span up to 10 miles but 5G signals reach only about 1,000 feet, and that the price we pay for 10X the bandwidth in moving from 4G to 5G is a lot more cell antennas and cell towers. With the transition from RAN to OpenRAN, the major telcos have around 170,000 sites distributed across the United States, with each of them operating tens of thousands of cell towers. But with 5G networks, the number of sites is predicted to be in the range of 400,000 to 500,000, and that may prove to be a very conservative estimate.
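To put those figures side by side, here is a back-of-the-envelope sketch in Python. It uses only the numbers cited here (10 miles, 1,000 feet, 170,000 sites, and 400,000 to 500,000 sites). The area comparison assumes idealized circular coverage of equal shape for every site, which is our simplification and not something Wagner or Bright Computing claims.

```python
# Rough arithmetic on the figures cited in the article.
# Assumption (ours): coverage area scales with the square of the reach.

FEET_PER_MILE = 5280

reach_4g_feet = 10 * FEET_PER_MILE   # "up to 10 miles"
reach_5g_feet = 1_000                # "about 1,000 feet"

# How many times farther the 4G figure is than the 5G figure.
reach_ratio = reach_4g_feet / reach_5g_feet

# Under the idealized circular-coverage assumption, area goes as reach squared.
area_ratio = reach_ratio ** 2

# Site counts cited in the article.
sites_today = 170_000
sites_5g_low, sites_5g_high = 400_000, 500_000

growth_low = sites_5g_low / sites_today
growth_high = sites_5g_high / sites_today

print(f"reach ratio: {reach_ratio:.1f}x")
print(f"idealized area ratio: {area_ratio:.0f}x")
print(f"predicted site growth: {growth_low:.2f}x to {growth_high:.2f}x")
```

The predicted growth in sites, roughly 2.4X to 2.9X, is far smaller than the idealized area ratio of nearly 2,800X. That gap is consistent with 5G being rolled out across a mix of frequency bands and coverage patterns rather than at the shortest reach everywhere, and it hints at why the estimate could turn out to be conservative.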

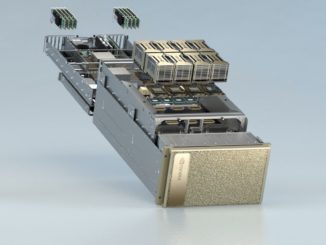

The difficult thing, in the early days of hyperdistributed computing with varying levels of tightness or looseness in the coupling of clusters of machines, is to bridge the gap for people who are still thinking about edge locations as pets and not as cattle. The underlying infrastructure has to be provisioned and managed in a consistent way, whether the machines are relatively tightly coupled, as is the case with HPC simulation and modeling clusters using the Message Passing Interface (MPI) stack or Partitioned Global Address Space (PGAS) to lash nodes together, or loosely coupled, or not coupled at all, like a collection of Web servers in a datacenter, perhaps, or OpenRAN servers housed underneath cell base stations.

“This has to be a unified infrastructure to help cope with the complexity, and it is best to think of this as clusters of clusters, with varying degrees of loose and tight coupling,” Wagner explains. “In the end, whether or not the machines talk to each other or not doesn’t really matter as much as the realization that they all need to be provisioned and managed. In a sense, there is nothing special about having to manage a lot of any particular thing or sets of things once you have a lot of them. The scale and diversity is the issue.”
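One way to picture the "clusters of clusters" idea is as a simple tree in which every element, whatever its location or coupling, is walked by the same provisioning and management logic. The Python sketch below is purely illustrative: the `Cluster` class, its fields, and the example names and node counts are hypothetical and are not Bright Cluster Manager's data model or API.

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class Cluster:
    """A hypothetical cluster that may itself contain other clusters."""
    name: str
    location: str          # e.g. "on-prem", "cloud", "edge", "mixed"
    coupling: str          # e.g. "tight" (MPI/PGAS), "loose", "none"
    nodes: int = 0         # nodes managed directly at this level
    children: List["Cluster"] = field(default_factory=list)

    def walk(self) -> Iterator["Cluster"]:
        """Yield this cluster and every cluster nested beneath it."""
        yield self
        for child in self.children:
            yield from child.walk()

    def total_nodes(self) -> int:
        """Count every node that has to be provisioned and managed."""
        return sum(c.nodes for c in self.walk())


# Made-up example fleet: one tightly coupled HPC cluster, a loosely coupled
# cloud pool, and a thousand uncoupled two-node edge sites.
fleet = Cluster("fleet", "mixed", "none", children=[
    Cluster("hpc-sim", "on-prem", "tight", nodes=512),
    Cluster("cloud-pool", "cloud", "loose", nodes=64),
    Cluster("ran-sites", "edge", "none", children=[
        Cluster(f"site-{i}", "edge", "none", nodes=2) for i in range(1000)
    ]),
])

cluster_count = sum(1 for _ in fleet.walk())
print(f"{cluster_count} clusters, {fleet.total_nodes()} nodes to manage")
```

The coupling field changes how jobs run on a cluster, but it plays no part in the traversal, which is the point Wagner makes: whether or not the machines talk to each other, they all show up in the same count of things to provision and manage.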

Because of the high cost of cloud computing, which we have talked about here at The Next Platform recently, at a certain scale and utilization level it becomes cost prohibitive to run only on a public cloud. It becomes much less expensive to put infrastructure in a facility that the company owns, or at least controls if it rents co-location space, and to use cloud-priced hardware inside of it.

“This is why the term ‘edge’ is so limiting,” says Wagner with a certain amount of exasperation. “It suggests that there are only three scenarios, and what we want to help people do with Bright Cluster Manager is get people to see that at some point infrastructure should just be liquid and we have the tools that help it behave that way no matter where it is sourced, no matter how it is priced, and no matter where it is located.”

Sponsored by Bright Computing.
