There has been a land rush of sorts by storage OEMs over the past few weeks to roll out systems and services designed to help enterprises manage and process the huge amounts of data that are being created and stored throughout their widely distributed IT environments. Organizations are not only being overwhelmed with data that needs to be processed and analyzed, but that data increasingly is generated and housed outside of the traditional datacenter, whether that’s in the cloud or at the rapidly expanding edge.

They are also running advanced workloads such as artificial intelligence (AI), machine learning and analytics, which further increase the need for enterprises to address storage needs from the datacenter out into the cloud and edge and to move vast amounts of data between those various locations.

Early last month, Hewlett Packard Enterprise said it is supporting IBM’s Spectrum Scale parallel file system on some ProLiant and Apollo systems, a move that highlighted the ongoing embrace by enterprises of AI and similar compute- and data-intensive applications that are no longer primarily the domain of HPC organizations. Bringing Spectrum Scale to some of its mainstream systems was a way to give enterprises the same scalability and speed advantages that had been available in the HPC and supercomputing realms.

Later in the month, Dell EMC expanded its year-old PowerStore midrange all-flash storage portfolio with a lower-cost appliance, the PowerStore 500, and with enhancements to all systems in the PowerStore family, including better performance, scale and automation, a 25 percent speed boost for all workloads and support for NVMe over Fibre Channel (NVMe-FC).

That same week IBM unveiled Spectrum Fusion, a software-defined storage (SDS) offering that includes Spectrum Scale and related capabilities as well as such Red Hat products as the OpenShift Kubernetes platform. The aim is to enable enterprises to move data between the datacenter, cloud and edge with a single copy of data rather than having to replicate it for each environment. It’s part of IBM’s larger corporate strategy of focusing on hybrid cloud and AI, with Red Hat at the center.

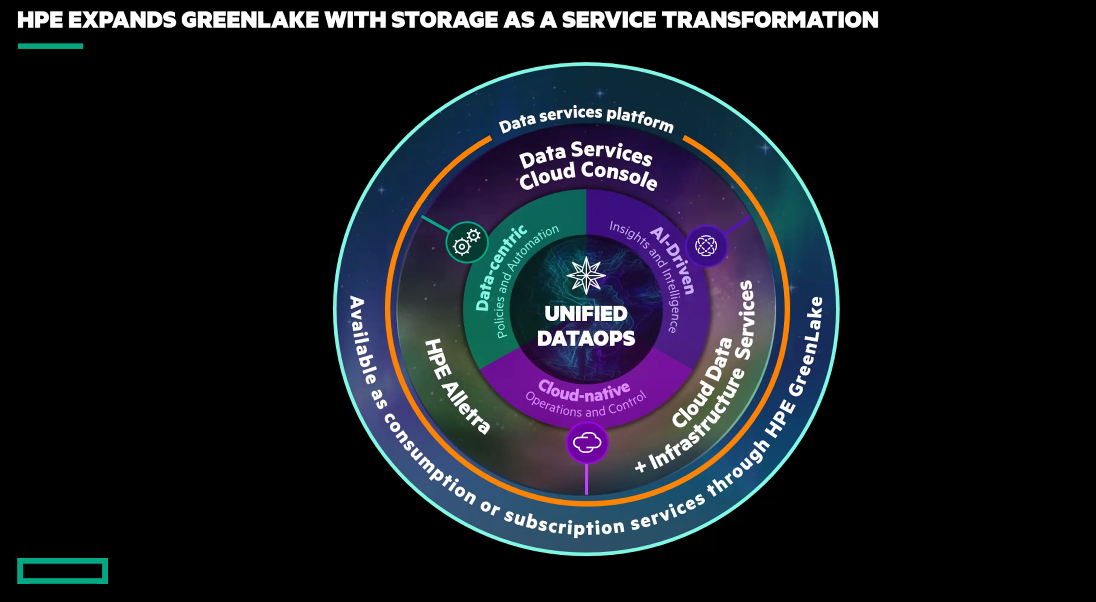

Now HPE this week is back with a storage-as-a-service push that includes new software and appliances and leverages its GreenLake hybrid cloud platform and technologies from its own portfolio and from its Aruba Networks company. As with previous moves by HPE and other OEMs, the goal is to help organizations manage and leverage the flood of data throughout their highly distributed IT environments to help drive business and to simplify what over the years has become a highly complex storage environment.

“You’ll still see classic mission-critical workloads stored on a high-end SAN array,” Tom Black, senior vice president and general manager of HPE’s storage business, said during a press briefing. “You’ll see easily disposable sorts of workloads, like developer pods, out on a very different either business-critical or potentially a software-defined solution. But there’s this plethora of user personas and identities that need to have a say. Then you overlay commodity compliance, complexity. If you’re staring at this as a customer, life has gotten more complex as you’ve cost-optimized. You managed to introduce technologies that have saved you money overall in terms of how you persist a dollar per gigabyte, but what you’ve done is you’ve introduced a massive policy security, compliance and management risk. This comes at a cost of their agility. This comes at a cost of their ability to innovate within their own business. It comes at a cost of being able to achieve their outcomes.”

In a survey of IT decision makers conducted for HPE by market research firm ESG, 93 percent said storage and data management complexity is impeding their efforts to become more digital businesses and 67 percent said fragmented data visibility across their hybrid cloud is raising risks to their businesses.

The introduction of storage-as-a-service is the latest step in HPE’s efforts to deliver its products via a cloud-like pay-as-you-go consumption model based on the GreenLake platform. HPE announced in 2019 that its goal is to offer its entire portfolio as a service by next year and GreenLake is the foundation of that effort. The year before, the vendor committed to investing $4 billion over four years into building out its capabilities at the edge.

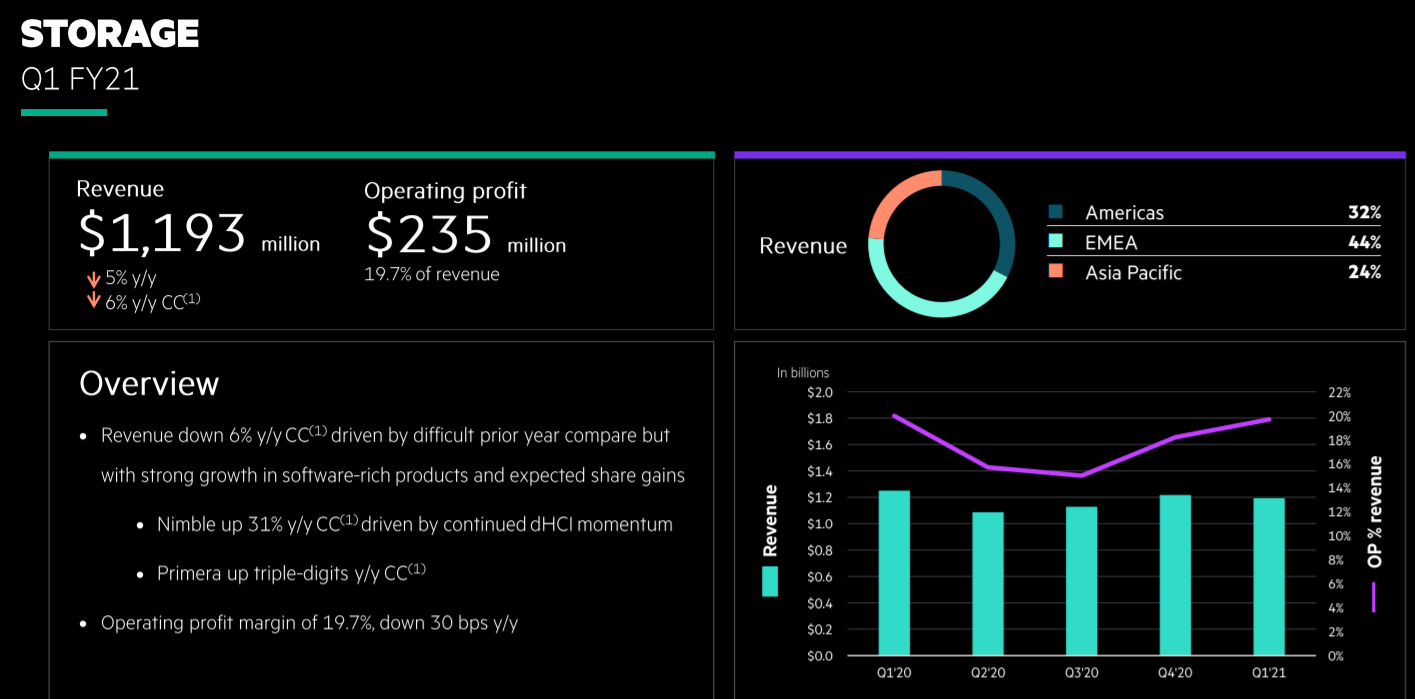

HPE has continued to add businesses to GreenLake, most recently in December when the company said it was making its vast portfolio of HPC products available as a service. Now with storage, HPE is doing the same with one of its largest businesses. In the first quarter, the business generated almost $1.2 billion in revenue, a 6 percent year-over-year decline, though its flash-based Nimble storage unit saw revenue jump 31 percent due to growing adoption of hyperconverged infrastructure solutions and the Primera portfolio of mission-critical storage offerings saw triple-digit increases.

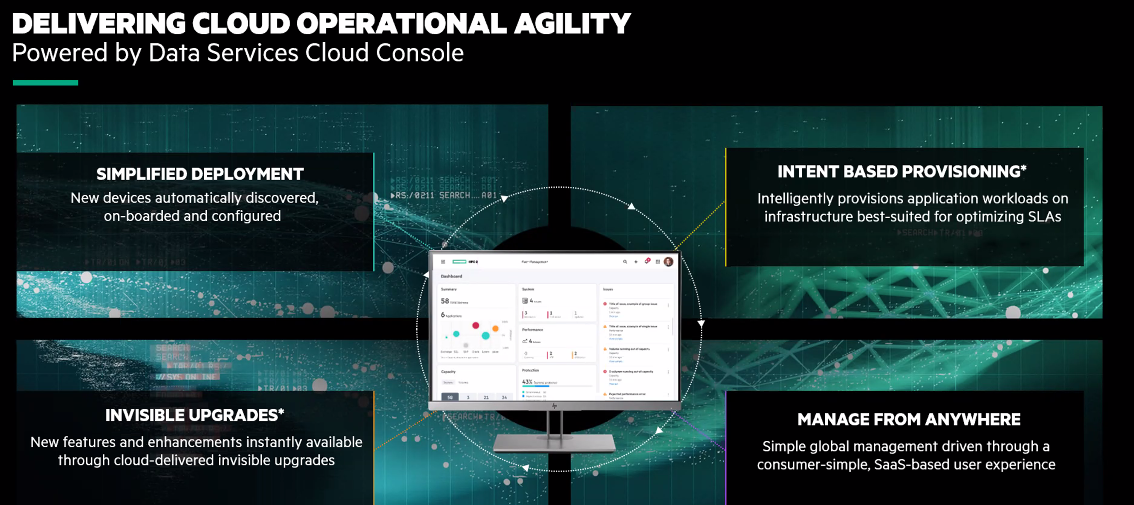

HPE is rolling out a few key technologies to get its GreenLake storage-as-a-service going. Central to the effort is the Data Services Cloud Console, a software-as-a-service (SaaS) offering designed to unify the management of storage and data operations. It includes an API that provides application automation capabilities, a range of cloud data services, and access for developers to infrastructure and data as code. One of the first capabilities will be Data Ops Manager for managing data infrastructure from anywhere on any device, said Ashish Prakash, vice president and general manager of cloud data services for HPE’s storage business.

Another cloud service is AI-driven intent-based provisioning, making it easier for organizations to provision their storage needs based on such metrics as the type of workload that’s running, how many volumes need to be created and how much storage is needed on each volume.
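To make the idea concrete, here is a minimal Python sketch of what an intent-based request could look like, using the three inputs named above. It is illustrative only: the `ProvisioningIntent` class, its field names and the workload categories are assumptions made for this example, not HPE’s API.

```python
from dataclasses import dataclass

# Hypothetical workload categories; the article does not list HPE's actual ones.
KNOWN_WORKLOADS = {"database", "vdi", "analytics", "dev-test"}


@dataclass(frozen=True)
class ProvisioningIntent:
    """What the user wants, stated without naming a specific array."""
    workload: str          # type of workload that will run
    volume_count: int      # how many volumes to create
    volume_size_gib: int   # capacity needed on each volume

    def __post_init__(self):
        # Reject requests that cannot be interpreted before any placement happens.
        if self.workload not in KNOWN_WORKLOADS:
            raise ValueError(f"unknown workload type: {self.workload!r}")
        if self.volume_count < 1 or self.volume_size_gib < 1:
            raise ValueError("volume count and size must be positive")

    @property
    def total_gib(self) -> int:
        """Total capacity the request implies."""
        return self.volume_count * self.volume_size_gib


intent = ProvisioningIntent(workload="database", volume_count=4, volume_size_gib=512)
print(intent.total_gib)  # 2048
```

The point of the pattern is that the request describes the outcome wanted and leaves the choice of system to the service.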

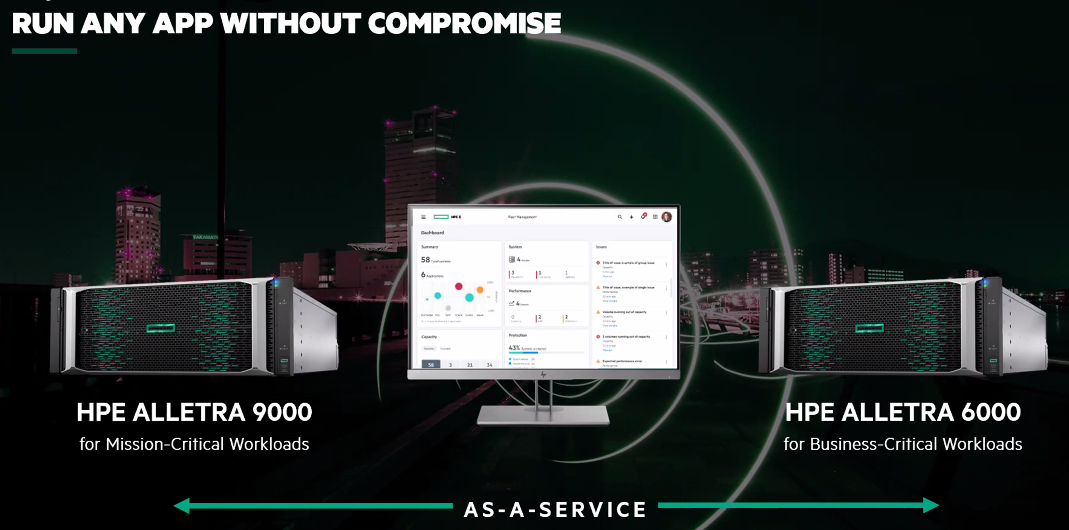

In addition, the company is unveiling a new line of storage systems in the storage-as-a-service initiative, with the first two being the Alletra 9000 for mission-critical workloads and the Alletra 6000 for business operations, both with all-NVMe storage capabilities. A key difference between the two is the level of availability (100 percent for the 9000, six nines for the 6000).
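For a sense of scale, “six nines” means 99.9999 percent availability. The short Python calculation below converts an availability percentage into the downtime it permits per year. It assumes a 365-day year, and the function name is chosen for this example.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000, ignoring leap years


def max_downtime_seconds(availability_percent: float) -> float:
    """Downtime per year permitted by a given availability percentage."""
    return SECONDS_PER_YEAR * (1 - availability_percent / 100)


six_nines = max_downtime_seconds(99.9999)
print(f"six nines: about {six_nines:.1f} seconds per year")
print(f"100 percent: {max_downtime_seconds(100.0):.1f} seconds per year")
```

Under that assumption, six nines works out to roughly half a minute of downtime per year, while 100 percent allows none.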

The systems are offered as a cloud-like experience, not only through the consumption model but also through simplicity in deployment and management. An organization only needs to plug in the power and network cables and the console will discover and configure the systems. They also can be managed from anywhere using a smartphone.

HPE leveraged an array of technologies already available in its portfolio to develop the initial offerings in the storage-as-a-service initiative. The company’s InfoSight predictive analytics platform, which was designed to incorporate intelligence from datacenter operations to predict and prevent infrastructure problems, plays a role in the intent-based provisioning of the Alletra systems. The Data Services Cloud Console plugs into InfoSight, grabs the telemetry around the appliances and uses that information to determine where particular workloads should run. In addition, upgrading is taken care of by the console.
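A rough Python sketch of telemetry-driven placement follows. It is not how InfoSight or the console actually decides: the `SystemTelemetry` fields, the `choose_system` function, the 20 percent headroom threshold and the array names are all hypothetical, chosen only to show the general shape of the technique.

```python
from dataclasses import dataclass


@dataclass
class SystemTelemetry:
    """Hypothetical per-system metrics; real telemetry would be far richer."""
    name: str
    free_gib: int
    headroom_percent: float  # estimated spare performance capacity


def choose_system(systems, needed_gib, min_headroom_percent=20.0):
    """Pick the system with the most headroom that can still fit the request.

    Returns None when no system satisfies both constraints.
    """
    candidates = [
        s for s in systems
        if s.free_gib >= needed_gib and s.headroom_percent >= min_headroom_percent
    ]
    return max(candidates, key=lambda s: s.headroom_percent, default=None)


fleet = [
    SystemTelemetry("array-a", free_gib=1_000, headroom_percent=60.0),
    SystemTelemetry("array-b", free_gib=8_000, headroom_percent=35.0),
    SystemTelemetry("array-c", free_gib=9_000, headroom_percent=10.0),
]
best = choose_system(fleet, needed_gib=2_048)
print(best.name if best else "no suitable system")  # array-b
```

Here `array-a` lacks the capacity and `array-c` lacks the performance headroom, so the request lands on `array-b`.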

HPE also leveraged the capabilities in Aruba Central, a cloud solution that has been developed over the past several years to enable organizations to use AI-powered insights to manage their networks, as the foundation for the services console. Since HPE bought it in 2015 for $2.7 billion, Aruba has become HPE’s networking arm and the tip of the spear for its efforts at the edge.

“This is a platform that took well over five years to develop and mature to the state it is today – very hardened, very stable, global DevOps or Site Reliability Engineering clusters around the world deployed in US FedRAMP, code, you name it,” Black said. “We were fortunate to leverage this many thousands of years of work and then couple that to our deep, deep technical roots, history and intellectual property in our storage technology, taking a very talented group of engineers on a fairly tight timeline, but using these massive, massive investments and mature technologies at their core and involve them moving forward.”

He also said the Alletra 9000 shares some of the code base from the Primera line while the 6000 does the same with Nimble code, with HPE engineers taking “those very tried and true technology stacks – millions of lines of code, decades of engineering, years of investment – and we added all of them into what is now clearly defined as a cloud-native” line of storage appliances.

HPE will continue to add to the storage-as-a-service portfolio but also will bring legacy storage lines, such as Primera and Nimble, into the Data Services Cloud Console to give enterprises a single place for data management across their existing environments.

“All our existing products, such as StoreOnce and other generations that are coming behind, are over time going to be absorbed into the same data services console,” Prakash said. “This is now the de facto standard for any new products that we will introduce. They will always be introduced on top of the Cloud Data Services Console.”
