The coronavirus pandemic has had a clear impact on spending trends in the IT market. As businesses temporarily shut their doors and sent most of their employees home to work, executives and IT administrators had to shift their business models almost overnight to adapt to a highly distributed workforce. Enterprise spending moved away from many long-term projects and toward dealing with the crisis that was right in front of them. The money went to cloud services and software-as-a-service (SaaS) applications, businesses and consumers alike went online to do their shopping, and organizations put a greater emphasis on security and cloud-based collaboration.

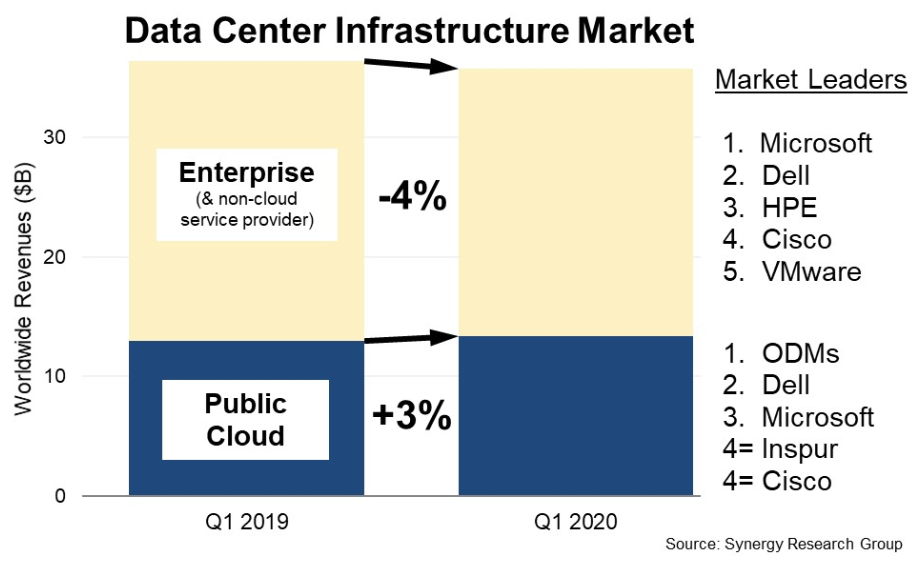

A look at spending on datacenter hardware and software reflects what’s happening in the market. Analysts at Synergy Research Group this week found that sales of server, networking, and storage hardware and software such as operating systems, virtualization, cloud management and network security to enterprises and traditional service providers fell 4 percent in the first quarter, just as the COVID-19 outbreak began to extend its reach around the globe. However, as the chart below shows, sales to public cloud services providers increased 3 percent, with ODMs – which find much of their business going to such companies as Amazon Web Services, Google Cloud, Microsoft Azure, and other cloud services companies – capturing the top spot.

However, that’s not to say the public health crisis is forcing enterprises to break new ground. For several years now they have been working to make their IT environments more cloud-like, both by moving workloads into the public cloud and by bringing the same sort of flexibility, scalability and cost efficiencies to their on-premises environments. They’ve been embracing greater automation and intelligence through artificial intelligence and machine learning, containers and Kubernetes, cloud-like consumption models, and management software to handle the massive amounts of data they’re generating. The pandemic has primarily accelerated those trends.

The pressures on enterprises have come mostly from two directions, according to David Wang, director of product marketing at Hewlett Packard Enterprise. One is the need for greater IT agility and the other is budgetary pressure, which is forcing organizations to rethink how they procure IT equipment, moving away from large capital expenditures toward spending based on usage.

“It’s tied to COVID, but it’s also these are things they’ve always had to deal with and COVID made it worse,” Wang tells The Next Platform, adding that companies need to deal with the current crisis but also keep the long-term trend toward hybrid clouds in mind. “When we think about organizations, we think that right now they’re dealing with how they’re going to accelerate through recovery. But they’re also having to think about those investment decisions they’re making today for infrastructure. Our minds shouldn’t be made up as a reaction to crises, but you need to have a lens that on the investments you make today are going to set you up for success in the future as well. Despite some organizations saying, ‘Hey, let’s just go the public cloud. We’re going to shift workloads and there is going to be a quick fix,’ you can’t overlook the fact that when you think about the future of your applications, you have to take a strategic lens. What can on premises do that may in some ways bring more value than what the public cloud can provide?”

That strategic lens shows that not everything can go into the cloud, that many workloads will remain in the datacenter and that bringing the benefits of the cloud on premises will be key going forward. The public cloud gives enterprises the agility, elasticity and scale to meet unpredictable demands, but enterprise applications may have to be refactored when migrated to the cloud and there is a tradeoff when it comes to performance and resiliency, he says. Decisions often come down to where to run each workload.

Extending cloud features to the datacenter is a direction most vendors are headed in. Pure Storage this week unveiled its Purity 6.0 for FlashArray operating system that includes a host of new features available through its Evergreen subscription model, including offering file and block storage on the same system, and introducing its ActiveDR disaster recovery technology. Last month, Dell EMC rolled out its PowerStore flash portfolio that leverages such technologies as NVM-Express, storage-class memory (SCM) and AI-based software to monitor the systems. The company also launched Cloud Validated Designs for PowerStore for hybrid cloud environments and its Cloud Storage Services to directly connect PowerStore to public clouds.

HPE is looking to create that cloud-like environment in the datacenter. It has set a goal to offer its entire portfolio as a service by 2022, leaning heavily on its GreenLake IT-as-a-service platform that offers a consumption-based model and a way to unify everything from the datacenter to the cloud and the edge. The company last month made its Nimble Storage dHCI disaggregated hyperconverged infrastructure available via GreenLake.

The company this week rolled out more innovations in its Primera and Nimble storage portfolios that lean heavily on the idea of adding more intelligence and automation, more cloud-like capabilities and an as-a-service flavor. HPE inherited the InfoSight predictive analytics platform when it bought Nimble Storage for $1 billion in 2017. InfoSight brings in massive amounts of infrastructure data from more than 100,000 systems worldwide every second and uses machine learning to analyze the data, make the systems smarter, and predict and prevent issues before they disrupt operations.

In the Primera systems for higher-end storage, the company is leveraging InfoSight to enable them to automatically act on the intelligence from the AI platform, addressing what Wang calls the “final mile” of AIOps.

“If you just take the category of AIOps by itself, AIOps as a category refers to monitoring, refers to having insights, it refers to having predictive analytics, it refers to having recommendations,” he says. “But the final mile across any industry that really has a maturity from an AI standpoint – like self-driving cars, robotics, intelligent drones – they’re taking AI in the final mile on the ability for the device to actually make its own decisions. You’re talking about devices that are smart enough to know a vehicle can apply a brake when it senses danger, when a drone knows to turn right or turn left and optimizes how it gets to where it needs to be without having to involve human intervention.”

HPE is bringing similar capabilities to Primera systems, enabling them to self-optimize and self-heal. Every 30 seconds the system predicts performance and workload requirements for every application and then adapts system operations to improve the performance of the software. Through InfoSight, Primera will leverage both cloud-based machine learning and an embedded AI engine, similar to what self-driving cars use, to address what’s happening at the local level and dynamically change accordingly.

For Nimble systems that are managing mid-range storage workloads, InfoSight enables cross-stack analytics to simplify virtual machine (VM) management. Nimble systems have been able to use the AI platform to manage VMware environments and now add Microsoft Hyper-V into the mix.

“Customers are increasingly more virtual and they have this notion of VM sprawl where there’s just so many VMs running in the environment,” Wang says. “We have a single screen that shows all virtual machines in one pane of glass that within seconds you identify that three virtual machines are running rogue and you can drill in and figure out why. Those time savings translate for customers into big, big money. Hyper-V customers in the industry have never had these kinds of insights.”

The company also is delivering all-NVMe support to Primera and storage-class memory (SCM) to Nimble, both designed to improve performance. The NVMe protocol is aimed at improving the throughput and latency of flash and other non-volatile memory. SCM also is about better performance.

“Customers are looking for ultra-low latency for read-intensive applications on the NVMe side,” Wang says. “It’s all about consolidation. Organizations want to be able to run more workloads on the same shared storage environment and the parallel nature of it in the NVMe protocol would lend itself well, assuming the architecture can maximize that value. When customers are trying to consolidate more workloads on the same environment, NVMe can make a lot of sense. On the storage-class memory side, it’s all about latency. It’s all about delivering the fastest read response time for those intensive Oracle and transactional databases that need to have very, very fast, rapid rate performance. SCM lends itself really well there.”

The issue with SCM is the expense, he says. It delivers memory-like performance, but the high cost makes it prohibitive for many companies and the use cases for it are narrow. Nimble’s architecture – which he calls “cache-accelerated” – leverages a caching tier developed for the product line that can use a marginal amount of SCM cache along with SSD persistent storage to deliver up to 95 percent of the read performance of an all-SCM environment. It’s similar to when Nimble launched 10 years ago with a hybrid architecture that used spinning disks and flash to deliver near-all-flash performance at an affordable cost at a time when flash was prohibitively expensive. SCM is making its way into mainstream storage systems. Most recently, Dell EMC, when announcing its PowerStore flash lineup last month, noted support for Intel’s Optane SSDs as persistent storage for either SCM or flash.
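To see why a small cache in front of slower media can come close to the speed of the cache itself, a back-of-the-envelope model helps. The sketch below is not HPE's architecture or its numbers: it assumes a simple weighted-average read latency, and the 10 microsecond (SCM) and 100 microsecond (SSD) figures, along with all function names, are hypothetical values chosen only for illustration.

```python
def effective_read_latency_us(hit_ratio, cache_latency_us, backing_latency_us):
    """Average read latency when a fraction `hit_ratio` of reads hit the cache."""
    if not 0.0 <= hit_ratio <= 1.0:
        raise ValueError("hit_ratio must be between 0 and 1")
    return hit_ratio * cache_latency_us + (1.0 - hit_ratio) * backing_latency_us


def relative_read_performance(hit_ratio, cache_latency_us, backing_latency_us):
    """Read performance as a fraction of an all-cache-media system (1.0 = equal)."""
    avg = effective_read_latency_us(hit_ratio, cache_latency_us, backing_latency_us)
    return cache_latency_us / avg


def hit_ratio_for_target(target_fraction, cache_latency_us, backing_latency_us):
    """Cache hit ratio needed to reach `target_fraction` of all-cache performance."""
    if not 0.0 < target_fraction <= 1.0:
        raise ValueError("target_fraction must be in (0, 1]")
    if backing_latency_us <= cache_latency_us:
        raise ValueError("backing media must be slower than the cache")
    # Solve cache / (h*cache + (1-h)*backing) = target for h.
    h = (backing_latency_us - cache_latency_us / target_fraction) / (
        backing_latency_us - cache_latency_us
    )
    return max(0.0, h)


if __name__ == "__main__":
    # Hypothetical latencies, not vendor figures.
    SCM_US, SSD_US = 10.0, 100.0
    needed = hit_ratio_for_target(0.95, SCM_US, SSD_US)
    print(f"Hit ratio needed for 95% of all-SCM read performance: {needed:.4f}")
```

Under these assumed latencies, the model says the cache has to absorb the overwhelming majority of reads to reach the 95 percent mark, which is why the approach fits read-intensive workloads with a hot working set small enough to sit in cache.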

With all-NVMe support, Primera can leverage the protocol’s highly parallel architecture to drive performance and density, including supporting twice the number of SAP HANA nodes for half the price.

For disaster recovery, HPE is bringing its Peer Persistence high-availability technology, which delivers high transparency between sites, to Primera. In addition, Peer Persistence for both Primera and Nimble now enables enterprises to replicate data to a third site, including the cloud – not only AWS, Google Cloud or Azure, but also HPE’s own Cloud Volumes storage cloud, which is hosted and managed by the vendor. With Primera, HPE also is offering near-instant asynchronous replication over extended distances with a recovery point objective (RPO) of one minute.
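For readers less familiar with the term, an RPO is the maximum window of recent writes an organization is willing to lose in a failure. With asynchronous replication, that window is roughly the time between the last write safely copied to the remote site and the moment the primary fails. The sketch below illustrates only that definition; the function names and timestamps are hypothetical and have nothing to do with how Primera implements replication.

```python
from datetime import datetime, timedelta


def data_loss_window(failure_time, last_replicated_time):
    """Time span of writes that never reached the remote site."""
    return max(failure_time - last_replicated_time, timedelta(0))


def meets_rpo(failure_time, last_replicated_time, rpo=timedelta(minutes=1)):
    """True if the data lost at failure stays within the RPO."""
    return data_loss_window(failure_time, last_replicated_time) <= rpo


if __name__ == "__main__":
    # Hypothetical timestamps for illustration only.
    last_copy = datetime(2020, 6, 1, 12, 0, 0)
    print(meets_rpo(datetime(2020, 6, 1, 12, 0, 45), last_copy))  # 45 s behind
    print(meets_rpo(datetime(2020, 6, 1, 12, 1, 30), last_copy))  # 90 s behind
```

In other words, a one-minute RPO means the remote copy can never be allowed to fall more than 60 seconds behind the primary.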
