Enterprises continue to be inundated with data that needs to be collected, stored, moved, processed and analyzed, and the view down the road shows no letup. In 2025, there will be 175 zettabytes of data created, according to IDC – up from 59 zettabytes last year. In addition, data is increasingly moving outside the confines of traditional datacenters, with some estimates showing as much as 70 percent of data being created, stored or accessed in the cloud or at the still-nascent edge within the next few years, and 80 percent of it being unstructured.
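To put the IDC figures in perspective, the implied compound annual growth rate can be worked out in a few lines of Python. This is a back-of-the-envelope sketch, not an IDC calculation: it assumes the 59 zettabyte figure refers to 2020, giving a five-year span, and the function name is purely illustrative.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Assumption: 59 ZB created in 2020 and 175 ZB in 2025, a five-year span.
rate = implied_cagr(59, 175, 5)
print(f"Implied annual growth: {rate:.1%}")
```

Under those assumptions, the amount of data created each year grows by a little over 24 percent annually.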

OEMs are working to build systems that help organizations cope with the rapid changes happening now, while also looking ahead to the tools companies will need to handle the coming flood of data and the modern workloads they are running. A year ago, Dell introduced PowerStore, an all-flash platform for the midrange designed with a clean-sheet approach. It offers high levels of automation, advanced analytics and monitoring capabilities, the ability to run both legacy applications and newer compute- and data-intensive workloads like artificial intelligence (AI) and machine learning, and the ability to both scale up and scale out. PowerStore was able to run faster and more efficiently than other midrange storage options in the Dell EMC portfolio.

Enterprises took to PowerStore, according to Travis Vigil, senior vice president of product management at Dell EMC. During a recent press briefing, Vigil said adoption of the offering was ramping faster than any other new architecture in the company’s history and that Dell EMC has shipped more than 400 petabytes of effective capacity since the launch in May 2020. In the vendor’s last fiscal quarter, PowerStore saw four-fold quarter-on-quarter revenue growth, and the product was drawing in new customers – about 20 percent of PowerStore users were new to Dell.

PowerStore also led a surge in growth for Dell EMC’s midrange storage business, with an 8 percent revenue increase in the portfolio year-over-year.

A year later, the company is expanding the reach of the platform with a lower-cost system aimed at enabling more enterprises to embrace it, along with a range of new features for all PowerStore systems aimed at improving performance, scale and automation, including a 25 percent speed boost for all workloads and NVMe over Fibre Channel (NVMe-FC).

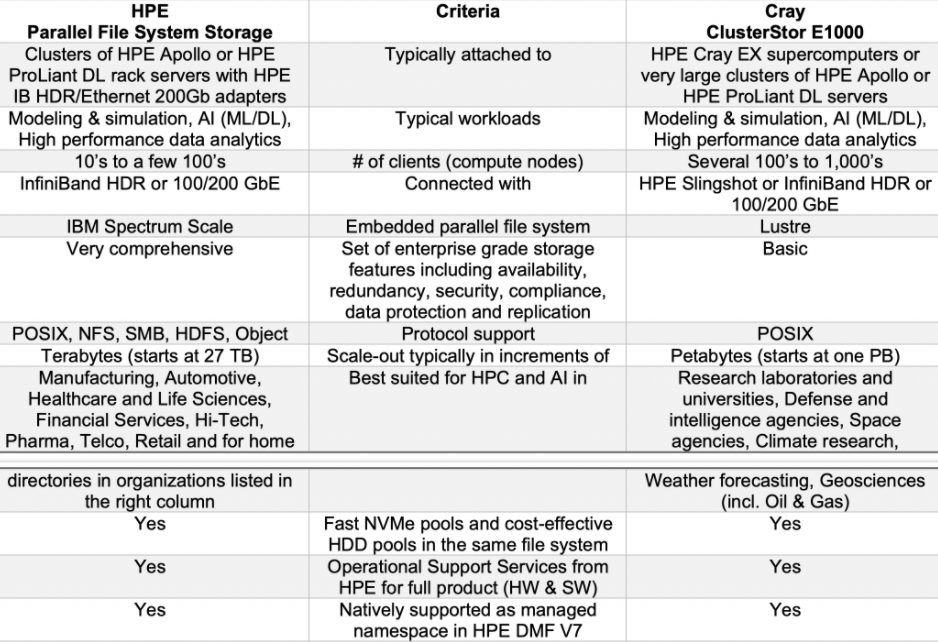

The updates to the platform were unveiled April 20 and will be generally available June 10. They come after rival Hewlett Packard Enterprise earlier this month announced it is now supporting IBM’s Spectrum Scale parallel file system on its ProLiant and Apollo servers. The company’s new offering brings parallel file system capabilities to enterprises that are increasingly running the kinds of HPC and AI workloads that larger organizations do, and that are contending with similar challenges from the massive amounts of data they’re trying to manage.

HPE sees IBM’s technology as an alternative for enterprises to network-attached storage (NAS) and Lustre parallel file system deployments. For larger environments like HPC and supercomputing, HPE will continue to offer its Cray ClusterStor E1000 Lustre-based storage solution, but the new support for Spectrum Scale will give organizations relief from the performance, scalability and cost challenges presented by NAS offerings, according to Uli Plechschmidt, product marketing manager for parallel HPC and AI storage at HPE. Right now, most enterprises are using NAS from vendors such as Dell or NetApp, or using other companies’ parallel storage attached to HPE clusters.

“Unlike NFS-based Network Attached Storage (NAS) or Scale-out NAS, there are (almost) no limitations regarding the scale of storage performance or storage capacity in the same file system,” Plechschmidt wrote in a blog post. “NFS-based storage is great for classic enterprise file serving (e.g. home folders of employees on a shared file server). But when it comes to feeding modern CPU/GPU compute nodes with data at sufficient speeds to ensure a high utilization of this expensive resource – then NFS no longer stands for Network File System but instead, it’s Not For Speed.”

Dell’s new PowerStore 500 delivers 2 petabytes of effective capacity (PBe) in a 2U box that can be clustered with other PowerStore 500 boxes for up to 4.8 PBe per cluster, or combined with other PowerStore systems – such as the 1000, 5000, 7000, and 9000 T models – for up to 9.8 PBe per mixed cluster. It can serve as a NAS or SAN array with support for block, file and VMware vVols, as well as storage-class memory (SCM), NVMe-FC and a 4:1 data reduction ratio (DRR). The price tag on a 500 system starts at $28,000.
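Effective capacity is a post-data-reduction number, so it helps to see what it implies for physical media. The sketch below is illustrative arithmetic only. It assumes the 4:1 figure is a data reduction ratio and that the quoted effective capacities are stated at that ratio; the function name is hypothetical, and actual reduction depends on the data being stored.

```python
def raw_capacity_needed(effective_pb: float, reduction_ratio: float) -> float:
    """Physical capacity (PB) needed to present `effective_pb` at a given reduction ratio."""
    if reduction_ratio <= 0:
        raise ValueError("reduction ratio must be positive")
    return effective_pb / reduction_ratio


# Effective capacities quoted in the article, in PBe.
quoted = {
    "single PowerStore 500": 2.0,
    "PowerStore 500 cluster": 4.8,
    "mixed cluster": 9.8,
}

# Assumption: a uniform 4:1 data reduction ratio across all configurations.
for label, effective_pb in quoted.items():
    raw_pb = raw_capacity_needed(effective_pb, 4.0)
    print(f"{label}: {effective_pb} PBe needs about {raw_pb:.2f} PB of physical capacity")
```

Data that compresses or deduplicates poorly would need proportionally more physical capacity to reach the same effective figure.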

<<Dell PowerStore 500>>

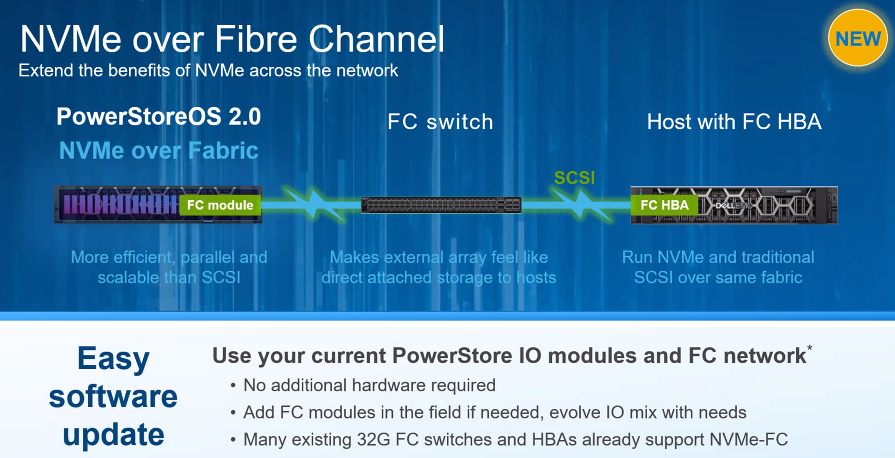

NVMe-FC and broader support for SCM are key upgrades to Dell’s PowerStore software. In the first iteration of PowerStore, NVMe was used in the appliance while standard SCSI was the primary transport mechanism over the network.

NVMe-FC “is much more efficient and scalable than SCSI and it’s designed to make an external network array essentially feel like direct-attached storage to the host itself,” Jon Siegal, vice president of product marketing for Dell’s Infrastructure Solutions Group, said during the briefing. “We’re also making it easy to activate this new capability, much like all the other software capabilities. It’s a simple software update; many customers won’t need to even swap out their network gear … if they already have 32 gig-capable switches and HBAs on premises. Any hosts that support NVMe can now use that protocol to talk to PowerStore while the SCSI hosts continue as if nothing changed because PowerStore in the network itself can still handle both protocols.”

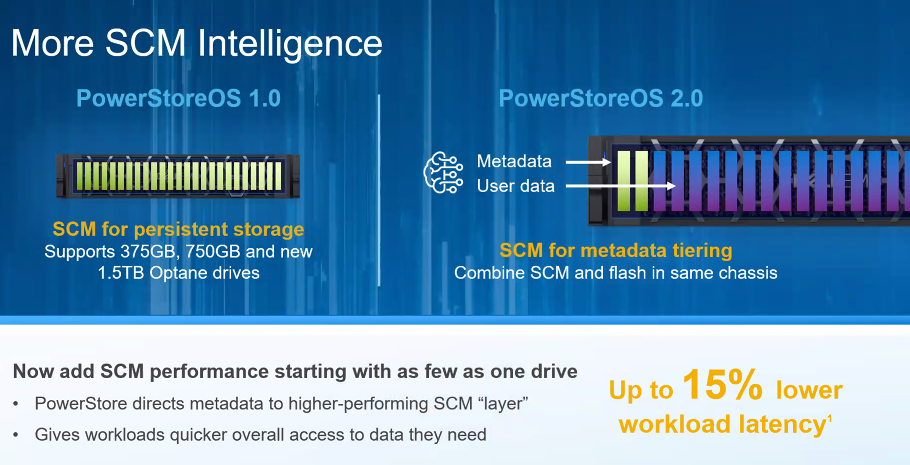

The PowerStore system could always support SCM in the form of Intel’s Optane drives in 375 GB, 750 GB, and 1.5 TB capacities. In PowerStore OS 2.0, SCM and flash can be combined in the same chassis, with SCM being used for metadata, which can help lower workload latency by as much as 15 percent.

“We can now differentiate between SCM and standard NVMe drives in the same chassis so that the higher performing SCM can now be leveraged to increase the speed of metadata access, while user data continues to go directly to the NVMe drives itself,” Siegal said. “This is going to be really ideal for active datasets, where data moves out of the cache quickly.”
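The placement rule Siegal describes can be pictured with a toy model. The sketch below is not Dell’s implementation and reflects nothing about PowerStore OS internals. Every name in it (`Tier`, `Write`, `choose_tier`) is hypothetical, and it only encodes the stated idea that metadata goes to SCM when SCM is present while user data goes to the NVMe flash drives.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SCM = "scm"
    NVME_FLASH = "nvme_flash"


@dataclass(frozen=True)
class Write:
    """A hypothetical I/O request, flagged as metadata or user data."""
    is_metadata: bool
    size_bytes: int


def choose_tier(write: Write, scm_present: bool) -> Tier:
    """Send metadata to SCM when the chassis has it; all else goes to NVMe flash."""
    if write.is_metadata and scm_present:
        return Tier.SCM
    return Tier.NVME_FLASH


# Mixed chassis: metadata lands on SCM, user data on flash.
print(choose_tier(Write(is_metadata=True, size_bytes=4096), scm_present=True))
print(choose_tier(Write(is_metadata=False, size_bytes=1 << 20), scm_present=True))
# Flash-only chassis: everything lands on flash.
print(choose_tier(Write(is_metadata=True, size_bytes=4096), scm_present=False))
```

Keeping the decision in one small function makes the trade-off visible: the faster, lower-capacity media is reserved for the small, frequently accessed metadata, while bulk data stays on flash.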

Other software upgrades include the use of Dell’s Dynamic Resiliency Engine (DRE) to protect against multi-drive failures and expanded capabilities for AppsOn, the feature introduced with PowerStore last year that enabled enterprises to run virtual machines and applications directly on the appliance. With the software upgrades, organizations can now move VMs and applications among clustered appliances, delivering performance based on workload demand and enabling them to drive more compute power and performance where needed.

For HPE, the support for IBM’s Spectrum Scale in its new Parallel File System Storage offerings means being able to offer enterprises a parallel file system on clusters of standard ProLiant DL Gen10 Plus rack systems and Apollo 6500 AI hardware, complementing the ClusterStor E1000. The ProLiant DL and Apollo systems with Spectrum Scale will be available May 3.

The vendor introduced the ClusterStor E1000 in 2019 as a storage platform for the upcoming exascale era, able to run all HPC workloads, from AI to simulations to analytics, and to support a mix of HDDs and flash memory. The system, which became generally available last year, was unveiled just after HPE completed its acquisition of longtime supercomputer maker Cray for $1.4 billion. According to Plechschmidt, the E1000 has reached the 2 exabyte milestone and will be used in the first three exascale systems – Aurora, Frontier and El Capitan – being built in the United States.

HPE expects the new offerings will be attractive to industries such as financial services and life sciences that avoid Lustre because of its lack of enterprise features but need parallel file systems given the growth of data and the modern workloads they’re running. IBM’s Spectrum Scale will be fully integrated into the HPE systems, and enterprises won’t have to license storage capacity by terabyte or storage drive. They also will only have to call HPE for support, whether for the servers or the parallel file system.
