The thrust of much of the message that Red Hat was putting out last week at the Red Hat Summit 2019 conference in Boston revolved around multicloud and hybrid cloud environments – adopting them, deploying them, and managing them. It was a focus of the Red Hat Enterprise Linux (RHEL) 8 operating system, which was released on the first day of the show, as well as what the Linux vendor did with its OpenShift container and Kubernetes platform. The company even touched on it from the hardware side, with an expanded partnership with GPU-maker Nvidia that’s aimed at making it easier to run artificial intelligence workloads across hybrid clouds.

The beating of the hybrid cloud drum isn’t surprising given the fast-growing adoption of both hybrid cloud and multicloud strategies by enterprises, with some predicting that more than 90 percent of companies worldwide will be using more than one public cloud, often in conjunction with on-premises private clouds. In such a cloud- and data-centric IT world, containers like those from Docker have joined virtual machines as key ways to drive portability in applications, both between public and private clouds and between public clouds themselves. Kubernetes has become the dominant technology for automating and managing those complex Linux container environments.

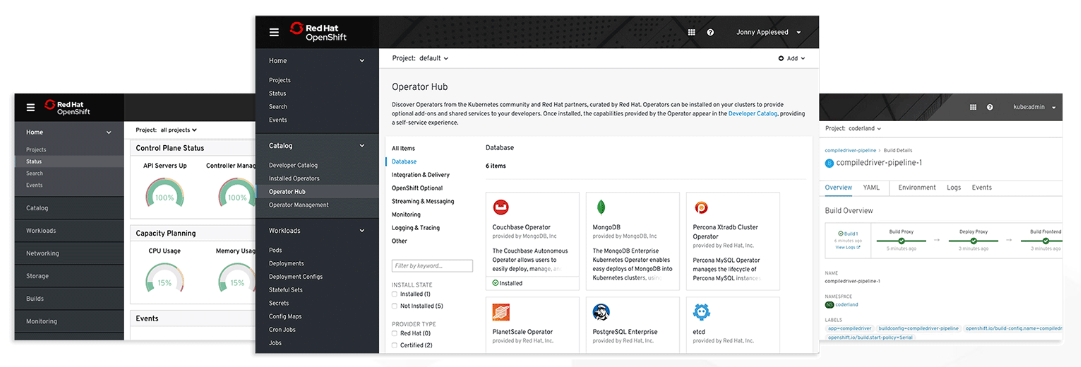

Red Hat first rolled out OpenShift four years ago, giving enterprises a product based on Kubernetes – an open-source technology that began as a project inside of Google. Over the past couple of years it has become a key part of what Red Hat sees as the company’s shift away from being a product vendor to a provider of a much more complex Linux software stack in the enterprise, one that also includes the OpenStack cloud platform and the Insights predictive analytics tool. Red Hat has seen strong uptake in an array of industries. More than 1,000 customers – including ANZ Bank, Santander, Copel Telecom, Lufthansa Technik, Porsche Informatik, BP and Kohl’s – have adopted OpenShift.

The company hopes to keep the momentum going with OpenShift 4, which will be available within the next month. It aims to provide the kind of automation needed when managing a distributed computing environment like the hybrid cloud and leverages some of the technology Red Hat inherited when it spent $250 million to buy CoreOS, a five-year-old company that started with a Linux OS for containers and grew its container and Kubernetes capabilities from there. With CoreOS came Tectonic, its Kubernetes-based orchestration system. Red Hat began incorporating CoreOS features into OpenShift late last year with version 3.11 and is now expanding that integration in OpenShift 4.

“We wanted to make sure that the platform was going to be easier to deploy,” Brian Gracely, director for product strategy at Red Hat, tells The Next Platform. “Customers are telling us they like the benefits of these faster applications, this automation. But being able to get the platform up and running has to be really simple because finding Kubernetes talent sometimes is hard, so we have to embed more capabilities in the platform to make it simple to get deployed and then also simpler to do updates because Kubernetes is the underlying technology and [updates for it come] out every three months, which is pretty rapid for infrastructure software. We needed to do a number of things to retool the platform to make it simpler to keep up with that path. OpenShift 4 is a big re-architecture of the platform.”

The combination of capabilities in RHEL and RHEL CoreOS – a new OpenShift-specific variant of RHEL for embedded environments – drives many of the self-managing aspects of OpenShift 4, covering everything from security to auditability to installation. RHEL CoreOS is a lightweight Linux distribution that is optimized for containers and whose updates are automatically managed by Kubernetes and enabled by OpenShift. OpenShift Certified Operators, developed in conjunction with technology partners, are meant to let applications run as a service on OpenShift.

“There’s also a number of other things that we’ve helped bring to the upstream Kubernetes community to make platforms simpler to deploy and support of update and support of scale,” Gracely says. “OpenShift has always been a multicloud or hybrid-cloud platform, so it can run Kubernetes in any environment: on Google, on VMware, on OpenStack, AWS. We’re going to make it simpler to get those deployed in those environments. There’s a number of things within the platform that are going to make life easier for developers. While the platform Kubernetes stuff is interesting, the goal is to make developers productive.”

A new feature is OpenShift Service Mesh – which brings together the Istio open-source project for managing microservices, the Jaeger distributed tracing technology and Kiali, an open-source UI project – to create a single offering for encoding communications logic for microservices-based applications, taking that task off developers’ hands.

“As more distributed applications are being built – sort of cloud-native microservices applications – the need for more granular networking between those microservices or granular security becomes really important,” he says, adding that Service Mesh is now a service in the OpenShift platform. “There are a number of things we do to create better visibility for developers as they’re building their applications.”

CodeReady Workspaces is aimed at enabling developers to use containers and Kubernetes while working in the IDEs they’re familiar with.

“Developers are used to working in their IDE space and what we do behind the scenes is say, ‘Most likely you just want to write software; you don’t want to talk about all this complicated complexity, so just write your software the way you always have,’” he says. “‘Push your software and custom software the way you have. But behind the scenes is an embedded IDE. We will make sure that it gets in your container, make sure it gets deployed correctly, make sure it integrates with your CI pipelines.’ Again, innovation around hiding the complexity of Kubernetes from developers so they don’t have to learn that.”

Still, Red Hat has included a suite of plug-ins for developers who want to use native services and tools in places like Azure but want those tools to be able to speak natively to OpenShift. About 3 million developers have downloaded the plug-ins over the last month, according to Gracely, giving them options for working with OpenShift.

Red Hat also will put the Knative open-source technology for building serverless applications into OpenShift 4, where it will be a native capability on the platform, he says. KEDA (Kubernetes-based event-driven autoscaling), developed by Red Hat and Microsoft for deploying serverless containers on Kubernetes, will enable Azure Functions in OpenShift. KEDA is in Developer Preview.

In addition, the company is partnering with Microsoft to make OpenShift a native service on Azure. Enterprises already can run OpenShift on a broad array of cloud platforms, including Azure, Amazon Web Services and Google Cloud, as well as OpenStack for private clouds and virtualization platforms, but they need to buy the Kubernetes platform from Red Hat and deploy it in the cloud. With Azure Red Hat OpenShift, organizations can get OpenShift through Azure, a more tightly integrated service that makes deployment easier.

“This will be a native Azure service jointly integrated, jointly supported, jointly engineered by Red Hat and Microsoft,” Gracely says, adding that Microsoft customers will be able to buy OpenShift on-demand using their Azure credits. “It’ll be deployed in all the Azure locations around the world. It will be resalable by Microsoft, so for us it’s a really big expansion, of being able to get access to OpenShift for a whole new set of customers who ultimately say, ‘I like the technology, I just don’t want to manage it myself.’ The breadth of Microsoft is huge for us.”
