In the wonderful world of software containers, it can feel like the ground is constantly shifting beneath your feet as new projects spring up to address problems that you thought had been solved long ago. In many cases, they were solved long ago, but modern cloud-native architectures are pushing application deployments out to greater scale than before, which calls for new tools and approaches.

Microservices are a case in point. Under this model, a typical application or service will be decomposed into independently deployable functional modules that can be scaled and maintained separately from each other, and which link together to deliver the full functionality of the entire application or service.

The latter piece can be the tricky one when using containers to develop microservices. How do you link up all the component parts when they may be spread across a cluster of server nodes, and instances of them are continually popping up and later being retired as they are replaced by updated versions? In a service-oriented architecture (SOA), of which microservices can be seen as the evolutionary heir, this task is analogous to the one handled by an enterprise service bus (ESB). So what is needed is a kind of cloud-native version of an ESB.

This is the role that Istio, a relatively new open source project, aims to fill. It is officially described as a service mesh, because parts of it are distributed across the infrastructure alongside the containers it manages, and it sets out to meet the requirements of service discovery, load balancing, message routing, telemetry, and monitoring – and, of course, security.

Istio sprang from a collaborative effort between IBM and Google, and incorporates some existing components, notably one developed by ride-hailing service Lyft. It has existed in some form for at least a year, but hit the milestone of version 1.0 at the end of July, meaning it is now considered mature enough to be operated as part of production infrastructure.

Jason McGee, an IBM Fellow and chief technology officer for IBM Cloud, tells The Next Platform that the cloud-native ecosystem has largely settled on containers as the core packaging and runtime constructs, and Kubernetes as the orchestration system to manage the containers. But McGee explains that there is a third piece of the puzzle that is still up in the air, which Istio is designed to supply.

“How do you manage the interactions between applications or services running on a container platform?” McGee asks. “If you are building microservices, or you have a collection of applications, there’s a whole bunch of interesting problems that arise in the communications between applications. How do you have visibility into who’s talking to who and performance, and collect data about the communication between applications, how do you secure those communications that control which services can talk to each other, and how do you secure applications, especially in the more kind of dynamic or distributed architecture that we have today, where you might have components on public cloud and components on prem?”

McGee says that his team at IBM had begun looking into this problem a couple of years ago, when he met up with his counterparts at Google and found they were going down the same road. While IBM was chiefly concerned with traffic routing, version control, and A/B testing, Google was focused on security and telemetry. The two decided to merge their respective efforts, and Istio was the result.

Istio is made up of the following components:

- Envoy, which is described as a sidecar proxy because it is deployed as an agent alongside each microservice instance.

- Mixer, which is a central component used to enforce policies via the Envoy proxies and which collects telemetry metrics from them.

- Pilot, which is responsible for configuring the proxies.

- Citadel, a centralized component responsible for issuing certificates, which also has its own per-node agents.

Envoy is the component that was developed by Lyft, and is described by McGee as a “very small footprint, layer 4 through 7 smart router” that traps all traffic to and from the microservice it is paired with, acting as a way to control that traffic, applying policies and gathering telemetry. Pilot is the chief component contributed by IBM, and acts as the control plane for all of the Envoy agents deployed in the infrastructure.

“If you imagine in a service mesh, you might have a hundred microservices, and if each of those has multiple instances, you might have hundreds or thousands of these smart routers, and you need a way to program them all, so Istio introduces this thing called Pilot. Think of it as the programmer, the control plane for all these routers. So you have a single place through which you can program this network of services, and then there’s some other components around data gathering for telemetry, around certificate management for security, but fundamentally you have this smart routing layer and this control plane to manage it,” McGee explains.
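
The relationship McGee describes, one control plane programming many per-instance routers, can be illustrated with a minimal Python sketch. The `SidecarProxy` and `ControlPlane` classes, the service names, and the route table are all hypothetical and are not part of Istio or Envoy; they only show the fan-out pattern.

```python
from dataclasses import dataclass, field


@dataclass
class SidecarProxy:
    """Hypothetical stand-in for one proxy paired with one service instance."""
    service: str
    instance_id: int
    routes: dict = field(default_factory=dict)

    def apply(self, routes: dict) -> None:
        # Replace the local routing table with the one pushed from the center.
        self.routes = dict(routes)


class ControlPlane:
    """Hypothetical single place from which every registered proxy is programmed."""

    def __init__(self) -> None:
        self.proxies = []

    def register(self, proxy: SidecarProxy) -> None:
        self.proxies.append(proxy)

    def push(self, routes: dict) -> int:
        # Fan the same configuration out to every proxy and report how many changed.
        for proxy in self.proxies:
            proxy.apply(routes)
        return len(self.proxies)


plane = ControlPlane()
for service in ("cart", "checkout"):
    for instance_id in range(3):
        plane.register(SidecarProxy(service, instance_id))

# One call reprograms all six proxies (two services, three instances each).
updated = plane.push({"checkout": "checkout-v2"})
```

The point of the design is that operators change routing in one place rather than touching each proxy individually.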

Istio also has its own APIs that allow users to plug it into existing backend systems, such as those for logging and telemetry.

According to Google, Istio’s monitoring capabilities enable users to measure the actual traffic between services, such as requests per second, error rates, and latency, and it also generates a dependency graph so users can see how services affect one another.
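
As a rough illustration of how such figures could be derived, the Python sketch below computes requests per second, error rate, mean latency, and a dependency graph from a handful of call records. The record layout, the service names, and the two-second window are assumptions made for the example and do not reflect Istio's actual telemetry format.

```python
from collections import defaultdict

# Hypothetical call records: (caller, callee, latency in ms, succeeded)
calls = [
    ("frontend", "cart", 12.0, True),
    ("frontend", "cart", 18.0, True),
    ("cart", "inventory", 30.0, False),
    ("frontend", "checkout", 40.0, True),
]
window_seconds = 2.0  # assumed length of the observation window


def summarize(calls, window_seconds):
    """Aggregate traffic figures over one observation window."""
    total = len(calls)
    if total == 0:
        return {"requests_per_second": 0.0, "error_rate": 0.0, "mean_latency_ms": 0.0}
    errors = sum(1 for _, _, _, ok in calls if not ok)
    return {
        "requests_per_second": total / window_seconds,
        "error_rate": errors / total,
        "mean_latency_ms": sum(latency for _, _, latency, _ in calls) / total,
    }


def dependency_graph(calls):
    """Map each caller to the sorted list of services it was seen calling."""
    graph = defaultdict(set)
    for caller, callee, _, _ in calls:
        graph[caller].add(callee)
    return {caller: sorted(callees) for caller, callees in graph.items()}


stats = summarize(calls, window_seconds)
graph = dependency_graph(calls)
```

Because every call passes through a proxy, both the metrics and the graph can be built from observed traffic rather than from declared dependencies.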

Through its Envoy sidecar proxy, Istio can also apply mutual TLS (mTLS) authentication on every service call, adding in-flight encryption and giving users the ability to authorize every single call across the infrastructure.
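
To make the two ideas concrete, here is a small Python sketch using the standard library `ssl` module. It shows what makes a TLS handshake mutual (the server also demands a certificate from the client) and a per-call authorization check. The allowlist, the service names, and both function names are hypothetical; this is not how Envoy or Citadel are implemented.

```python
import ssl

# Hypothetical allowlist of (caller identity, callee service) pairs.
ALLOWED_CALLS = {
    ("frontend", "cart"),
    ("cart", "inventory"),
}


def build_mutual_tls_context() -> ssl.SSLContext:
    # Server-side context; by default a server does not request a client certificate.
    context = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    # Requiring a client certificate is what makes the handshake mutual.
    context.verify_mode = ssl.CERT_REQUIRED
    # A real deployment would also call context.load_cert_chain(...) and
    # context.load_verify_locations(...) with its own certificate files.
    return context


def is_authorized(caller: str, callee: str) -> bool:
    # Authorization is a separate step that runs after the caller is authenticated.
    return (caller, callee) in ALLOWED_CALLS


context = build_mutual_tls_context()
```

In a mesh, the sidecar would perform both steps on behalf of the service, so application code never handles certificates directly.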

The intention is that Istio takes away much of the need for developers to worry about securing communications between instances and controlling which instances can talk to which others. It also enables practices such as canary deployments, in which a new version of the code for a specific microservice is initially rolled out to only a subset of instances, until you are happy that the new code is functioning reliably.
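
One common way to realize a canary rollout at the routing layer is to send a fixed share of requests to the new version. The Python sketch below shows that idea under stated assumptions: the function name `choose_version`, the version labels, and the request ID format are hypothetical, and the hashing scheme is chosen for illustration rather than taken from Istio.

```python
import zlib


def choose_version(request_id: str, canary_percent: int) -> str:
    """Route a request to "canary" or "stable" based on a stable hash bucket."""
    # crc32 gives the same bucket for the same request ID on every run,
    # unlike the built-in hash(), which is randomized per process for strings.
    bucket = zlib.crc32(request_id.encode("utf-8")) % 100
    return "canary" if bucket < canary_percent else "stable"


request_ids = [f"req-{n}" for n in range(1000)]

# With the canary at 0 percent nothing reaches the new code;
# at 100 percent the rollout is complete.
none_canary = sum(choose_version(r, 0) == "canary" for r in request_ids)
all_canary = sum(choose_version(r, 100) == "canary" for r in request_ids)
```

Raising `canary_percent` in steps moves more traffic to the new version, and setting it back to zero acts as an immediate rollback.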

It should be noted that other service mesh platforms already exist, such as Linkerd or Conduit on the open source side, while Microsoft has a service it calls Azure Service Fabric Mesh currently operating as a technical preview on its cloud platform. Moreover, a service mesh represents a layer of abstraction above the network plumbing, and so assumes that the network interface, IP address, and other network attributes have already been configured for each container instance. This typically means that deploying microservices will also call for a separate tool to automate network provisioning whenever a new container instance is created.

However, IBM hopes that Istio will become a standard part of the cloud-native toolkit, as has happened for Kubernetes, which is based on the Borg and Omega technology that Google developed for its own internal clusters and the container layer that rides on top of them.

“From a community perspective, my expectation is that Istio will become a default part of the architecture, just like containers and Kubernetes have become a default part of the cloud-native architecture,” says McGee. To this end, IBM expects to integrate Istio with the managed Kubernetes service it offers from its public cloud, and also with its on-premises IBM Cloud Private stack.

“So, you can run Istio today, and we support running Istio today on top of both those platforms, but the expectation should be that in the very near future, we will just build Istio in, so any time you are using our platform, the Istio componentry will be there, you can take advantage of it, and you don’t have to be responsible for deploying and managing Istio itself, you’ll just be able to use it in your application,” McGee says.

Google has already added Istio support, although it is only labelling it an alpha release, as part of a managed service that is automatically installed and maintained on a customer’s Google Kubernetes Engine (GKE) clusters in its cloud platform.

Istio is also picking up support from others in the industry, notably Red Hat, which re-engineered its OpenShift application platform a few years back to be based around Docker containers and Kubernetes.

Brian “Redbeard” Harrington, Red Hat’s product manager for Istio, says that Red Hat intends to integrate the service mesh into OpenShift, but there are a few rough edges that Red Hat would like to see improvements in before committing itself, such as multi-tenancy support.

“Istio right now has this goal of what they are calling soft multi-tenancy, which is to say, we want this to be available within an organization so that two different teams inside that organization can use it and trust it, so long as neither one is acting too maliciously, that they’re not going to be able to stomp all over each other’s services. With the way we run OpenShift Online, we have customers running code that we have never looked at, and we have to ultimately schedule those two customers alongside each other, and that’s a very different multi-tenancy challenge,” Harrington explains.

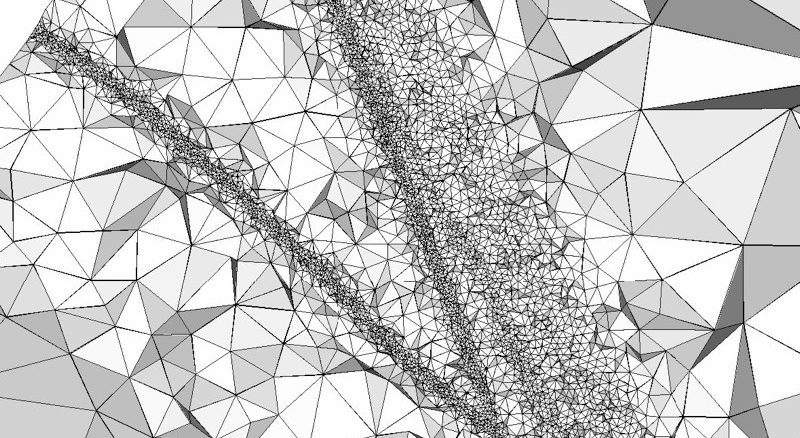

“We need to have a higher degree of confidence about that multi-tenancy story; we need to have a higher degree of confidence about the performance and stability. We have not seen any showstoppers with that, but we have seen areas where we feel we can provide a lot of value in doing scale testing and automating some regression testing, and we’ve also contributed a project at the community level called Kiali, which gives a visualization of what’s going on in Istio, and that is just a part of our offering,” he adds.

In other words, Istio is just another open source tool to add to the menu of choices for those looking to build a cloud-native application infrastructure. Vendors like Red Hat will take it and blend it into their tested and supported enterprise platform offerings like OpenShift, while others will prefer to mix and match and build it themselves.
