Graph analytics has not disappeared, especially in the select areas where it is the only efficient way to handle large-scale pattern matching and analysis, but attention to it has largely been drowned out by the new wave of machine learning and deep learning applications.

Before this newest hype cycle displaced its “big data” predecessor, there was a small explosion of new hardware and software approaches to tackling graphs at scale. These ranged from system-level offerings from companies like Cray, with its Urika appliance (now available as software on its standard server platforms), to unique hardware startups (ThinCI, for example) and graph acceleration frameworks.

But emerging machine learning frameworks and graph algorithms need not be competitors. According to Antonino Tumeo of Pacific Northwest National Lab (PNNL), there are ways to develop new workflows that deliver high performance for both, on standard CPUs as well as on GPUs and custom processors. This work will take time, but graph algorithms can function as accelerators for certain parts of both supercomputing and machine learning training workloads. The questions are how to do this efficiently on commodity hardware, and how much higher graph performance can go with a new influx of custom processors funded by government entities like DARPA.

PNNL is one of the leading centers for graph analytics research, with Tumeo spearheading several efforts that blend machine and deep learning, HPC, and graph analytics with an eye on performance at scale. In a chat with The Next Platform, he pointed to several ongoing efforts to extend graph analytics to emerging hardware and software platforms. Several of these fall under the DARPA HIVE program, a processor-focused effort to develop a streaming graph device that can boost graph performance by 1000X at lower power than existing CPUs or other chips, demonstrable in a 16-node system by 2021.

Tumeo has also worked through DARPA HIVE with both Qualcomm and Intel on developing such devices, but these are not publicly available in test or any other form. In addition to the ThinCI chips mentioned above, he says the Graphcore deep learning chip is an interesting architecture, as is the Emu Technology architecture we described in some detail in 2016.

Work to further the performance, scalability, and capabilities of graph processors and software frameworks is also being supported through a few key projects tied to HIVE, such as HAGGLE (Hybrid Attributed Generic Graph Library Environment). This five-year program aims to build an SDK of graph primitives, extensible application programming interfaces (APIs), a hybrid data model, a data flow model and code transforms, data mapping mechanisms, and an abstract runtime system designed specifically for HIVE systems. The goal is that non-experts will be able to prototype code rapidly, while experts will have the option to apply domain- and system-specific optimizations on HIVE and other hardware.

In addition to keeping close tabs on high performance metrics like the Graph500, which pits Top500 supercomputing sites against one another in an edge traverse-a-thon, PNNL hosts another effort to make graph analytics meet the capabilities of future exascale supercomputing: an Exascale Computing Project (ECP) co-design center focused on graph analysis, called ExaGraph. It focuses on a number of key data analytic computational motifs such as graph traversals; graph matching; graph coloring; graph clustering, including clique enumeration, parallel branch-and-bound, and graph partitioning. These are motifs that are currently not being addressed in existing ECP co-design centers.
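For readers unfamiliar with the traversal motif, here is a minimal sketch of a breadth-first search that counts the edges it scans, which is the flavor of work the Graph500 measures as traversed edges per second. The toy graph, the function name `bfs_edges_traversed`, and the way edges are counted (one per adjacency-list entry scanned) are illustrative assumptions, not the official benchmark kernel or its scoring rules.

```python
from collections import deque
import time


def bfs_edges_traversed(adjacency, source):
    """Breadth-first search from `source`.

    Returns (parent, edges_scanned): a map from each reached vertex to its
    BFS parent, and the number of adjacency-list entries examined.
    """
    parent = {source: source}  # the source is its own parent
    frontier = deque([source])
    edges_scanned = 0
    while frontier:
        vertex = frontier.popleft()
        for neighbor in adjacency.get(vertex, ()):
            edges_scanned += 1  # every scanned entry counts as one traversal
            if neighbor not in parent:
                parent[neighbor] = vertex
                frontier.append(neighbor)
    return parent, edges_scanned


# Hypothetical toy graph as adjacency lists; vertex 5 is disconnected.
toy_graph = {
    0: [1, 2],
    1: [0, 3],
    2: [0, 3],
    3: [1, 2, 4],
    4: [3],
    5: [],
}

start = time.perf_counter()
parent, edges_scanned = bfs_edges_traversed(toy_graph, 0)
elapsed = time.perf_counter() - start

# A rough traversal rate; on a graph this small the figure is meaningless
# and only shows how such a rate would be derived.
rate = edges_scanned / elapsed if elapsed > 0 else float("inf")
print(f"reached {len(parent)} vertices, scanned {edges_scanned} edges")
print(f"approximate rate: {rate:.0f} edges/second")
```

The neighbor lookups jump around memory in an order dictated by the data rather than the code, which is why traversal performance at scale tends to depend on the memory system more than on raw arithmetic throughput.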

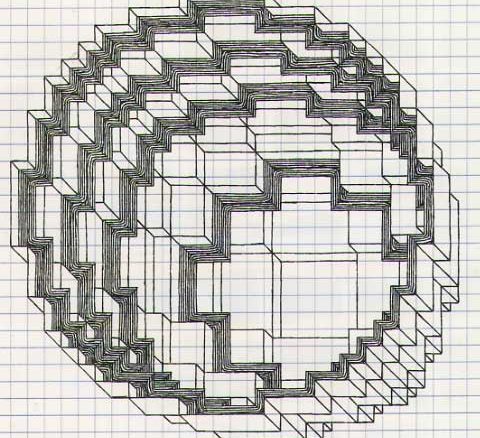

Since he has been at PNNL, Tumeo has led the architecture exploration work for several programs and their predecessors, where his teams explored GPUs, FPGAs, the Emu system, Data Vortex networking technology (the startup is closely tied to PNNL), and the original Cray XMT. “A big outcome of the high performance data analytics work has been the Graph Engine for Multithreaded Systems, an ensemble of runtime, libraries, and compiler to manage a graph database on commodity HPC systems, scaling dataset size and maintaining constant query throughput as compute nodes are added to the cluster.”

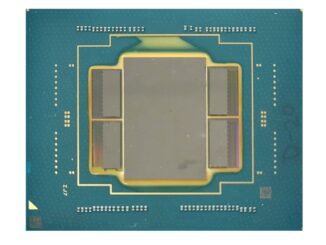

The custom processors are interesting, Tumeo says, but since HPC and deep learning systems are more likely to have GPUs or FPGAs, developing around commodity systems is critical. “GPUs are interesting to us even if they may not seem amenable to graphs. Unlike other areas, the memory bandwidth is actually a big benefit and an architecture like Volta has a much-improved memory subsystem so things like irregular data access go much faster now.” FPGAs are also of interest because of their flexibility in optimizing memory accesses and customizing the memory subsystem in a way that’s better than other architectures, even if they are harder to program, he adds.

Even if the buzz around graphs has died down in the last couple of years, the applications where they fit are high in value, if not in number. These include fraud detection, security, chemistry, power grid operations, transportation, and other optimization domains. Increasingly, they also include areas that overlap with high performance computing (Bayesian networks, for example) and deep learning, since both have problem sets that can (or must) be represented as graphs.

Tumeo sees no slowdown for the existing areas and says that with the right integration, graph frameworks and hardware will be more of a fit for hybrid HPC and deep learning workloads.

Further work on supporting irregular access patterns in emerging codes will drive greater adoption as well, he says, but these are all research challenges that will take time to plow through, particularly in terms of fitting graphs into existing workflows to accelerate certain parts of applications.

More here on PNNL’s graph and analytics efforts—and how these might fit into a world where machine learning appears to dominate interest.
