In emerging computing fields like quantum computing and neuromorphic computing, hardware usually grabs the lion’s share of attention. You can see the systems and the chips that drive them, talk about qubits and computing that simulates how the human brain works, sort through the speeds and feeds, talk about interconnects and power consumption and transistors, and imagine all this getting smaller and denser as the latest generations roll out.

But as Intel noted this week at its Intel Innovation 2022 show, while the hardware is important to bringing quantum and neuromorphic to life, what will drive adoption is the accompanying software. Systems are nice to look at, but they are decorations if organizations can’t use them.

That was the message behind some of the news Intel made related to quantum and neuromorphic computing at the conference in San Jose, California. On the quantum side, Intel unveiled the beta of its Quantum SDK (software development kit), a package that includes various applications and algorithms, a quantum runtime, a C++ quantum compiler, and the Intel quantum simulator.

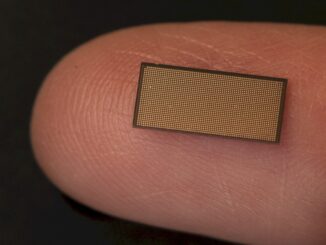

In the neuromorphic field, the company did unveil Kapoho Point, a system board holding Intel Loihi 2 research chips that can be used in such small form factors as drones, satellites, and smart cars. The boards – which can drive AI models that have up to 1 billion parameters and solve optimization problems that have up to 8 million variables – also can scale through stacking as many as eight (for now) to address larger problems.

According to Intel, Kapoho Point offers 10X the speed and 1,000X the power efficiency of the most modern CPU-based systems.

However, Intel also offered an incremental enhancement of Lava, its open-source software stack for neuromorphic computing first introduced a year ago with Loihi 2. Improvements to the modular framework for developing neuromorphic algorithms include greater support for Loihi 2 features, including programmable neurons, graded events, and continual learning.

Such offerings fall in line with the expanding software approach Intel is taking throughout the company under CEO Pat Gelsinger as he looks to modernize a company known for decades as a hardware and component maker but which now is finding its way in a changing IT world where hardware is dictated by the needs of the application.

As with many software programs at the company, it remains to be seen how Quantum SDK and Lava will evolve within the Intel business model. The company is saying that both of these open software packages will be used to help expand these relatively new markets. The question is whether Intel will find ways down the road to monetize the software to grow its bottom line, or whether the packages are more useful as a way to advance its own quantum and neuromorphic ambitions by growing the customer base for the offerings.

Speaking to journalists a few days before Innovation began, Anne Matsuura, director of quantum and molecular technologies at Intel, said that the company had been working on software to support its quantum efforts for a while, but hadn’t until more recently thought of pulling it together into an SDK that others could use.

“Even though we’re saying ‘software first’ and we are changing towards a software-first company, Intel’s a hardware company,” Matsuura said. “We were not initially planning on putting the software out separately. But as we started, we were inspired by other companies out there. We thought, ‘We really need to be looking at developing an ecosystem of users for Intel quantum technologies, too,’ and we began to realize that’s actually a good idea to put the software out first. That’s the only reason why we waited so long. It hadn’t occurred to us.”

That said, the software is a key component to the eventual rollout of an Intel-based quantum computer, she said. A goal is to “get people used to using our software, get people used to using Intel quantum technology. That way you’re basically learning how to program an Intel quantum computer by using the Quantum SDK. That’s sort of the impact. As far as performance, I don’t know that there is much impact on the actual performance of the qubits themselves, but our software SDK, our stack, has been created in tandem with our qubit chip, so we’re uniquely suited for operating the Intel qubits.”

The Quantum SDK is built on the LLVM intermediate representation from classical computing and is optimized for hybrid quantum-classical algorithms, also called variational algorithms, which are among the most popular today. According to Matsuura, the software also will work with the company’s quantum simulators and, eventually, Intel’s spin-qubit-based quantum chips, which resemble transistors.
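To make the idea of a variational (hybrid quantum-classical) algorithm concrete, here is a minimal sketch in plain Python. It does not use the Intel Quantum SDK or any of its APIs; the "quantum" step is a one-qubit simulation written by hand, and every function name is hypothetical. A classical optimizer repeatedly adjusts a rotation angle, and the simulated quantum step reports the resulting energy.

```python
import math


def expectation_z(theta: float) -> float:
    """Simulated 'quantum' step (illustrative only, not an Intel API).

    Prepares the state Ry(theta)|0> = [cos(theta/2), sin(theta/2)]
    and returns the expectation value of the Pauli-Z operator.
    """
    amp0 = math.cos(theta / 2.0)
    amp1 = math.sin(theta / 2.0)
    return amp0 * amp0 - amp1 * amp1


def parameter_shift_gradient(theta: float) -> float:
    """Gradient of the energy from two extra circuit evaluations.

    The parameter-shift rule evaluates the same circuit at
    theta + pi/2 and theta - pi/2 and takes half the difference.
    """
    shift = math.pi / 2.0
    return 0.5 * (expectation_z(theta + shift) - expectation_z(theta - shift))


def minimize_energy(theta: float = 0.5,
                    learning_rate: float = 0.4,
                    steps: int = 100) -> tuple[float, float]:
    """Classical step: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, expectation_z(theta)


if __name__ == "__main__":
    theta_opt, energy = minimize_energy()
    print(f"theta = {theta_opt:.6f}, energy = {energy:.6f}")
```

On real hardware the expectation value would be estimated from repeated measurements rather than computed exactly, which is why the optimizer stays on the classical side of the loop.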

Intel has created a 300 millimeter wafer line for its quantum dot spin-qubit chips and is focusing on everything from the hardware and software architectures to applications and workloads.

Right now, the SDK includes a compiler for a binary quantum instruction set and a quantum runtime to manage the execution of the program, Matsuura said. The compiler “enables user-defined quantum operations and decomposes them into operations that are available on the Intel quantum dot qubit chip,” she said. “We’ve enhanced the industry-standard LLVM [low-level virtual machine] intermediate representation with quantum extensions. We’ve done this intentionally using industry standards so that in future we can open source the front end of the compiler and allow people to use whatever front-end compiler they want and target our LLVM IR interface.”

Intel already has open sourced the quantum simulator in the SDK, which can run one- and two-qubit operations and can simulate 30 qubits on a laptop and more than 40 qubits on distributed compute nodes. Intel intends to roll out version 1.0 of the SDK in the first quarter of next year and, later, a full SDK that includes both hardware and software.
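A rough calculation shows why 30 qubits fits on a laptop while 40 qubits calls for distributed nodes. The sketch below assumes a full state-vector simulation that stores one double-precision complex amplitude (16 bytes) for each of the 2^n basis states; the article does not say how Intel's simulator stores its state, so treat the storage model and the helper names as assumptions.

```python
# Assumption: one complex128 amplitude (two 64-bit floats) per basis state.
BYTES_PER_AMPLITUDE = 16


def state_vector_bytes(num_qubits: int) -> int:
    """Memory needed to hold all 2**n amplitudes of an n-qubit state."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


def to_gib(num_bytes: int) -> float:
    """Convert a byte count to gibibytes (2**30 bytes)."""
    return num_bytes / 2 ** 30


if __name__ == "__main__":
    for qubits in (30, 40):
        gib = to_gib(state_vector_bytes(qubits))
        print(f"{qubits} qubits: {gib:,.0f} GiB")
```

Under these assumptions, each additional qubit doubles the memory required, so going from 30 to 40 qubits multiplies it by 1,024.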

In version 1.0, Intel is working on enabling developers to import non-Intel tools, like Qiskit and Cirq, into the SDK. At the same time, Intel also is helping to fund higher education institutions like Penn State in the United States and Deggendorf Institute of Technology in Germany.

As with the Quantum SDK, Intel initially had no intention of creating a software framework out of the software developed and used in-house for neuromorphic computing, according to Mike Davies, senior principal engineer and director of the chip maker’s Neuromorphic Computing Lab. However, it became clear that the lack of a general-purpose software stack was hobbling the industry’s efforts in the field.

“Until Lava, it’s been very difficult for groups to build on other groups’ results even within our own community because software tends to be very siloed, very laborious to construct these compelling examples,” Davies told journalists. “But as long as those examples are developed in a way that cannot be readily transferred between groups and you can’t design those at a high level of abstraction, it becomes very difficult to move this into the commercial realm where we need to reach a broad community of mainstream developers that haven’t spent years doing PhDs in computational neuroscience and neuromorphic engineering.”

Lava is an open-source framework with permissive licensing, so the expectation is that other neuromorphic chip manufacturers – which include the likes of IBM, Qualcomm, and BrainChip – will port Lava to their own frameworks. It’s not proprietary, though Intel is the major contributor to it, Davies said.

The latest iteration of Lava is version 0.5, though the company has been steadily rolling out releases of Lava to GitHub, he said. Together with circuit improvements in Loihi 2, it also illustrates the advances made in running deep feed forward neural networks, a basic type of network used in some supervised learning applications. These networks need fewer chip resources, and inference is up to 12X faster than on Loihi 1 and 50X more energy efficient.

These aren’t the types of workloads that Loihi was designed to support – GPUs and other accelerators can run deep feed forward networks well. And Intel is aware of this. “It is very important that we support feedforward neural networks of the kind that everyone is using and loves today because they’re just an important building block for future neuromorphic applications,” Davies said.
